7 Shocking Truths About Knowledge Distillation: The Good, The Bad, and The Breakthrough (SAKD)

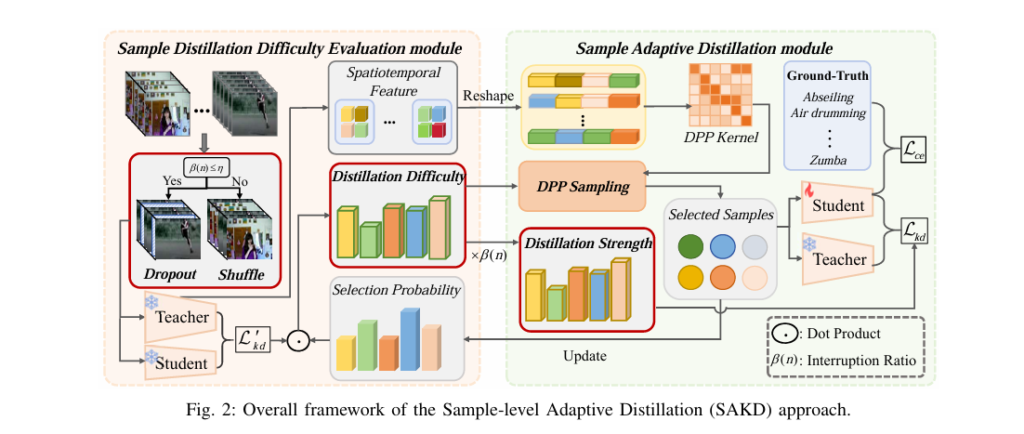

In the fast-evolving world of AI and deep learning, knowledge distillation (KD) has emerged as a powerful technique for compressing large neural networks: a compact "student" model is trained to reproduce the behavior of a much larger "teacher", which makes it practical to deploy on smartphones, drones, and edge devices. But despite its promise, traditional KD methods suffer from critical flaws that can quietly degrade the student's performance. Now, a groundbreaking new framework, Sample-level […]