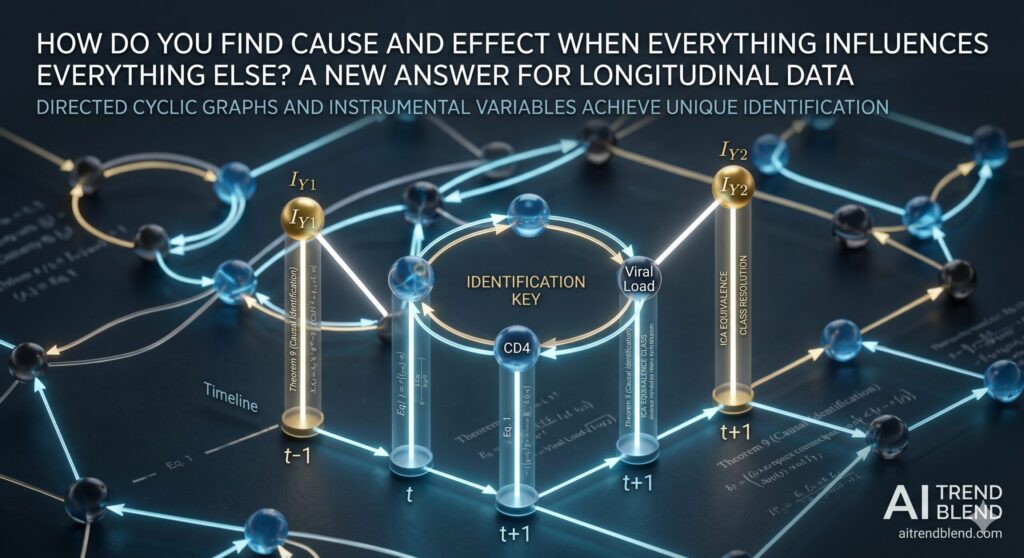

Directed Cyclic Graphs for Causal Discovery from Longitudinal Data

Causal Discovery · Journal of Machine Learning Research 26 (2025) 1–62 · 20 min read

How Do You Find Cause and Effect When Everything Influences Everything Else? A New Answer for Longitudinal Data

A team from Johns Hopkins […]
