5 Revolutionary Breakthroughs in AI Safety: How CONFIDERAI Eliminates Prediction Failures While Boosting Trust (But Watch Out for Hidden Risks)

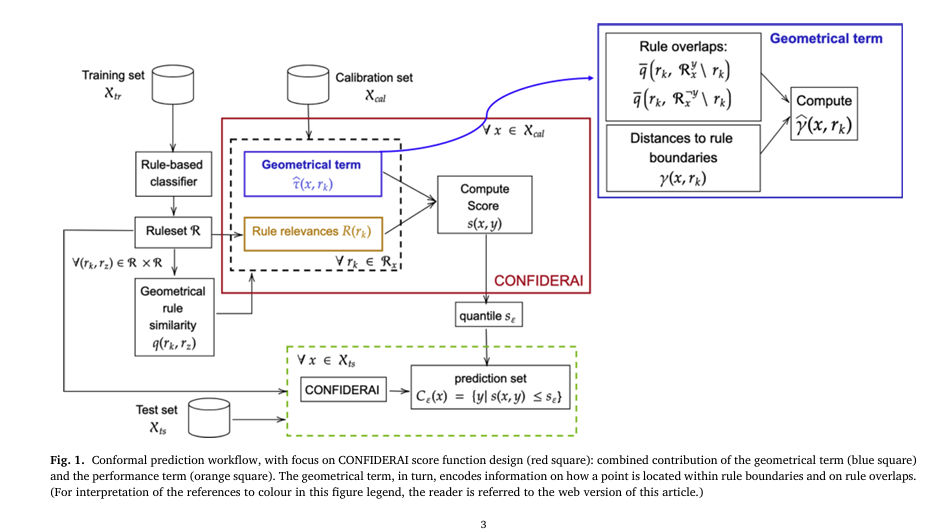

In the rapidly evolving field of artificial intelligence, one question looms larger than ever: can we truly trust AI systems when lives are on the line? AI is now used for tasks ranging from detecting DNS tunneling attacks to predicting cardiovascular disease, and in these settings a wrong prediction carries real costs. While explainable AI (XAI) has made strides in transparency, a critical gap remains: […]