5 Breakthroughs in Dual-Forward DFPT-KD: Crush the Capacity Gap & Boost Tiny AI Models

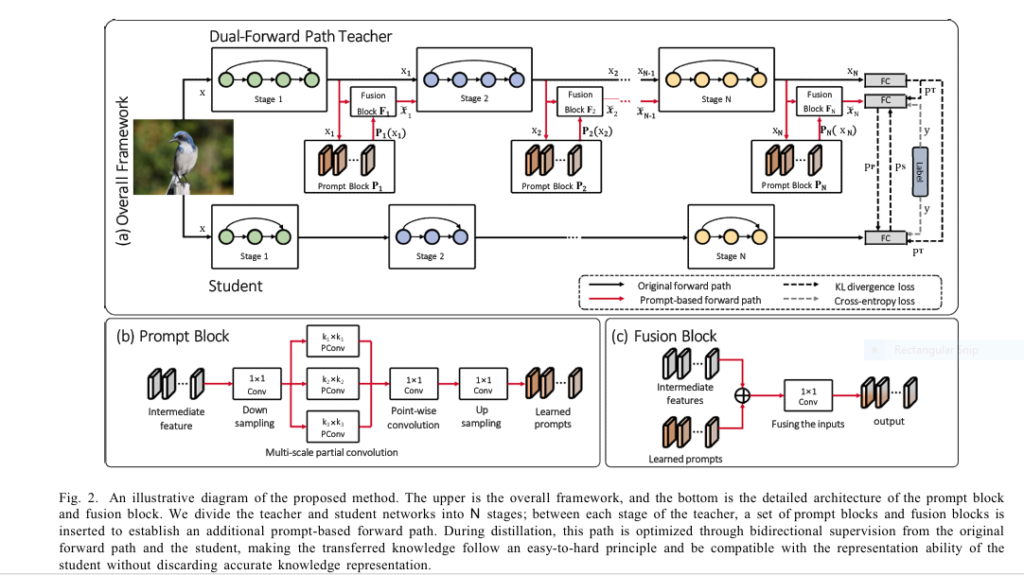

Imagine training a brilliant professor (a large AI model) to teach complex physics to a middle school student (a tiny, efficient model). The professor’s expertise is vast, but their explanations are too advanced, leaving the student confused and unable to grasp the fundamentals. This is the “capacity gap problem” – the Achilles’ heel of traditional Knowledge Distillation […]
