Unlock 57.2% Reasoning Accuracy: KDRL Revolutionary Fusion Crushes LLM Training Limits

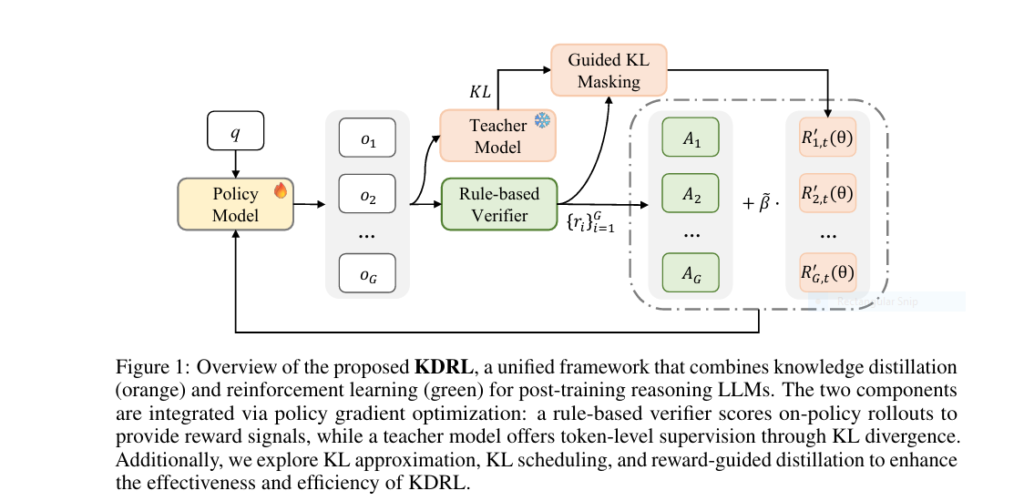

The Hidden Flaw Crippling Your LLM’s Reasoning Power

Large language models (LLMs) promise revolutionary reasoning capabilities, yet most hit an invisible wall. Traditional training forces a brutal trade-off:

Enter KDRL, a Huawei/HIT-developed framework merging knowledge distillation (KD) and reinforcement learning (RL) into a single unified pipeline. Results from 6 reasoning benchmarks reveal:

How KDRL Shatters the KD-RL Deadlock

The proposed model's breakthrough lies […]
