Preference Score Distillation: Leveraging 2D Rewards to Align Text-to-3D Generation with Human Preference

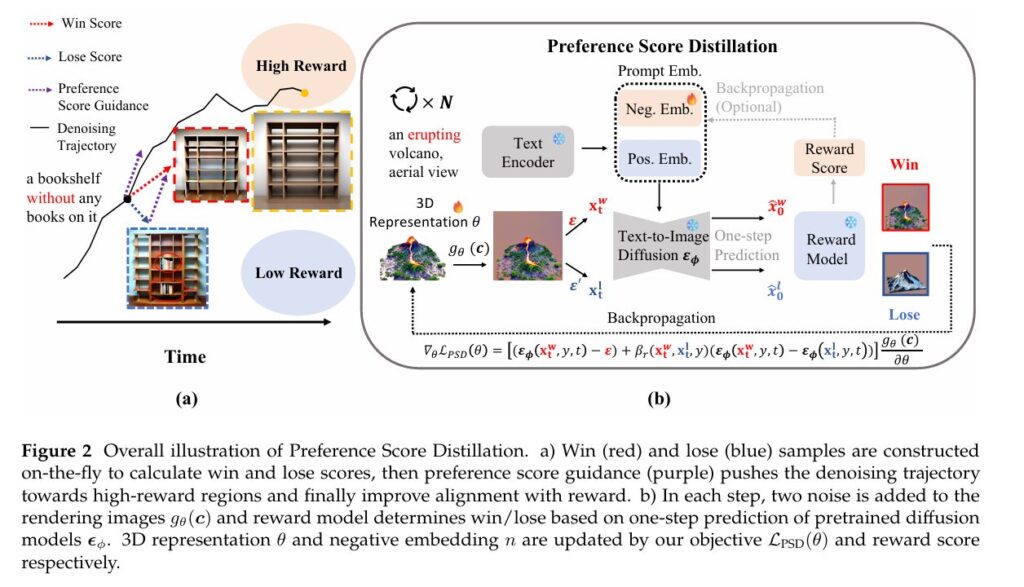

MedAI Research

```python
[…] nn.Module:
    # Actual implementation would load from HuggingFace or checkpoint
    return nn.Identity()  # Placeholder

@torch.no_grad()
def forward(self, images: torch.Tensor, prompts: List[str]) -> torch.Tensor:
    """
    Compute reward scores for images.

    Args:
        images: [B, 3, H, W] RGB images in [-1, […]
```
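The excerpt above describes a reward model whose `forward` takes a batch of images and their prompts and returns reward scores without tracking gradients. As a rough illustration of that interface only, here is a minimal sketch. The class name `ToyRewardModel`, the `[-1, 1]` input range (the excerpt is truncated before the range is stated), the `[B]` output shape, and the mean-intensity score are all assumptions made for this example; it is not the article's reward model, and it ignores the prompt text entirely.

```python
from typing import List

import torch
from torch import nn


class ToyRewardModel(nn.Module):
    """Hypothetical stand-in for a 2D preference reward model."""

    def __init__(self) -> None:
        super().__init__()
        # Placeholder backbone; a real reward model would be loaded from a checkpoint.
        self.backbone = nn.Identity()

    @torch.no_grad()
    def forward(self, images: torch.Tensor, prompts: List[str]) -> torch.Tensor:
        # Validate the [B, 3, H, W] layout named in the excerpt.
        if images.ndim != 4 or images.shape[1] != 3:
            raise ValueError("expected images of shape [B, 3, H, W]")
        if images.shape[0] != len(prompts):
            raise ValueError("need exactly one prompt per image")
        # Assumption: inputs lie in [-1, 1]; map them to [0, 1].
        pixels = (self.backbone(images).clamp(-1.0, 1.0) + 1.0) / 2.0
        # Toy score: mean pixel intensity per image (the prompt is not used).
        return pixels.mean(dim=(1, 2, 3))


# Usage: two mid-gray images, one prompt each, yield one score per image.
scores = ToyRewardModel()(torch.zeros(2, 3, 8, 8), ["a chair", "a lamp"])
print(scores.shape)
```

Because `forward` is wrapped in `torch.no_grad()`, the returned scores carry no gradient. That is fine for scoring renders, but a method that backpropagates through the reward would need a differentiable path instead.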