5 Shocking Mistakes in Knowledge Distillation (And the Brilliant Framework KD2M That Fixes Them)

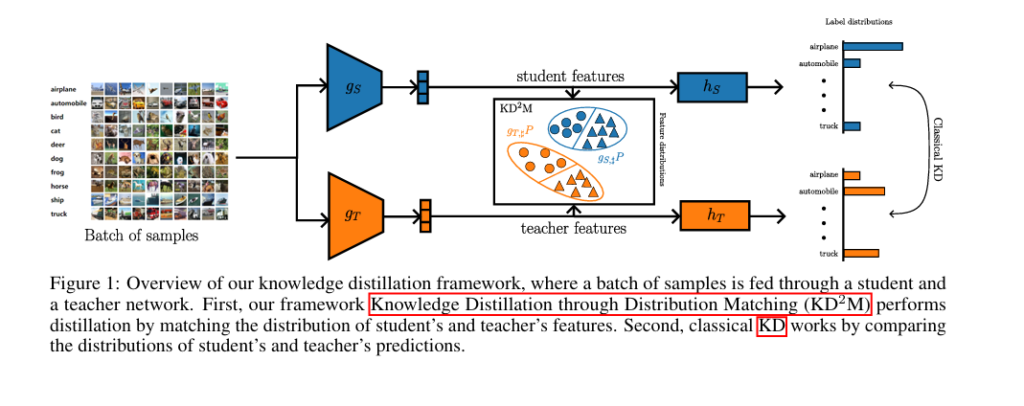

In the fast-evolving world of deep learning, Knowledge Distillation (KD) is one of the most promising techniques for deploying AI on edge devices: a compact student model is trained to mimic a larger teacher model. But despite its popularity, many implementations suffer from critical flaws that undermine performance. A new paper titled “KD2M: A Unifying Framework for Feature Knowledge Distillation” reveals 5 shocking mistakes commonly made […]