Anchor-Based Knowledge Distillation: A Trustworthy AI Approach for Efficient Model Compression

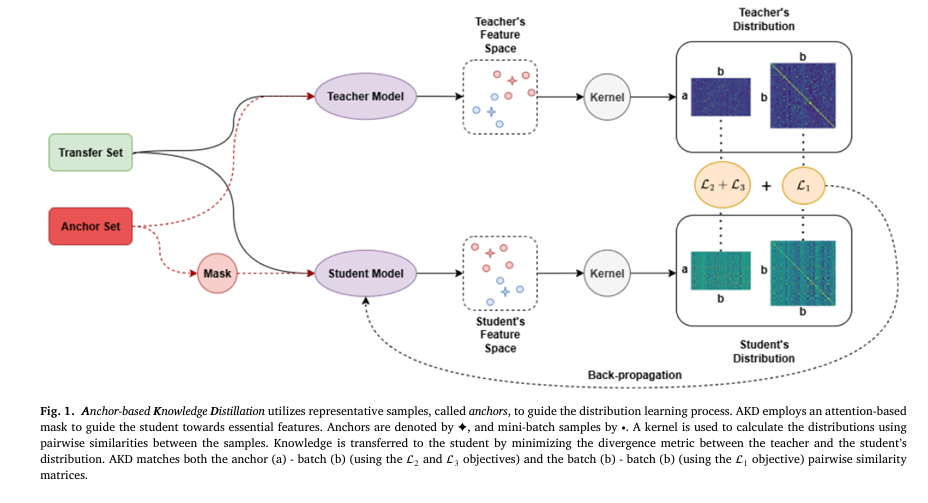

Knowledge distillation (KD) has become a core technique in artificial intelligence (AI) for compressing powerful, resource-intensive neural networks into smaller, more efficient models suitable for deployment on mobile and edge devices. However, traditional KD methods often fail to capture the full richness of a teacher model’s knowledge, especially […]