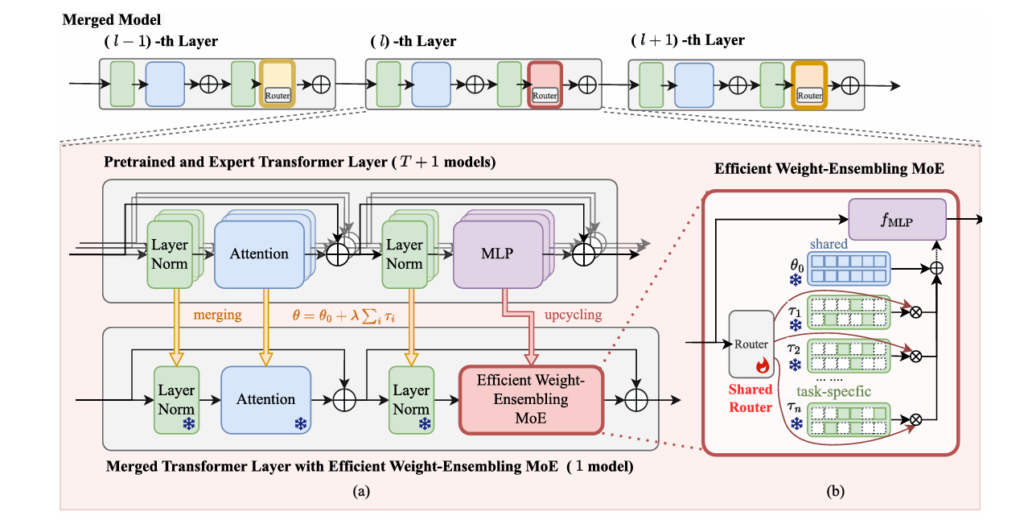

WEMoE: How a Mixture-of-Experts Approach Is Solving the Multi-Task Model Merging Problem

Deep Learning · TPAMI, 2026 · 18 min read

The Static Model Merging Problem and How WEMoE Learned to Adapt

WEMoE introduces a dynamic mixture-of-experts approach to multi-task model merging, transforming how we combine […]
