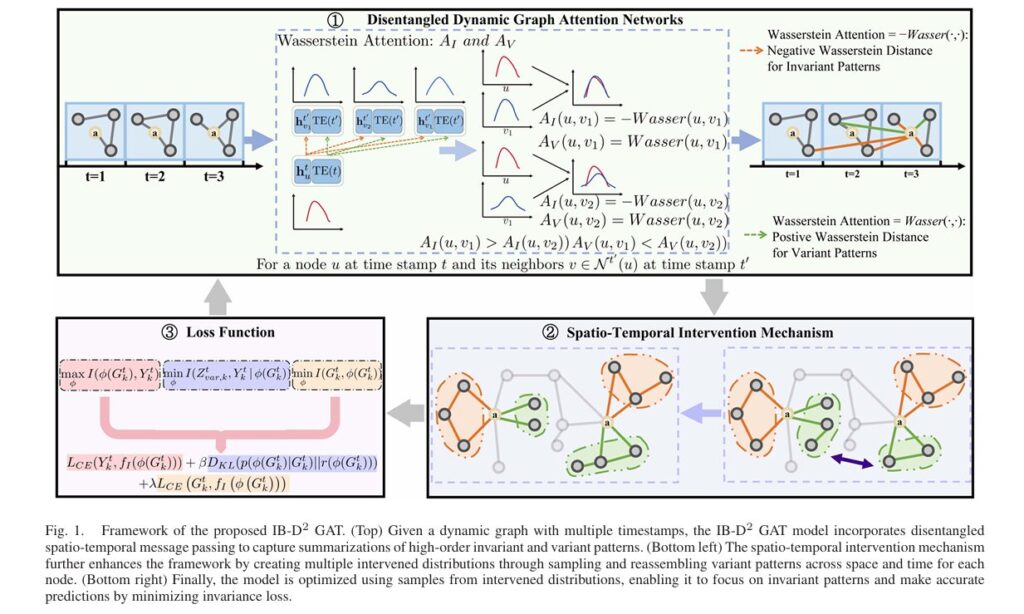

IB-D2GAT: How Information Bottleneck Theory Revolutionizes Dynamic Graph Learning Under Distribution Shifts

Introduction: The Critical Challenge of Evolving Graph Data

In an era where financial transactions occur in milliseconds, social networks reshape human interaction by the minute, and traffic patterns shift with unpredictable urban dynamics, dynamic graph neural networks (DyGNNs) have emerged as essential tools for modeling real-world systems. Unlike static graphs that capture frozen snapshots of […]