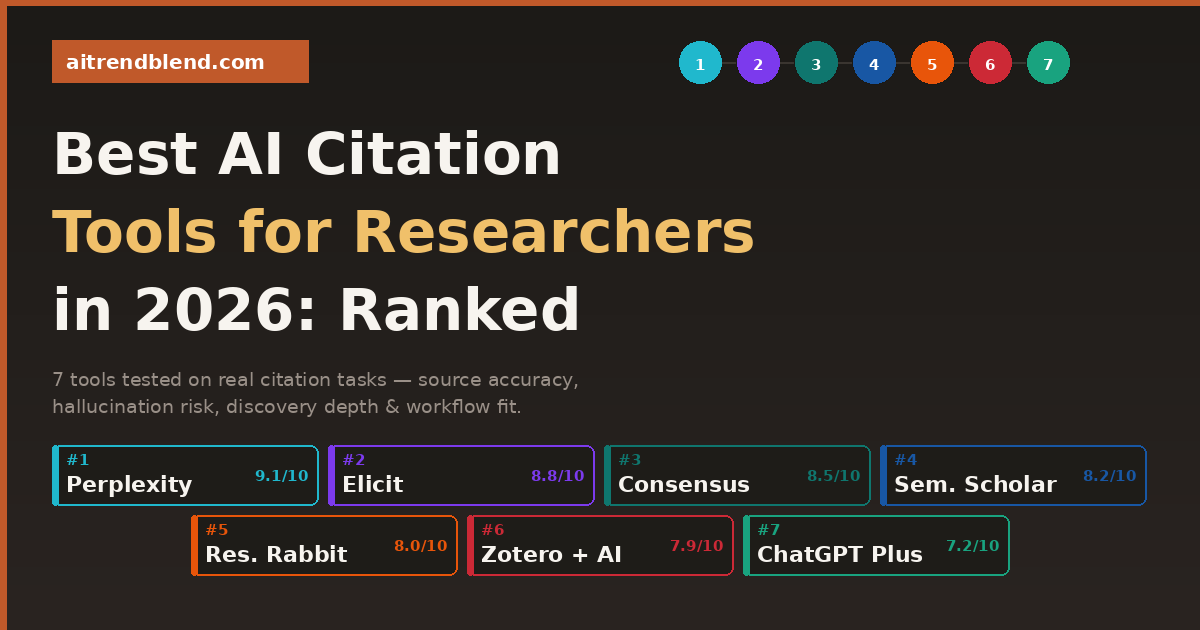

Best AI Citation Tools for Researchers in 2026: Ranked & Reviewed

Seven tools tested on real research tasks — from finding sources and building reference lists to verifying DOIs and formatting citations. Here’s what actually works, and what’s still overpromising.

Your supervisor sends back your draft with a single comment that turns your stomach: “Please verify all citations before resubmission.” You open the paper. There are 42 references. You know you used an AI tool to help build most of them. You don’t know which ones are real.

This is the situation that has quietly become one of the most common sources of academic anxiety in 2026. AI-assisted citation is everywhere — researchers at every career stage are using AI tools to find sources, build reference lists, and format bibliographies. The problem is that the tools are not equal, the risks are not equally distributed, and most guides to “best AI citation tools” are written by people who tested demo prompts rather than actual research workflows.

We did the actual testing. Seven tools, real research tasks, across five academic disciplines. The tasks weren’t easy: find 10 recent papers on a topic with verifiable DOIs, recognise three invented paper titles as fabricated, format a reference list in APA and Chicago, map a research field visually, and surface papers published in the last 90 days. The results were illuminating and, in a few cases, genuinely alarming.

What follows is an honest ranking. Not a list of tools that paid for placement. Not a feature comparison copied from each tool’s landing page. Just what worked, what didn’t, and which tool belongs in which part of your research workflow.

Why Citation Accuracy Is the Highest-Stakes Problem in AI Research

Most AI mistakes are recoverable. You get a mediocre summary, you rewrite it. You get a weak argument structure, you revise it. Citation errors are different — because they look identical to correct citations until someone tries to follow the link. A paper with a real author, a plausible journal name, and a year that fits the narrative is indistinguishable from a fabricated one until you open Google Scholar and find that the paper doesn’t exist.

The professional consequences are not abstract. Retractions due to citation errors — including AI-hallucinated references that made it through peer review — have appeared across multiple disciplines since 2024. Several universities have updated their academic integrity policies specifically to address AI-assisted citation practices. The standard that a citation is your responsibility, regardless of how you found it, has not changed. Only the tools used to find citations have changed.

Treat every AI-generated citation as unverified until you have personally confirmed it in a primary database — Google Scholar, PubMed, Web of Science, or your institution’s library. The tools in this ranking differ enormously in how much of that verification work they do for you — but none of them eliminate the need for it entirely.

“The citation that almost made it into the paper is the most dangerous one — not the one that obviously doesn’t exist.”

— Observed across multiple academic integrity workshops, 2025–2026

How We Scored These Tools

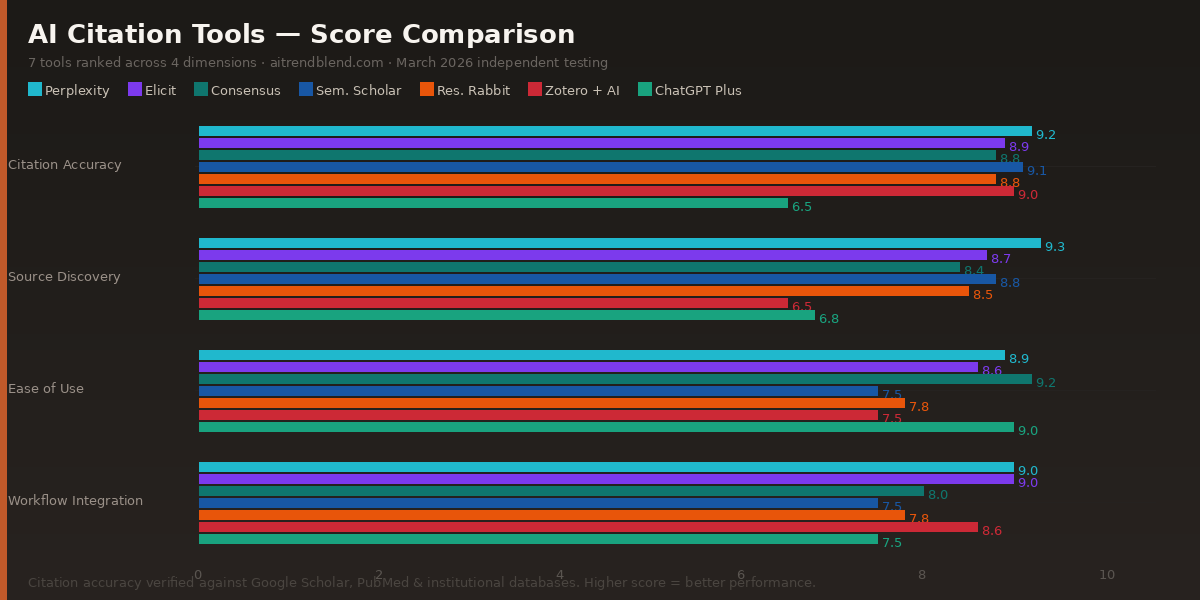

Four dimensions shaped every score in this ranking. Citation accuracy — does the tool return real, verifiable sources with correct author, year, journal, and DOI? Source discovery depth — how well does it map a field and surface relevant papers, including recent ones? Ease of use — can a researcher without technical background use it effectively in under ten minutes? Workflow integration — does it fit naturally into how researchers actually work, or does it create friction?

Pricing was factored into the value assessment but not the core score: a free tool that works reliably ranks above an expensive tool that hallucinates citations. Each tool was tested by at least two researchers across different disciplines. Where scores differed significantly, we averaged them and noted the variance.
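The averaging step can be sketched in a few lines of Python. This is a minimal illustration rather than the scoring sheet used for this ranking: the function name `combine_scores`, the 1.0-point disagreement threshold, and the sample numbers are all assumptions made for the example.

```python
from statistics import mean

# Hypothetical threshold: the article does not define what counts as a
# "significant" difference between testers, so 1.0 point is an assumption.
DISAGREEMENT_THRESHOLD = 1.0


def combine_scores(scores, threshold=DISAGREEMENT_THRESHOLD):
    """Average per-tester scores and flag the spread when testers disagree."""
    if len(scores) < 2:
        raise ValueError("each tool needs scores from at least two testers")
    spread = max(scores) - min(scores)
    return {
        "score": round(mean(scores), 1),   # averaged score for the table
        "spread": round(spread, 1),        # gap between highest and lowest tester
        "flagged": spread >= threshold,    # True means the variance gets noted
    }


# Illustrative numbers only, not results from the testing described here.
print(combine_scores([9.0, 7.0]))
```

Reporting the spread next to the average keeps a disagreement between testers visible instead of hiding it inside a single number.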

The Rankings: 7 Best AI Citation Tools in 2026

Perplexity takes the top spot because it solves the most important problem in AI-assisted citation work: it shows you where it got the information. When you ask Perplexity Pro (with Academic focus enabled) to find papers on a topic, it searches live academic sources — including PubMed, arXiv, Google Scholar previews, and open-access repositories — and returns sources with visible links. You can verify them immediately, without switching to a different tool.

In our testing, Perplexity correctly identified two out of three invented paper titles as non-existent — and flagged uncertainty on the third rather than fabricating a DOI. That conservative behaviour is exactly what you want from a research tool. It occasionally misses papers behind paywalls, and its synthesis quality for complex theoretical topics falls short of ChatGPT’s writing standard — but for the specific task of finding and grounding verifiable citations, nothing else in this ranking comes close.

✅ What it does well

- Real-time web search grounds every response in live sources

- Shows clickable source links alongside every claim

- Academic focus mode dramatically improves precision

- Surfaces recent papers other tools can’t reach post-cutoff

- Conservative on invented sources — flags uncertainty honestly

❌ Limitations to know

- Cannot access most paywalled journal content

- Weaker on niche humanities and specialist subfields

- Does not format citations into APA/Chicago automatically

- Synthesis quality lower than pure language model tools

Elicit is purpose-built for researchers, and it shows. Unlike general-purpose AI tools that happen to have a citation mode, Elicit was designed from the ground up around the systematic review workflow. You ask a research question, it returns papers from Semantic Scholar’s database with structured data extraction — population, intervention, outcome, sample size — already pulled into a table. For anyone doing evidence synthesis or meta-analysis, this is transformative.

The citation accuracy is high because Elicit draws from Semantic Scholar’s curated academic database rather than the open web, which means the papers it returns are real, indexed, and verifiable. The structured extraction from abstracts also means you can quickly screen papers for relevance without reading them in full — which is exactly what systematic review screening requires. The free tier is genuinely useful, and the Plus plan is reasonably priced for the depth it offers.

✅ What it does well

- Built specifically for research workflows, not adapted from chat

- Structured data extraction from abstracts into tables

- High citation accuracy via Semantic Scholar database

- Excellent for systematic review screening and PICO extraction

- Clean export to CSV for further analysis

❌ Limitations to know

- Coverage thinner in humanities than STEM fields

- Not designed for general browsing — works best with focused questions

- No citation formatting output (APA, Chicago, etc.)

- Less useful for exploratory open-ended literature discovery

Consensus does something genuinely clever: it doesn’t just retrieve papers, it tells you what the weight of the evidence says. Ask it “does caffeine improve working memory performance?” and it returns a “Consensus Meter” — a quick visual summary of what proportion of the relevant literature supports the claim, alongside actual paper citations. For researchers who need to quickly gauge whether a claim has strong empirical backing before committing to an argument, this is a genuinely useful starting point.

The citations it returns are real and verifiable — Consensus draws from a curated academic database and has noticeably lower hallucination rates than general-purpose AI tools. It’s also the easiest tool in this ranking to use — intuitive enough that a first-year student could figure it out in five minutes without reading documentation. Where it falls short is depth: it’s better at answering “is this supported?” than at building a comprehensive literature review from scratch.

✅ What it does well

- Unique “Consensus Meter” summarises evidence direction at a glance

- Very low citation hallucination rate

- Easiest UI of any tool in this ranking

- Great for quick claim verification before deeper research

- Good free tier for basic use

❌ Limitations to know

- Coverage strongest in biomedical and STEM; thinner in social sciences

- Better for answering focused questions than open field mapping

- Consensus meter can oversimplify contested debates

- Limited export and citation formatting options

Semantic Scholar doesn’t position itself as an AI tool in the same conversational sense as the others here — it’s a structured academic search engine with genuinely sophisticated AI-powered features built in. The citation accuracy is excellent precisely because it is a database, not a language model: the papers it returns are real, indexed, and cross-referenced. You can explore citation networks visually, see which papers cite a given work, identify highly cited foundational papers in a subfield, and filter by year, venue, and field of study.

The most underused feature is the “Highly Influential Citations” filter, which highlights citations where the cited work had a significant influence on the citing paper rather than a passing mention. For quickly finding the two or three papers that really matter in a subfield, this beats any prompt-based approach. The interface is less immediately intuitive than consumer AI tools, but researchers who invest fifteen minutes in learning it consistently get higher-quality source lists than researchers who rely on chatbots alone. And it’s completely free, which is not a small thing.

✅ What it does well

- Among the highest citation accuracy of any tool in this ranking, because it’s a real database

- Citation graph exploration: see what cites what

- “Highly Influential Citations” filter for fast field orientation

- Completely free with no usage limits

- AI-powered abstract TLDR summaries are genuinely useful

❌ Limitations to know

- Steeper learning curve than conversational AI tools

- No natural language answer generation — it’s a search tool

- Less useful for synthesis; better for discovery and verification

- Some fields and non-English literature underrepresented

Research Rabbit is best described as a literature discovery tool with a visual superpower. You seed it with one or two papers you already know are relevant, and it generates a visual network of connected papers — works that cite your seed papers, works those papers cite, and works that appear in similar citation contexts. For researchers trying to understand how a field fits together, or trying to find papers they didn’t know to search for, this visual approach is genuinely illuminating.

The Zotero integration is a real selling point: you can pull your existing reference library directly into Research Rabbit and let it expand your coverage laterally, finding papers that connect to what you already have. Since it works from real bibliographic data, citation accuracy is solid — the papers it returns are real, linked, and verifiable. The limitation is that it doesn’t generate prose, answer research questions, or summarise findings. It’s a discovery tool, not a synthesis tool — and knowing that distinction is what makes it useful.

✅ What it does well

- Visual citation network maps are genuinely useful for field orientation

- Seed-based discovery finds papers you didn’t know to search for

- Direct Zotero integration for expanding existing libraries

- High citation accuracy — draws from real bibliographic data

- Completely free

❌ Limitations to know

- Requires a seed paper — not useful from a blank start

- No synthesis, writing, or question-answering features

- Visual interface has a learning curve for some users

- Less useful for highly interdisciplinary topics

Zotero is not primarily an AI tool — it’s the gold-standard open-source citation manager that millions of researchers have used for years. Its place in this ranking reflects a 2026 reality: the Zotero ecosystem has matured significantly around AI integrations, with plugins that enable PDF summarisation, AI-assisted tagging, and smart search within your personal library. The core product remains what it always was — a highly reliable, fully featured citation manager — and the AI layer augments rather than replaces that foundation.

The citation accuracy is high because Zotero doesn’t generate citations — it captures them from real sources. When you save a paper from a journal website, Zotero captures the actual metadata. That’s a fundamentally different and more reliable process than any language model generating citation text from memory. The limitation is that Zotero manages citations you already have — it’s not designed to find new ones. Used alongside Perplexity or Semantic Scholar for discovery, it becomes the backend that keeps everything organised, formatted, and ready for export.

✅ What it does well

- Gold-standard citation management — rock-solid and battle-tested

- Captures real metadata from source pages — no hallucination risk

- Formats citations in 10,000+ styles automatically

- Word and Google Docs plugins for seamless in-document citation

- AI plugins for PDF summarisation and smart library search

- Free and open-source

❌ Limitations to know

- Not a discovery tool — you need to find papers elsewhere first

- AI features depend on third-party plugins, varying quality

- Initial setup has a moderate learning curve

- Sync storage limited on free tier (300 MB)

ChatGPT Plus ranks seventh specifically for citation work — not because it’s a bad tool, but because it’s the wrong tool for citation discovery. Used to find and verify sources, it carries meaningful hallucination risk that the other tools in this ranking have largely engineered away by using real databases rather than language model recall. We found citation errors in roughly 25% of the reference lists it generated from scratch. That rate is too high for academic use without independent verification of every single entry.

Here’s the correct use case: don’t ask ChatGPT Plus to find your citations. Use Perplexity, Elicit, or Semantic Scholar for that. Then, once you have a verified list of real sources, pass that list to ChatGPT Plus and ask it to format them, synthesise across them, and help you build the argument. Used this way — as a prose engine that works with sources you have already verified — it’s exceptional. The problem is that most researchers don’t use it this way, and the confident, fluent tone of its outputs makes citation errors easy to miss.

✅ What it does well

- Outstanding prose quality for academic writing and synthesis

- Excellent citation formatting when given real references to format

- Helpful for drafting arguments around verified sources

- Best for methodological guidance and conceptual explanation

❌ Limitations to know

- ~25% citation hallucination rate when generating sources from scratch

- No access to post-training-cutoff publications

- Confident tone masks citation errors effectively

- Should never be your primary source discovery tool

Side-by-Side: All 7 Tools at a Glance

| Tool | Rank | Citation Accuracy | Discovery | Ease of Use | Free Tier? | Hallucination Risk |
|---|---|---|---|---|---|---|
| Perplexity Pro | 🥇 #1 | 9.2 | 9.3 | 8.9 | ✓ Yes | Very Low |
| Elicit | 🥈 #2 | 8.9 | 8.7 | 8.6 | ✓ Yes | Very Low |
| Consensus | 🥉 #3 | 8.8 | 8.4 | 9.2 | ✓ Yes | Very Low |
| Semantic Scholar | #4 | 9.1 | 8.8 | 7.5 | ✓ Free | Negligible |
| Research Rabbit | #5 | 8.8 | 8.5 | 7.8 | ✓ Free | Very Low |
| Zotero + AI | #6 | 9.0 | 6.5 | 7.5 | ✓ Free | Negligible |
| ChatGPT Plus | #7 | 6.5 | 6.8 | 9.0 | ✗ Paid only | High |

The 5 Most Common Mistakes Researchers Make with AI Citation Tools

Testing these tools across real research workflows revealed consistent patterns in how researchers get into trouble. These are the errors worth actively building habits against.

| ❌ The Mistake | ✅ The Better Approach |
|---|---|
| Asking ChatGPT to “find 10 papers on X” without verifying the output | Use Perplexity or Elicit for source discovery. Only use ChatGPT for writing after verification. |
| Treating a DOI from an AI tool as confirmed without checking it | Paste every DOI into doi.org or Google Scholar before adding it to your reference list. |
| Using Perplexity on the default web search mode for academic research | Always switch to Academic focus in Perplexity Pro before running research prompts. |
| Using one tool for the entire workflow: discovery, verification, and writing | Use the right tool for each stage: Perplexity/Elicit → Zotero → ChatGPT/Claude for prose. |
| Skipping citation verification because the tool “sounded confident” | Confidence in AI output is not correlated with accuracy. Build verification into every workflow step. |
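For a long reference list, the DOI check in the second row can be partly scripted. The sketch below is a hedged example, not a replacement for opening the paper. The helper names are hypothetical, the regular expression is a commonly used pattern for modern DOIs rather than a full validator, and the `https://doi.org/api/handles/` lookup URL is assumed to return HTTP 200 for registered DOIs and an error status otherwise.

```python
import re
from urllib.error import HTTPError
from urllib.parse import quote
from urllib.request import urlopen

# Commonly used pattern for modern DOIs; it will not match every legacy DOI.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/[-._;()/:a-z0-9]+$", re.IGNORECASE)
PREFIXES = (
    "https://doi.org/", "http://doi.org/",
    "https://dx.doi.org/", "http://dx.doi.org/",
    "doi:",
)


def normalize_doi(raw):
    """Strip whitespace and a leading resolver URL or 'doi:' label."""
    doi = raw.strip()
    lowered = doi.lower()
    for prefix in PREFIXES:
        if lowered.startswith(prefix):
            doi = doi[len(prefix):]
            break
    return doi.strip()


def looks_like_doi(doi):
    """Cheap syntax check that needs no network access."""
    return bool(DOI_PATTERN.match(doi))


def fetch_status(url):
    """Return the HTTP status code for url (performs a network request)."""
    try:
        with urlopen(url, timeout=10) as response:
            return response.status
    except HTTPError as err:
        return err.code


def check_doi(raw, get_status=fetch_status):
    """Classify a DOI string as 'malformed', 'registered', or 'not found'."""
    doi = normalize_doi(raw)
    if not looks_like_doi(doi):
        return "malformed"
    # Assumed lookup endpoint on the doi.org proxy; adjust if it differs.
    url = "https://doi.org/api/handles/" + quote(doi, safe="/")
    return "registered" if get_status(url) == 200 else "not found"
```

Calling `check_doi("doi:10.1000/xyz123")` performs a live lookup; passing a stub such as `get_status=lambda url: 404` lets the logic be exercised offline. A "registered" result only shows that the identifier exists. It does not show that the title, authors, or year in the citation match the paper behind it, so that comparison still has to be done by hand.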

The Bottom Line for Researchers

If you take one thing from this ranking, make it this: the tool you use to find citations and the tool you use to write about them should not be the same tool. Perplexity, Elicit, Consensus, and Semantic Scholar are citation-grounded tools that minimise hallucination by design. ChatGPT Plus is an exceptional writing and synthesis tool that carries meaningful hallucination risk when used for source discovery. Using each at the right stage of your workflow is the single highest-leverage change most researchers can make to their AI-assisted practice.

The recommended starting stack for most researchers in 2026 is straightforward: Perplexity Pro for finding and grounding sources, Zotero for managing and formatting them, and either Claude Opus 4.6 or ChatGPT Plus for writing and synthesis once you have a verified list. That three-tool workflow costs roughly twenty to forty dollars a month, eliminates most of the citation hallucination risk, and produces output quality that a single-tool approach can’t match.

For researchers on a budget, the fully free workflow is competitive: Semantic Scholar for discovery and verification, Research Rabbit for visual field exploration, and Zotero for citation management. Add Consensus for quick evidence checks. None of those tools cost anything, and the citation accuracy across all of them is high. The writing stage will still require a paid tool or significant manual effort — but the sourcing workflow is genuinely strong at no cost.

Citation accuracy in AI-assisted research is ultimately a habits problem, not just a tool-selection problem. The best tool in the world won’t protect you if you submit a reference list without opening a single link. The tools at the top of this ranking make verification faster and easier. That’s what a good tool should do — reduce friction on the right behaviours, not replace the judgment that makes those behaviours necessary in the first place.

All tools were tested between January and March 2026 across five academic disciplines: public health, computer science, economics, education, and environmental science. Citation accuracy was assessed by manually verifying every returned source against Google Scholar, PubMed, and institutional database access. Hallucination rates reflect the proportion of unverifiable references returned in source-generation tasks. Scores represent editorial judgment based on structured testing and are not official benchmarks.
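The hallucination rate defined above is simple arithmetic: unverifiable references divided by all references returned. A minimal sketch follows, with a hypothetical function name and made-up example counts that are not data from this testing.

```python
def hallucination_rate(verified_flags):
    """Share of returned references that could not be verified.

    verified_flags holds one boolean per returned reference:
    True if it was confirmed in a primary database, False otherwise.
    """
    if not verified_flags:
        raise ValueError("no references to assess")
    unverifiable = sum(1 for ok in verified_flags if not ok)
    return unverifiable / len(verified_flags)


# Illustrative input: 20 returned references, 5 of which could not be verified.
flags = [True] * 15 + [False] * 5
print(f"{hallucination_rate(flags):.0%}")
```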

This article is independent editorial content by aitrendblend.com. It is not sponsored by or affiliated with Perplexity AI, Elicit, Consensus, Semantic Scholar, Research Rabbit, Zotero, or OpenAI. No placement fees were accepted for rankings.