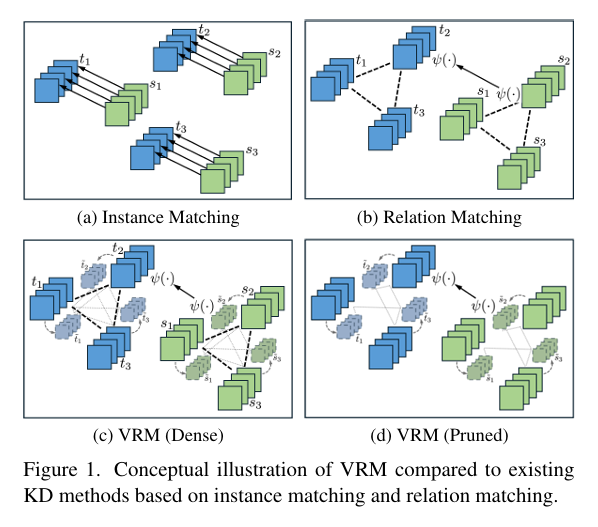

VRM: Knowledge Distillation via Virtual Relation Matching – A Breakthrough in Model Compression

In deep learning, knowledge distillation (KD) has become a key technique for transferring knowledge from large, powerful "teacher" models to smaller, more efficient "student" models. This makes it possible to deploy high-performance models on resource-constrained devices such as smartphones and edge sensors. While many KD methods focus on matching individual predictions, known […]
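For readers new to KD, the sketch below shows what "matching individual predictions" usually means in practice. It is the classic soft-target loss popularized by Hinton et al.: both models' logits are softened with a temperature `T`, and the student is penalized by the KL divergence from the teacher's distribution, scaled by `T**2`. This is the per-sample baseline that relation-based methods build on, **not** VRM itself. The function names and the default `temperature=4.0` are illustrative assumptions.

```python
import math
from typing import List


def log_softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled log-softmax, computed via log-sum-exp for stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in scaled))
    return [z - log_sum_exp for z in scaled]


def kd_loss(student_logits: List[float],
            teacher_logits: List[float],
            temperature: float = 4.0) -> float:
    """Per-sample soft-target KD loss: T^2 * KL(teacher || student).

    Illustrative sketch of classic prediction-matching distillation,
    not the VRM method described in this article.
    """
    log_p_t = log_softmax(teacher_logits, temperature)
    log_p_s = log_softmax(student_logits, temperature)
    # KL(p_t || p_s) = sum_i p_t[i] * (log p_t[i] - log p_s[i])
    kl = sum(math.exp(lt) * (lt - ls) for lt, ls in zip(log_p_t, log_p_s))
    # Scaling by T^2 keeps gradient magnitudes comparable across temperatures.
    return (temperature ** 2) * kl


if __name__ == "__main__":
    teacher = [2.0, 1.0, 0.1]
    student = [1.5, 0.5, 0.2]
    print(kd_loss(student, teacher))   # positive: the distributions differ
    print(kd_loss(teacher, teacher))   # 0.0: identical predictions
```

In a full training loop this term is typically combined with the ordinary cross-entropy on ground-truth labels using a weighting factor. Because each sample is matched in isolation, the loss ignores how samples relate to one another, which is the gap that relation-matching approaches aim to address.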