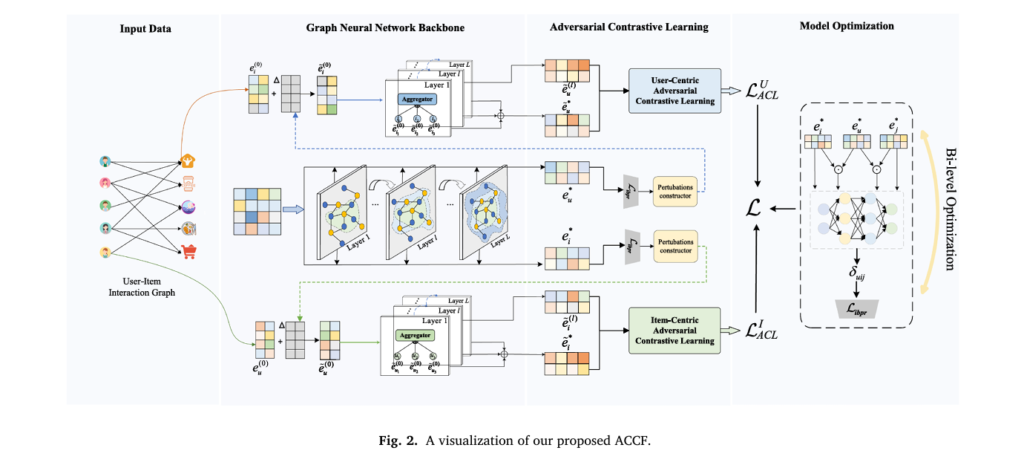

ACCF: Adversarial Contrastive Collaborative Filtering

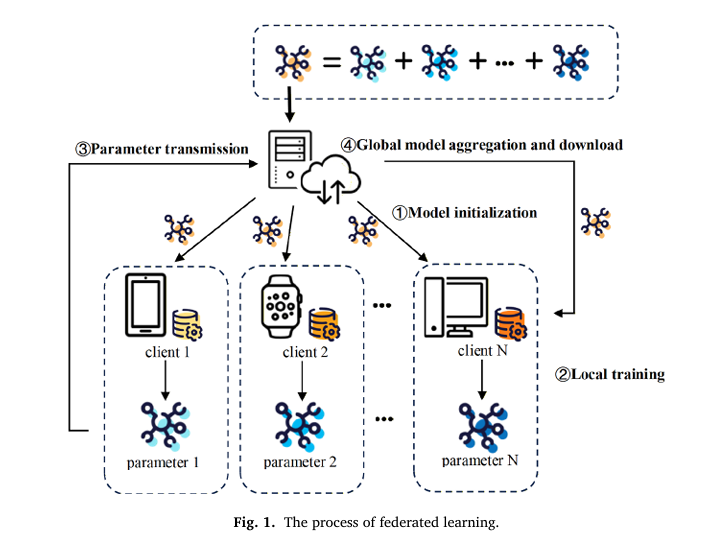

Recommender Systems · Knowledge-Based Systems 2026 · 14 min read

ACCF: Teaching Recommender Systems to Learn from Adversity Through Contrastive Learning

A novel training paradigm that integrates adversarial perturbations with instance-sensitive optimization to enhance robustness and generality in graph neural network-based […]
