7 Incredible Upsides and Downsides of Layered Self‑Supervised Knowledge Distillation (LSSKD) for Edge AI

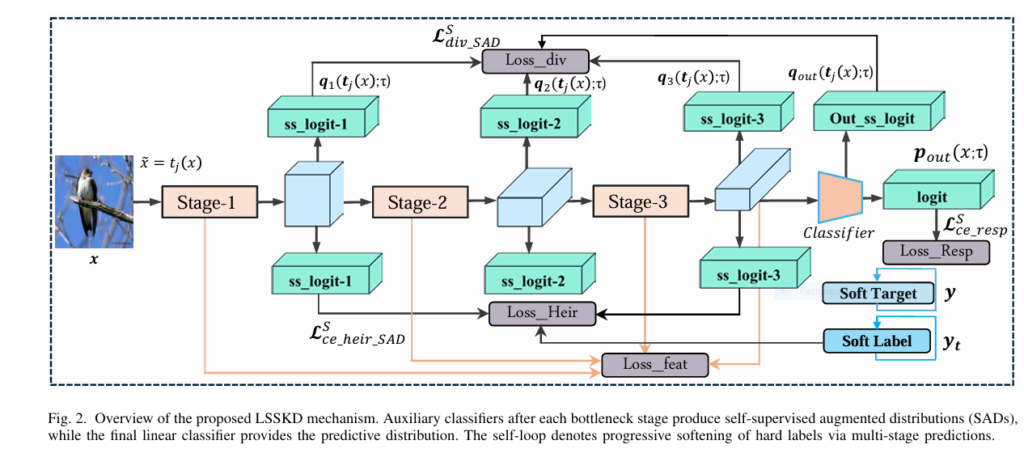

As deep learning continues its rapid rise in computer vision and multimodal sensing, deploying high‑performance models on resource‑constrained edge devices remains a major hurdle. Layered Self‑Supervised Knowledge Distillation (LSSKD) is a framework that applies self‑distillation across multiple network stages to produce compact, high‑accuracy student models without relying on massive pre‑trained teachers. In this article, we’ll […]