7 Shocking Ways Integrated Gradients BOOST Knowledge Distillation

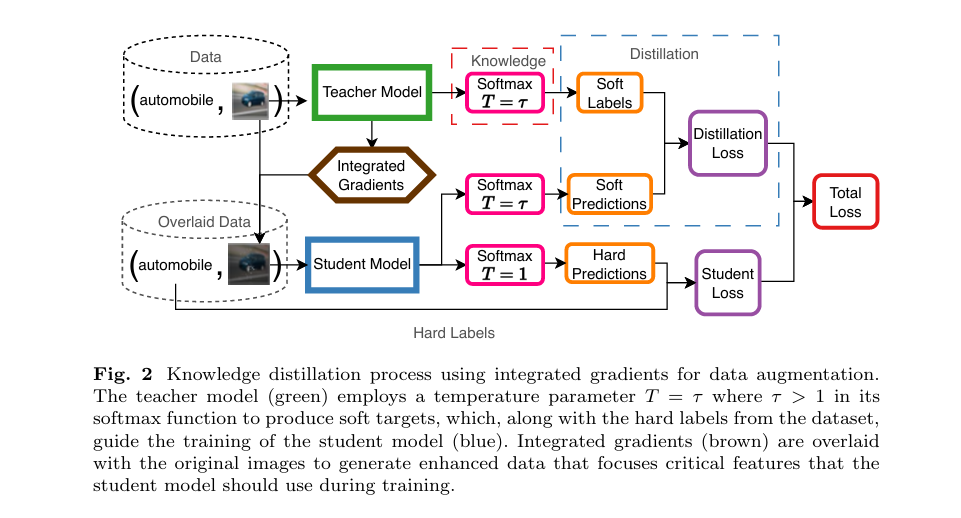

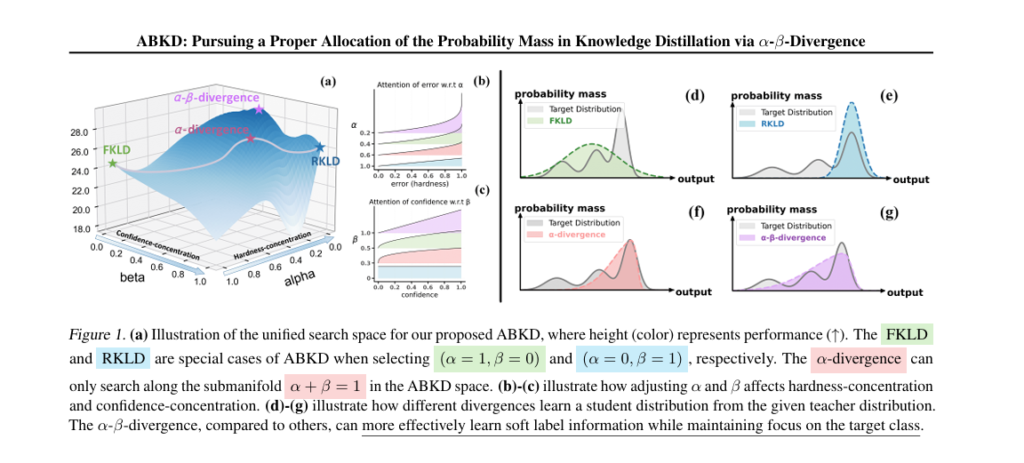

In the fast-evolving world of artificial intelligence, efficiency and accuracy are locked in a constant tug-of-war. Large foundation models like GPT-4 dazzle with their capabilities, but they are too large to run on smartphones, IoT devices, and embedded systems. This is where model compression becomes not just useful but essential. Enter Knowledge Distillation (KD): a powerful technique that transfers […]
