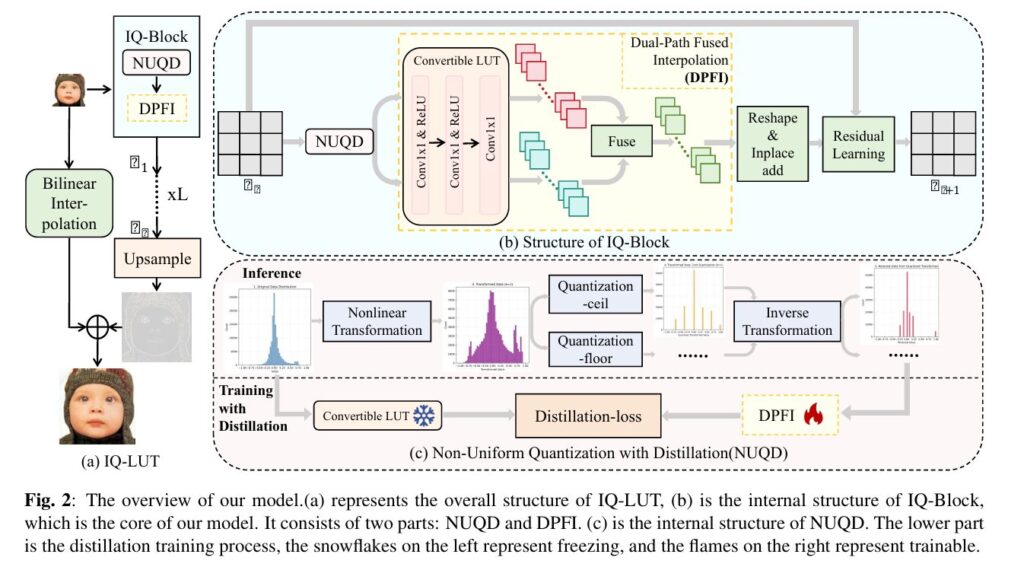

IQ-LUT: 34 KB of Super-Resolution That Beats 1.5 MB Models

Image Super-Resolution · Edge AI · arXiv:2604.07000 | Shanghai Jiao Tong University · Rockchip Electronics (2026) · 19 min read

IQ-LUT: How a 34 KB Lookup Table Beats a 1.5 MB Neural Network at Image […]
