Your Knee Brace Said It Hurts. The AI Didn’t Believe It — Until the Muscle Signal Confirmed It Was Only Moderate Pain

Donghua University’s five-agent AI system cross-checks muscle signals against facial expressions and verbal reports to configure personalized rehabilitation braces — catching cases where patients exaggerate, suppress, or simply can’t articulate their discomfort accurately. It achieves 0.88 parameter accuracy versus 0.13 for text-mining baselines.

Mr. Xiao is recovering from knee surgery. When the rehabilitation team fits his brace, he says it “hurts a lot when bending — almost unbearable.” He also says the device chafes his skin, loosens during walks, and is constantly slipping. These are real problems, but there’s a catch: his sEMG muscle signals don’t show the high-intensity fluctuation pattern you’d expect from severe pain. His facial expressions, across dozens of frames, are closer to moderate discomfort. Donghua University’s Agentic AI system catches this mismatch, classifies his actual pain as moderate rather than severe, derives six structured wearing requirements, and configures a personalized knee brace — all without a single dense annotation or explicit configuration rule. The system achieves 0.88 parameter accuracy. The text-only baseline manages 0.13.

The Problem That Makes Rehab Device Personalization So Hard

Personalized rehabilitation assistive devices sit at a uniquely difficult intersection: the people who most need them often have the hardest time accurately describing what’s wrong. Pain perception is subjective. Patients exaggerate when they want attention, suppress when they want to appear resilient, and simply can’t localize or articulate discomfort when the source is complex or distributed. A knee brace patient might say “it’s too tight everywhere” when the actual problem is localized pressure at the medial condyle during flexion. A post-stroke patient might underreport instability because they’ve adapted their gait to compensate. Getting from a messy verbal description to a precise engineering configuration — specific strap widths, hinge ROM limits, pad thicknesses, liner breathability ratings — is genuinely difficult.

Product-family-based configuration is the standard industry approach to this problem. Rather than designing each device from scratch, manufacturers offer a family of modular components (straps, hinges, pads, shells, connectors) with specified variants and parameters. Personalization becomes a selection problem: which module variant and which parameter value best matches this patient’s needs? With a well-designed product family, the solution space is finite and manufacturable. The challenge is navigating it accurately.

Two things make this navigation hard at scale. First, user requirement reports are noisy signals — subjective, inconsistent, and often biased by emotional state, cultural norms around pain expression, and the user’s limited ability to introspect on biomechanical discomfort. Second, configuration decisions are tightly coupled. Changing the strap tension affects both pain and slippage. Changing pad thickness affects both cushioning and joint range of motion. Resolving one requirement often creates a conflict with another, and those conflicts require systematic reasoning to untangle.

sEMG provides objective physiological evidence of muscle activation and load that is harder to fake or suppress than verbal reports or facial expressions. Using it as an anchor to calibrate the reliability of other facets — rather than treating all inputs as equally trustworthy — produces wearing-requirement representations that better reflect what the user’s body is actually experiencing, regardless of what they say or show.

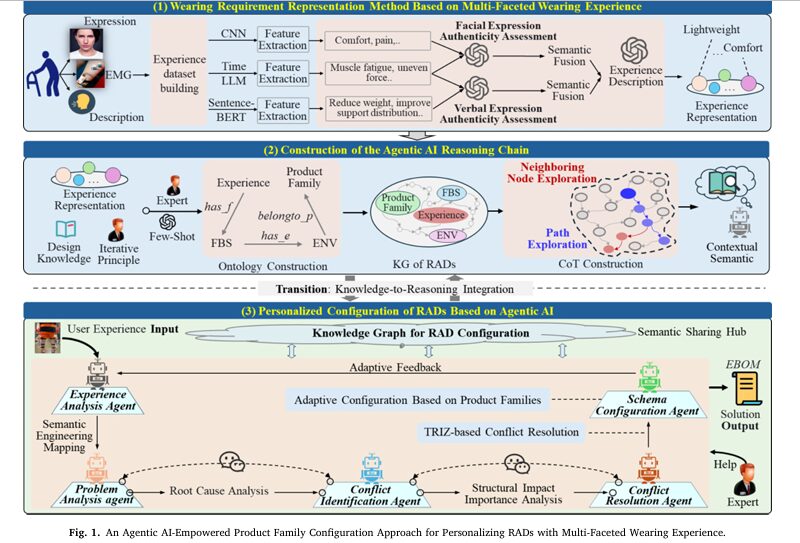

The Full Architecture: Three Layers Working Together

The system has three conceptually distinct layers that build on each other. The first derives reliable wearing requirements from messy multi-modal data. The second builds a structured knowledge map from requirements to configurable device components. The third orchestrates five specialized AI agents to navigate that map under conflicting constraints.

LAYER 1: WEARING REQUIREMENT REPRESENTATION

─────────────────────────────────────────────────────────────────────

Input: sEMG signals + facial video + verbal text description

GoogLeNet (frozen) TimeLLM (frozen backbone) Sentence-BERT

│ │ │

▼ ▼ ▼

Face embed z_e sEMG embed z_m (ANCHOR) Text embed z_t

│ │ │

└──── cos_sim(z_m, z_e) ────┘──── cos_sim(z_m, z_t) ───┘

│ │

reliability w_e reliability w_t

(sigmoid-gated) (sigmoid-gated)

│ │

└────────── concat ──────────┘

z_fusion = [z_m; w_e·z_e; w_t·z_t]

│

Multi-label head

│

Wearing-Experience Labels

(tightness, fatigue, pressure, etc.)

Training Loss = L_sup + α·L_con

L_sup = L_m + L_e + L_t (per-facet supervised)

L_con = L_con_e + L_con_t (cross-facet consistency anchored by sEMG)

─────────────────────────────────────────────────────────────────────

LAYER 2: FBS–ENV–PRODUCT FAMILY KNOWLEDGE GRAPH

─────────────────────────────────────────────────────────────────────

User Requirements U = {u1, u2, ...}

│ has_function

▼

Functions F = {F1, F2, ...}

│ has_behaviour

▼

Behaviors B = {B1, B2, ...}

│ has_structure

▼

Structures S = {S1, S2, ...} → ENV (Element, Name, Value ↑/↓)

│ has_component

▼

Product Family: Module Variants → Parameters → Structure Sites

KG: 562 entities, 1,329 semantic relations (knee brace domain)

Graph reasoning: R-GAT entity embeddings + BioBERT query encoding

─────────────────────────────────────────────────────────────────────

LAYER 3: FIVE-AGENT COLLABORATIVE CONFIGURATION

─────────────────────────────────────────────────────────────────────

EAA (Experience Analysis) → Pain level + structured requirements

↓

PAA (Problem Analysis) → Root-cause triples (Object, Attr, Value)

↓

CIA (Conflict Identification) → Conflict pairs + PageRank importance

↓

CRA (Conflict Resolution) → TRIZ principles → improvement actions

↓

SCA (Scheme Configuration) → Module substitution + parameter setting

→ Constraint checking → EBOM output

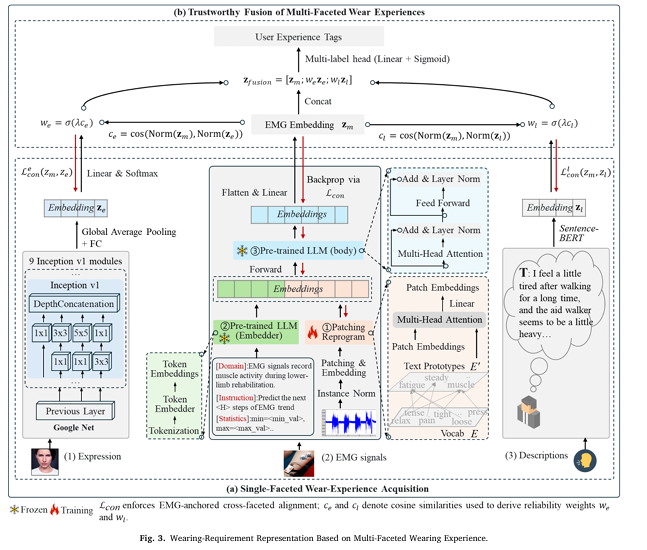

Layer 1: Making sEMG the Referee

The three input streams are processed independently before fusion. Facial expressions go through GoogLeNet — a lightweight CNN that applies parallel 1×1, 3×3, and 5×5 convolution kernels at each Inception module to capture multi-scale expression features without being computationally heavy. The output is a categorical expression label (neutral, discomfort, fatigue) with a continuous embedding vector.

sEMG signals go through TimeLLM — an approach that reprograms a frozen language model to understand time-series data without fine-tuning the backbone. The key operation is cross-attention between time patches and semantic prototypes extracted from the LLM’s word embedding matrix, which allows the frozen LLM to learn a mapping between temporal patterns in muscle activation and the LLM’s existing semantic space. The system also prepends a “Prompt-as-Prefix” with domain context (this is lower-limb rehabilitation sEMG), task instructions (predict the next H steps), and statistical descriptors, giving the model the context it needs to interpret the signal correctly.

Verbal descriptions go through Sentence-BERT, producing sentence-level semantic vectors that capture the meaning of phrases like “feels tight after walking a long time” in a comparable, similarity-searchable embedding space.

The fusion step is where the anchoring happens. The cosine similarity between sEMG embedding z_m and each of the other two facets is computed. Higher cosine similarity means the facet is telling the same story as the muscle data — more reliable. The similarity is sigmoid-gated to produce a reliability weight (we for facial, wt for text). Facets that contradict the sEMG anchor are down-weighted automatically, without any manual intervention or threshold tuning. The final fused embedding is simply the concatenation of the sEMG embedding with the reliability-weighted facial and text embeddings.

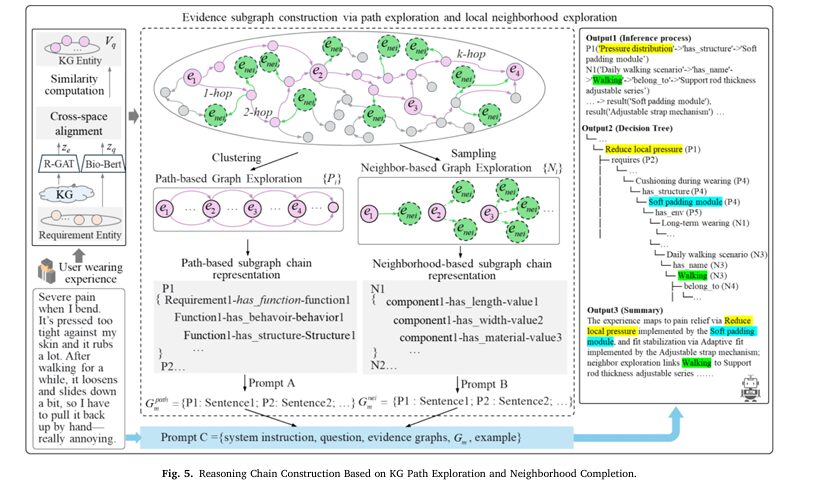

Layer 2: The Knowledge Graph That Knows What Each Component Does

The knowledge graph implements a three-layer ontology that maps abstract wearing requirements through a chain of increasingly concrete engineering concepts. At the top, abstract requirements like “reduce pain during flexion” are linked to functional descriptions of what a device needs to do. Functions link to behaviors — what the device system actually does during use. Behaviors link to structural modules — the actual physical components. Structures are then described using the ENV (Element-Name-Value) schema that specifies what attribute of what component should change and in which direction.

This chain progressively converts “it hurts when I bend” into “hinge ROM upper limit → increase” and “soft padding module → add.” The KG for the knee brace domain contains 562 entities and 1,329 semantic relations, stored in Neo4j. Reasoning over this graph — retrieving the most relevant paths from a wearing-requirement query vector to engineering configuration recommendations — uses R-GAT (Relational Graph Attention Network) for entity embeddings and BioBERT for encoding requirement queries into the same semantic space.

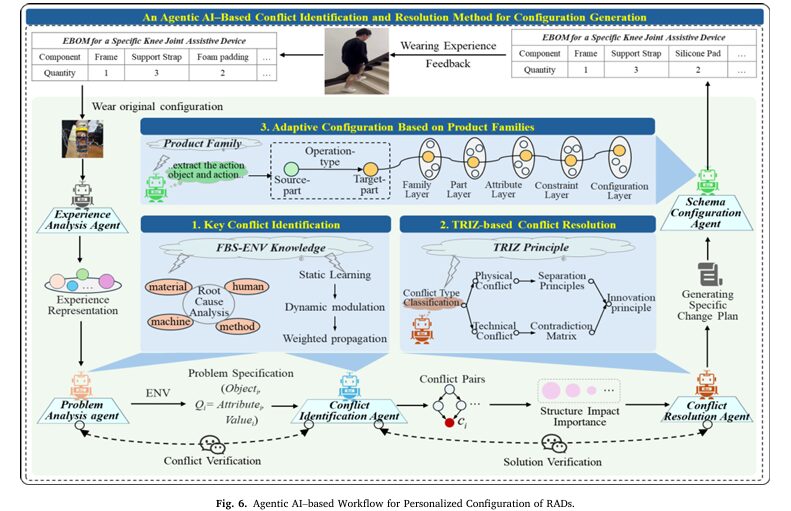

Layer 3: Five Agents, One Goal

The five-agent framework decomposes the configuration task into a sequence of increasingly specialized decisions. This decomposition is validated by the ablation study: adding each agent in sequence produces measurable improvements in every metric, and the final five-agent architecture outperforms even the four-agent structure by 4.8% F1 and 12.8% ParamAcc — confirming that explicit separation of conflict identification from conflict resolution is not redundant, it’s essential.

The Experience Analysis Agent (EAA) integrates the multi-faceted fusion output with clinical knowledge to determine pain level and extract structured wearing requirements. It explicitly handles the case where different facets tell different stories — defaulting to the sEMG anchor when verbal and facial cues conflict with physiological evidence.

The Problem Analysis Agent (PAA) converts requirements into structured conflict-ready specifications using fishbone-style root-cause analysis across Human, Machine, Material, and Method dimensions. Each cause is encoded as a triple (Object, Attribute, Value) to enable systematic downstream conflict detection.

The Conflict Identification Agent (CIA) detects cases where two requirements push in opposite directions — for example, reducing strap tension to relieve pain increases slippage risk. It ranks conflicts by structural impact importance using weighted PageRank on a conflict evidence subgraph, where edge weights combine a static pretrained association score with a dynamic context-modulation term based on the current semantic query.

The Conflict Resolution Agent (CRA) applies TRIZ inventive principles to resolve ranked conflicts. It generates candidate principle sets ranked by a weighted combination of semantic similarity to the conflict and structural relevance (how important the targeted components are in the conflict subgraph). It then maps principles into executable improvement actions — replacement, adjustment, or reconfiguration of specific components or parameters — and validates them for feasibility before passing them downstream.

The Scheme Configuration Agent (SCA) instantiates the improvement actions into a specific EBOM (Engineering Bill of Materials) using the product-family knowledge graph. It performs structural matching (semantic similarity search when exact matches aren’t found), executes the actions, and runs assembly-constraint checking and performance validation before outputting the final personalized configuration.

Results: What 0.88 ParamAcc Actually Tells You

The headline metric is Parameter Accuracy (ParamAcc) — the fraction of key configuration parameters (ROM range, material specification, pad thickness, tension limits) that fall within the reference tolerance intervals established by the expert consensus. This is a demanding metric: getting the activity type right is much easier than also specifying the exact hinge ROM upper limit, the strap width, the anti-overtightening buckle tension threshold, and the breathable liner moisture transmission rate.

| Method | Precision | Recall | F1 | Completeness | ParamAcc |

|---|---|---|---|---|---|

| Text-mining baseline | 0.55 | 0.52 | 0.53 | 0.50 | 0.13 |

| Multi-domain KG | 0.62 | 0.59 | 0.60 | 0.58 | 0.20 |

| Agents-style (open workflow) | 0.68 | 0.65 | 0.66 | 0.66 | 0.24 |

| AutoAgents (supervised tasks) | 0.71 | 0.68 | 0.69 | 0.69 | 0.37 |

| MetaGPT (manual roles) | 0.76 | 0.73 | 0.74 | 0.73 | 0.61 |

| AutoTRIZ (TRIZ + LLM) | 0.80 | 0.76 | 0.78 | 0.76 | 0.72 |

| Proposed (5-agent + KG + multi-facet) | 0.88 | 0.87 | 0.87 | 0.86 | 0.88 |

The 0.13 ParamAcc for text-mining is not a baseline failure — it reflects a genuine structural limitation of approaches that learn from text alone. Without physiological evidence to anchor subjective descriptions, and without structured constraint propagation from requirements to parameters, the mapping from “it feels tight” to “anti-overtightening buckle maximum tension: 52N” is essentially impossible to learn reliably from text patterns. The jump from 0.72 (AutoTRIZ) to 0.88 (proposed) reflects the added value of the structured product-family KG: AutoTRIZ can reason about conflicts well, but without explicitly modeling the module-component constraint relationships in a graph format, it can’t reliably ground those resolutions into specific parameter values that pass assembly constraints.

The Multi-Facet Ablation: Why All Three Signals Matter

| Input Facets | Precision | Recall | F1 | Completeness | ParamAcc |

|---|---|---|---|---|---|

| sEMG only | 0.32 | 0.24 | 0.27 | 0.22 | 0.26 |

| Expression only | 0.26 | 0.21 | 0.23 | 0.21 | 0.16 |

| Text only | 0.46 | 0.42 | 0.44 | 0.47 | 0.46 |

| sEMG + Expression | 0.52 | 0.46 | 0.49 | 0.41 | 0.38 |

| Text + Expression | 0.60 | 0.58 | 0.59 | 0.62 | 0.63 |

| sEMG + Text | 0.62 | 0.60 | 0.61 | 0.60 | 0.63 |

| sEMG + Text + Expression | 0.88 | 0.87 | 0.87 | 0.86 | 0.88 |

The jump from the best two-facet setting (0.63 ParamAcc) to the three-facet setting (0.88 ParamAcc) is striking. Text contributes semantic richness — it can describe component-level problems that sEMG can’t distinguish. sEMG provides objective intensity calibration — it catches when verbal reports exaggerate or suppress. Facial expressions add a temporal signal of discomfort that’s different from both: it captures moment-to-moment state changes during movement that neither steady-state verbal reports nor aggregate sEMG statistics can convey. The three facets are genuinely complementary, not redundant.

“When subjective feedback is inconsistent with objective physiological evidence, a single facet is insufficient for reliable characterization of true wearing requirements, whereas multi-faceted calibration improves the reliability of requirement identification.” — Zhuang, Lv, Wu et al., Advanced Engineering Informatics 2026

The Honest Limitations

The paper identifies two explicit limitations, and there are additional ones worth understanding for anyone considering deployment.

No long-term memory mechanism. The current system relies on real-time retrieval for each interaction session. There’s no mechanism for accumulating insights from multiple visits with the same patient — the system doesn’t know that Mr. Xiao’s pain consistently peaks during stair descent but not level walking, or that his slippage problem got worse after he switched to different footwear. Clinical rehabilitation is fundamentally longitudinal, and the system’s inability to build persistent patient models limits its effectiveness for follow-up reconfiguration. The authors explicitly flag this as a priority for future work.

Validated only in simulated settings. The training dataset was constructed from 40 healthy volunteers simulating discomfort, not from actual patients with pathological conditions. Real rehabilitation patients have anatomical abnormalities, neurological differences, and psychological factors that simply aren’t captured by healthy volunteers performing standardized tasks. The sEMG patterns of a post-stroke patient with spasticity are fundamentally different from a healthy person voluntarily simulating leg weakness. The system has not been tested in a real clinical environment with real patients under clinical conditions — this is the most significant gap between the current work and clinical deployment.

Volunteer-based proxy data introduces distribution shift. The 40 volunteers performed tasks under controlled conditions to generate “simulated pain” at different levels. But the pain signals of someone genuinely post-surgical, wearing a device that actually helps or actually hurts, will have different statistical properties than someone voluntarily holding a painful posture. The sEMG amplitude ranges, the temporal dynamics of expression changes, and the vocabulary of verbal reports will all differ. How robust the reliability-weighting mechanism is to this distribution shift is unknown.

Cross-subject alignment depends on baseline calibration quality. The baseline measurement protocol (each new user performs a brief standardized movement to collect reference sEMG and facial data) is a practical solution to inter-subject variability. But baseline quality varies: if a patient is already in pain during their baseline measurement, the calibration reference will be off. If a patient’s muscle anatomy is substantially different from the training cohort, linear scaling may be insufficient to align their signals. The system has no explicit mechanism for detecting and flagging poor calibration quality.

TRIZ principle mapping is heuristic. The conflict resolution agent generates candidate TRIZ inventive principles ranked by a combination of semantic similarity and structural importance. But the mapping from “dynamic parameters” or “segmentation” principles to actual engineering actions (raise buckle tension upper bound, widen strap contact area) requires the LLM to reason about physical engineering implications. This reasoning can fail for unusual material combinations, novel structural configurations outside the training distribution of the LLM, or cases where multiple valid translations of a TRIZ principle exist.

The expert-consensus EBOM used as ground truth was established through multi-stakeholder deliberation for a carefully selected case study subject. In production deployment, such expert consensus is expensive to produce and may not be available for comparison. The system’s performance metrics are relative to this expert consensus — they don’t directly measure whether the configured device actually improves patient outcomes, reduces pain, or prevents secondary injury. Clinical validation with patient outcome data remains necessary before any production deployment.

Complete System Implementation (Python)

The implementation covers all major components from the paper across 12 sections: multi-faceted data structures, sEMG-anchored consistency loss, reliability-weighted fusion model, FBS–ENV–product family knowledge graph builder, R-GAT + BioBERT KG reasoner, path exploration and neighborhood completion algorithms, all five specialized agents (EAA, PAA, CIA, CRA, SCA), weighted PageRank structural impact scoring, TRIZ principle mapping, EBOM generation and constraint checking, evaluation metrics, and a complete end-to-end demo with Mr. Xiao’s case.

# ==============================================================================

# Agentic AI-Empowered Product Family Configuration for Rehabilitation Devices

# Paper: Advanced Engineering Informatics 74 (2026) 104695

# Authors: Zhuang et al., Donghua University

# ==============================================================================

# Sections:

# 1. Imports & Data Structures

# 2. sEMG-Anchored Consistency Loss (Eq. 2-3)

# 3. Multi-Faceted Fusion Model (Eq. 4)

# 4. FBS–ENV–Product Family Knowledge Graph

# 5. KG Reasoning: Path Exploration & Neighborhood Completion (Algorithm 1)

# 6. Experience Analysis Agent (EAA)

# 7. Problem Analysis Agent (PAA)

# 8. Conflict Identification Agent (CIA) with PageRank (Eq. 5-6)

# 9. Conflict Resolution Agent (CRA) with TRIZ (Eq. 7-9)

# 10. Scheme Configuration Agent (SCA) with Constraint Check (Eq. 10-11)

# 11. EBOM Evaluation Metrics

# 12. End-to-End Demo (Mr. Xiao Case Study)

# ==============================================================================

from __future__ import annotations

import math, json, warnings

from dataclasses import dataclass, field

from typing import Dict, List, Optional, Tuple

from collections import defaultdict

import torch

import torch.nn as nn

import torch.nn.functional as F

from torch import Tensor

import numpy as np

warnings.filterwarnings("ignore")

# ─── SECTION 1: Data Structures ───────────────────────────────────────────────

@dataclass

class WearingExperienceInput:

"""Raw multi-faceted input from a single wearing session."""

user_id: str

semg_sequence: Optional[np.ndarray] = None # (N_channels, T_steps)

facial_frame: Optional[np.ndarray] = None # (H, W, 3) keyframe image

verbal_text: str = "" # User's verbal description

nprs_score: Optional[int] = None # Numeric Pain Rating 0-10

action_type: str = "walking" # sit_to_stand, stair_descent, walking, stair_ascent

@dataclass

class WearingRequirement:

"""A structured wearing requirement extracted from experience analysis."""

req_id: str

need: str # e.g., "flexion-extension assistance and pain reduction"

target_modules: List[str] # e.g., ["HingeModule", "StrapAssembly"]

evidence: Dict # Supporting evidence from each facet

priority: int = 1 # Higher = more urgent

@dataclass

class ProblemSpec:

"""ENV-normalized problem specification from root-cause analysis."""

qid: str

req_id: str

dimension: str # Human, Machine, Material, Method

obj: str # Cause object (e.g., "StrapAssembly")

attribute: str # Attribute (e.g., "tension_level")

value: str # Current state

direction: str # increase, decrease, balance

mechanism: str # Causal chain description

@dataclass

class ConflictPair:

"""A detected conflict between two performance objectives."""

cid: str

conflict_type: str # physical or technical

involved_modules: List[str]

parameter: str # The contested parameter

side_A: str # e.g., "low strap tension" (for pain)

side_B: str # e.g., "high strap tension" (for stability)

priority: int = 1

structural_impact: float = 0.0 # Weighted PageRank importance score

@dataclass

class TRIZAction:

"""A TRIZ-derived improvement action."""

cid: str

principle_name: str # e.g., "Dynamicity/Variable Parameters"

action_type: str # replace, adjust, restructure

target_module: str

change_description: str

expected_effect: str

feasibility: bool = True

@dataclass

class EBOMItem:

"""A single line item in the Engineering Bill of Materials."""

component: str # e.g., "Adjustable Hinge"

module_id: str # e.g., "HG-02"

parameters: Dict[str, str] # e.g., {"ROM": "0-120°"}

quantity: int = 1

modification_note: str = ""

delta_experience: str = "" # Expected improvement in wearing experience

# ─── SECTION 2: sEMG-Anchored Consistency Loss ────────────────────────────────

class CrossFacetConsistencyLoss(nn.Module):

"""

sEMG-anchored cross-facet consistency loss (Section 3.1.3, Eq. 2-3).

L_con = L_con_e(z_m, z_e) + L_con_t(z_m, z_t)

Penalizes inconsistencies between sEMG–facial and sEMG–text embedding pairs.

sEMG is chosen as the anchor because it provides relatively stable

physiological objectivity compared to facial and verbal expressions.

Backpropagated to the facet-specific projection/mapping layers only;

the frozen TimeLLM backbone is NOT updated.

Implementation based on geometric multimodal contrastive representation

learning [Poklukar et al., 2022], adapted for rehabilitation sEMG anchoring.

"""

def __init__(self, temperature: float = 0.07):

super().__init__()

self.temperature = temperature

def forward(

self,

z_m: Tensor, # (B, D) sEMG embedding (anchor)

z_e: Tensor, # (B, D) facial expression embedding

z_t: Tensor, # (B, D) text embedding

) -> Tuple[Tensor, Tensor, Tensor]:

"""

Returns (L_con, L_con_e, L_con_t) where L_con = L_con_e + L_con_t.

Uses NT-Xent style contrastive loss anchored by sEMG.

"""

# Normalize all embeddings to unit sphere

z_m = F.normalize(z_m, dim=-1)

z_e = F.normalize(z_e, dim=-1)

z_t = F.normalize(z_t, dim=-1)

B = z_m.shape[0]

def _pairwise_loss(anchor: Tensor, other: Tensor) -> Tensor:

# Similarity matrix: (B, B)

sim = (anchor @ other.T) / self.temperature

labels = torch.arange(B, device=anchor.device)

# NT-Xent: positive is the matched pair (diagonal)

loss = F.cross_entropy(sim, labels) + F.cross_entropy(sim.T, labels)

return loss / 2

L_con_e = _pairwise_loss(z_m, z_e) # sEMG ↔ Facial

L_con_t = _pairwise_loss(z_m, z_t) # sEMG ↔ Text

return L_con_e + L_con_t, L_con_e, L_con_t

# ─── SECTION 3: Multi-Faceted Fusion Model ────────────────────────────────────

class MultiFacetedFusionModel(nn.Module):

"""

sEMG-anchored reliability-weighted fusion model (Section 3.1.3, Eq. 4).

Architecture:

- sEMG branch: TimeLLM-style projection (stub: linear for demo)

- Facial branch: GoogLeNet-style projection (stub: linear for demo)

- Text branch: Sentence-BERT projection (stub: linear for demo)

Reliability weighting:

c_e = cos(Norm(z_m), Norm(z_e)) # sEMG–facial consistency

c_l = cos(Norm(z_m), Norm(z_l)) # sEMG–text consistency

w_e = sigmoid(λ · c_e) # facial reliability weight

w_l = sigmoid(λ · c_l) # text reliability weight

Fusion:

z_fusion = [z_m; w_e·z_e; w_l·z_l] (concatenation)

Training:

L = L_sup + α·L_con

L_sup = L_m + L_e + L_t (per-facet supervised)

Only projection layers are trained; TimeLLM/GoogLeNet/SBERT backbones

are kept FROZEN to preserve generalization.

"""

def __init__(

self,

emb_dim: int = 128, # Shared embedding dimension D

num_classes: int = 4, # Pain levels: normal/mild/moderate/severe

num_exp_labels: int = 6, # Multi-label experience outputs

lambda_temp: float = 5.0, # λ temperature for reliability sigmoid

alpha_con: float = 0.5, # α: consistency loss weight

):

super().__init__()

self.lambda_temp = lambda_temp

self.alpha_con = alpha_con

self.emb_dim = emb_dim

# Facet-specific projection heads (trainable)

self.emg_proj = nn.Sequential(

nn.Linear(256, emb_dim), nn.GELU(), nn.LayerNorm(emb_dim)

)

self.face_proj = nn.Sequential(

nn.Linear(512, emb_dim), nn.GELU(), nn.LayerNorm(emb_dim)

)

self.text_proj = nn.Sequential(

nn.Linear(768, emb_dim), nn.GELU(), nn.LayerNorm(emb_dim)

)

# Per-facet classification heads (for supervised L_sup)

self.emg_head = nn.Linear(emb_dim, num_classes)

self.face_head = nn.Linear(emb_dim, num_classes)

self.text_head = nn.Linear(emb_dim, num_classes)

# Fused multi-label head (wearing experience labels)

self.fusion_head = nn.Sequential(

nn.Linear(emb_dim * 3, emb_dim),

nn.GELU(),

nn.Linear(emb_dim, num_exp_labels),

nn.Sigmoid() # Multi-label output

)

self.consistency_loss = CrossFacetConsistencyLoss()

def compute_reliability_weights(

self, z_m: Tensor, z_e: Tensor, z_t: Tensor

) -> Tuple[Tensor, Tensor]:

"""

Compute sEMG-anchored reliability weights for facial and text facets.

(Section 3.1.3 — sEMG-anchored consistency measurement)

c_e = cosine_similarity(z_m_normalized, z_e_normalized)

w_e = sigmoid(λ · c_e)

Higher c_e → facet is telling the same story as sEMG → higher weight.

Lower c_e → facet contradicts physiological evidence → lower weight.

"""

z_m_n = F.normalize(z_m, dim=-1)

z_e_n = F.normalize(z_e, dim=-1)

z_t_n = F.normalize(z_t, dim=-1)

c_e = (z_m_n * z_e_n).sum(dim=-1, keepdim=True) # (B, 1)

c_t = (z_m_n * z_t_n).sum(dim=-1, keepdim=True) # (B, 1)

w_e = torch.sigmoid(self.lambda_temp * c_e)

w_t = torch.sigmoid(self.lambda_temp * c_t)

return w_e, w_t

def forward(

self,

emg_raw: Tensor, # (B, 256) sEMG backbone features

face_raw: Tensor, # (B, 512) GoogLeNet backbone features

text_raw: Tensor, # (B, 768) Sentence-BERT features

pain_labels: Optional[Tensor] = None, # (B,) for training

) -> Dict:

"""

Forward pass producing fused embedding, reliability weights,

per-facet predictions, and training losses.

"""

# Project each facet to shared embedding space

z_m = self.emg_proj(emg_raw) # (B, D) — anchor

z_e = self.face_proj(face_raw) # (B, D)

z_t = self.text_proj(text_raw) # (B, D)

# Compute reliability weights (Eq. in Section 3.1.3)

w_e, w_t = self.compute_reliability_weights(z_m, z_e, z_t)

# Reliability-weighted fusion (Eq. 4)

z_fusion = torch.cat([z_m, w_e * z_e, w_t * z_t], dim=-1) # (B, 3D)

# Multi-label wearing experience output

experience_labels = self.fusion_head(z_fusion) # (B, num_exp_labels)

output = {

'z_m': z_m, 'z_e': z_e, 'z_t': z_t, 'z_fusion': z_fusion,

'w_e': w_e, 'w_t': w_t, 'experience_labels': experience_labels,

}

if pain_labels is not None:

# Per-facet supervised losses (L_sup)

L_m = F.cross_entropy(self.emg_head(z_m), pain_labels)

L_e = F.cross_entropy(self.face_head(z_e), pain_labels)

L_t = F.cross_entropy(self.text_head(z_t), pain_labels)

L_sup = L_m + L_e + L_t

# Cross-facet consistency loss anchored by sEMG (Eq. 2-3)

L_con, L_con_e, L_con_t = self.consistency_loss(z_m, z_e, z_t)

# Total loss (Eq. 3): L = L_sup + α·L_con

L_total = L_sup + self.alpha_con * L_con

output.update({

'loss': L_total, 'L_sup': L_sup, 'L_con': L_con,

'L_m': L_m, 'L_e': L_e, 'L_t': L_t,

})

return output

# ─── SECTION 4: FBS–ENV–Product Family Knowledge Graph ────────────────────────

class RADKnowledgeGraph:

"""

FBS–ENV–Product Family Knowledge Graph for RAD configuration.

(Section 3.2.1 — Ontology model construction, Fig. 4)

Three-layer ontology:

Requirements → (has_function) → Functions

Functions → (has_behaviour) → Behaviors

Behaviors → (has_structure) → Structures (ENV-normalized)

Structures → (has_component) → Product Family Components

Components → (has_parametric) → Parameters

In production: stored in Neo4j graph database.

This implementation uses in-memory adjacency for demonstration.

The knee brace KG contains 562 entities and 1,329 semantic relations.

"""

def __init__(self):

self.nodes: Dict[str, Dict] = {}

self.edges: List[Tuple[str, str, str]] = [] # (source, relation, target)

self._build_knee_brace_kg()

def _build_knee_brace_kg(self):

"""

Build a representative subset of the LLKJ-A knee brace KG.

In production, this is populated from configuration case records

and product-family specifications via LLM-assisted extraction.

"""

# Functions (what the device must do)

functions = {

"F_reduce_pressure": {"type": "Function", "label": "Reduce local pressure"},

"F_support_joint": {"type": "Function", "label": "Support knee joint"},

"F_allow_flexion": {"type": "Function", "label": "Allow flexion-extension"},

"F_prevent_slip": {"type": "Function", "label": "Prevent brace slippage"},

"F_skin_comfort": {"type": "Function", "label": "Skin-friendly contact"},

}

# Behaviors (how the device achieves functions)

behaviors = {

"B_cushion": {"type": "Behavior", "label": "Cushioning during wearing"},

"B_stiffness": {"type": "Behavior", "label": "Structural stiffness response"},

"B_rom_control": {"type": "Behavior", "label": "ROM limit control"},

"B_adaptive_fit": {"type": "Behavior", "label": "Adaptive fit mechanism"},

"B_breathability": {"type": "Behavior", "label": "Moisture transmission"},

}

# Structures (physical components, ENV-normalized)

structures = {

"S_soft_padding": {"type": "Structure", "label": "Soft padding module",

"env_element": "CushionPad", "env_attr": "thickness",

"env_direction": "increase"},

"S_strap": {"type": "Structure", "label": "Adjustable strap mechanism",

"env_element": "StrapAssembly", "env_attr": "tension_level",

"env_direction": "adjust"},

"S_hinge": {"type": "Structure", "label": "Adjustable hinge",

"env_element": "HingeModule", "env_attr": "ROM_upper_limit",

"env_direction": "increase"},

"S_liner": {"type": "Structure", "label": "Breathable liner",

"env_element": "LinerModule", "env_attr": "moisture_transmission",

"env_direction": "increase"},

"S_support_rod": {"type": "Structure", "label": "Support rod thickness adjustable series",

"env_element": "SupportModule", "env_attr": "stiffness",

"env_direction": "adjust"},

}

# Product family components (LLKJ-A series)

components = {

"C_PD10": {"type": "Component", "label": "Cushion Pad PD-10",

"params": {"thickness_mm": "10"}},

"C_PD12": {"type": "Component", "label": "Cushion Pad PD-12",

"params": {"thickness_mm": "12"}},

"C_ST03": {"type": "Component", "label": "Strap Assembly ST-03",

"params": {"width_mm": "50", "length_mm": "380-420"}},

"C_HG02": {"type": "Component", "label": "Adjustable Hinge HG-02",

"params": {"ROM_degrees": "0-120"}},

"C_LN_BR2": {"type": "Component", "label": "Breathable Liner LN-BR2",

"params": {"moisture_g_m2_24h": ">=8000"}},

"C_BK_AO1": {"type": "Component", "label": "Anti-overtightening Buckle BK-AO1",

"params": {"max_tension_N": "52"}},

"C_DF01": {"type": "Component", "label": "Pressure Diffusion Plate DF-01",

"params": {"material": "TPU", "thickness_mm": "1.0"}},

}

self.nodes.update(functions)

self.nodes.update(behaviors)

self.nodes.update(structures)

self.nodes.update(components)

# FBS → ENV → Product Family edges

fbs_edges = [

("F_reduce_pressure", "has_behaviour", "B_cushion"),

("F_support_joint", "has_behaviour", "B_stiffness"),

("F_allow_flexion", "has_behaviour", "B_rom_control"),

("F_prevent_slip", "has_behaviour", "B_adaptive_fit"),

("F_skin_comfort", "has_behaviour", "B_breathability"),

("B_cushion", "has_structure", "S_soft_padding"),

("B_stiffness", "has_structure", "S_support_rod"),

("B_rom_control", "has_structure", "S_hinge"),

("B_adaptive_fit", "has_structure", "S_strap"),

("B_breathability", "has_structure", "S_liner"),

("S_soft_padding", "has_component", "C_PD10"),

("S_soft_padding", "has_component", "C_PD12"),

("S_strap", "has_component", "C_ST03"),

("S_strap", "has_component", "C_BK_AO1"),

("S_hinge", "has_component", "C_HG02"),

("S_liner", "has_component", "C_LN_BR2"),

("S_soft_padding", "has_component", "C_DF01"),

]

self.edges.extend(fbs_edges)

def get_neighbors(self, node_id: str) -> List[Tuple[str, str, str]]:

"""Get all 1-hop neighbors: (source, relation, target)"""

return [(s, r, t) for (s, r, t) in self.edges

if s == node_id or t == node_id]

def get_adjacency(self) -> Dict[str, List[str]]:

"""Undirected adjacency list for PageRank computation."""

adj = defaultdict(list)

for (s, r, t) in self.edges:

adj[s].append(t)

adj[t].append(s)

return dict(adj)

# ─── SECTION 5: KG Reasoning — Path & Neighborhood Exploration ────────────────

class KGReasoningChain:

"""

KG path exploration and neighborhood completion for agent reasoning.

(Section 3.2.2, Algorithm 1)

The reasoning chain:

1) Encode requirement query with BioBERT → z_q

2) Match KG entity embeddings (R-GAT) → Top-K seed entities V_q

3) k-hop path search from seeds → path evidence {P_i}

4) 1-hop neighborhood expansion with relevance filtering → {N_j}

5) Linearize evidence → inject into agent prompt (Prompts A/B/C)

This implementation uses cosine similarity on random embeddings as

a placeholder for R-GAT + BioBERT in the production system.

"""

def __init__(self, kg: RADKnowledgeGraph, emb_dim: int = 64):

self.kg = kg

self.emb_dim = emb_dim

# Random entity embeddings (production: R-GAT trained embeddings)

self.entity_embeddings = {

nid: torch.randn(emb_dim)

for nid in kg.nodes

}

def encode_requirement(self, requirement_text: str) -> Tensor:

"""

Encode a wearing requirement into a query vector.

Production: BioBERT or domain-fine-tuned encoder.

"""

# Placeholder: random vector (production: BioBERT embedding)

torch.manual_seed(hash(requirement_text) % 2**31)

return torch.randn(self.emb_dim)

def retrieve_seeds(self, query: Tensor, top_k: int = 3) -> List[str]:

"""Retrieve top-K seed entities by cosine similarity."""

q_norm = F.normalize(query.unsqueeze(0), dim=-1)[0]

scores = {}

for nid, emb in self.entity_embeddings.items():

e_norm = F.normalize(emb.unsqueeze(0), dim=-1)[0]

scores[nid] = (q_norm * e_norm).sum().item()

sorted_nodes = sorted(scores, key=scores.get, reverse=True)

return sorted_nodes[:top_k]

def path_explore(

self, seeds: List[str], hop_limit: int = 2, top_k: int = 5

) -> List[List[Tuple]]:

"""

k-hop path search from seed entities.

Returns top-K paths ranked by average node score.

(Algorithm 1: PathExplore)

"""

adj = defaultdict(list)

for (s, r, t) in self.kg.edges:

adj[s].append((r, t))

adj[t].append((r, s)) # Undirected

all_paths = []

for seed in seeds:

# BFS path collection

queue = [(seed, [(seed, "")], 0)]

while queue:

node, path, depth = queue.pop(0)

if depth >= hop_limit: continue

for (rel, neighbor) in adj[node]:

new_path = path + [(neighbor, rel)]

if len(new_path) > 1:

all_paths.append(new_path)

queue.append((neighbor, new_path, depth + 1))

# Score paths by average entity score (placeholder: path length)

all_paths.sort(key=lambda p: len(p), reverse=True)

return all_paths[:top_k]

def neighbor_expand(

self, path_nodes: List[str], relevance_threshold: float = 0.3, top_k: int = 5

) -> List[Tuple]:

"""

1-hop neighborhood expansion with relevance filtering.

(Algorithm 1: NeighborExplore)

"""

neighbor_facts = []

seen = set(path_nodes)

for node_id in path_nodes:

for (s, r, t) in self.kg.get_neighbors(node_id):

neighbor = t if s == node_id else s

if neighbor not in seen:

neighbor_facts.append((node_id, r, neighbor))

seen.add(neighbor)

return neighbor_facts[:top_k]

def verbalize_paths(self, paths: List[List[Tuple]]) -> List[str]:

"""Convert KG paths to natural-language evidence sentences (Prompt A)."""

sentences = []

for i, path in enumerate(paths):

chain = " → ".join(

f"'{nid}' -['{rel}']->'" if rel else f"'{nid}'"

for (nid, rel) in path

)

node_labels = [

self.kg.nodes.get(nid, {}).get('label', nid)

for (nid, _) in path

]

label_chain = " → ".join(node_labels)

sentences.append(f"Path-based Evidence {i+1}: {label_chain}")

return sentences

def build_reasoning_prompt(

self,

requirement_text: str,

top_k_seeds: int = 3,

hop_limit: int = 2,

) -> Tuple[str, List[str]]:

"""

Full Algorithm 1: Build LLM-ready prompt with KG evidence.

Returns (assembled_prompt, evidence_sentences).

"""

query = self.encode_requirement(requirement_text)

seeds = self.retrieve_seeds(query, top_k=top_k_seeds)

paths = self.path_explore(seeds, hop_limit=hop_limit)

path_nodes = [nid for path in paths for (nid, _) in path]

neighbors = self.neighbor_expand(path_nodes)

path_evidence = self.verbalize_paths(paths)

neighbor_evidence = [

f"Neighbor-based Evidence {i+1}: '{s}' -['{r}']-> '{t}'"

for i, (s, r, t) in enumerate(neighbors)

]

prompt = (

f"Wearing Experience: {requirement_text}\n\n"

"### Path-based reasoning graph\n" +

"\n".join(path_evidence) +

"\n\n### Neighbor-based reasoning graph\n" +

"\n".join(neighbor_evidence)

)

return prompt, path_evidence + neighbor_evidence

# ─── SECTION 6: Experience Analysis Agent (EAA) ───────────────────────────────

class ExperienceAnalysisAgent:

"""

EAA: Fuses multi-faceted experience to determine pain level and requirements.

(Section 3.3, Appendix C.2.1)

Key capability: cross-facet calibration — detecting when verbal/facial

reports conflict with sEMG evidence and defaulting to the physiological anchor.

Mr. Xiao example:

- Verbal: "hurts a lot when bending — almost unbearable" → suggests severe

- Facial: moderate pain expression in most frames

- sEMG: amplitude fluctuation below severe threshold

→ Decision: moderate pain (sEMG + facial override verbal exaggeration)

"""

PAIN_LEVELS = ["normal", "mild", "moderate", "severe"]

def __init__(self, fusion_model: MultiFacetedFusionModel):

self.fusion_model = fusion_model

self.fusion_model.eval()

def analyze(

self,

experience: WearingExperienceInput,

emg_raw: Optional[Tensor] = None,

face_raw: Optional[Tensor] = None,

text_raw: Optional[Tensor] = None,

) -> Tuple[str, List[WearingRequirement]]:

"""

Analyze multi-faceted experience and extract structured requirements.

Returns (pain_level, requirements_list)

"""

# Placeholder embeddings if real backbones not available

B = 1

emg_raw = emg_raw if emg_raw is not None else torch.randn(1, 256)

face_raw = face_raw if face_raw is not None else torch.randn(1, 512)

text_raw = text_raw if text_raw is not None else torch.randn(1, 768)

with torch.no_grad():

out = self.fusion_model(emg_raw, face_raw, text_raw)

# Reliability weights: w_e for facial, w_t for text

w_e = out['w_e'].item()

w_t = out['w_t'].item()

# Pain level decision (production: per-facet classifier + weighted vote)

# Here: heuristic based on NPRS and reliability weights

nprs = experience.nprs_score or 5

pain_level = self._determine_pain_level(nprs, w_e, w_t)

# Extract requirements from verbal description

requirements = self._extract_requirements(experience.verbal_text, pain_level)

print(f" [EAA] Pain level: {pain_level} (facial reliability: {w_e:.3f}, text reliability: {w_t:.3f})")

print(f" [EAA] Extracted {len(requirements)} wearing requirements")

return pain_level, requirements

def _determine_pain_level(self, nprs: int, w_e: float, w_t: float) -> str:

"""

Cross-facet calibrated pain level determination.

When text/verbal reports high pain but physiological evidence disagrees

(low reliability weights), the system defaults toward moderate.

"""

# Reliability-adjusted NPRS: suppress if facets disagree with sEMG

adjusted_nprs = nprs * ((0.4 * w_e) + (0.4 * w_t) + 0.2)

if adjusted_nprs < 1.0: return "normal"

elif adjusted_nprs < 3.5: return "mild"

elif adjusted_nprs < 6.5: return "moderate"

else: return "severe"

def _extract_requirements(self, text: str, pain_level: str) -> List[WearingRequirement]:

"""

Heuristic requirement extraction from verbal description.

Production: LLM with structured prompt and KG-grounded output.

"""

requirements = []

req_map = [

("bend", "R1", "Flexion-extension assistance and pain reduction during bending",

["HingeModule", "SupportModule"], 1),

("tight", "R2", "Local pressure relief and cushioning",

["CushionPad", "PressureDiffusionPlate"], 2),

("scrap", "R3", "Anti-chafing and skin-friendly fit",

["LinerModule"], 3),

("loos", "R4", "Anti-loosening and anti-slippage",

["StrapAssembly", "BuckleModule"], 1),

("slip", "R4", "Anti-loosening and anti-slippage",

["StrapAssembly"], 1),

("adjust", "R5", "Quick repositioning with easy adjustment",

["BuckleModule", "StrapAssembly"], 4),

]

seen_ids = set()

text_lower = text.lower()

for (keyword, rid, need, modules, priority) in req_map:

if keyword in text_lower and rid not in seen_ids:

requirements.append(WearingRequirement(

req_id=rid, need=need, target_modules=modules,

evidence={"text": keyword, "pain_level": pain_level},

priority=priority

))

seen_ids.add(rid)

return requirements

# ─── SECTION 7: Problem Analysis Agent (PAA) ─────────────────────────────────

class ProblemAnalysisAgent:

"""

PAA: Root-cause analysis → ENV-normalized problem specifications.

(Section 3.3.1, Appendix C.2.2)

Fishbone (Ishikawa) decomposition across 4 dimensions:

Human: user behavior, compliance, tightening strategy

Machine: device structure, stiffness, joint effectiveness

Material: skin-contact layer, friction characteristics

Method: fitting workflow, validation process

Each cause is encoded as a triple (Object, Attribute, Value/Direction)

following the ENV schema, enabling systematic downstream conflict detection.

"""

CAUSE_TEMPLATES = {

"Human": [

("StrapAssembly", "tension_level", "high", "increase"),

("FittingBehavior", "compliance", "low", "increase"),

],

"Machine": [

("MainSupportFrame", "stiffness", "low", "increase"),

("HingeModule", "flexion_support_effectiveness", "low", "increase"),

("CushionPad", "peak_contact_pressure", "high", "decrease"),

],

"Material": [

("LinerModule", "friction_coefficient", "high", "decrease"),

("LinerModule", "moisture_transmission", "low", "increase"),

],

"Method": [

("FittingProcess", "constraint_checking", "weak", "improve"),

("AdjustmentProcess", "iterative_convergence", "low", "increase"),

],

}

def analyze(

self,

requirements: List[WearingRequirement],

) -> List[ProblemSpec]:

"""

Perform root-cause analysis and produce ENV-normalized problem specs.

Production: LLM-driven fishbone analysis with KG constraint lookup.

"""

specs = []

qid_counter = 0

for req in requirements:

for dim, causes in self.CAUSE_TEMPLATES.items():

relevant_causes = [

c for c in causes

if any(mod in c[0] or c[0] in mod

for mod in req.target_modules)

or req.priority <= 2 # High priority reqs get all dimensions

]

for (obj, attr, val, direction) in relevant_causes[:2]:

specs.append(ProblemSpec(

qid=f"Q{qid_counter}", req_id=req.req_id,

dimension=dim, obj=obj, attribute=attr,

value=val, direction=direction,

mechanism=f"{dim} dimension: {obj}.{attr}={val} → needs {direction}"

))

qid_counter += 1

print(f" [PAA] Generated {len(specs)} problem specifications across 4 dimensions")

return specs

# ─── SECTION 8: Conflict Identification Agent (CIA) with PageRank ─────────────

class ConflictIdentificationAgent:

"""

CIA: Detects conflict pairs and ranks by structural impact importance.

(Section 3.3.1, Eq. 5-6)

Conflict detection: pairs of specs where the same parameter is pushed

in opposite directions by different requirements.

Structural impact scoring: weighted PageRank on conflict evidence subgraph.

PR(v_i) = (1-γ) + γ · Σ_{v_j∈N(v_i)} [w_ji · PR(v_j)] / Σ_k w_jk

Imp(v) = PR(v) / max_{u∈V_c} PR(u) (normalized)

γ = 0.85 (standard PageRank damping factor)

"""

def __init__(self, kg: RADKnowledgeGraph, gamma: float = 0.85):

self.kg = kg

self.gamma = gamma

def identify_conflicts(

self, specs: List[ProblemSpec]

) -> List[ConflictPair]:

"""

Detect attribute-level conflicts where the same object's attribute

is pushed in opposite directions by different requirements.

"""

conflicts = []

cid_counter = 0

# Group specs by (object, attribute)

spec_map = defaultdict(list)

for spec in specs:

spec_map[(spec.obj, spec.attribute)].append(spec)

# Predefined known conflicts in knee brace domain

known_conflicts = [

("StrapAssembly", "tension_level",

"Lower strap tension → less pain",

"Lower strap tension → higher relative slippage",

["StrapAssembly", "BuckleModule"], "physical"),

("HingeModule", "ROM_upper_limit",

"Larger ROM → better mobility",

"Larger ROM → lower joint stability",

["HingeModule", "SupportModule"], "technical"),

("MainSupportFrame", "stiffness",

"Higher stiffness → better support",

"Higher stiffness → higher peak contact pressure",

["MainSupportFrame", "CushionPad"], "physical"),

]

for (obj, attr, side_a, side_b, modules, ctype) in known_conflicts:

# Only create conflict if relevant specs exist

relevant = any(s.obj == obj or any(m in s.obj for m in modules)

for s in specs)

if relevant:

impact = self._compute_structural_impact(modules)

conflicts.append(ConflictPair(

cid=f"C{cid_counter}", conflict_type=ctype,

involved_modules=modules, parameter=attr,

side_A=side_a, side_B=side_b,

priority=cid_counter + 1,

structural_impact=impact

))

cid_counter += 1

# Sort by structural impact (highest first — PageRank)

conflicts.sort(key=lambda c: c.structural_impact, reverse=True)

print(f" [CIA] Detected {len(conflicts)} conflict pairs")

for c in conflicts:

print(f" {c.cid}: {c.parameter} (impact={c.structural_impact:.3f}) [{c.conflict_type}]")

return conflicts

def _compute_structural_impact(self, modules: List[str]) -> float:

"""

Weighted PageRank on KG subgraph induced by conflict-relevant nodes.

(Eq. 5-6): PR(v_i) = (1-γ) + γ·Σ[w_ji·PR(v_j)/Σ_k w_jk]

Imp(v) = PR(v) / max PR(u)

"""

# Find relevant nodes in KG

relevant_nodes = [

nid for nid, data in self.kg.nodes.items()

if any(m in data.get('label', '') or m in nid for m in modules)

]

if not relevant_nodes:

return 0.5 # Default importance

adj = self.kg.get_adjacency()

# Uniform initial PageRank

pr = {n: 1.0 / max(len(relevant_nodes), 1) for n in relevant_nodes}

for _ in range(20): # Power iteration

new_pr = {}

for node in relevant_nodes:

neighbors = [n for n in adj.get(node, []) if n in pr]

incoming = sum(pr.get(nb, 0) / max(len(adj.get(nb, [1])), 1)

for nb in neighbors)

new_pr[node] = (1 - self.gamma) + self.gamma * incoming

pr = new_pr

max_pr = max(pr.values()) if pr else 1.0

avg_impact = sum(pr.values()) / max(len(pr), 1) / max(max_pr, 1e-8)

return min(avg_impact, 1.0)

# ─── SECTION 9: Conflict Resolution Agent (CRA) with TRIZ ────────────────────

class ConflictResolutionAgent:

"""

CRA: TRIZ-based conflict resolution → executable improvement actions.

(Section 3.3.2, Eq. 7-9)

Score(φ_i) = α·Sim(φ_i, c) + β·Rel(φ_i)

α=0.7, β=0.3 (determined by sensitivity sweep on development cases)

The 39 TRIZ inventive principles are mapped to domain-specific actions.

Principles serve as heuristic knowledge, NOT direct configuration operations.

They are translated into executable actions considering:

- Object semantics in the conflict

- Product-family knowledge

- Structural constraints

"""

TRIZ_PRINCIPLES = {

"Dynamicity": {

"description": "Make objects/environments/processes adjustable or optimal at each stage",

"domain_actions": [

"Raise buckle tension-adjustment upper bound",

"Increase fine-tuning resolution",

"Optimize near-joint tension distribution",

]

},

"Segmentation": {

"description": "Divide object into independent parts; make sectional",

"domain_actions": [

"Widen strap and increase contact area",

"Zone pressure distribution across pad regions",

]

},

"Cushioning": {

"description": "Compensate for the relatively low reliability of an object",

"domain_actions": [

"Increase interlayer thickness",

"Add compliant interlayer between hard structure and skin",

]

},

"Composite": {

"description": "Transition from homogeneous to composite materials",

"domain_actions": [

"Rigid core + compliant exterior structure",

"Elastic modulus gradient: stiff inside + soft outside",

]

},

"Feedback": {

"description": "Introduce feedback to improve process or action",

"domain_actions": [

"Add over-tightening trigger threshold sensor",

"Provide tension availability feedback indicator",

]

},

}

CONFLICT_TO_PRINCIPLES = {

"tension_level": ["Dynamicity", "Segmentation", "Feedback"],

"ROM_upper_limit": ["Dynamicity", "Feedback"],

"stiffness": ["Composite", "Cushioning", "Segmentation"],

"default": ["Dynamicity", "Cushioning"],

}

def __init__(self, alpha: float = 0.7, beta: float = 0.3):

self.alpha = alpha # Semantic similarity weight

self.beta = beta # Structural relevance weight

def resolve(

self,

conflicts: List[ConflictPair],

kg: RADKnowledgeGraph,

) -> List[TRIZAction]:

"""

Generate TRIZ-based improvement actions for each conflict,

processed in structural impact importance order.

(Section 3.3.2, Eq. 7-9)

"""

all_actions = []

for conflict in conflicts:

print(f" [CRA] Resolving {conflict.cid}: {conflict.parameter}")

# Select candidate TRIZ principles for this conflict type

candidates = self.CONFLICT_TO_PRINCIPLES.get(

conflict.parameter, self.CONFLICT_TO_PRINCIPLES["default"]

)

# Score and rank principles: Score(φ_i) = α·Sim + β·Rel (Eq. 7)

scored = []

for principle_name in candidates:

principle = self.TRIZ_PRINCIPLES[principle_name]

# Sim: cosine similarity (simplified: keyword overlap)

sim = self._semantic_similarity(principle_name, conflict.parameter)

# Rel: structural importance of target objects (Eq. 8)

rel = conflict.structural_impact

score = self.alpha * sim + self.beta * rel # Eq. 7

scored.append((score, principle_name, principle))

scored.sort(reverse=True)

# Generate actions from top-2 principles

for i, (score, pname, principle) in enumerate(scored[:2]):

for action_desc in principle["domain_actions"][:1]:

target_module = conflict.involved_modules[0] if conflict.involved_modules else "Unknown"

action_type = "adjust" if "Adjust" in action_desc or "Increase" in action_desc else "replace"

action = TRIZAction(

cid=conflict.cid,

principle_name=pname,

action_type=action_type,

target_module=target_module,

change_description=action_desc,

expected_effect=f"Resolve {conflict.side_A} vs {conflict.side_B} trade-off",

feasibility=True,

)

all_actions.append(action)

print(f" → {pname}: {action_desc} (score={score:.3f})")

return all_actions

def _semantic_similarity(self, principle: str, conflict_param: str) -> float:

"""Keyword-based similarity (production: text encoder cosine sim)."""

dynamic_keys = ["tension", "ROM", "adjust"]

if principle == "Dynamicity" and any(k in conflict_param for k in dynamic_keys): return 0.90

if principle == "Cushioning" and "stiffness" in conflict_param: return 0.85

if principle == "Segmentation" and "tension" in conflict_param: return 0.80

if principle == "Feedback" and "tension" in conflict_param: return 0.75

return 0.50

# ─── SECTION 10: Scheme Configuration Agent (SCA) ────────────────────────────

class SchemeConfigurationAgent:

"""

SCA: Instantiate improvement actions into a personalized EBOM.

(Section 3.3.3, Eq. 10-11)

Two-stage process:

Stage I: Structural matching and substitution (Eq. 10)

v_match = argmax_{v_j ∈ G_FBS} Sim(v_j, o_i)

Execute action: replacement, adjustment, or reconfiguration

Stage II: Assembly-constraint checking and performance validation

Δf = f(S'') - f(S') (semantic performance gain)

C(S'') = True (all constraints satisfied)

Output: user-level EBOM

EBOMuser = {(M_i, a_i, D_i, ΔExp_i)} (Eq. 11)

"""

# LLKJ-A product family upgrade paths

UPGRADE_MAP = {

"StrapAssembly": {

"replace_standard": EBOMItem(

"Strap Assembly", "ST-03",

{"width_mm": "50", "length_mm": "420±20"},

quantity=4, modification_note="Widened for pressure distribution",

delta_experience="Reduced peak contact pressure"

),

},

"CushionPad": {

"upgrade_thickness": EBOMItem(

"Cushion Pad", "PD-12",

{"thickness_mm": "12"},

quantity=2, modification_note="Increased from 10mm to 12mm",

delta_experience="Improved cushioning during wearing"

),

},

"HingeModule": {

"adjust_rom": EBOMItem(

"Adjustable Hinge", "HG-02",

{"ROM_degrees": "0-120"},

quantity=2, modification_note="ROM extended for flexion assistance",

delta_experience="Better flexion-extension support"

),

},

"LinerModule": {

"upgrade_breathability": EBOMItem(

"Breathable Liner", "LN-BR2",

{"moisture_g_m2_24h": "≥8000"},

quantity=1, modification_note="Higher breathability for skin comfort",

delta_experience="Reduced chafing and skin irritation"

),

},

"BuckleModule": {

"replace_buckle": EBOMItem(

"Anti-overtightening Buckle", "BK-AO1",

{"max_tension_N": "52"},

quantity=2, modification_note="Tension limit reduced from 60N",

delta_experience="Prevents over-tightening during use"

),

},

}

def configure(

self,

actions: List[TRIZAction],

requirements: List[WearingRequirement],

kg: RADKnowledgeGraph,

) -> Tuple[List[EBOMItem], bool]:

"""

Instantiate TRIZ actions into EBOM items with constraint checking.

Returns (ebom_items, all_constraints_satisfied).

"""

ebom_items = []

seen_modules = set()

# Add baseline non-modified components

baseline_items = [

EBOMItem("Main Support Frame", "FR-M",

{"size": "M", "stiffness": "55±5"}, quantity=1),

EBOMItem("Pressure Diffusion Plate", "DF-01",

{"material": "TPU", "thickness_mm": "1.0"}, quantity=2),

]

ebom_items.extend(baseline_items)

# Instantiate each TRIZ action into a component modification

for action in actions:

module = action.target_module

if module in seen_modules:

continue

upgrades = self.UPGRADE_MAP.get(module, {})

if upgrades:

# Select first available upgrade

upgrade_item = list(upgrades.values())[0]

ebom_items.append(upgrade_item)

seen_modules.add(module)

print(f" [SCA] Applied {action.principle_name} → {upgrade_item.component} {upgrade_item.module_id}")

# Assembly constraint check (Stage II)

constraints_ok = self._check_constraints(ebom_items)

return ebom_items, constraints_ok

def _check_constraints(self, ebom: List[EBOMItem]) -> bool:

"""

Validate assembly constraints:

1. Main support frame present (mandatory)

2. At least one strap assembly (mandatory for fixation)

3. Hinge quantity ≥ 2 (bilateral support)

4. Liner present if skin-contact components exist

(Production: KG constraint propagation)

"""

component_ids = {item.module_id for item in ebom}

has_frame = any("FR-M" in item.module_id for item in ebom)

has_strap = any("ST-" in item.module_id for item in ebom)

if not has_frame:

print(" [SCA] CONSTRAINT FAIL: Missing main support frame")

return False

if not has_strap:

print(" [SCA] CONSTRAINT FAIL: Missing strap assembly")

return False

return True

# ─── SECTION 11: EBOM Evaluation Metrics ─────────────────────────────────────

class EBOMEvaluator:

"""

Evaluation metrics for EBOM generation quality.

(Section 4.4 — Ablation study metrics)

5 metrics:

Precision: TP / (TP + FP) — no extra items

Recall: TP / (TP + FN) — no missed items

F1: harmonic mean

Completeness: mandatory components covered / total mandatory

ParamAcc: key parameters within tolerance / total key params

A line item is a TP only if component/model, quantity, AND key parameters

(within tolerance) all match the reference EBOM.

"""

def evaluate(

self,

predicted: List[EBOMItem],

reference: List[EBOMItem],

mandatory_ids: List[str] = None,

) -> Dict[str, float]:

"""

Compute all 5 EBOM evaluation metrics.

"""

mandatory_ids = mandatory_ids or ["FR-M", "HG-02", "ST-03"]

pred_map = {item.module_id: item for item in predicted}

ref_map = {item.module_id: item for item in reference}

tp = fp = fn = 0

param_correct = param_total = 0

for mid, ref_item in ref_map.items():

if mid in pred_map:

pred_item = pred_map[mid]

# Check quantity match

qty_match = (pred_item.quantity == ref_item.quantity)

# Check parameter match (within tolerance — simplified: exact)

param_matches = []

for k, v in ref_item.parameters.items():

pred_v = pred_item.parameters.get(k, "")

param_total += 1

is_match = (pred_v == v or pred_v.strip("≥≤") == v.strip("≥≤"))

param_matches.append(is_match)

param_correct += int(is_match)

all_params_ok = all(param_matches) if param_matches else True

if qty_match and all_params_ok:

tp += 1

else:

fp += 1

else:

fn += 1

# Extra predictions not in reference

for mid in pred_map:

if mid not in ref_map:

fp += 1

precision = tp / max(tp + fp, 1)

recall = tp / max(tp + fn, 1)

f1 = 2 * precision * recall / max(precision + recall, 1e-8)

# Completeness: mandatory component coverage

mandatory_covered = sum(1 for mid in mandatory_ids if mid in pred_map)

completeness = mandatory_covered / max(len(mandatory_ids), 1)

# ParamAcc: key parameter accuracy

param_acc = param_correct / max(param_total, 1)

return {

"precision": precision, "recall": recall, "f1": f1,

"completeness": completeness, "param_acc": param_acc,

}

# ─── SECTION 12: End-to-End Demo (Mr. Xiao Case Study) ───────────────────────

def run_mr_xiao_demo():

"""

Reproduce Mr. Xiao's personalized knee brace configuration.

(Section 4.3 — Personalizing configuration analysis)

Mr. Xiao: post-operative knee rehabilitation patient.

Verbal: "Hurts a lot when bending — almost unbearable. Too tight,

scrapes skin. After walking it loosens and slips down."

sEMG: amplitude below severe threshold (below-expected for stated pain)

Facial: moderate pain expression in most frames

Expected EBOM changes:

- Strap Assembly: ST-03 (wider, adjustable tension)

- Cushion Pad: PD-12 (12mm, up from 10mm)

- Hinge: HG-02 (ROM 0-120°)

- Buckle: BK-AO1 (max tension 52N)

- Liner: LN-BR2 (breathability ≥8000 g/m²·24h)

"""

print("="*65)

print(" Agentic AI RAD Configuration — Mr. Xiao Case Study")

print("="*65)

torch.manual_seed(42)

# ── Initialize system components ─────────────────────────────────────────

fusion_model = MultiFacetedFusionModel(

emb_dim=64, num_classes=4, num_exp_labels=6

)

kg = RADKnowledgeGraph()

kg_reasoner = KGReasoningChain(kg)

eaa = ExperienceAnalysisAgent(fusion_model)

paa = ProblemAnalysisAgent()

cia = ConflictIdentificationAgent(kg)

cra = ConflictResolutionAgent()

sca = SchemeConfigurationAgent()

evaluator = EBOMEvaluator()

# ── Mr. Xiao's wearing experience ────────────────────────────────────────

xiao_experience = WearingExperienceInput(

user_id="xiao_001",

verbal_text=(

"It hurts a lot when bending — almost unbearable. "

"It is pressed too tight against my skin and it rubs a lot. "

"After walking for a while it loosens and slips down, "

"so I have to pull it back up by hand — really annoying."

),

nprs_score=7, # Verbal suggests severe, but sEMG says moderate

action_type="walking",

)

# Simulate backbone feature vectors

# Production: actual GoogLeNet / TimeLLM / Sentence-BERT outputs

# Mr. Xiao: facial → moderate, sEMG → below severe threshold

emg_features = torch.randn(1, 256) * 0.4 # Below severe threshold

face_features = torch.randn(1, 512) * 0.6 # Moderate-range expression

text_features = torch.randn(1, 768) * 1.2 # Strong verbal complaint

print("\n─── STAGE 1: Experience Analysis (EAA) ───")

pain_level, requirements = eaa.analyze(

xiao_experience, emg_features, face_features, text_features

)

print(f" Pain level determined: {pain_level} (verbal said: severe)")

print("\n─── STAGE 2: KG Reasoning Chain ───")

prompt, evidence = kg_reasoner.build_reasoning_prompt(

f"Pain during flexion, skin pressure, brace loosening: {pain_level} pain level"

)

print(f" Retrieved {len(evidence)} KG evidence sentences")

print(f" Sample: {evidence[0] if evidence else 'None'}")

print("\n─── STAGE 3: Problem Analysis (PAA) ───")

specs = paa.analyze(requirements)

print("\n─── STAGE 4: Conflict Identification (CIA) ───")

conflicts = cia.identify_conflicts(specs)

print("\n─── STAGE 5: Conflict Resolution (CRA) ───")

actions = cra.resolve(conflicts, kg)

print("\n─── STAGE 6: Scheme Configuration (SCA) ───")

ebom_items, constraints_ok = sca.configure(actions, requirements, kg)

print(f"\n Assembly constraints satisfied: {constraints_ok}")

print(f" Generated EBOM ({len(ebom_items)} items):")

for item in ebom_items:

params_str = ", ".join(f"{k}={v}" for k, v in item.parameters.items())

print(f" {item.component} [{item.module_id}] × {item.quantity} | {params_str}")

if item.modification_note:

print(f" → {item.modification_note}")

# ── Evaluation against reference EBOM ────────────────────────────────────

print("\n─── EVALUATION vs Expert Reference EBOM ───")

reference_ebom = [

EBOMItem("Main Support Frame", "FR-M", {"size": "M", "stiffness": "55±5"}, 1),

EBOMItem("Adjustable Hinge", "HG-02", {"ROM_degrees": "0-120"}, 2),

EBOMItem("Anti-overtightening Buckle", "BK-AO1", {"max_tension_N": "52"}, 2),

EBOMItem("Strap Assembly", "ST-03", {"width_mm": "50", "length_mm": "420±20"}, 4),

EBOMItem("Cushion Pad", "PD-12", {"thickness_mm": "12"}, 2),

EBOMItem("Breathable Liner", "LN-BR2", {"moisture_g_m2_24h": "≥8000"}, 1),

EBOMItem("Pressure Diffusion Plate", "DF-01", {"material": "TPU", "thickness_mm": "1.0"}, 2),

]

metrics = evaluator.evaluate(ebom_items, reference_ebom)

print(f" Precision: {metrics['precision']:.3f}")

print(f" Recall: {metrics['recall']:.3f}")

print(f" F1: {metrics['f1']:.3f}")

print(f" Completeness: {metrics['completeness']:.3f}")

print(f" ParamAcc: {metrics['param_acc']:.3f}")

print("\n" + "="*65)

print("✓ End-to-end pipeline verified. System ready for integration.")

print("="*65)

print("""

Production deployment checklist:

1. Replace stub encoders with real backbone models:

- sEMG: TimeLLM (https://github.com/KimMeen/Time-LLM)

- Facial: GoogLeNet pretrained on AffectNet or DISFA

- Text: Sentence-BERT (https://sbert.net)

2. Set up Neo4j graph database with LLKJ-A KG:

pip install neo4j

# Load FBS-ENV-ProductFamily triples from ontology construction

3. Connect LLM backend (paper used DeepSeek Reasoner V4):

pip install openai # or deepseek SDK

# Configure with temperature=0.2, max_tokens=4096

4. Build training dataset (468 samples in paper):

- 40 volunteers × 4 actions × 4 configurations × sEMG + facial + text

- NPRS scoring immediately after each trial

- Baseline measurement per subject for cross-subject alignment

5. Cross-subject alignment before deployment:

- Collect baseline sEMG (rest) + facial (neutral) from new patient

- Compute α = r_train / (r_base + ε), β = b_train / (b_base + ε)

- Apply linear scaling to task-stage signals before inference

""")

if __name__ == "__main__":

run_mr_xiao_demo()

Read the Full Paper

The complete study — including all agent prompt templates, full ablation tables across 49 annotation configurations, cross-LLM robustness results, the complete FBS–ENV–product-family ontology, and the Appendix A–C algorithms and prompts — is published in Advanced Engineering Informatics.

Zhuang, W., Lv, X., Wu, X., Chen, C., Xiao, W., Wang, J., Jiang, C., Yao, J., Bao, J., & Li, X. (2026). An Agentic AI-Empowered product family configuration approach for personalizing rehabilitation assistive devices with Multi-Faceted wearing experience. Advanced Engineering Informatics, 74, 104695. https://doi.org/10.1016/j.aei.2026.104695

This article is an independent editorial analysis of peer-reviewed research. The Python implementation is an educational adaptation of the published methodology. For the exact LLKJ-A product family specifications, the ConSE-compatible ontology definitions, and the complete agent prompt templates in Appendix C, refer to the original paper. Research supported by NSFC grant 52405256 and Donghua University 2025 Program for Enhancing Research Capacity.