FedDRLPD: Deep Reinforcement Learning Defense Against Poisoning Attacks in Federated Learning

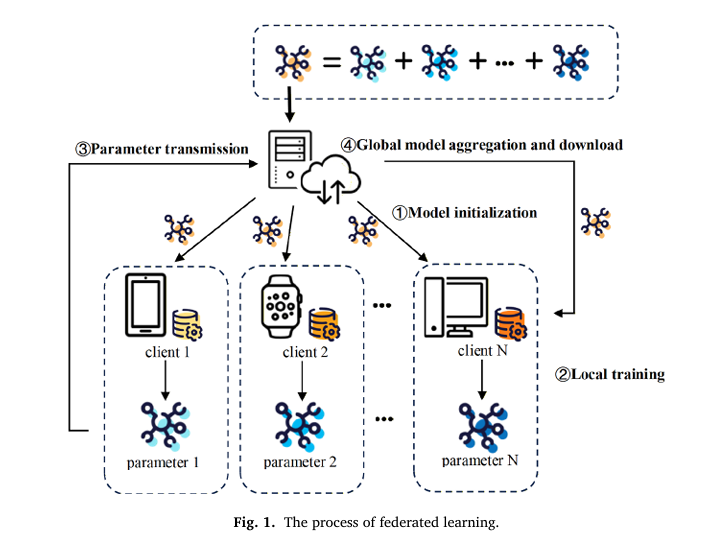

Federated Learning Security · Knowledge-Based Systems 2026 · 16 min read

FedDRLPD: Teaching AI to Defend Itself Against Poisoning Attacks Through Deep Reinforcement Learning

A novel defense framework that integrates Deep Q-Network algorithms with Mahalanobis […]