Perplexity vs ChatGPT for Academic Research: A Real Comparison

Not a spec sheet. We gave both tools the same real research tasks — finding sources, reviewing literature, drafting arguments, checking citations — and tracked where each one helped, and where it fell flat.

The question comes up constantly in researcher communities, postgrad Discord servers, and academic Twitter threads: should I use Perplexity or ChatGPT for my research? The honest answer is that most people asking this question are framing it slightly wrong — but we’ll get to that. First, let’s talk about what actually happens when you use both tools on the same task.

Here’s a scenario that will feel familiar. You’re three weeks into a research project. You need a strong literature review section, a clear map of where your argument sits within existing scholarship, and a set of citations that hold up when your supervisor checks them. You open an AI tool to speed up the process. The question is: which one, and at which point in your workflow?

Perplexity AI and ChatGPT Plus are both genuinely capable tools in 2026, but they approach research assistance from completely different angles. Perplexity is a search-grounded research engine — it finds real sources, surfaces them with links, and synthesises from what it finds. ChatGPT Plus is a language model that has internalised an enormous amount of academic knowledge and can reason, argue, and write at a graduate level. Conflating them — or choosing one to do everything — is where most researchers run into problems.

What follows is a direct, category-by-category comparison built on actual research tasks across five academic disciplines: public health, computer science, economics, education, and environmental science. No artificial prompts designed to flatter either tool. Just the tasks that actually matter to researchers, and honest reporting on what happened.

The Core Difference That Changes Everything

Before the round-by-round breakdown, there’s one architectural fact worth understanding — because it explains almost every difference between these two tools for academic work.

ChatGPT Plus runs on GPT-5, a model trained on a massive corpus of text that includes a huge proportion of publicly available academic writing: papers, textbooks, lecture notes, preprint repositories, and scholarly commentary. When you ask it about a research topic, it’s drawing on compressed patterns from that training data. It doesn’t retrieve — it reconstructs. The upside: it writes fluently, reasons well, and can synthesise arguments at a level that genuinely feels like graduate-quality scholarship. The downside: the sources it cites are reconstructed from memory, not retrieved from databases, which means they can be subtly or dramatically wrong.

Perplexity runs on a search-grounded architecture. Before answering, it queries live sources: academic databases, preprint servers, Google Scholar previews, journal abstracts, and the open web. You can see those sources, follow the links, and verify the claims. The upside: its citations are grounded in sources you can open and check, so you can trust them more. The downside: it can only synthesise from what it can reach, and its analytical depth rarely matches the structured argumentation ChatGPT produces effortlessly.

Perplexity finds. ChatGPT writes. This isn’t a simplification — it’s the central organising principle of how to use these tools. The research workflow that treats them as competing alternatives produces mediocre results from both. The workflow that treats them as complementary stages produces something genuinely useful.

“A hallucinated citation looks exactly like a real one until you try to find the paper.”

— A warning that applies to every AI-assisted research workflow in 2026

Round-by-Round: Eight Tasks That Matter to Researchers

Finding Real, Verifiable Sources on a Research Topic

We asked both tools to find 8 recent peer-reviewed papers on the same topic — the mental health impacts of remote work — with full citations and links where possible.

Mapping Research Gaps in an Existing Field

We asked both tools to identify the main unanswered questions and methodological weaknesses in the literature on AI use in secondary education.

Writing a Thematic Literature Review Section

We provided the same list of 10 verified sources to both tools and asked each to write a 500-word thematic review section synthesising them.

Checking Whether a Specific Paper Exists and Finding Its DOI

We gave both tools a paper title and asked them to confirm whether it exists, who the authors are, and what the DOI is. We ran this test with five papers — three real, two invented.

Explaining a Complex Theoretical Framework

We asked both to explain Bourdieu’s concept of cultural capital and its application in educational sociology — at a level suitable for a postgraduate reader encountering it for the first time.

Finding What Has Been Published in the Last 6 Months on a Topic

We asked both to surface recent developments — specifically papers published between September 2025 and March 2026 — on the topic of large language model hallucination in medical contexts.

Drafting a Research Argument from a Thesis Statement

We provided a thesis statement — “Remote work disproportionately disadvantages early-career employees in knowledge industries” — and asked both tools to draft a two-paragraph academic argument supporting it.

Methodological Advice for a Specific Research Design Problem

We described a research design challenge — “I’m doing a mixed-methods study on student wellbeing, but my quantitative and qualitative data are reaching different conclusions. How should I handle this?” — and asked both tools for methodological guidance.

Full Scorecard: How They Stack Up Across Eight Dimensions

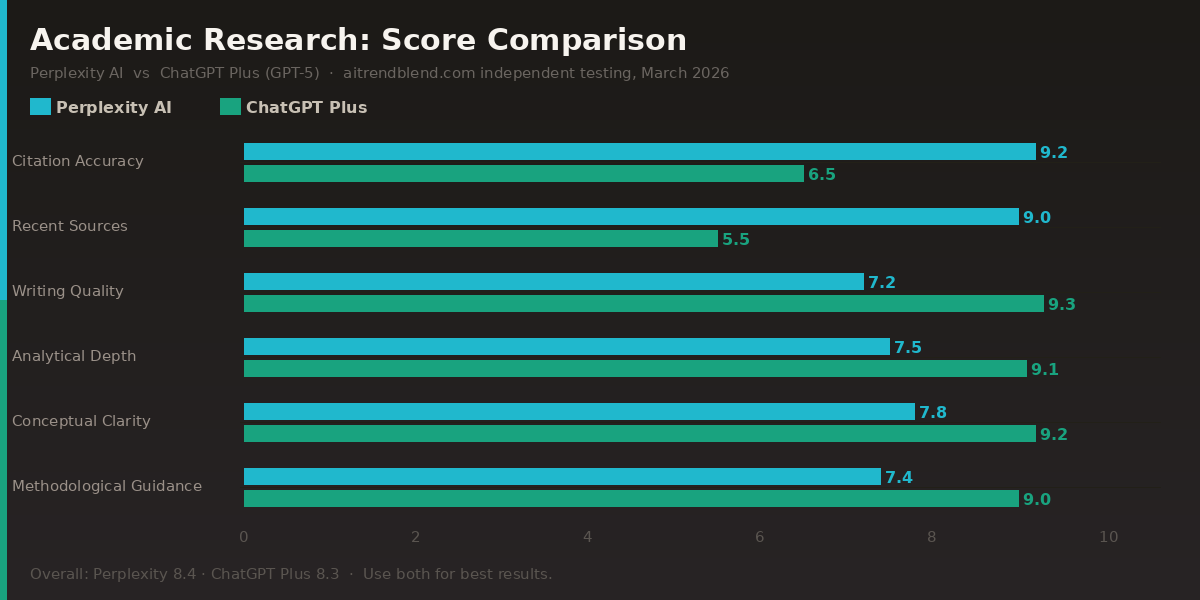

| Dimension | Perplexity AI | ChatGPT Plus (GPT-5) | Winner |
|---|---|---|---|
| Citation Accuracy | 9.2 / 10 | 6.5 / 10 | Perplexity |
| Real-time / Recent Source Discovery | 9.0 / 10 | 5.5 / 10 | Perplexity |
| Academic Writing Quality | 7.2 / 10 | 9.3 / 10 | ChatGPT Plus |
| Analytical / Argumentative Depth | 7.5 / 10 | 9.1 / 10 | ChatGPT Plus |
| Conceptual Explanation Clarity | 7.8 / 10 | 9.2 / 10 | ChatGPT Plus |
| Methodological Guidance | 7.4 / 10 | 9.0 / 10 | ChatGPT Plus |
| Ease of Use for Non-technical Researchers | 8.5 / 10 | 9.0 / 10 | ChatGPT Plus |
| Value for Money (Pro tier) | 8.8 / 10 | 8.0 / 10 | Perplexity |
| Overall Academic Research Score | 8.4 / 10 | 8.3 / 10 | Use Both |

The overall scores are nearly identical — which is the point. These tools are not substitutes for each other. They’re doing different things exceptionally well, and the overall number is almost meaningless without understanding which dimension matters most for your current task.
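To make the point about task-dependent weighting concrete, here is a minimal sketch that re-scores the two tools using only the dimensions you care about. The dimension scores are copied from the scorecard; the function name, the dictionary keys, and both weightings are hypothetical, chosen purely for illustration. This is not the method used to produce the overall row.

```python
# Dimension scores copied from the scorecard: (Perplexity, ChatGPT Plus).
SCORES = {
    "citation_accuracy": (9.2, 6.5),
    "recent_source_discovery": (9.0, 5.5),
    "writing_quality": (7.2, 9.3),
    "analytical_depth": (7.5, 9.1),
    "conceptual_clarity": (7.8, 9.2),
    "methodological_guidance": (7.4, 9.0),
    "ease_of_use": (8.5, 9.0),
    "value_for_money": (8.8, 8.0),
}


def weighted_scores(weights):
    """Return (perplexity, chatgpt) weighted means, rounded to 2 decimals.

    Dimensions missing from `weights` are ignored (weight 0).
    """
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    perplexity = sum(SCORES[dim][0] * w for dim, w in weights.items()) / total
    chatgpt = sum(SCORES[dim][1] * w for dim, w in weights.items()) / total
    return round(perplexity, 2), round(chatgpt, 2)


# Hypothetical weightings for two different research tasks.
source_hunting = {"citation_accuracy": 3, "recent_source_discovery": 2, "writing_quality": 1}
drafting = {"writing_quality": 3, "analytical_depth": 2, "citation_accuracy": 1}

print("source hunting:", weighted_scores(source_hunting))
print("drafting:", weighted_scores(drafting))
```

With a source-hunting weighting Perplexity comes out well ahead, and with a drafting weighting the order flips. A single overall number hides that reversal.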

Perplexity AI — Detailed Profile

✅ Strengths

- Real-time, verifiable source retrieval

- Dramatically lower hallucination rate on citations

- Live access to recent preprints and journal updates

- Academic search focus mode reduces noise significantly

- Transparent sourcing — you see what it’s drawing from

- More affordable Pro tier for heavy usage

❌ Weaknesses

- Writing quality is functional, not polished

- Limited access to content behind many academic paywalls

- Analytical depth falls short for complex theory work

- Weaker in niche humanities subfields

- Synthesis quality drops on highly specialised topics

ChatGPT Plus — Detailed Profile

✅ Strengths

- Graduate-quality academic writing and argument structure

- Exceptional at conceptual explanation and theory synthesis

- Nuanced methodological guidance across disciplines

- Handles complex multi-part research questions well

- Consistent tone and voice across long documents

- Great for translating ideas into polished prose

❌ Weaknesses

- Citation hallucination is a real and serious risk

- Without web browsing, cannot access sources published after its training cutoff

- Overconfidence — it sounds right even when it’s wrong

- No direct source links you can verify immediately

- Requires the user to run an explicit verification workflow

Stop Choosing — Here’s How to Use Both

The most practical output of this comparison isn’t “Perplexity beats ChatGPT” or vice versa. It’s a workflow. These tools are strongest at different stages of academic research, and building a process that uses each at the right point turns a good research session into a genuinely efficient one.

The Two-Tool Academic Research Workflow

The critical handoff point is between stages 2 and 3. When you move from Perplexity to ChatGPT Plus, pass it only the verified sources you have personally checked. This removes most of the hallucination risk at the synthesis stage: ChatGPT is no longer recalling references from memory, it is synthesising from a list you have confirmed is real. That boundary is the single most important habit in this workflow.
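The handoff can be sketched in a few lines of code. The example below is a hedged illustration, not a prescribed implementation: the `Source` class, the `build_drafting_prompt` function, and the prompt wording are all hypothetical. The only idea taken from the workflow is that unverified sources never reach the drafting prompt.

```python
from dataclasses import dataclass


@dataclass
class Source:
    citation: str           # full reference, as you would cite it
    verified: bool = False  # set True only after you have located the paper yourself


def build_drafting_prompt(task: str, sources: list[Source]) -> str:
    """Build a drafting prompt that lists only the verified sources."""
    checked = [s for s in sources if s.verified]
    if not checked:
        raise ValueError("no verified sources to hand off")
    numbered = "\n".join(
        f"[{i}] {s.citation}" for i, s in enumerate(checked, start=1)
    )
    return (
        f"{task}\n\n"
        "Use only the sources listed below and cite them by number. "
        "Do not add any reference that is not on this list.\n\n"
        f"{numbered}"
    )


# Placeholder entries standing in for your own reading list.
reading_list = [
    Source("Placeholder reference A", verified=True),
    Source("Placeholder reference B"),  # not yet checked, so it is left out
    Source("Placeholder reference C", verified=True),
]

prompt = build_drafting_prompt(
    "Write a 500-word thematic literature review section.", reading_list
)
print(prompt)
```

Keeping the `verified` flag off by default means a source only enters the prompt after a deliberate manual check.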

ChatGPT Plus writes better than Perplexity. Perplexity cites more reliably than ChatGPT. A research workflow that uses each for what it does best produces results that neither can match alone.

The Limits Both Tools Share

The thing that neither tool handles well — and that researchers get burned by most often — is the intersection of very specialised subject matter and very recent research. If you’re working at the frontier of a niche subfield, both tools are drawing from a shallow pool. Perplexity can only surface what’s indexed and open-access. ChatGPT’s training data thins out at the edges of specialised knowledge. Neither one is a substitute for direct database access through your institution’s library subscriptions, and neither should be your only source of discovery in fields where the important work appears in conference proceedings or behind paywalls.

Both tools are also worse at cross-disciplinary synthesis than they appear. Ask either one to bring together insights from, say, behavioural economics and cognitive neuroscience in the context of a novel argument you’re developing, and the output tends toward listing what each discipline says side by side rather than genuinely integrating them. That kind of synthesis — where you’re asking for something that hasn’t been written yet — is still mostly a human task.

And one final reminder that applies more to ChatGPT than Perplexity but deserves emphasis regardless: never submit a citation you haven’t verified independently. Not because these tools are careless — they’re actually quite good — but because the cost of a single wrong reference in academic work is disproportionate to the time saved by skipping verification. The workflow above builds verification in from the start rather than treating it as optional.
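One small, mechanical part of verification can be scripted: checking that a DOI is well formed and turning it into a resolver link you can open. The sketch below assumes the pattern Crossref recommends for matching modern DOIs and the public `https://doi.org/` resolver. The function names are hypothetical. A DOI that passes this check is only syntactically plausible; you still have to open the link and confirm that the title and authors match.

```python
import re
from typing import Optional
from urllib.parse import quote

# Pattern recommended by Crossref for matching modern DOIs (case-insensitive).
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/[-._;()/:A-Z0-9]+$", re.IGNORECASE)

# Common ways a DOI is written in reference lists.
PREFIXES = (
    "https://doi.org/",
    "http://doi.org/",
    "https://dx.doi.org/",
    "http://dx.doi.org/",
    "doi:",
)


def normalise_doi(raw: str) -> str:
    """Strip whitespace and any leading resolver URL or 'doi:' label."""
    doi = raw.strip()
    lowered = doi.lower()
    for prefix in PREFIXES:
        if lowered.startswith(prefix):
            doi = doi[len(prefix):]
            break
    return doi.strip()


def doi_lookup_url(raw: str) -> Optional[str]:
    """Return a doi.org link for a well-formed DOI, or None if it looks malformed."""
    doi = normalise_doi(raw)
    if not DOI_PATTERN.match(doi):
        return None
    # Percent-encode unusual characters but keep the prefix/suffix slash.
    return "https://doi.org/" + quote(doi, safe="/")


# 10.1000/182 is the DOI of the DOI Handbook, used here as a known-good example.
print(doi_lookup_url("doi:10.1000/182"))
print(doi_lookup_url("not a doi"))
```

A `None` result is a cheap early warning that a reference was garbled or invented. A non-`None` result is only the start of the check, not the end of it.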

The Verdict, Honestly

If you’re only going to use one tool, and your primary concern is not fabricating sources, use Perplexity. It keeps you honest. You’ll need to do the writing yourself, or with another tool’s help, but you’re far less likely to submit a fake DOI by accident.

If you’re only going to use one tool, and your primary concern is writing quality and analytical depth, use ChatGPT Plus. Accept that you’ll need to verify every citation through a database before it goes anywhere near a submission, and build that check into your process from day one rather than bolting it on as an afterthought.

The better answer, though, is to stop treating this as an either/or choice. The two tools cost roughly the same, they complement each other almost perfectly, and using them together takes about the same amount of time as using either one badly. The research workflow where Perplexity does the finding and ChatGPT does the writing is not complicated — it just requires understanding what each tool is actually good for, which is what this comparison was designed to give you.

Six months from now, both tools will have iterated again. Perplexity is actively expanding its academic database integrations. ChatGPT’s web browsing capabilities continue to improve. The gap on citation accuracy may narrow. The gap on writing quality may narrow too. But the underlying principle — use search-grounded tools for source verification and language models for synthesis and prose — is going to hold for a while yet.

Ready to Try the Two-Tool Workflow?

Open Perplexity Pro, set Academic focus, run your field-mapping prompt — then bring your verified source list to ChatGPT Plus to draft.

All comparisons were conducted in March 2026 using Perplexity Pro (Academic search focus) and ChatGPT Plus (GPT-5). Tasks were run across five academic disciplines: public health, computer science, economics, education, and environmental science. Citation accuracy was assessed by manually checking every source returned against Google Scholar, PubMed, and institutional database access. Scores reflect testing at a specific point in time — both tools update frequently.

This article is independent editorial content by aitrendblend.com. It is not sponsored by or affiliated with Perplexity AI or OpenAI. All evaluations reflect direct independent testing and are editorial judgments, not official benchmarks.