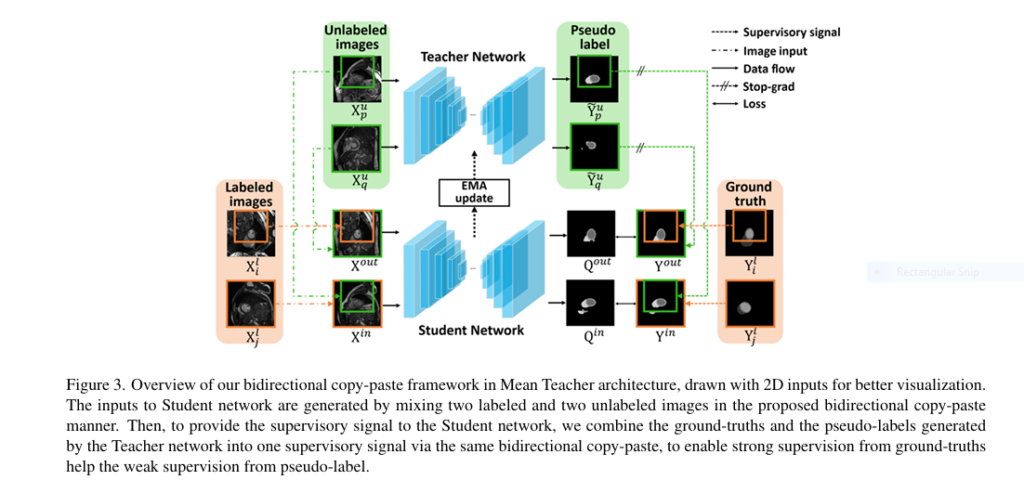

Bidirectional Copy-Paste Revolutionizes Semi-Supervised Medical Image Segmentation (21% Dice Improvement Achieved, but Challenges Remain)

Introduction: A Breakthrough in Medical Imaging with BCP

In the ever-evolving field of medical imaging, precision and efficiency are paramount. The ability to accurately segment anatomical structures from CT or MRI scans is crucial for diagnosis, treatment planning, and research. However, the process of manually labeling these images is both time-consuming and expensive. Enter semi-supervised […]