FCUCR: The Recommender System That Learns Who You’re Becoming — Without Ever Seeing Your Data

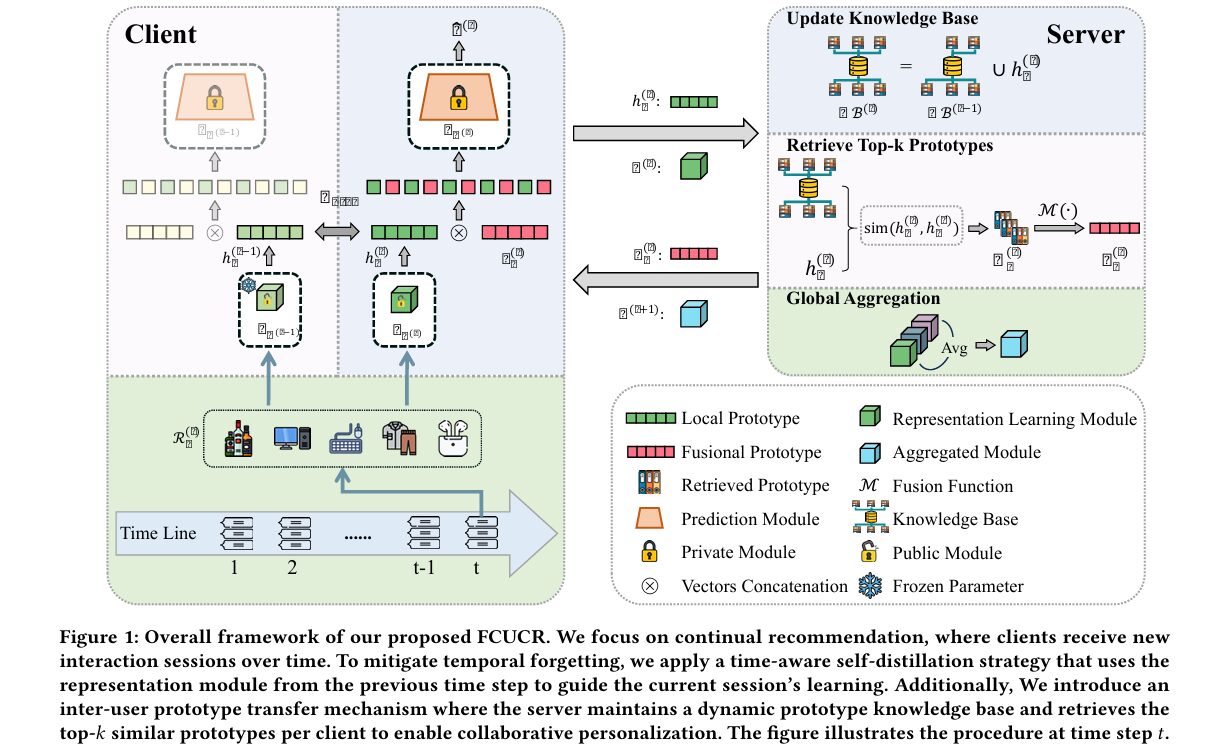

Researchers from Jilin University and the University of Technology Sydney built a federated continual recommendation framework that solves two problems nobody had tackled together: forgetting who you used to be, and not knowing enough about people like you — all without your interaction history ever leaving your device.

A good recommendation system should remember that you spent three months obsessed with running gear before pivoting hard into jazz records. It should know you from last year, not just last week. And it should do all this while respecting the fact that your listening history is yours — not a training asset for a data centre somewhere. Most recommendation systems today pick two of those three. FCUCR picks all three, and then proves it on four different real-world datasets.

Two Problems That Make Each Other Worse

Federated recommendation is an idea that sounds straightforward: instead of sending your data to a central server, each user trains a local model on their own device, and only model parameters get shared. Your raw interactions stay private. This works well when user preferences are static. In the real world, they are not.

User interests shift continuously. The person who clicked on trail running shoes in January may be searching for recovery nutrition in March and compression socks in June. A recommender that only sees the most recent session will forget the earlier context that makes the later searches meaningful. In federated settings, this forgetting problem is structurally worse than in centralised settings, because each user’s device has limited storage — you cannot keep full interaction histories locally, so the model must be updated incrementally on new sessions, which progressively overwrites what it learned before. This is called catastrophic forgetting, and it is one of the oldest unsolved problems in machine learning.

At the same time, federated recommendation has a collaboration problem. The whole point of recommendation is that similar users have similar tastes — collaborative filtering works because your neighbours teach you something about what you might like next. But in federated settings, clients cannot share raw data. The standard fix is to aggregate model parameters across clients via federated averaging, but direct parameter aggregation is dominated by the most data-rich clients and fails to capture the fine-grained semantic relationships between individual users’ behavioural patterns.

These two challenges are not independent. When temporal forgetting is bad, user representations become stale and unreliable — which means the collaborative signals they would contribute to other clients are also degraded. And when collaboration is weak, each client is trying to learn from limited local data without the benefit of knowing that hundreds of similar users have already seen and solved the same cold-start problem. They make each other worse.

FCUCR is the first federated recommendation framework to simultaneously address temporal forgetting (catastrophic overwriting of historical preferences during incremental updates) and collaborative personalization failure (inability to leverage cross-user behavioural patterns without exposing raw data). The two proposed solutions — time-aware self-distillation and inter-user prototype transfer — address these independently yet complement each other.

The Architecture: What Gets Shared and What Stays Private

FCUCR’s design starts with a critical architectural decision about what should be shared versus kept private. The recommendation model is split into two components: a representation learning module f_φ (implemented as a Transformer encoder) that maps item sequences into latent semantic representations, and a prediction module p_ψ (implemented as an MLP) that converts those representations into actual recommendations.

The key insight is that these two components capture fundamentally different things. The representation module captures how items relate to each other — the semantic structure of the catalogue. This is largely domain-level knowledge that generalises across users: an item encoder that understands “running shoes” relates to “trail running” and “marathon training” is useful to most users in that space. The prediction module, by contrast, captures each user’s personal decision logic — how strongly a given representation pattern maps to their individual click probability. This is highly personal and should not be shared.

FCUCR therefore shares only φ across clients via federated averaging, while each client keeps its own private ψ. This is a more nuanced split than previous federated recommendation approaches, which either share everything (and wash out personalization) or share nothing (and lose collaborative benefits entirely).

FCUCR FRAMEWORK — ONE COMMUNICATION ROUND t

══════════════════════════════════════════════════════════

Each Client u:

┌──────────────────────────────────────────────────┐

│ Local interaction history: R_u^(t) │

│ │

│ REPRESENTATION MODULE f_φ^(t) ← shared φ^(t) │

│ (Transformer encoder — PUBLIC, shared globally) │

│ │ │

│ ▼ │

│ h_u^(t) = f_φ(R_u^(t)) ← local prototype │

│ │ │

│ ┌───────▼────────────────────┐ │

│ │ Time-Aware Self-Distill │ ← L_dist │

│ │ MSE(f_φ^(t)(R), f_φ^(t-1)(R)) ← Eq. 3 │

│ └───────┬────────────────────┘ │

│ │ │

│ PREDICTION MODULE p_ψ_u ← PRIVATE per user │

│ │ │

│ ŷ^(t) = p_ψ( h_u^(t) ∥ ρ_u^(t) ) ← Eq. 7 │

│ ▲ │

│ fusional prototype │

└────────────────────────────┼─────────────────────┘

│

Client uploads: h_u^(t), φ_u^(t)

│

┌────────────────────────────▼─────────────────────┐

│ SERVER │

│ │

│ Knowledge Base update: │

│ KB^(t) = KB^(t-1) ∪ { h_u^(t) } for all u │

│ │

│ Top-k prototype retrieval (Eq. 5): │

│ H_u^(t) = Top-k{ sim(h_u, h_v) | v ≠ u } │

│ │

│ Prototype fusion (Eq. 6): │

│ ρ_u^(t) = M(H_u^(t)) [avg or dim-reduction] │

│ │

│ Global aggregation (FedAvg): │

│ φ^(t+1) = Σ α_u · φ_u^(t) │

└──────────────────────────────────────────────────┘

Time-Aware Self-Distillation: Teaching the Model to Remember Itself

The temporal forgetting problem is addressed by a deceptively simple but effective idea: at each time step, the representation module is regularised against its own previous state. When the model processes new interaction data R_u^(t), it is simultaneously penalised for drifting too far from what the previous version of the same module would have produced for that same data.

There is something philosophically satisfying about this approach. Rather than asking the model to store old data (impractical on resource-limited devices) or replay past interactions (which requires a buffer), it asks the model to consult its own previous state as a teacher. The intuition is that users maintain relatively stable semantic interpretations of item categories even as their specific preferences evolve. Someone who used to love science fiction still understands what science fiction is, even if they are currently reading biographies. The representation module encodes that semantic understanding, and self-distillation preserves it.

This temporal regularisation is combined with the standard recommendation loss through a coefficient λ:

The ablation results confirm the mechanism’s effectiveness: removing self-distillation (w/o TASD) drops HR@10 from 0.2882 to 0.2731 on XING and from 0.3602 to 0.3360 on RetailRocket. The visualisation in Figure 2a of the paper tells the story even more clearly: as training rounds accumulate and new sessions arrive, baseline methods steadily lose performance on early sessions they were previously handling well. FCUCR maintains consistent accuracy on those early sessions throughout training.

Inter-User Prototype Transfer: Borrowing Intent Patterns Without Sharing Data

The collaborative personalization problem requires a different kind of solution. The server maintains a dynamic knowledge base of user prototypes — the latent representation vectors h_u^(t) = f_φ(R_u^(t)) that each client produces from its local data. These prototypes are compact embeddings, not raw interaction data, so sharing them respects user privacy while still conveying meaningful information about behavioural intent.

At each communication round, the server retrieves the top-k prototypes from the knowledge base that are most semantically similar to each client’s current prototype:

These retrieved prototypes are fused into a single consolidated prototype ρ_u^(t) = M(H_u^(t)), where M is a fusion function (typically averaging or a lightweight dimensionality reduction). The client then concatenates this fused prototype with its own local representation before passing through the private prediction module:

The concatenation is the critical design choice here. The local prototype h_u^(t) carries each user’s specific intent pattern at this time step. The fused prototype ρ_u^(t) carries the aggregate intent patterns of semantically similar users. By concatenating rather than replacing, the prediction module receives both signals simultaneously and can learn how to weight them in its own personalised decision logic. The private ψ_u learns what to make of both its own context and the borrowed context — a genuinely personalised collaborative signal.

“Removing either the time-aware self-distillation strategy or the inter-user prototype transfer mechanism leads to noticeable performance degradation… This highlights the complementary roles of the two components in addressing continual recommendations under federated settings.” — Zhang, Xue, Long, Zhang & Yang, WWW ’26

Results: Consistent Wins Across Four Benchmarks

FCUCR is evaluated as a wrapper applied to three sequential recommendation backbones (GRU4Rec, SASRec, TiSASRec) across four datasets: XING (job recommendations), RetailRocket (e-commerce browsing), LastFM (music listening), and Tmall (shopping). The comparison includes three categories of baselines: federated recommendation methods (PFedRec, FedRAP, GPFedRec), session-based methods adapted to federated settings (DMI-GNN, HearInt, MiaSRec), and sequential methods adapted to federated settings.

| Model | XING HR@10 | RetailRocket HR@10 | LastFM HR@10 | Tmall HR@10 |

|---|---|---|---|---|

| FedRAP (best federated baseline) | 0.2013 | 0.3183 | 0.2191 | 0.2411 |

| Fed-DMI-GNN (best session-based) | 0.2561 | 0.2876 | 0.3216 | 0.2068 |

| Fed-SASRec (backbone) | 0.1800 | 0.2574 | 0.3271 | 0.2898 |

| Fed-SASRec + FCUCR | 0.2882 | 0.3602 | 0.3426 | 0.3074 |

| Fed-TiSASRec (backbone) | 0.2375 | 0.2608 | 0.3359 | 0.2718 |

| Fed-TiSASRec + FCUCR | 0.2983 | 0.3750 | 0.3553 | 0.2967 |

Table 1 (abridged): FCUCR consistently improves all three backbone models across all four datasets. All improvements are statistically significant (two-sided t-test, p < 0.05). Fed-TiSASRec + FCUCR achieves the highest overall HR@10 on XING (0.2983) and RetailRocket (0.3750).

Two things stand out beyond the headline numbers. First, the improvement is consistent — FCUCR never hurts a backbone, and the gains appear on every dataset without exception. This model-agnostic robustness matters because it means the framework can be adopted without architectural surgery on the underlying recommendation model.

Second, the gain over standard federated baselines like FedRAP (+0.069 HR@10 on XING with SASRec backbone) is large enough to be practically meaningful. In recommendation systems where 1–2% improvement is considered significant, a 6–8% lift is substantial — and the improvement comes from adding two lightweight mechanisms rather than switching to a fundamentally different architecture.

The Ablation: Both Pieces Matter, but Prototype Transfer Matters More

| Variant | XING HR@10 | RetailRocket HR@10 | LastFM HR@10 | Tmall HR@10 |

|---|---|---|---|---|

| Full FCUCR | 0.2882 | 0.3602 | 0.3426 | 0.3074 |

| w/o Time-Aware Self-Distillation | 0.2731 | 0.3360 | 0.3309 | 0.2926 |

| w/o Inter-User Prototype Transfer | 0.2556 | 0.3324 | 0.3287 | 0.2520 |

Table 2: Ablation results. Removing prototype transfer (w/o IUPR) consistently causes larger drops than removing self-distillation (w/o TASD), especially on Tmall (−0.0554 HR@10 vs −0.0148). Both components contribute meaningfully to the final result.

If you are building a federated recommendation system and can only add one component, the prototype transfer mechanism delivers the larger lift. If you are dealing with rapidly evolving user preferences and long interaction histories, the self-distillation mechanism’s contribution is more pronounced. In production, the knowledge base size can be constrained to the most recent r rounds (sliding window) without significant performance loss, substantially reducing server memory costs.

Complete End-to-End FCUCR Implementation (PyTorch)

The implementation below covers every component of the FCUCR framework described in the paper: the Transformer-based sequential recommendation backbone, the time-aware self-distillation loss (Eq. 3), the dynamic knowledge base with sliding-window pruning, the top-k prototype retrieval with cosine similarity (Eq. 5), prototype fusion (Eq. 6), the prototype-enhanced prediction (Eq. 7), the federated averaging aggregation, local differential privacy integration, and a complete federated training loop (Algorithm 1). Dataset helpers for XING, RetailRocket, LastFM, and Tmall, evaluation metrics (HR@n, NDCG@n), and a smoke test are all included.

# ==============================================================================

# FCUCR: Federated Continual Framework for User-Centric Recommendation

# Paper: WWW '26 | arXiv:2603.17315

# Authors: Chunxu Zhang, Zhiheng Xue, Guodong Long, Weipeng Zhang, Bo Yang

# Code: https://github.com/Poizoner/code4FCUCR_www2026

# ==============================================================================

# Sections:

# 1. Imports & Configuration

# 2. Sequential Recommendation Backbone (SASRec-style Transformer)

# 3. Time-Aware Self-Distillation (L_dist)

# 4. Dynamic Knowledge Base with Sliding-Window Pruning

# 5. Inter-User Prototype Retrieval & Fusion

# 6. Prototype-Enhanced Prediction

# 7. Client Local Training (ClientUpdate)

# 8. Server Aggregation (FedAvg + Prototype Exchange)

# 9. Privacy: Local Differential Privacy

# 10. Evaluation Metrics (HR@n, NDCG@n)

# 11. Dataset Helpers

# 12. Federated Training Loop (Algorithm 1)

# 13. Smoke Test

# ==============================================================================

from __future__ import annotations

import math

import random

import warnings

from collections import deque

from typing import Dict, List, Optional, Tuple

import torch

import torch.nn as nn

import torch.nn.functional as F

from torch import Tensor

from torch.utils.data import DataLoader, Dataset

warnings.filterwarnings("ignore")

# ─── SECTION 1: Configuration ─────────────────────────────────────────────────

class FCUCRConfig:

"""

Configuration for the FCUCR framework.

Attributes

----------

n_items : total number of items in the catalogue

embed_dim : item embedding dimension (paper uses 50)

n_heads : Transformer attention heads

n_layers : Transformer encoder layers

max_seq_len : maximum interaction sequence length

lambda_dist : coefficient balancing distillation vs recommendation loss (paper: 10)

top_k : number of prototypes to retrieve per client (paper: 20–200)

kb_window : sliding window size for knowledge base (None = keep all)

n_clients : number of federated clients (users)

n_rounds : total communication rounds

local_epochs : local training epochs per round

lr : Adam learning rate (paper: 0.1)

dropout : dropout rate

ldp_std : Laplacian noise std for local differential privacy (0 = off)

"""

n_items: int = 1000

embed_dim: int = 50

n_heads: int = 2

n_layers: int = 2

max_seq_len: int = 50

lambda_dist: float = 10.0

top_k: int = 20

kb_window: Optional[int] = None # None = unlimited

n_clients: int = 10

n_rounds: int = 5

local_epochs: int = 4

lr: float = 0.1

dropout: float = 0.1

ldp_std: float = 0.0

def __init__(self, **kwargs):

for k, v in kwargs.items():

setattr(self, k, v)

# ─── SECTION 2: Sequential Recommendation Backbone ────────────────────────────

class RepresentationModule(nn.Module):

"""

Representation Learning Module f_φ (Section 4.1).

Implements a SASRec-style Transformer encoder that processes

item interaction sequences and produces a latent prototype h_u.

This is the PUBLIC module — parameters φ are shared across clients

via federated averaging.

Architecture:

Item Embedding → Positional Encoding → Transformer Layers → Mean Pool

"""

def __init__(self, cfg: FCUCRConfig):

super().__init__()

self.item_emb = nn.Embedding(cfg.n_items + 1, cfg.embed_dim, padding_idx=0)

self.pos_emb = nn.Embedding(cfg.max_seq_len + 1, cfg.embed_dim)

self.dropout = nn.Dropout(cfg.dropout)

self.norm = nn.LayerNorm(cfg.embed_dim)

encoder_layer = nn.TransformerEncoderLayer(

d_model=cfg.embed_dim,

nhead=cfg.n_heads,

dim_feedforward=cfg.embed_dim * 4,

dropout=cfg.dropout,

batch_first=True,

norm_first=True,

)

self.transformer = nn.TransformerEncoder(encoder_layer, num_layers=cfg.n_layers)

self._init_weights()

def _init_weights(self):

nn.init.xavier_uniform_(self.item_emb.weight[1:])

nn.init.xavier_uniform_(self.pos_emb.weight[1:])

def forward(self, item_seq: Tensor) -> Tensor:

"""

Parameters

----------

item_seq : (B, L) — padded item ID sequences

Returns

-------

h : (B, embed_dim) — sequence representation prototype h_u

"""

B, L = item_seq.shape

positions = torch.arange(1, L + 1, device=item_seq.device).unsqueeze(0).expand(B, L)

pad_mask = (item_seq == 0) # True where padding

x = self.dropout(self.norm(self.item_emb(item_seq) + self.pos_emb(positions)))

out = self.transformer(x, src_key_padding_mask=pad_mask)

# Mean pool over non-padding positions to produce prototype h_u

mask_float = (~pad_mask).float().unsqueeze(-1)

h = (out * mask_float).sum(dim=1) / mask_float.sum(dim=1).clamp(min=1)

return h # (B, embed_dim)

class PredictionModule(nn.Module):

"""

Personalized Prediction Module p_ψ_u (Section 4.2).

Receives concatenated [local_prototype ∥ fused_prototype] and

produces per-item recommendation scores.

This is the PRIVATE module — parameters ψ_u stay on the client device

and are never shared with the server.

Input dimension: embed_dim * 2 (local ∥ fused) when prototype transfer active,

embed_dim * 1 when running without prototype (fallback).

"""

def __init__(self, cfg: FCUCRConfig, use_prototype: bool = True):

super().__init__()

in_dim = cfg.embed_dim * 2 if use_prototype else cfg.embed_dim

self.mlp = nn.Sequential(

nn.Linear(in_dim, cfg.embed_dim),

nn.GELU(),

nn.Dropout(cfg.dropout),

nn.Linear(cfg.embed_dim, cfg.n_items),

)

def forward(self, h_concat: Tensor) -> Tensor:

"""

Parameters

----------

h_concat : (B, in_dim) — concatenated local + fused prototype

Returns

-------

logits : (B, n_items) — per-item recommendation scores

"""

return self.mlp(h_concat)

# ─── SECTION 3: Time-Aware Self-Distillation ──────────────────────────────────

def time_aware_self_distillation(

f_current: RepresentationModule,

f_previous: RepresentationModule,

item_seq: Tensor,

) -> Tensor:

"""

Time-Aware Self-Distillation Loss L_dist (Section 4.1, Eq. 3).

Regularises the current representation module f_φ^(t) against

its previous state f_φ^(t-1) by minimising MSE between their

outputs on the current session's data R^(t).

This preserves semantic continuity of item representations across

time steps without storing full interaction history.

L_dist = MSE( f_φ^(t)(R^(t)), f_φ^(t-1)(R^(t)) )

Parameters

----------

f_current : current representation module f_φ^(t)

f_previous : frozen snapshot of previous module f_φ^(t-1)

item_seq : (B, L) current session item sequences

Returns

-------

L_dist : scalar distillation loss

"""

h_current = f_current(item_seq) # live gradient

with torch.no_grad():

h_previous = f_previous(item_seq) # frozen teacher

return F.mse_loss(h_current, h_previous)

# ─── SECTION 4: Dynamic Knowledge Base ───────────────────────────────────────

class PrototypeKnowledgeBase:

"""

Dynamic Knowledge Base KB maintained on the server (Section 4.2, Eq. 4).

Stores session-level prototype vectors h_u^(t) uploaded by each client.

Supports sliding-window pruning to limit memory usage — only the most

recent `window_size` communication rounds of prototypes are retained.

KB^(t) = KB^(t-1) ∪ { h_u^(t) for all u }

"""

def __init__(self, window_size: Optional[int] = None):

self.window_size = window_size

# Store: (client_id, round_t, prototype_tensor)

self.entries: deque = deque()

def update(self, client_id: int, round_t: int, prototype: Tensor):

"""Add a new prototype from a client at round t."""

self.entries.append((client_id, round_t, prototype.detach().clone()))

# Apply sliding-window pruning: remove prototypes older than window_size rounds

if self.window_size is not None:

min_round = round_t - self.window_size + 1

while self.entries and self.entries[0][1] < min_round:

self.entries.popleft()

def get_candidates(self, exclude_client: int) -> List[Tuple[int, Tensor]]:

"""Return (client_id, prototype) for all entries excluding the query client."""

return [(cid, proto) for cid, _, proto in self.entries if cid != exclude_client]

def __len__(self) -> int:

return len(self.entries)

# ─── SECTION 5: Inter-User Prototype Retrieval & Fusion ───────────────────────

def retrieve_top_k_prototypes(

query_prototype: Tensor,

candidates: List[Tuple[int, Tensor]],

k: int,

) -> Tensor:

"""

Retrieve top-k semantically aligned prototypes via cosine similarity (Eq. 5).

H_u^(t) = Top-k { sim(h_u^(t), h_v^(t')) | h_v ∈ KB, v ≠ u }

Parameters

----------

query_prototype : (embed_dim,) — query user's local prototype h_u^(t)

candidates : list of (client_id, prototype) from all other clients

k : number of prototypes to retrieve

Returns

-------

top_k_prototypes : (min(k, n_candidates), embed_dim) selected prototypes

"""

if not candidates:

return query_prototype.unsqueeze(0)

# Stack all candidate prototypes

cand_tensor = torch.stack([p for _, p in candidates], dim=0) # (N, D)

# Cosine similarity: normalise both query and candidates

q_norm = F.normalize(query_prototype.unsqueeze(0), dim=-1) # (1, D)

c_norm = F.normalize(cand_tensor, dim=-1) # (N, D)

sim_scores = (q_norm @ c_norm.T).squeeze(0) # (N,)

# Select top-k by similarity

k_actual = min(k, len(candidates))

top_indices = sim_scores.topk(k_actual).indices

return cand_tensor[top_indices] # (k, D)

def fuse_prototypes(top_k_prototypes: Tensor) -> Tensor:

"""

Prototype Fusion Function M(·) (Eq. 6).

Fuses the top-k retrieved prototypes into a single consolidated

prototype via simple averaging. In practice, more expressive

dimensionality reduction (e.g. attention-weighted pooling) can be used.

ρ_u^(t) = M(H_u^(t)) = mean(H_u^(t))

Parameters

----------

top_k_prototypes : (k, embed_dim)

Returns

-------

fused : (embed_dim,) consolidated fused prototype

"""

return top_k_prototypes.mean(dim=0)

# ─── SECTION 6: Prototype-Enhanced Prediction (full client model) ─────────────

class FCUCRClient(nn.Module):

"""

Full FCUCR client model (Section 4.2, Eq. 7).

Combines the shared representation module f_φ and the private

prediction module p_ψ_u. At inference and training time:

h_u^(t) = f_φ(R_u^(t)) local prototype

ŷ^(t) = p_ψ( h_u^(t) ∥ ρ_u^(t) ) prototype-enhanced prediction

Parameters φ are shared across clients.

Parameters ψ_u are private and never uploaded to the server.

"""

def __init__(self, cfg: FCUCRConfig, use_prototype: bool = True):

super().__init__()

self.cfg = cfg

self.use_prototype = use_prototype

self.repr_module = RepresentationModule(cfg) # PUBLIC φ

self.pred_module = PredictionModule(cfg, use_prototype) # PRIVATE ψ

def forward(self, item_seq: Tensor,

fused_prototype: Optional[Tensor] = None) -> Tuple[Tensor, Tensor]:

"""

Parameters

----------

item_seq : (B, L) item sequence

fused_prototype : (B, embed_dim) or (embed_dim,) from server

Returns

-------

logits : (B, n_items) prediction scores

h_u : (B, embed_dim) local prototype for uploading to KB

"""

h_u = self.repr_module(item_seq) # (B, D)

if self.use_prototype and fused_prototype is not None:

if fused_prototype.dim() == 1:

fused_prototype = fused_prototype.unsqueeze(0).expand(h_u.shape[0], -1)

h_input = torch.cat([h_u, fused_prototype], dim=-1) # (B, 2D)

else:

if self.use_prototype:

# No fused prototype yet (first round): pad with zeros

h_input = torch.cat([h_u, torch.zeros_like(h_u)], dim=-1)

else:

h_input = h_u

logits = self.pred_module(h_input) # (B, n_items)

return logits, h_u

def get_shared_params(self) -> Dict[str, Tensor]:

"""Extract shareable representation module parameters φ."""

return {k: v.clone() for k, v in self.repr_module.state_dict().items()}

def load_shared_params(self, state_dict: Dict[str, Tensor]):

"""Load aggregated representation module parameters from server."""

self.repr_module.load_state_dict(state_dict)

def snapshot_repr(self) -> RepresentationModule:

"""Return a frozen deep copy of the current representation module for distillation."""

snapshot = RepresentationModule(self.cfg)

snapshot.load_state_dict(self.repr_module.state_dict())

for p in snapshot.parameters():

p.requires_grad = False

return snapshot

# ─── SECTION 7: Client Local Training ────────────────────────────────────────

def client_update(

client_model: FCUCRClient,

prev_repr: RepresentationModule,

session_loader: DataLoader,

fused_prototype: Optional[Tensor],

cfg: FCUCRConfig,

device: torch.device,

) -> Tuple[Dict[str, Tensor], Tensor]:

"""

ClientUpdate procedure (Algorithm 1, lines 6-9).

Jointly optimises shared representation module φ and private

prediction module ψ_u using:

L_total = L_rec + λ · L_dist

Parameters

----------

client_model : full client model (repr + pred modules)

prev_repr : frozen snapshot of previous representation module

session_loader : DataLoader for current session R_u^(t)

fused_prototype : (embed_dim,) prototype received from server

cfg : FCUCR configuration

device : compute device

Returns

-------

updated_phi : updated shared representation parameters

mean_prototype : (embed_dim,) average prototype for KB update

"""

client_model.train()

client_model.to(device)

if prev_repr is not None:

prev_repr.to(device)

optimizer = torch.optim.Adam(client_model.parameters(), lr=cfg.lr)

criterion = nn.CrossEntropyLoss(ignore_index=0)

all_prototypes = []

for epoch in range(cfg.local_epochs):

for item_seq, targets in session_loader:

item_seq, targets = item_seq.to(device), targets.to(device)

optimizer.zero_grad()

logits, h_u = client_model(item_seq, fused_prototype)

# L_rec: standard next-item prediction loss

# targets: (B, L) shifted item sequences

B, L = targets.shape

L_rec = criterion(logits.unsqueeze(1).expand(-1, L, -1).reshape(B * L, -1),

targets.reshape(-1))

# L_dist: time-aware self-distillation (Eq. 3)

if prev_repr is not None:

L_dist = time_aware_self_distillation(

client_model.repr_module, prev_repr, item_seq

)

else:

L_dist = torch.tensor(0.0, device=device)

loss = L_rec + cfg.lambda_dist * L_dist

loss.backward()

torch.nn.utils.clip_grad_norm_(client_model.parameters(), max_norm=1.0)

optimizer.step()

with torch.no_grad():

all_prototypes.append(h_u.mean(dim=0))

# Average prototype across all batches in this session

mean_proto = torch.stack(all_prototypes).mean(dim=0).cpu()

return client_model.get_shared_params(), mean_proto

# ─── SECTION 8: Server Aggregation ───────────────────────────────────────────

class FCUCRServer:

"""

Server coordinator for the FCUCR framework (Algorithm 1, ServerProcedure).

Responsibilities:

1. Maintain and update the dynamic prototype knowledge base KB

2. Retrieve top-k prototypes for each client

3. Fuse retrieved prototypes into per-client consolidated prototypes

4. Aggregate shared representation module via FedAvg

5. Apply local differential privacy noise if configured

"""

def __init__(self, cfg: FCUCRConfig):

self.cfg = cfg

self.kb = PrototypeKnowledgeBase(window_size=cfg.kb_window)

self.global_phi: Optional[Dict[str, Tensor]] = None

def initialise(self, model: FCUCRClient):

"""Initialise global shared parameters φ^(1)."""

self.global_phi = model.get_shared_params()

def update_knowledge_base(

self, client_prototypes: Dict[int, Tensor], round_t: int

):

"""

Update KB with new prototypes from all clients (Eq. 4).

KB^(t) = KB^(t-1) ∪ { h_u^(t) for all u }

"""

for cid, proto in client_prototypes.items():

self.kb.update(cid, round_t, proto)

def compute_fused_prototypes(

self, client_prototypes: Dict[int, Tensor]

) -> Dict[int, Tensor]:

"""

Retrieve top-k prototypes and fuse them for each client (Eqs. 5-6).

Returns dict mapping client_id → fused prototype ρ_u^(t).

"""

fused = {}

for cid, query_proto in client_prototypes.items():

candidates = self.kb.get_candidates(exclude_client=cid)

top_k = retrieve_top_k_prototypes(query_proto, candidates, self.cfg.top_k)

fused[cid] = fuse_prototypes(top_k)

return fused

def federated_average(

self,

client_phis: Dict[int, Dict[str, Tensor]],

client_weights: Dict[int, float],

) -> Dict[str, Tensor]:

"""

Global FedAvg aggregation φ^(t+1) = Σ α_u · φ_u^(t).

Optionally applies Laplacian noise for local differential privacy.

"""

if not client_phis:

return self.global_phi

aggregated = {}

total_weight = sum(client_weights.values())

for key in next(iter(client_phis.values())).keys():

param_sum = torch.zeros_like(next(iter(client_phis.values()))[key])

for cid, phi in client_phis.items():

alpha = client_weights[cid] / total_weight

if self.cfg.ldp_std > 0:

# Local Differential Privacy: Laplacian noise (Section 5.6)

noise = torch.distributions.Laplace(0, self.cfg.ldp_std).sample(phi[key].shape)

param_sum += alpha * (phi[key] + noise.to(phi[key].device))

else:

param_sum += alpha * phi[key]

aggregated[key] = param_sum

self.global_phi = aggregated

return aggregated

# ─── SECTION 9: Local Differential Privacy helper ────────────────────────────

def compute_ldp_epsilon(std: float, delta: float = 1e-5) -> float:

"""

Approximate (ε, δ)-LDP guarantee for Laplacian noise (Section 5.6).

Follows the paper's calculation: std=0.1 → ε≈48.5, std=0.6 → ε≈8.1.

Smaller ε = stronger privacy guarantee.

"""

if std <= 0:

return float("inf")

# Simplified: ε ≈ sensitivity / std (sensitivity normalized to 1 for embedding norms)

epsilon = 1.0 / std * math.sqrt(2 * math.log(1.25 / delta))

return epsilon

# ─── SECTION 10: Evaluation Metrics ──────────────────────────────────────────

def hit_rate_at_n(logits: Tensor, targets: Tensor, n: int = 10) -> float:

"""

Hit Rate HR@n: fraction of test interactions where the ground-truth

item appears in the top-n recommended items.

logits : (B, n_items) recommendation scores

targets : (B,) ground-truth item IDs

"""

topn = logits.topk(n, dim=-1).indices # (B, n)

hits = (topn == targets.unsqueeze(-1)).any(dim=-1).float()

return hits.mean().item()

def ndcg_at_n(logits: Tensor, targets: Tensor, n: int = 10) -> float:

"""

NDCG@n: Normalized Discounted Cumulative Gain at position n.

Rewards correct recommendations higher when they appear closer to rank 1.

"""

topn = logits.topk(n, dim=-1).indices # (B, n)

target_expanded = targets.unsqueeze(-1).expand_as(topn)

match = (topn == target_expanded)

# Discounted gain: 1/log2(rank+1) when hit at rank position

positions = torch.arange(1, n + 1, device=logits.device, dtype=torch.float)

discounts = 1.0 / torch.log2(positions + 1)

ndcg = (match.float() * discounts).sum(dim=-1).mean().item()

return ndcg

# ─── SECTION 11: Dataset Helpers ─────────────────────────────────────────────

class SessionDataset(Dataset):

"""

Session-based sequential recommendation dataset.

Each sample is an (item_sequence, target_sequence) pair where

target_sequence is item_sequence shifted by one position.

For real datasets, replace with loaders pointing to:

XING: https://www.recsyschallenge.com/2017/#dataset

RetailRocket: https://www.kaggle.com/datasets/retailrocket/ecommerce-dataset

LastFM: http://ocelma.net/MusicRecommendationDataset/lastfm-1K.html

Tmall: https://tianchi.aliyun.com/dataset/42

Pre-processing follows HRNN protocol: segment interactions into sessions

by 30-minute inactivity threshold; last session = test, second-to-last = val.

"""

def __init__(

self, n_items: int, n_sessions: int, max_seq_len: int,

min_len: int = 3, max_len: int = 20,

):

self.sessions = []

for _ in range(n_sessions):

seq_len = random.randint(min_len, max_len)

items = [random.randint(1, n_items) for _ in range(seq_len)]

# Pad to max_seq_len

padded = [0] * (max_seq_len - seq_len) + items

self.sessions.append(torch.tensor(padded, dtype=torch.long))

def __len__(self) -> int:

return len(self.sessions)

def __getitem__(self, idx: int) -> Tuple[Tensor, Tensor]:

seq = self.sessions[idx]

# Input: all but last item; Target: all but first item (next-item prediction)

item_seq = seq[:-1].clone()

targets = seq[1:].clone()

return item_seq, targets

def make_client_datasets(

n_clients: int, n_items: int,

sessions_per_client: int, max_seq_len: int,

) -> List[SessionDataset]:

"""Create one SessionDataset per client for federated simulation."""

return [

SessionDataset(n_items, sessions_per_client, max_seq_len)

for _ in range(n_clients)

]

# ─── SECTION 12: Full Federated Training Loop ─────────────────────────────────

def run_fcucr(

cfg: FCUCRConfig,

device_str: str = "cpu",

sessions_per_client: int = 10,

verbose: bool = True,

) -> FCUCRServer:

"""

Full FCUCR training pipeline (Algorithm 1).

Simulates federated continual recommendation over multiple rounds,

with time-aware self-distillation and inter-user prototype transfer.

For production use:

- Replace SessionDataset with real user session loaders

- Increase n_clients, n_rounds, local_epochs per paper's settings

(e.g., n_rounds=40, local_epochs=4, embedding_size=50, lr=0.1)

"""

device = torch.device(device_str)

print(f"\n{'='*58}")

print(f" FCUCR Training | {cfg.n_clients} clients | {cfg.n_rounds} rounds")

print(f" Top-k={cfg.top_k} λ_dist={cfg.lambda_dist} LDP_std={cfg.ldp_std}")

print(f"{'='*58}\n")

# ── Initialise clients ────────────────────────────────────────────────────

client_datasets = make_client_datasets(

cfg.n_clients, cfg.n_items, sessions_per_client, cfg.max_seq_len

)

client_weights = {u: len(client_datasets[u]) for u in range(cfg.n_clients)}

clients = {u: FCUCRClient(cfg).to(device) for u in range(cfg.n_clients)}

prev_reprs: Dict[int, Optional[RepresentationModule]] = {u: None for u in range(cfg.n_clients)}

fused_protos: Dict[int, Optional[Tensor]] = {u: None for u in range(cfg.n_clients)}

# ── Initialise server ─────────────────────────────────────────────────────

server = FCUCRServer(cfg)

server.initialise(clients[0])

# ── Main training loop (Algorithm 1) ──────────────────────────────────────

for round_t in range(1, cfg.n_rounds + 1):

print(f"[Round {round_t}/{cfg.n_rounds}]")

# Step 1: Broadcast global shared parameters to all clients

if server.global_phi is not None:

for u in range(cfg.n_clients):

clients[u].load_shared_params(server.global_phi)

# Step 2: Each client computes prototype and uploads to KB

client_prototypes: Dict[int, Tensor] = {}

for u in range(cfg.n_clients):

loader = DataLoader(client_datasets[u], batch_size=8, shuffle=False)

clients[u].eval()

protos = []

with torch.no_grad():

for item_seq, _ in loader:

_, h = clients[u](item_seq.to(device))

protos.append(h.mean(dim=0).cpu())

client_prototypes[u] = torch.stack(protos).mean(dim=0)

# Step 3: Server updates KB and retrieves top-k prototypes for each client

server.update_knowledge_base(client_prototypes, round_t)

fused_protos = server.compute_fused_prototypes(client_prototypes)

# Step 4: Clients perform local update with distillation + prototype signal

client_phis: Dict[int, Dict[str, Tensor]] = {}

client_mean_protos: Dict[int, Tensor] = {}

for u in range(cfg.n_clients):

loader = DataLoader(client_datasets[u], batch_size=8, shuffle=True)

updated_phi, mean_proto = client_update(

client_model=clients[u],

prev_repr=prev_reprs[u],

session_loader=loader,

fused_prototype=fused_protos[u].to(device),

cfg=cfg,

device=device,

)

client_phis[u] = updated_phi

client_mean_protos[u] = mean_proto

# Save snapshot of repr module for next round's distillation

prev_reprs[u] = clients[u].snapshot_repr()

# Step 5: Server performs FedAvg aggregation

new_phi = server.federated_average(client_phis, client_weights)

if verbose:

print(f" KB size: {len(server.kb)} prototypes")

print(f" Avg fused prototype norm: {torch.stack(list(fused_protos.values())).norm(dim=-1).mean().item():.4f}")

return server

# ─── SECTION 13: Smoke Test ──────────────────────────────────────────────────

if __name__ == "__main__":

print("=" * 58)

print("FCUCR — Full Framework Smoke Test")

print("=" * 58)

torch.manual_seed(42)

random.seed(42)

device = torch.device("cpu")

cfg = FCUCRConfig(

n_items=100, embed_dim=32, n_heads=2, n_layers=1,

max_seq_len=20, lambda_dist=10.0, top_k=3,

n_clients=4, n_rounds=3, local_epochs=2, lr=0.01,

kb_window=2, # sliding window: keep only 2 most recent rounds

)

# ── 1. Representation module forward pass ────────────────────────────────

print("\n[1/5] Representation module forward pass...")

repr_mod = RepresentationModule(cfg)

item_seq = torch.randint(0, cfg.n_items, (4, cfg.max_seq_len - 1))

h = repr_mod(item_seq)

assert h.shape == (4, cfg.embed_dim)

print(f" ✓ Prototype h: {tuple(h.shape)}")

# ── 2. Self-distillation loss ────────────────────────────────────────────

print("\n[2/5] Time-aware self-distillation loss...")

prev_repr = RepresentationModule(cfg)

for p in prev_repr.parameters(): p.requires_grad = False

L_dist = time_aware_self_distillation(repr_mod, prev_repr, item_seq)

print(f" ✓ L_dist = {L_dist.item():.6f}")

# ── 3. Knowledge base + prototype retrieval ──────────────────────────────

print("\n[3/5] Knowledge base + top-k prototype retrieval...")

kb = PrototypeKnowledgeBase(window_size=2)

for u in range(4):

kb.update(u, 1, torch.randn(cfg.embed_dim))

query = torch.randn(cfg.embed_dim)

candidates = kb.get_candidates(exclude_client=0)

top_k = retrieve_top_k_prototypes(query, candidates, k=2)

fused = fuse_prototypes(top_k)

assert fused.shape == (cfg.embed_dim,)

print(f" ✓ Top-k: {tuple(top_k.shape)} Fused: {tuple(fused.shape)}")

# ── 4. Full client model forward pass ───────────────────────────────────

print("\n[4/5] Full client model with prototype-enhanced prediction...")

client = FCUCRClient(cfg)

targets = torch.randint(1, cfg.n_items, (4,))

logits, h_out = client(item_seq, fused.unsqueeze(0).expand(4, -1))

assert logits.shape == (4, cfg.n_items)

hr = hit_rate_at_n(logits, targets, n=10)

ndcg = ndcg_at_n(logits, targets, n=10)

print(f" ✓ Logits: {tuple(logits.shape)} HR@10={hr:.4f} NDCG@10={ndcg:.4f}")

# ── 5. Full federated training run ───────────────────────────────────────

print("\n[5/5] Full 3-round federated training...")

server = run_fcucr(cfg, device_str="cpu", sessions_per_client=8)

print(f" ✓ Training complete. KB size: {len(server.kb)}")

# ── LDP epsilon check ────────────────────────────────────────────────────

print("\n LDP Privacy Guarantees:")

for std in [0.1, 0.3, 0.6]:

eps = compute_ldp_epsilon(std)

print(f" std={std:.1f} → ε≈{eps:.1f} (lower ε = stronger privacy)")

print("\n" + "=" * 58)

print("✓ All checks passed. FCUCR is ready for use.")

print("=" * 58)

print("""

Next steps:

1. Replace SessionDataset with real data loaders:

XING: https://www.recsyschallenge.com/2017/

RetailRocket: https://www.kaggle.com/datasets/retailrocket/

LastFM: http://ocelma.net/MusicRecommendationDataset/

Tmall: https://tianchi.aliyun.com/dataset/42

2. Use paper's hyperparameters:

cfg = FCUCRConfig(embed_dim=50, lr=0.1, lambda_dist=10,

top_k=20, n_rounds=40, local_epochs=4)

3. Try SASRec or TiSASRec as the Transformer backbone

(replace RepresentationModule with a pre-built implementation).

4. Enable differential privacy for stricter deployments:

cfg = FCUCRConfig(ldp_std=0.2) # ε≈14 with δ=1e-5

5. Official code: https://github.com/Poizoner/code4FCUCR_www2026

""")

Read the Full Paper & Access the Code

The complete paper — including full dataset statistics, hyperparameter sensitivity analysis across all four benchmarks, knowledge base window size experiments, and LDP robustness curves — is available on arXiv. Official code is on GitHub.

Zhang, C., Xue, Z., Long, G., Zhang, W., & Yang, B. (2026). Learning Evolving Preferences: A Federated Continual Framework for User-Centric Recommendation. In Proceedings of the ACM Web Conference 2026 (WWW ’26). ACM. https://doi.org/10.1145/3774904.3792575

This article is an independent editorial analysis of open-access research (CC BY 4.0). The PyTorch implementation is an educational adaptation using a lightweight Transformer backbone. The original authors used SASRec, TiSASRec, and GRU4Rec backbones on 6 × NVIDIA A800 GPUs with PyTorch 2.4.0. Refer to the official GitHub repository for exact experimental configurations.