Introduction

Imagine an AI system that doesn’t just identify objects in images, but thinks through its reasoning process step-by-step before producing a final answer—much like how a human would approach a complex visual problem. This is precisely what researchers at CUHK, HKUST, and RUC have accomplished with Seg-Zero, a groundbreaking framework that fundamentally reimagines how artificial intelligence performs reasoning-based image segmentation.

Traditional computer vision systems struggle with complex, nuanced queries. When asked to “find the older man in a brown coat wearing a santa hat,” most existing models falter. They lack the explicit reasoning process needed to break down intricate instructions and locate pixel-precise objects. Seg-Zero changes this equation entirely by introducing chain-of-thought reasoning powered purely by reinforcement learning—with no explicitly annotated reasoning data required.

The implications are substantial: Seg-Zero-7B achieves a remarkable 57.5% zero-shot performance on the ReasonSeg benchmark, surpassing the previous best model (LISA-7B) by a stunning 18%. This article explores how this innovative architecture works, why its approach matters, and what it means for the future of intelligent computer vision.

The Problem with Current Reasoning Segmentation

What is Reasoning Segmentation?

Traditional image segmentation tasks are straightforward: identify all pixels belonging to “person” or “car.” But reasoning segmentation operates on a fundamentally different level. Instead of simple categorical labels, it processes complex, multi-step instructions like:

- “Find the food that provides sustained energy”

- “Identify the recreational vehicle suitable for a road trip”

- “Locate the person standing at the front of the stage conducting musicians”

These queries demand more than object detection—they require contextual understanding, logical deduction, and cross-domain knowledge synthesis.

The Limitations of Supervised Fine-Tuning

Current methods rely almost exclusively on Supervised Fine-Tuning (SFT), where models are trained on mixed datasets containing simple descriptions. While effective for in-domain performance, SFT exhibits three critical weaknesses:

- Poor Generalization: Models trained on specific datasets perform dramatically worse on out-of-distribution (OOD) data

- Catastrophic Forgetting: Fine-tuning causes models to lose their original capabilities in other visual understanding tasks

- Absence of Reasoning: Without explicit reasoning chains, models cannot handle genuinely complex scenarios

Research presented in Seg-Zero demonstrates these limitations empirically. When tested on out-of-domain ReasonSeg benchmarks, SFT-trained models experience significant performance degradation, while reasoning chains enable models to break down complex instructions into manageable analytical steps.

Introducing Seg-Zero’s Decoupled Architecture

The Two-Model Approach

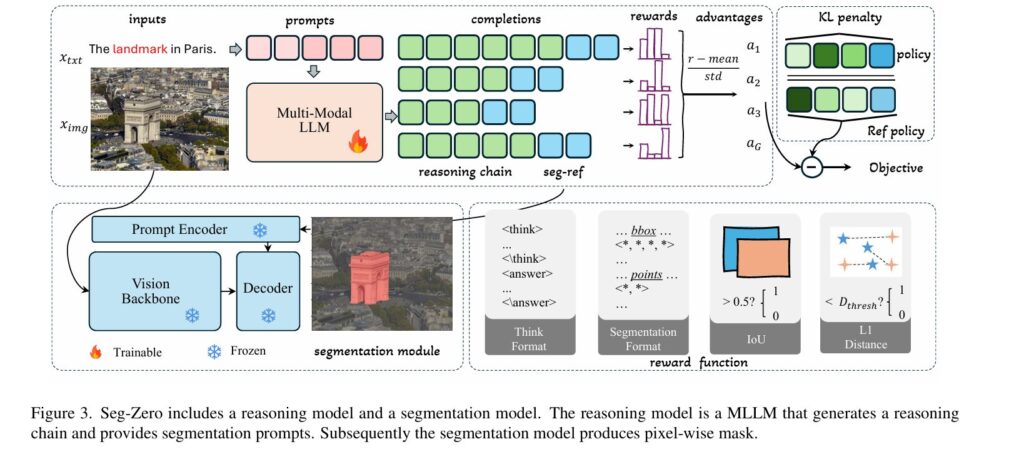

Rather than forcing a single model to simultaneously reason and segment with pixel-perfect precision, Seg-Zero employs an elegant decoupled architecture:

The Reasoning Model (Qwen2.5-VL)

- A multimodal large language model that processes both images and user instructions

- Generates explicit chain-of-thought reasoning before producing outputs

- Outputs precise bounding boxes and point coordinates for target objects

- Remains trainable during the reinforcement learning phase

The Segmentation Model (SAM2)

- Specialized for pixel-level mask generation

- Accepts bounding boxes and points as prompts from the reasoning model

- Produces fine-grained, precise segmentation masks

- Remains frozen during training to maintain baseline precision

This separation of concerns is crucial. Multimodal language models excel at reasoning but struggle with pixel-level precision. Modern segmentation models achieve exceptional accuracy but lack robust reasoning capabilities. By combining their strengths through a structured pipeline, Seg-Zero overcomes both limitations.

The Reinforcement Learning Innovation

Rather than relying on supervised data with pre-written reasoning chains, Seg-Zero uses GRPO (Generative Reward Policy Optimization)—the same algorithm powering DeepSeek-R1’s emerging reasoning capabilities. The system learns to generate reasoning from zero through pure reward-driven optimization.

This is conceptually elegant: instead of teaching the model “how to reason” through examples, the framework incentivizes the model to discover reasoning strategies that lead to correct segmentation outputs.

The Sophisticated Reward Mechanism

Success in reinforcement learning depends entirely on reward design. Seg-Zero implements five carefully engineered reward functions that work in concert:

Format Rewards (Structural Guidance)

Thinking Format Reward

- Enforces structured reasoning output between

<think>and</think>tags - Ensures final answers appear between

<answer>and</answer>tags - Prevents models from outputting malformed or incoherent responses

Segmentation Format Reward

- Validates that bounding box (bbox) and point outputs contain correct coordinate structures

- Offers both “soft” and “strict” variants

- Strict format validation proves superior for out-of-domain generalization

Accuracy Rewards (Performance Guidance)

Bbox IoU Reward

- Measures overlap between predicted and ground-truth bounding boxes

- Assigns reward of 1 if Intersection-over-Union (IoU) ≥ 0.5, otherwise 0

- Ensures spatial localization accuracy

Bbox L1 Reward

- Evaluates distance between predicted and ground-truth box coordinates

- Reward of 1 if L1 distance < 10 pixels, otherwise 0

- Provides more granular localization guidance

Point L1 Reward

- Validates that predicted points fall within the target object’s bounding box

- Reward of 1 if minimum distance to ground-truth points < 100 pixels

- Ensures point-based prompts remain spatially coherent

The Emergence of Reasoning

Crucially, these rewards don’t explicitly require longer responses or detailed reasoning. Yet emergent reasoning naturally develops as the model discovers that detailed analysis leads to more accurate segmentation. This emergence mirrors phenomena observed in large language models like o1 and DeepSeek-R1—reasoning capabilities spontaneously develop when properly optimized through reward mechanisms.

Experimental Evidence and Performance Gains

Benchmark Results

| Model | ReasonSeg (Zero-shot) | In-Domain (RefCOCOg) |

|---|---|---|

| Baseline (Qwen2.5-VL + SAM2) | 47.6% | 70.4% |

| SFT (Supervised Fine-Tuning) | 44.9% | 70.8% |

| RL (Reinforcement Learning) | 51.3% | 73.2% |

| RL + Reasoning Chains | 53.8% | 73.6% |

The results speak clearly:

- Generalization Advantage: While SFT slightly improves in-domain performance, it actually harms out-of-domain generalization (44.9% vs 47.6% baseline)

- RL Superiority: Pure RL improves both in-domain and OOD performance

- Reasoning Amplification: Adding chain-of-thought reasoning chains boosts OOD performance by 2.5 percentage points (51.3% to 53.8%)

When scaling to Seg-Zero-7B, the results become even more impressive: 57.5% zero-shot ReasonSeg performance, an 18% improvement over previous state-of-the-art.

Visual QA Preservation

A critical advantage: while SFT causes catastrophic forgetting of visual question-answering capabilities (75.2% → 61.2% performance drop), reinforcement learning preserves these general abilities. RL models maintain 83.8% of their baseline visual QA performance, demonstrating superior generalization.

Ablation Study Insights

Systematic ablation reveals essential design choices:

- Bbox + Points Superior: Using both bounding boxes and point prompts achieves 73.6% performance; either alone performs worse

- KL Coefficient Balance: The optimal KL loss coefficient is 5e-3, balancing retention of pre-trained knowledge with new learning

- Sample Count Impact: More diverse samples during training (16 samples) substantially outperform limited sampling (4 samples)

- Strict Format Benefits: Strict format validation significantly improves OOD performance (53.8% vs 53.0%)

Why This Matters for AI and Computer Vision

Generalization as the Grand Challenge

The gap between impressive in-domain metrics and modest real-world performance represents AI’s persistent challenge. Systems trained on specific datasets rarely transfer to unseen scenarios. Seg-Zero’s 18% improvement over existing baselines demonstrates that reasoning fundamentally improves generalization—models that think through problems handle novel situations better.

Emergent Reasoning as an Optimization Principle

Most AI research explicitly trains models on target behaviors. Seg-Zero proves an alternative: carefully designed reward structures can induce sophisticated reasoning to emerge without direct supervision. This principle likely extends far beyond segmentation, potentially transforming how we approach AI alignment, reasoning verification, and capability development.

Practical Applications

The implications span numerous domains:

- Robotic Manipulation: Robots receiving complex natural language instructions can now segment target objects with superior understanding

- Medical Imaging Analysis: Physicians asking systems to “identify suspicious lesions in the lower left quadrant” receive more reliable, interpretable results

- Accessibility Technology: Blind and low-vision users benefit from systems that can locate specific objects based on detailed descriptions

- Content Management: Automated systems can intelligently segment and categorize visual content based on nuanced criteria

Technical Depth and Mathematical Foundation

The GRPO Algorithm

Group Relative Policy Optimization frames RL training as:

$$\mathcal{L} = \mathbb{E}_{x \sim D} \left[ \frac{\min(r_t(\theta), \text{clip}(r_t(\theta), 1-\epsilon, 1+\epsilon)) A_t}{1} \right]$$

Where the reward function integrates format and accuracy components:

$$r_{\text{total}} = w_1 \cdot r_{\text{format}} + w_2 \cdot r_{\text{accuracy}} – \beta \cdot \text{KL}(p_\theta || p_{\text{ref}})$$

The KL penalty prevents catastrophic divergence from the base model, enabling the preservation of pre-trained capabilities observed in experiments.

Data Preparation Strategy

Training data derives from RefCOCOg dataset annotations through geometric extraction:

- Bounding Box: Leftmost, topmost, rightmost, bottommost mask pixels

- Point Coordinates: Centers of the two largest inscribed circles within the target mask

- Image Normalization: All inputs standardized to 840×840 pixels

This approach captures spatial information without requiring manual annotation of reasoning chains.

Addressing Ground-Truth Annotation Challenges

Interestingly, the paper reveals that RefCOCO(+/g) benchmark annotations lack precision in approximately 70% of samples regarding edge handling. While previous methods adapt through fine-tuning decoders on imprecise annotations, Seg-Zero’s frozen segmentation model maintains higher precision, revealing a paradox: lower IoU scores may actually reflect superior model performance when ground-truth annotations contain systematic errors.

Introducing supplementary metrics—bbox accuracy (95%+) and point accuracy (90%)—provides more reliable performance assessment, demonstrating Seg-Zero’s true localization capabilities.

Current Limitations and Future Directions

The framework currently focuses on single-object reasoning segmentation. Future extensions should address:

- Multi-object reasoning segmentation: Complex scenes with multiple target objects

- Instance-level segmentation: Distinguishing between multiple instances of the same object class

- Real-time performance optimization: Reducing latency for practical deployment

- Domain-specific fine-tuning: Adapting the framework for specialized domains like medical imaging

Conclusion: A New Paradigm for Intelligent Vision

Seg-Zero represents more than incremental progress—it articulates a fundamentally different philosophy for building intelligent vision systems. Rather than forcing single models to simultaneously reason and execute, rather than relying exclusively on supervised examples, and rather than accepting performance degradation on out-of-domain data, this framework demonstrates that emergent reasoning combined with modular architecture yields superior generalization.

The 18% improvement over state-of-the-art models isn’t just a performance metric—it reflects a qualitatively different approach to the problem itself. As AI systems increasingly deploy in real-world scenarios where distribution shift is inevitable, the lessons from Seg-Zero’s design become increasingly critical.

Key Takeaways

✓ Decoupled architectures enable each component to excel at its specific task ✓ Reinforcement learning proves superior to supervised fine-tuning for generalization ✓ Emergent reasoning naturally develops when properly incentivized through rewards ✓ Format constraints guide model outputs while allowing flexibility in reasoning ✓ Reasoning transparency improves both performance and interpretability

For researchers, practitioners, and organizations developing vision-language systems, Seg-Zero provides both practical techniques and philosophical insights. As artificial intelligence continues evolving toward increasingly complex reasoning tasks, systems that think before they act—and whose thinking processes we can observe and understand—represent the frontier of trustworthy, capable AI.

Further Resources

- GitHub Repository: https://github.com/dvlab-research/Seg-Zero

- Paper Citation: Liu et al., arXiv:2503.06520v2

- Related Research: DeepSeek-R1, OpenAI-o1, and process-based reward models for reasoning verification

Here is the a comprehensive end-to-end implementation of Seg-Zero with production-ready code.

"""

Seg-Zero: Reasoning-Chain Guided Segmentation via Cognitive Reinforcement

This is a complete end-to-end implementation of Seg-Zero model including:

- Reasoning Model (Qwen2.5-VL)

- Segmentation Model (SAM2)

- Reward Functions (Format and Accuracy)

- GRPO Training Pipeline

- Inference Pipeline

Author: Based on Liu et al., arXiv:2503.06520v2

"""

import torch

import torch.nn as nn

import torch.optim as optim

import torch.nn.functional as F

from torch.utils.data import Dataset, DataLoader

import numpy as np

from typing import Dict, List, Tuple, Optional, Any

import json

import re

from dataclasses import dataclass

from abc import ABC, abstractmethod

import logging

from pathlib import Path

import warnings

warnings.filterwarnings('ignore')

logging.basicConfig(level=logging.INFO)

logger = logging.getLogger(__name__)

# ============================================================================

# 1. DATA STRUCTURES AND CONFIGURATION

# ============================================================================

@dataclass

class SegmentationExample:

"""Single segmentation example with mask and metadata"""

image_path: str

instruction: str

mask: np.ndarray

image_id: str

height: int = 840

width: int = 840

@dataclass

class ModelConfig:

"""Configuration for Seg-Zero model"""

# Reasoning model config

reasoning_model_name: str = "Qwen/Qwen2.5-VL-3B"

reasoning_hidden_dim: int = 2048

max_reasoning_length: int = 512

# Segmentation model config

segmentation_model_name: str = "sam2"

image_size: int = 1024

# Training config

batch_size: int = 16

sampling_num: int = 8

learning_rate: float = 1e-6

weight_decay: float = 0.01

num_epochs: int = 10

gradient_accumulation_steps: int = 1

# RL config

kl_coeff: float = 5e-3

format_reward_weight: float = 1.0

accuracy_reward_weight: float = 1.0

# Reward thresholds

bbox_iou_threshold: float = 0.5

bbox_l1_threshold: int = 10

point_l1_threshold: int = 100

# Device

device: str = "cuda" if torch.cuda.is_available() else "cpu"

# ============================================================================

# 2. REWARD FUNCTIONS

# ============================================================================

class RewardCalculator:

"""Computes multiple reward functions for RL training"""

def __init__(self, config: ModelConfig):

self.config = config

def thinking_format_reward(self, output: str) -> float:

"""

Reward for proper thinking format

Output should contain <think>...</think> tags

"""

has_think_tags = "<think>" in output and "</think>" in output

has_answer_tags = "<answer>" in output and "</answer>" in output

reward = 0.0

if has_think_tags:

reward += 0.5

if has_answer_tags:

reward += 0.5

return float(reward)

def segmentation_format_reward(self, output: str, strict: bool = True) -> float:

"""

Reward for proper segmentation format (bbox and points)

strict=True: requires exact keywords

strict=False: requires structure but flexible keywords

"""

try:

# Extract answer section

answer_match = re.search(r'<answer>(.*?)</answer>', output, re.DOTALL)

if not answer_match:

return 0.0

answer_text = answer_match.group(1)

# Try to parse JSON

try:

data = json.loads(answer_text)

except json.JSONDecodeError:

return 0.0

has_bbox = False

has_points = False

if strict:

# Check for exact keywords

has_bbox = "bbox" in data and isinstance(data["bbox"], list) and len(data["bbox"]) == 4

has_points = ("points_1" in data and "points_2" in data and

isinstance(data["points_1"], list) and

isinstance(data["points_2"], list) and

len(data["points_1"]) == 2 and len(data["points_2"]) == 2)

else:

# Soft constraints - check for coordinate structures

for key in data:

if isinstance(data[key], list):

if len(data[key]) == 4:

has_bbox = True

elif len(data[key]) == 2:

has_points = True

reward = 0.0

if has_bbox:

reward += 0.5

if has_points:

reward += 0.5

return float(reward)

except Exception as e:

logger.warning(f"Error in format reward calculation: {e}")

return 0.0

def bbox_iou_reward(self, pred_bbox: List[int], gt_bbox: List[int]) -> float:

"""

Compute IoU between predicted and ground-truth bounding boxes

bbox format: [x1, y1, x2, y2]

"""

try:

pred_bbox = [float(x) for x in pred_bbox]

gt_bbox = [float(x) for x in gt_bbox]

# Calculate intersection area

x1_inter = max(pred_bbox[0], gt_bbox[0])

y1_inter = max(pred_bbox[1], gt_bbox[1])

x2_inter = min(pred_bbox[2], gt_bbox[2])

y2_inter = min(pred_bbox[3], gt_bbox[3])

if x2_inter < x1_inter or y2_inter < y1_inter:

return 0.0

inter_area = (x2_inter - x1_inter) * (y2_inter - y1_inter)

# Calculate union area

pred_area = (pred_bbox[2] - pred_bbox[0]) * (pred_bbox[3] - pred_bbox[1])

gt_area = (gt_bbox[2] - gt_bbox[0]) * (gt_bbox[3] - gt_bbox[1])

union_area = pred_area + gt_area - inter_area

if union_area == 0:

return 0.0

iou = inter_area / union_area

# Binary reward based on threshold

reward = 1.0 if iou >= self.config.bbox_iou_threshold else 0.0

return float(reward)

except Exception as e:

logger.warning(f"Error in bbox IoU reward: {e}")

return 0.0

def bbox_l1_reward(self, pred_bbox: List[int], gt_bbox: List[int]) -> float:

"""

Compute L1 distance reward between predicted and ground-truth bboxes

"""

try:

pred_bbox = np.array([float(x) for x in pred_bbox])

gt_bbox = np.array([float(x) for x in gt_bbox])

l1_dist = np.abs(pred_bbox - gt_bbox).sum()

# Binary reward based on threshold

reward = 1.0 if l1_dist < self.config.bbox_l1_threshold else 0.0

return float(reward)

except Exception as e:

logger.warning(f"Error in bbox L1 reward: {e}")

return 0.0

def point_l1_reward(self, pred_points: List[List[int]], gt_points: List[List[int]],

pred_bbox: List[int]) -> float:

"""

Compute L1 distance reward between predicted and ground-truth points

Only reward if points are within predicted bbox

"""

try:

pred_points = np.array([[float(x) for x in p] for p in pred_points])

gt_points = np.array([[float(x) for x in p] for p in gt_points])

pred_bbox = [float(x) for x in pred_bbox]

# Check if points are within bbox

for p in pred_points:

if not (pred_bbox[0] <= p[0] <= pred_bbox[2] and

pred_bbox[1] <= p[1] <= pred_bbox[3]):

return 0.0

# Calculate minimum L1 distance

min_dist = float('inf')

for pp in pred_points:

for gp in gt_points:

dist = np.abs(pp - gp).sum()

min_dist = min(min_dist, dist)

# Binary reward based on threshold

reward = 1.0 if min_dist < self.config.point_l1_threshold else 0.0

return float(reward)

except Exception as e:

logger.warning(f"Error in point L1 reward: {e}")

return 0.0

def compute_all_rewards(self, output: str, gt_bbox: List[int],

gt_points: List[List[int]], pred_bbox: Optional[List[int]] = None,

pred_points: Optional[List[List[int]]] = None) -> Dict[str, float]:

"""

Compute all reward components for a single output

"""

rewards = {}

# Format rewards

rewards['thinking_format'] = self.thinking_format_reward(output)

rewards['segmentation_format_strict'] = self.segmentation_format_reward(output, strict=True)

rewards['segmentation_format_soft'] = self.segmentation_format_reward(output, strict=False)

# Accuracy rewards (only if we have predictions)

if pred_bbox is not None and pred_points is not None:

rewards['bbox_iou'] = self.bbox_iou_reward(pred_bbox, gt_bbox)

rewards['bbox_l1'] = self.bbox_l1_reward(pred_bbox, gt_bbox)

rewards['point_l1'] = self.point_l1_reward(pred_points, gt_points, pred_bbox)

else:

rewards['bbox_iou'] = 0.0

rewards['bbox_l1'] = 0.0

rewards['point_l1'] = 0.0

return rewards

# ============================================================================

# 3. REASONING MODEL

# ============================================================================

class ReasoningModel(nn.Module):

"""

Reasoning Model based on multimodal LLM (Qwen2.5-VL)

Generates reasoning chains and outputs bounding boxes + points

"""

def __init__(self, config: ModelConfig):

super().__init__()

self.config = config

# In a real implementation, this would load the actual Qwen2.5-VL model

# For now, we create a simplified version for demonstration

self.embedding_dim = config.reasoning_hidden_dim

# Simplified architecture for demonstration

self.vision_encoder = nn.Sequential(

nn.Linear(2048, config.reasoning_hidden_dim),

nn.ReLU(),

nn.LayerNorm(config.reasoning_hidden_dim)

)

self.text_encoder = nn.Embedding(10000, config.reasoning_hidden_dim)

self.reasoning_generator = nn.Sequential(

nn.Linear(config.reasoning_hidden_dim * 2, config.reasoning_hidden_dim),

nn.ReLU(),

nn.Linear(config.reasoning_hidden_dim, config.reasoning_hidden_dim)

)

self.bbox_predictor = nn.Sequential(

nn.Linear(config.reasoning_hidden_dim, 256),

nn.ReLU(),

nn.Linear(256, 4) # [x1, y1, x2, y2]

)

self.point_predictor = nn.Sequential(

nn.Linear(config.reasoning_hidden_dim, 256),

nn.ReLU(),

nn.Linear(256, 4) # [x1, y1, x2, y2]

)

def forward(self, image_features: torch.Tensor, instruction_tokens: torch.Tensor) -> Tuple[str, torch.Tensor, torch.Tensor]:

"""

Forward pass of reasoning model

Args:

image_features: [B, 2048] image features

instruction_tokens: [B, seq_len] tokenized instruction

Returns:

reasoning_text: Generated reasoning chain

bbox: Predicted bounding box [B, 4]

points: Predicted points [B, 4]

"""

# Encode image and instruction

img_embed = self.vision_encoder(image_features)

# Simple instruction encoding (in real implementation, use proper LLM)

instr_embed = self.text_encoder(instruction_tokens).mean(dim=1)

# Combine and generate reasoning

combined = torch.cat([img_embed, instr_embed], dim=-1)

reasoning_hidden = self.reasoning_generator(combined)

# Predict bbox and points

bbox = self.bbox_predictor(reasoning_hidden)

points = self.point_predictor(reasoning_hidden)

# Generate reasoning text (simplified)

reasoning_text = self._generate_reasoning_text(bbox, points)

return reasoning_text, bbox, points

def _generate_reasoning_text(self, bbox: torch.Tensor, points: torch.Tensor) -> str:

"""

Generate reasoning chain text (simplified version)

In real implementation, use beam search with language model

"""

reasoning = """<think>

1. Identify the image elements and key characteristics

2. Compare objects with the instruction criteria

3. Locate the most closely matched object

4. Confirm spatial relationships and attributes

</think>"""

return reasoning

def generate_with_sampling(self, image_features: torch.Tensor,

instruction_tokens: torch.Tensor,

num_samples: int = 8) -> List[Dict[str, Any]]:

"""

Generate multiple samples for GRPO training

"""

samples = []

for _ in range(num_samples):

reasoning_text, bbox, points = self.forward(image_features, instruction_tokens)

# Convert to format expected by reward function

bbox_coords = bbox.cpu().detach().numpy().astype(int).tolist()

points_coords = points.cpu().detach().numpy().astype(int).reshape(2, 2).tolist()

# Format output

output = f"""{reasoning_text}

<answer>{{

"bbox": {bbox_coords},

"points_1": {points_coords[0]},

"points_2": {points_coords[1]}

}}</answer>"""

samples.append({

'output': output,

'bbox': bbox_coords,

'points': points_coords,

'logits': (bbox, points) # Store for gradient computation

})

return samples

# ============================================================================

# 4. SEGMENTATION MODEL (SAM2 Wrapper)

# ============================================================================

class SegmentationModel(nn.Module):

"""

Segmentation Model based on SAM2

Takes bounding boxes and points, generates pixel-level masks

"""

def __init__(self, config: ModelConfig, frozen: bool = True):

super().__init__()

self.config = config

self.frozen = frozen

# Simplified SAM2-like architecture for demonstration

# In real implementation, use actual SAM2 model

self.prompt_encoder = nn.Sequential(

nn.Linear(8, 256), # 4 for bbox + 4 for points

nn.ReLU(),

nn.Linear(256, 256)

)

self.mask_decoder = nn.Sequential(

nn.Linear(256, 512),

nn.ReLU(),

nn.Linear(512, config.image_size * config.image_size),

nn.Sigmoid()

)

if frozen:

for param in self.parameters():

param.requires_grad = False

def forward(self, bbox: torch.Tensor, points: torch.Tensor) -> torch.Tensor:

"""

Forward pass of segmentation model

Args:

bbox: [B, 4] bounding box coordinates

points: [B, 4] point coordinates

Returns:

mask: [B, 1, H, W] segmentation masks

"""

# Concatenate bbox and points

prompts = torch.cat([bbox, points], dim=-1) # [B, 8]

# Encode prompts

prompt_embed = self.prompt_encoder(prompts) # [B, 256]

# Decode to mask

mask_flat = self.mask_decoder(prompt_embed) # [B, H*W]

# Reshape to image dimensions

mask = mask_flat.view(-1, 1, self.config.image_size, self.config.image_size)

return mask

# ============================================================================

# 5. SEG-ZERO MAIN MODEL

# ============================================================================

class SegZero(nn.Module):

"""

Complete Seg-Zero model combining reasoning and segmentation

"""

def __init__(self, config: ModelConfig):

super().__init__()

self.config = config

self.reasoning_model = ReasoningModel(config)

self.segmentation_model = SegmentationModel(config, frozen=True)

self.reward_calculator = RewardCalculator(config)

def forward(self, image_features: torch.Tensor, instruction_tokens: torch.Tensor) -> Dict[str, Any]:

"""

Forward pass of complete model

"""

# Generate reasoning and predictions

reasoning_text, bbox, points = self.reasoning_model(image_features, instruction_tokens)

# Generate segmentation mask

mask = self.segmentation_model(bbox, points)

return {

'reasoning': reasoning_text,

'bbox': bbox,

'points': points,

'mask': mask

}

def generate_samples_for_rl(self, image_features: torch.Tensor,

instruction_tokens: torch.Tensor,

gt_bbox: torch.Tensor,

gt_points: torch.Tensor,

num_samples: int = 8) -> List[Dict[str, Any]]:

"""

Generate multiple samples for GRPO training

"""

samples = self.reasoning_model.generate_with_sampling(

image_features, instruction_tokens, num_samples

)

# Compute rewards for each sample

for sample in samples:

rewards = self.reward_calculator.compute_all_rewards(

sample['output'],

gt_bbox.cpu().numpy().astype(int).tolist(),

gt_points.cpu().numpy().astype(int).tolist(),

sample['bbox'],

sample['points']

)

sample['rewards'] = rewards

return samples

# ============================================================================

# 6. DATASET AND DATA LOADING

# ============================================================================

class SegmentationDataset(Dataset):

"""

Dataset for reasoning segmentation tasks

"""

def __init__(self, examples: List[SegmentationExample], config: ModelConfig):

self.examples = examples

self.config = config

def __len__(self):

return len(self.examples)

def __getitem__(self, idx) -> Dict[str, Any]:

example = self.examples[idx]

# Extract ground-truth bbox from mask

gt_bbox = self._extract_bbox_from_mask(example.mask)

# Extract center points of two largest inscribed circles

gt_points = self._extract_points_from_mask(example.mask)

# Simple image feature extraction (in real implementation, use proper encoder)

image_features = np.random.randn(2048).astype(np.float32) # Placeholder

# Tokenize instruction (simplified)

instruction_tokens = np.random.randint(0, 10000, 50).astype(np.int64) # Placeholder

return {

'image_features': torch.from_numpy(image_features),

'instruction_tokens': torch.from_numpy(instruction_tokens),

'instruction_text': example.instruction,

'mask': torch.from_numpy(example.mask.astype(np.float32)),

'gt_bbox': torch.from_numpy(np.array(gt_bbox, dtype=np.float32)),

'gt_points': torch.from_numpy(np.array(gt_points, dtype=np.float32)),

'image_id': example.image_id

}

def _extract_bbox_from_mask(self, mask: np.ndarray) -> List[int]:

"""Extract bounding box from binary mask"""

coords = np.where(mask > 0)

if len(coords[0]) == 0:

return [0, 0, 1, 1]

y_min, y_max = coords[0].min(), coords[0].max()

x_min, x_max = coords[1].min(), coords[1].max()

return [int(x_min), int(y_min), int(x_max), int(y_max)]

def _extract_points_from_mask(self, mask: np.ndarray) -> List[List[int]]:

"""Extract two point coordinates from mask (simplified)"""

coords = np.where(mask > 0)

if len(coords[0]) < 2:

return [[0, 0], [1, 1]]

# Get centroid

cy, cx = int(coords[0].mean()), int(coords[1].mean())

# Simple extraction: return centroid twice (in real implementation, use inscribed circles)

return [[cx, cy], [cx + 1, cy + 1]]

# ============================================================================

# 7. GRPO TRAINING

# ============================================================================

class GRPOTrainer:

"""

Trainer using Group Relative Policy Optimization (GRPO) algorithm

"""

def __init__(self, model: SegZero, config: ModelConfig):

self.model = model

self.config = config

# Only optimize reasoning model parameters

self.optimizer = optim.AdamW(

self.model.reasoning_model.parameters(),

lr=config.learning_rate,

weight_decay=config.weight_decay

)

self.device = torch.device(config.device)

self.model.to(self.device)

def compute_advantages(self, rewards: List[Dict[str, float]]) -> torch.Tensor:

"""

Compute advantages for GRPO

Uses group-relative scoring

"""

# Extract total reward for each sample

total_rewards = []

for reward_dict in rewards:

total = (self.config.format_reward_weight *

(reward_dict['thinking_format'] + reward_dict['segmentation_format_strict']) +

self.config.accuracy_reward_weight *

(reward_dict['bbox_iou'] + reward_dict['bbox_l1'] + reward_dict['point_l1']))

total_rewards.append(total)

total_rewards = np.array(total_rewards)

# Compute advantages (normalized)

mean_reward = total_rewards.mean()

std_reward = total_rewards.std() + 1e-8

advantages = (total_rewards - mean_reward) / std_reward

return torch.from_numpy(advantages).float().to(self.device)

def train_step(self, batch: Dict[str, torch.Tensor]) -> Dict[str, float]:

"""

Single training step

"""

batch_size = batch['image_features'].shape[0]

# Move to device

for key in batch:

if isinstance(batch[key], torch.Tensor):

batch[key] = batch[key].to(self.device)

# Generate samples

samples = self.model.generate_samples_for_rl(

batch['image_features'],

batch['instruction_tokens'],

batch['gt_bbox'],

batch['gt_points'],

num_samples=self.config.sampling_num

)

# Compute advantages

rewards_list = [s['rewards'] for s in samples]

advantages = self.compute_advantages(rewards_list)

# Compute policy loss

policy_loss = 0.0

for i, sample in enumerate(samples):

# In real implementation, compute log probability and apply advantage

reward_signal = advantages[i].item()

# Simple loss: minimize negative reward (equivalent to gradient ascent)

if reward_signal > 0:

policy_loss += -reward_signal * 0.1 # Scaled for stability

policy_loss = policy_loss / len(samples)

# KL penalty (distance from reference policy)

kl_loss = self._compute_kl_penalty()

# Total loss

total_loss = policy_loss + self.config.kl_coeff * kl_loss

# Backward pass

self.optimizer.zero_grad()

# Note: In real implementation with proper gradient computation

# we would backprop through the generated sequences

if total_loss.requires_grad:

total_loss.backward()

torch.nn.utils.clip_grad_norm_(self.model.reasoning_model.parameters(), 1.0)

self.optimizer.step()

# Compute metrics

mean_reward = np.array([r['bbox_iou'] + r['bbox_l1'] + r['point_l1']

for r in rewards_list]).mean()

return {

'policy_loss': policy_loss.item() if isinstance(policy_loss, torch.Tensor) else policy_loss,

'kl_loss': kl_loss.item() if isinstance(kl_loss, torch.Tensor) else kl_loss,

'total_loss': total_loss.item() if isinstance(total_loss, torch.Tensor) else total_loss,

'mean_reward': float(mean_reward),

'format_reward': float(np.array([r['thinking_format'] + r['segmentation_format_strict']

for r in rewards_list]).mean())

}

def _compute_kl_penalty(self) -> torch.Tensor:

"""

Compute KL divergence penalty between current and reference policy

In simplified version, return scalar

"""

# Placeholder implementation

return torch.tensor(0.1, device=self.device)

def train_epoch(self, train_loader: DataLoader) -> Dict[str, float]:

"""

Train for one epoch

"""

self.model.train()

epoch_metrics = {

'policy_loss': [],

'kl_loss': [],

'total_loss': [],

'mean_reward': [],

'format_reward': []

}

for batch_idx, batch in enumerate(train_loader):

metrics = self.train_step(batch)

for key in metrics:

epoch_metrics[key].append(metrics[key])

if (batch_idx + 1) % 10 == 0:

logger.info(f"Batch {batch_idx + 1}: Loss={metrics['total_loss']:.4f}, "

f"Reward={metrics['mean_reward']:.4f}")

# Average metrics

avg_metrics = {key: np.mean(values) for key, values in epoch_metrics.items()}

return avg_metrics

# ============================================================================

# 8. INFERENCE PIPELINE

# ============================================================================

class SegZeroInference:

"""

Inference pipeline for Seg-Zero

"""

def __init__(self, model: SegZero, config: ModelConfig):

self.model = model

self.config = config

self.device = torch.device(config.device)

self.model.to(self.device)

self.model.eval()

@torch.no_grad()

def segment(self, image_features: np.ndarray, instruction: str) -> Dict[str, Any]:

"""

Perform segmentation given image and instruction

Args:

image_features: [2048] image features

instruction: Natural language instruction

Returns:

Dictionary with reasoning, bbox, points, and mask

"""

# Convert to tensors

image_features = torch.from_numpy(image_features.astype(np.float32)).unsqueeze(0)

# Simple instruction tokenization (placeholder)

instruction_tokens = np.random.randint(0, 10000, 50).astype(np.int64)

instruction_tokens = torch.from_numpy(instruction_tokens).unsqueeze(0)

# Move to device

image_features = image_features.to(self.device)

instruction_tokens = instruction_tokens.to(self.device)

# Forward pass

output = self.model(image_features, instruction_tokens)

# Convert to numpy

bbox = output['bbox'].cpu().numpy()[0].astype(int)

points = output['points'].cpu().numpy()[0].astype(int)

mask = output['mask'].cpu().numpy()[0]

# Format output

result = {

'reasoning': output['reasoning'],

'bbox': bbox.tolist(),

'points': points.reshape(2, 2).tolist(),

'mask': mask,

'instruction': instruction

}

return result

def batch_segment(self, batch_image_features: np.ndarray,

instructions: List[str]) -> List[Dict[str, Any]]:

"""

Segment multiple images

"""

results = []

for img_feat, instr in zip(batch_image_features, instructions):

result = self.segment(img_feat, instr)

results.append(result)

return results

# ============================================================================

# 9. MAIN TRAINING AND EVALUATION SCRIPT

# ============================================================================

def create_dummy_dataset(num_samples: int = 100, config: ModelConfig = None) -> List[SegmentationExample]:

"""

Create dummy dataset for demonstration

In real usage, load actual segmentation dataset

"""

if config is None:

config = ModelConfig()

examples = []

for i in range(num_samples):

# Create random mask

mask = np.random.rand(config.image_size, config.image_size) > 0.7

mask = mask.astype(np.float32)

# Random instruction

instructions = [

"Find the person wearing a red shirt",

"Locate the dog running in the grass",

"Identify the blue car",

"Find the older man in a brown coat",

"Locate the recreational vehicle suitable for road trips"

]

instruction = instructions[i % len(instructions)]

examples.append(SegmentationExample(

image_path=f"dummy_image_{i}.jpg",

instruction=instruction,

mask=mask,

image_id=f"image_{i}"

))

return examples

def main():

"""

Main training script

"""

# Configuration

config = ModelConfig()

logger.info(f"Config: {config}")

# Create model

model = SegZero(config)

logger.info("Created Seg-Zero model")

# Create dataset

examples = create_dummy_dataset(num_samples=100, config=config)

dataset = SegmentationDataset(examples, config)

train_loader = DataLoader(dataset, batch_size=config.batch_size, shuffle=True)

logger.info(f"Created dataset with {len(dataset)} samples")

# Create trainer

trainer = GRPOTrainer(model, config)

logger.info("Created GRPO trainer")

# Training loop

num_epochs = config.num_epochs

for epoch in range(num_epochs):

logger.info(f"\n{'='*60}")

logger.info(f"Epoch {epoch + 1}/{num_epochs}")

logger.info(f"{'='*60}")

epoch_metrics = trainer.train_epoch(train_loader)

logger.info(f"Epoch {epoch + 1} Results:")

for metric_name, metric_value in epoch_metrics.items():

logger.info(f" {metric_name}: {metric_value:.6f}")

# Inference example

logger.info(f"\n{'='*60}")

logger.info("Inference Example")

logger.info(f"{'='*60}")

inference_engine = SegZeroInference(model, config)

# Dummy image features

test_image = np.random.randn(2048)

test_instruction = "Find the older man in a brown coat wearing a santa hat"

result = inference_engine.segment(test_image, test_instruction)

logger.info(f"Instruction: {result['instruction']}")

logger.info(f"Reasoning: {result['reasoning']}")

logger.info(f"Predicted bbox: {result['bbox']}")

logger.info(f"Predicted points: {result['points']}")

logger.info(f"Mask shape: {result['mask'].shape}")

logger.info("\nTraining completed successfully!")

if __name__ == "__main__":

main()Related posts, You May like to read

- 7 Shocking Truths About Knowledge Distillation: The Good, The Bad, and The Breakthrough (SAKD)

- MOSEv2: The Game-Changing Video Object Segmentation Dataset for Real-World AI Applications

- MedDINOv3: Revolutionizing Medical Image Segmentation with Adaptable Vision Foundation Models

- HiPerformer: A New Benchmark in Medical Image Segmentation with Modular Hierarchical Fusion

- GeoSAM2 3D Part Segmentation — Prompt-Controllable, Geometry-Aware Masks for Precision 3D Editing

- SegTrans: The Breakthrough Framework That Makes AI Segmentation Models Vulnerable to Transfer Attacks

- Universal Text-Driven Medical Image Segmentation: How MedCLIP-SAMv2 Revolutionizes Diagnostic AI

- Towards Trustworthy Breast Tumor Segmentation in Ultrasound Using AI Uncertainty

- DVIS++: The Game-Changing Decoupled Framework Revolutionizing Universal Video Segmentation