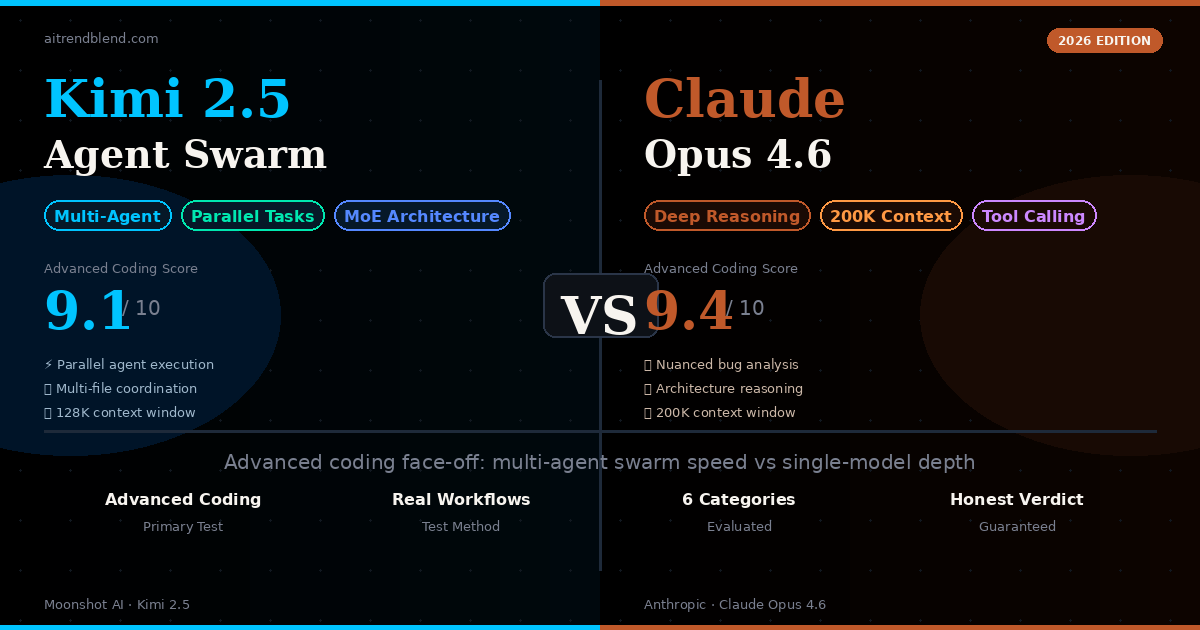

Kimi 2.5 Agent Swarm vs Claude Opus 4.6: Which AI Wins for Advanced Coding?

The AI coding space in 2026 has split into two camps. One camp believes that complex coding problems are best solved through parallelism — break the task into subtasks, run multiple specialized agents simultaneously, and synthesize the results. The other camp argues that truly difficult coding problems require deep, coherent reasoning that a single model handles better than a fragmented committee.

Kimi 2.5 from Moonshot AI is the most prominent representative of the first camp. Its agent swarm architecture can spin up multiple agents to work on different files, modules, or problem dimensions in parallel, coordinating through a shared memory layer to avoid conflicts. Claude Opus 4.6 from Anthropic represents the second camp. Its 200,000-token context window lets it hold a small or medium-sized codebase in view at once and reason about it with the coherence that comes from a single model’s undivided attention.

Both are genuinely excellent at advanced coding. The question isn’t “which one is good” but “which one is right for your situation.” As you’ll see, the answer depends heavily on the type of task, your workflow, and whether you need output you can trust immediately or output you can get quickly.

Understanding the Two Architectures

Before getting into test results, it’s worth spending a minute on why these two models are so architecturally different — because the architecture explains a lot of the behavior you’ll observe when you use them on hard coding problems.

Kimi 2.5’s Agent Swarm Approach

Kimi 2.5 is built around a mixture-of-experts (MoE) backbone combined with a multi-agent orchestration layer. When you give it a complex coding task, the system doesn’t just process it linearly — it can decompose the problem and assign subtasks to specialized agents that work in parallel. Think of it as having a small engineering team where each engineer focuses on their domain simultaneously: one agent handles the database layer, another works on the API surface, a third reviews the tests, and a coordinator synthesizes everything back together.
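The decompose, dispatch, and synthesize flow described above is a classic fan-out/fan-in pattern. The sketch below illustrates that pattern with Python’s standard `concurrent.futures`. It is not Moonshot’s orchestration API, and `run_agent` is a hypothetical stand-in for a real model call.

```python
from concurrent.futures import ThreadPoolExecutor


def run_agent(subtask: str) -> str:
    """Hypothetical stand-in for a specialized agent; a real one would call a model."""
    return f"[{subtask}] done"


def coordinate(subtasks: list[str], max_agents: int = 4) -> str:
    """Fan independent subtasks out to parallel workers, then fan the results back in."""
    with ThreadPoolExecutor(max_workers=max_agents) as pool:
        # map() yields results in submission order, so synthesis is deterministic
        results = list(pool.map(run_agent, subtasks))
    # The coordinator's synthesis step: here, simply joining the partial results
    return "\n".join(results)
```

For example, `coordinate(["database layer", "API surface", "test review"])` runs the three subtasks concurrently and returns their results in the original order. The speedup only appears when the subtasks are genuinely independent. If one subtask needs another’s output, the work has to be sequenced again.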

This approach has a real speed advantage on tasks that decompose cleanly. Refactoring a large codebase across multiple files, implementing a feature across several layers of a stack, or adding comprehensive test coverage to an existing project — these are tasks where working in parallel cuts total time significantly.

Claude Opus 4.6’s Deep Reasoning Approach

Claude Opus 4.6 takes the opposite architectural bet. Rather than splitting attention across multiple agents, it concentrates reasoning power in a single model with a 200,000-token context window. If your codebase fits in that window, you can paste in all of it and have Claude hold every function, every dependency, and every architectural decision in view while it reasons about a problem that touches all of them.
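Whether a codebase actually fits in the window depends on its size in tokens. Below is a rough pre-check. It assumes the common rule of thumb of about four characters per token, but real tokenizers vary by language and content, so treat the result as an estimate. `estimate_tokens` and `fits_in_context` are illustrative names.

```python
from pathlib import Path

CHARS_PER_TOKEN = 4         # rough heuristic (assumption); actual tokenizers differ
CONTEXT_WINDOW = 200_000    # Claude Opus 4.6 window as stated in this article


def estimate_tokens(root: str, suffixes: tuple[str, ...] = (".py", ".ts", ".js")) -> int:
    """Approximate the token count of all source files under `root` with matching suffixes."""
    total_chars = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total_chars += len(path.read_text(encoding="utf-8", errors="ignore"))
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(root: str, reserve: int = 20_000) -> bool:
    """Check the estimate against the window, keeping `reserve` tokens for prompt and answer."""
    return estimate_tokens(root) <= CONTEXT_WINDOW - reserve
```

At four characters per token, 200,000 tokens is roughly 800,000 characters of source. At an assumed 40 characters per line, that comes to about 20,000 lines. This covers many small and medium projects, but not most large monorepos.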

The coherence advantage is real. When a bug involves three layers of abstraction interacting unexpectedly, or when the right architectural decision depends on understanding constraints that are scattered across dozens of files, a single model reasoning over all of it together tends to produce fewer coordination errors than multiple agents passing partial context between them.


“Kimi thinks like a well-coordinated team. Claude thinks like a very experienced senior engineer who has read every line of your code twice and remembers all of it.” — aitrendblend.com editorial observation, March 2026

Head-to-Head: 6 Advanced Coding Rounds

I ran both tools through six representative advanced coding scenarios: the kind of tasks that trip up weaker models and reveal real architectural differences. Each round below covers how each tool handled the task and where each came out ahead.

Round 1: Multi-File Refactoring Across 15 Route Files

This was Kimi’s strongest showing of the entire test. The task is exactly what the agent swarm architecture was built for: the same transformation applied independently across many files, with no complex cross-file reasoning required. Kimi dispatched agents to work on different route files in parallel, completed the refactoring significantly faster than Claude’s sequential approach, and produced clean, consistent output across all 15 files.

Claude handled this well too. Its output was slightly more idiomatic, and it stayed consistent in a few edge cases at file boundaries where Kimi’s agents had applied the transformation unevenly. But the speed difference was large enough that, for this class of task, Kimi is the clear practical choice.

Kimi 2.5 pros:

- Significantly faster on multi-file tasks

- Parallel agents work independently on unrelated files

- Consistent transformation quality across all files

Kimi 2.5 cons:

- Minor inconsistencies at file boundaries

- Less awareness of project-wide patterns vs. Claude

Round 2: Designing an Event-Driven Checkout Architecture

Claude won this round, and the margin was not small. Its architectural reasoning here was genuinely impressive. It presented a full event-sourcing design with CQRS patterns, thought carefully about consistency guarantees, and proactively flagged the eventual-consistency trade-offs that would affect the checkout flow specifically. It also included concrete decisions about event schema versioning, dead-letter queue handling, and idempotency-key strategies, which are the details a senior architect would think to include.

Kimi’s output was good but noticeably more surface-level. It produced the right overall architecture but was thinner on the hard edge cases — the “what happens when the payment service event arrives out of order” type of questions that make or break a real system. For pure architectural design work, Claude’s depth of reasoning is a meaningful advantage.
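The out-of-order question above has a standard answer: per-aggregate sequence numbers for ordering, plus idempotency keys for deduplication. Below is a minimal sketch of that combination. It assumes a hypothetical `Event` shape with `order_id`, `seq`, and `idempotency_key` fields, and it is not either model’s actual output.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Event:
    order_id: str
    seq: int               # per-order sequence number assigned by the producer (assumed)
    idempotency_key: str   # unique per logical event; reused if the event is redelivered
    kind: str


@dataclass
class OrderProjector:
    """Applies each order's events exactly once and strictly in sequence order."""
    applied: dict[str, list[str]] = field(default_factory=dict)
    next_seq: dict[str, int] = field(default_factory=dict)
    seen_keys: set[str] = field(default_factory=set)
    pending: dict[str, dict[int, Event]] = field(default_factory=dict)

    def handle(self, event: Event) -> None:
        if event.idempotency_key in self.seen_keys:
            return  # duplicate delivery: already applied or already buffered
        if event.seq < self.next_seq.get(event.order_id, 1):
            return  # stale event for a position that has already been applied
        self.seen_keys.add(event.idempotency_key)
        self.pending.setdefault(event.order_id, {})[event.seq] = event
        self._drain(event.order_id)

    def _drain(self, order_id: str) -> None:
        # Apply buffered events only while the next expected sequence number is present
        buffer = self.pending[order_id]
        expected = self.next_seq.get(order_id, 1)
        while expected in buffer:
            self.applied.setdefault(order_id, []).append(buffer.pop(expected).kind)
            expected += 1
        self.next_seq[order_id] = expected
```

An event that arrives early waits in `pending` until the gap before it is filled, and a redelivered event is dropped by its key. In a real system, the seen-key set would live in durable storage with a retention window. Events whose gaps never fill would eventually be routed to a dead-letter queue.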

Claude Opus 4.6 pros:

- Proactively identifies edge cases and failure modes

- Reasons about trade-offs across the whole system

- Produces production-grade design decisions, not just diagrams

Claude Opus 4.6 cons:

- Takes longer to produce the output

- Can over-explain reasoning when you just want the answer

Round 3: Debugging a Cross-File Race Condition

Race conditions are among the hardest bugs to find because the evidence is always indirect. You’re reasoning about timing, shared state, and execution order across multiple code paths that fail only under specific load conditions. This is exactly the kind of task where Claude’s single-model coherence shows its value.

Claude identified the root cause correctly and completely. Two mutexes were acquired in one order in the payment processor and in the opposite order in the webhook handler. Under concurrent load, each path could end up holding one lock while waiting for the other, which is a classic lock-ordering deadlock. Claude also identified two related timing issues that weren’t part of the original bug report. Kimi found the same root cause but missed the related issues. The likely reason is that the agent analyzing the webhook handler didn’t have full visibility into what the payment-processor agent had found.
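This class of bug is easy to reproduce in miniature. The hypothetical sketch below shows the standard fix: every code path acquires shared locks in one fixed global order. That way, no thread can hold one lock while waiting for a lock that another thread acquired in the opposite order. The lock names and handler functions are illustrative and are not taken from the tested codebase.

```python
import threading
from contextlib import ExitStack, contextmanager

# Two shared resources guarded by separate locks (hypothetical names)
order_lock = threading.Lock()
ledger_lock = threading.Lock()

# The fix: one global rank per lock that every code path respects
LOCK_RANK = {id(order_lock): 0, id(ledger_lock): 1}


@contextmanager
def acquire_in_order(*locks):
    """Acquire locks by LOCK_RANK regardless of argument order; ExitStack releases in reverse."""
    with ExitStack() as stack:
        for lock in sorted(locks, key=lambda lk: LOCK_RANK[id(lk)]):
            stack.enter_context(lock)
        yield


def payment_processor(log: list) -> None:
    with acquire_in_order(order_lock, ledger_lock):
        log.append("payment")


def webhook_handler(log: list) -> None:
    # The buggy version took ledger_lock first; acquire_in_order normalizes the order
    with acquire_in_order(ledger_lock, order_lock):
        log.append("webhook")
```

With this helper, both handlers can run from many threads at once and still complete cleanly. If each function acquired the locks in its own order instead, two threads could end up waiting on each other forever.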

The coordination overhead between agents is Kimi’s real constraint on this class of problem. When finding the bug requires reasoning about how two different files interact under concurrent execution, the agent that knows about file A doesn’t automatically have the full picture of what the agent that knows about file B found.

Round 4: Adding Types, Tests, and Docs to 20 Functions

This round produced the biggest gap in the entire test, and it is the strongest argument for Kimi’s architecture. The task involves 20 independent functions, and each one needs three kinds of additions: TypeScript types, unit tests, and JSDoc documentation. No cross-function reasoning is required, because each function is a self-contained unit of work.

Kimi handled this nearly twice as fast as Claude, deploying multiple agents to work on different functions simultaneously. The quality was consistent and high throughout — the TypeScript types were accurate, the unit tests covered meaningful edge cases, and the JSDoc was clear and comprehensive.

Claude worked sequentially through the functions. The output quality was marginally higher — slightly more thoughtful edge case coverage in the tests — but not enough to justify the time difference on a task this decomposable. For any high-volume, independent-unit coding work, Kimi’s agent swarm is simply the faster and more practical choice.

Kimi 2.5 pros:

- Speed scales with the number of parallel agents (nearly twice as fast as Claude here)

- Ideal for boilerplate-heavy additions across many functions

- Consistent output quality at volume

Kimi 2.5 cons:

- Marginally less nuanced test edge cases vs. Claude

- Worth it at scale; debatable for 3–5 functions

Round 5: Explaining a Complex Legacy Module

Understanding a complex legacy module requires following the thread of logic through the entire file. You need to see how early initialisation decisions constrain what happens five hundred lines later, why a seemingly arbitrary constant has the value it does, and what the author was protecting against with an unusual error-handling structure.

Claude’s explanation was notably more cohesive. It traced the payment state machine from initialisation through all its transitions, explained the historical context for some unusual patterns (correctly inferring they were likely PCI compliance adaptations), and produced a clear mental model for the whole module. Kimi’s explanation covered the same content but felt more like a section-by-section summary than a unified understanding — a reflection of how different agents had processed different parts of the file.

For code that a new developer needs to deeply understand before touching, Claude’s explanation gives you more of what you need — a coherent narrative, not a series of independently produced paragraph summaries.

Round 6: Integrating Stripe, Twilio, and SendGrid

This was the closest round. Both models produced clean, production-quality integrations for all three APIs. Kimi had a slight edge on execution speed because it handled the three integrations partly in parallel, treating Stripe, Twilio, and SendGrid as independent concerns. The implementation quality was effectively equal.

Claude’s edge showed up in the webhook handling section — it wrote more defensive code around Stripe webhook signature verification and produced better error handling for edge cases like Twilio delivery failures during order confirmation. Whether that depth is worth the speed trade-off depends on your situation. For a critical payment integration, I’d lean toward Claude’s more cautious approach. For adding a simple notification integration, Kimi’s speed advantage is the practical winner.
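Some context on what “defensive” means for the webhook check. Stripe signs each webhook by computing an HMAC-SHA256 over the string `timestamp + "." + raw_body`. It sends the result in the `Stripe-Signature` header as `t=<timestamp>,v1=<signature>`. The stdlib-only sketch below shows the checks involved: signature match, constant-time comparison, and a freshness window. In production you would normally call the official SDK’s `stripe.Webhook.construct_event` instead. The 300-second tolerance here mirrors that helper’s default, and `verify_stripe_signature` is an illustrative name.

```python
import hashlib
import hmac
import time
from typing import Optional


def verify_stripe_signature(payload: bytes, header: str, secret: str,
                            tolerance: int = 300, now: Optional[float] = None) -> bool:
    """Return True only if `header` carries a valid, fresh v1 signature for `payload`."""
    timestamp, signatures = None, []
    for part in header.split(","):
        key, _, value = part.strip().partition("=")
        if key == "t":
            timestamp = value
        elif key == "v1":
            signatures.append(value)  # the header can carry more than one v1 signature
    if timestamp is None or not timestamp.isdigit() or not signatures:
        return False  # malformed header: reject rather than guess

    # Reject events outside the tolerance window to limit replay attacks
    current = time.time() if now is None else now
    if abs(current - int(timestamp)) > tolerance:
        return False

    signed_payload = timestamp.encode() + b"." + payload
    expected = hmac.new(secret.encode(), signed_payload, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched
    return any(hmac.compare_digest(expected, sig) for sig in signatures)
```

Note that the check must run against the raw request body, before any JSON parsing. Re-serialized JSON will not reproduce the signed bytes.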

The Full Comparison: Specs and Capabilities

| CATEGORY | KIMI 2.5 AGENT SWARM | CLAUDE OPUS 4.6 | EDGE |
|---|---|---|---|
| Architecture | MoE + multi-agent swarm | Single model, dense reasoning | Different, not better/worse |
| Context Window | 128K tokens | 200K tokens | Claude (+56%) |
| Multi-file Speed | Very fast (parallel) | Moderate (sequential) | Kimi |
| Architectural Reasoning | Good | Exceptional | Claude |
| Cross-file Bug Detection | Good | Excellent | Claude |
| High-volume Independent Tasks | Excellent | Good | Kimi |
| Code Explanation Quality | Good | Excellent (more cohesive) | Claude |
| Tool / API Integration | Excellent (fast) | Excellent (deep) | Draw |
| Output Consistency at Scale | Very good | Excellent | Claude (slight) |
| Pricing | Competitive, lower per-task for parallel work | Premium, higher per-call | Kimi (for parallel tasks) |

Where Kimi 2.5 Has a Genuine Edge

The cases where Kimi’s agent swarm approach isn’t just competitive but actually the smarter choice are clearer than I expected going into the test.

Large-scale mechanical transformations. If you need to migrate 50 files from CommonJS to ES modules, convert a Python 2 codebase to Python 3, or add TypeScript types across an entire untyped project, Kimi’s parallel execution is a genuine productivity advantage. These tasks are inherently parallelisable — the transformation on file A has nothing to do with the transformation on file B — and Kimi capitalises on that.
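To see why this class of task parallelises so well, here is a deliberately naive sketch of a per-file transformation that rewrites simple CommonJS `require` calls as ES module imports. It is an illustration, not a real migration tool: it handles only the `const x = require('y')` form. Because each file’s output depends only on that file’s input, the work can be fanned out to any number of workers.

```python
import re
from concurrent.futures import ThreadPoolExecutor

# Matches only the simplest form on its own line: const name = require('module');
REQUIRE_RE = re.compile(
    r"""^const\s+(\w+)\s*=\s*require\((['"])([^'"]+)\2\);?[ \t]*$""", re.MULTILINE
)


def cjs_to_esm(source: str) -> str:
    """Rewrite `const x = require('y')` lines as `import x from 'y';`, keeping the quote style."""
    return REQUIRE_RE.sub(
        lambda m: f"import {m.group(1)} from {m.group(2)}{m.group(3)}{m.group(2)};", source
    )


def migrate_all(files: dict[str, str], workers: int = 8) -> dict[str, str]:
    """Transform each file independently; no file's result depends on another's."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        converted = pool.map(cjs_to_esm, files.values())
    return dict(zip(files.keys(), converted))
```

Real migrations also have to handle `module.exports`, destructured requires, and default-export interop, and this sketch ignores all three. Anything that crosses files, such as the shape of a module’s exports, is exactly where per-file parallelism needs a shared view.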

Adding boilerplate across many independent units. Test coverage, JSDoc, error handling patterns, logging — anything that needs to be added to many functions or classes that don’t depend on each other. The agent swarm handles these at a speed that sequential processing simply can’t match.

Multi-layer feature implementation. When you’re adding a feature that touches the database layer, service layer, API layer, and frontend independently, Kimi can assign agents to each layer simultaneously. As long as you’ve defined the interfaces between layers upfront, this works very well in practice.
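Defining the interfaces upfront can be as lightweight as a typed contract that each layer codes against. Below is a hypothetical sketch using Python’s `typing.Protocol`. The names `Order`, `OrderRepository`, `InMemoryOrderRepository`, and `OrderService` are illustrative and do not come from the test project.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Order:
    order_id: str
    total_cents: int


class OrderRepository(Protocol):
    """Contract agreed before work is split: the data layer must provide these methods."""
    def get(self, order_id: str) -> Optional[Order]: ...
    def save(self, order: Order) -> None: ...


class InMemoryOrderRepository:
    """One agent can build the data layer against the contract..."""
    def __init__(self) -> None:
        self._orders: dict[str, Order] = {}

    def get(self, order_id: str) -> Optional[Order]:
        return self._orders.get(order_id)

    def save(self, order: Order) -> None:
        self._orders[order.order_id] = order


class OrderService:
    """...while another builds the service layer against the same contract, in parallel."""
    def __init__(self, repo: OrderRepository) -> None:
        self.repo = repo

    def place(self, order_id: str, total_cents: int) -> Order:
        if total_cents <= 0:
            raise ValueError("total must be positive")
        order = Order(order_id, total_cents)
        self.repo.save(order)
        return order
```

The service depends only on the Protocol, so its author never needs to see the repository’s implementation. That separation is what lets each layer’s agent work without the others’ context.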

Where Claude Opus 4.6 Has a Genuine Edge

Claude’s advantages are concentrated in the hardest categories of coding work — the problems that require genuine intelligence rather than speed, and the problems where getting it wrong has real costs.

Debugging complex cross-file issues. Race conditions, subtle state management bugs, unexpected interactions between services — anything where the bug lives in the interaction between components rather than within a single component. Claude’s ability to hold the whole system in mind while reasoning about a specific failure is hard to replicate with a distributed approach.

Architecture and design decisions. When you’re deciding how to structure a system, the quality of the decision depends on thinking about the whole problem space — not just the immediate requirements but the ways the system will need to evolve, the failure modes that matter, and the trade-offs that depend on each other. Claude’s depth of reasoning produces architecture advice that holds up better under scrutiny.

Security-sensitive code. Authentication systems, payment flows, data validation, permission checks — the code where subtle bugs have real consequences. Claude’s more careful, nuanced output is worth the extra time here. You don’t want your authorization middleware to be written by an agent that didn’t quite have the full picture of your permission model.

What Neither Tool Gets Right

A comparison like this would be incomplete without an honest look at where both models still fall short. Advanced coding has categories that challenge both.

Long-running test execution and real environment feedback. Both models reason well about what tests should do. But unless they are wired into an agentic setup with shell access, neither can run those tests and reason about the failure output the way a developer with a terminal open does. They produce better test coverage than most developers write manually, but without that setup they can’t close the loop between writing a test and seeing it fail.

Domain-specific performance optimisation. Optimising a database query for a specific query planner, tuning JVM garbage collection for a particular workload pattern, profiling GPU kernel efficiency — these require empirical feedback from a real runtime that neither model has access to. They can reason about performance from first principles well, but they’re reasoning in the abstract.

Project-specific tribal knowledge. Neither model knows why your team made the architectural decision that looks strange to an outsider. Both models are good at inferring context from code — Claude particularly so — but the decisions that came from a meeting three years ago where someone said “we tried X and it caused Y” aren’t recoverable from the codebase alone. Always review AI-generated changes with that in mind.

Which One Should You Use?

The most practical framing: treat these as different tools in the same toolkit, not competitors where one wins permanently. The developers getting the best results in 2026 are the ones who have a clear mental model of when to reach for each one.

When to choose Kimi 2.5:

- You have many files needing the same transformation

- Adding tests, types, or docs to a large existing codebase

- Implementing a feature across independent layers

- Speed matters more than depth on the specific task

- The work is well-defined and doesn’t require ambiguity resolution

- High-volume code generation (API routes, CRUD operations)

When to choose Claude Opus 4.6:

- Debugging cross-file race conditions or subtle state bugs

- Designing system architecture from scratch

- Writing security-critical authentication or payment code

- Understanding a complex legacy codebase

- The problem has multiple valid approaches and you need the right one

- Code quality matters more than delivery speed

The two-tool workflow that works best in practice: use Kimi for the high-volume, well-defined coding work that makes up the majority of a typical sprint — adding tests, types, docs, migrations, boilerplate. Use Claude for the hard problems — the bugs that have been open for three weeks, the architecture decisions that will affect the codebase for three years, the code that absolutely cannot have subtle errors.
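The rule of thumb above can be written down as a simple routing function. This is a toy encoding of this article’s checklist, not a feature of either product, and the `Task` fields are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    independent_units: int        # pieces that can be done without seeing each other
    cross_file_reasoning: bool    # does correctness depend on interactions between files?
    security_critical: bool       # auth, payments, permission checks
    well_defined: bool            # no ambiguity left to resolve


def choose_tool(task: Task) -> str:
    """Route depth-sensitive work to Claude and highly decomposable work to Kimi."""
    if task.security_critical or task.cross_file_reasoning or not task.well_defined:
        return "Claude Opus 4.6"
    if task.independent_units > 5:
        # The article finds the swarm's speed gain debatable for 3 to 5 units
        return "Kimi 2.5 Agent Swarm"
    return "either"
```

The ordering of the checks is the point. Any depth-sensitive signal outweighs how parallelisable the task looks, which matches the article’s advice to reserve the hard, high-stakes problems for Claude.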

The Bigger Picture: Where AI Coding Is Going

The Kimi vs. Claude comparison is a microcosm of a bigger debate in AI development: does intelligence scale through parallelism or through depth? The honest answer, as these results suggest, is that both approaches have genuine merit — and the right answer depends on the nature of the problem.

What’s striking about 2026 is how capable both of these models are for tasks that would have required significant senior developer time a few years ago. Kimi producing correct, consistent TypeScript types for 20 functions in parallel isn’t just fast — the quality is genuinely good. Claude identifying a race condition across 2,000 lines of code isn’t just impressive — the explanation of why it’s a race condition, and the suggested fix, reflects a real understanding of concurrent execution models.

The remaining gap — and it is still a gap — is in tasks that require environmental feedback, accumulated project context, and the kind of judgment that comes from having seen a system fail in production and understanding exactly why. AI coding tools are accelerators, not replacements. The best engineers in 2026 are the ones who have internalised that distinction and built their workflows around it.

Expect Kimi’s agent swarm approach to get better at cross-agent context sharing over the next 12 months — that’s the most obvious remaining limitation and it’s actively being worked on. Expect Claude’s context window to continue expanding, and its reasoning on truly ambiguous architectural problems to keep improving. The gap between the two will narrow. But the fundamental architectural difference — swarm vs. depth — will likely persist because both approaches have inherent advantages that can’t be fully replicated by the other.

Try Both and See the Difference

The best way to understand which approach fits your workflow is to run both on a real task from your current project. Give them the same prompt and compare.