5 Shocking Secrets of Skin Cancer Detection: How This SSD-KD AI Method Beats the Competition (And Why Others Fail)

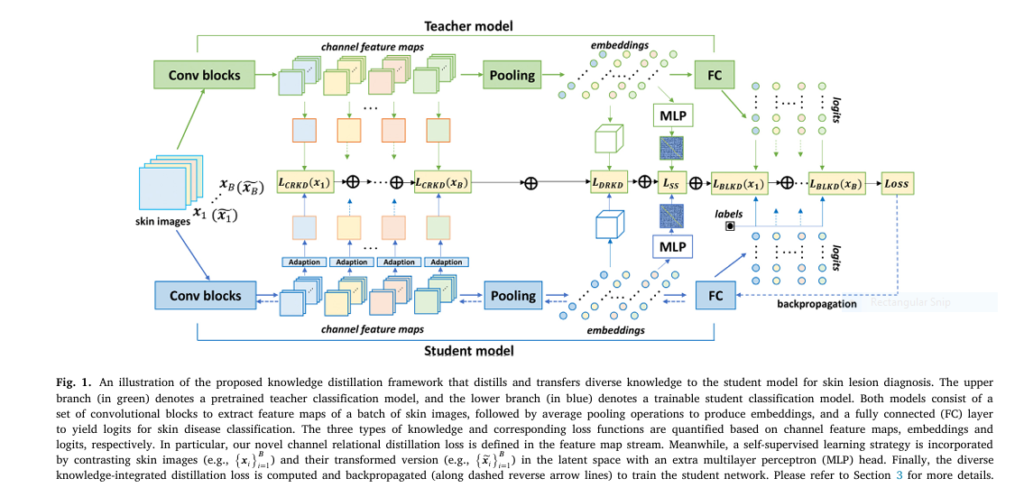

The Hidden Crisis in AI Skin Cancer Diagnosis: A 7% Accuracy Gap That Could Cost Lives

Every year, millions of people face the terrifying reality of skin cancer. With over 5 million cases diagnosed annually in the U.S. alone, early detection isn't just important: it saves lives. Artificial intelligence (AI) promised a revolution in dermatology, offering dermatologist-level […]