ChatGPT 5 vs Kimi K2.5: Smarter, Cheaper, Faster? — The 2026 AI Agent Comparison Nobody Else Did Honestly

Two AI agents walk into your workday in 2026. One — ChatGPT 5 (now at version GPT-5.4) — can literally move your mouse, click through applications, and execute work inside your operating system. The other — Kimi K2.5, Moonshot AI’s open-source 1-trillion-parameter model — can deploy 100 parallel sub-agents simultaneously to attack a problem from every angle at once. The question isn’t which one is “better.” The question is: smarter at what? Cheaper for whom? Faster on which tasks? This article gives you real answers without the hype.

Why This Comparison Actually Matters in 2026

The AI agent landscape crossed a meaningful threshold in early 2026. Both ChatGPT 5 and Kimi K2.5 are no longer just language models that answer questions — they are systems that execute. The distinction sounds subtle, but it changes everything about how you work with them. When an AI can plan, act, check its own results, and iterate without you holding its hand, you stop thinking about “prompts” and start thinking about “delegating.”

GPT-5 landed in August 2025, and by March 2026, OpenAI had pushed it to version GPT-5.4 — adding native computer use, improved tool orchestration, and what they call “compaction” for long agent sessions. Kimi K2.5 arrived in January 2026 from Beijing-based Moonshot AI with a genuinely different design philosophy: a sparse 1-trillion-parameter mixture-of-experts architecture, trained on 15 trillion tokens, with a novel Parallel Agent Reinforcement Learning (PARL) system powering an Agent Swarm mode that nothing else in the market currently replicates.

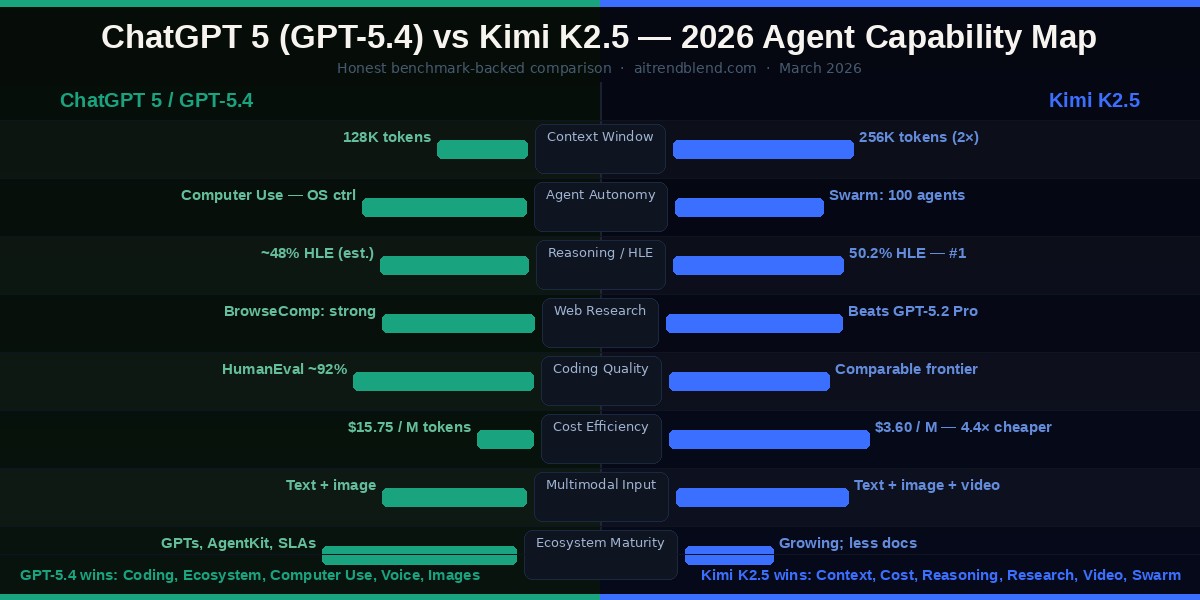

These two tools are not variations on the same theme. They’re built differently, priced dramatically differently ($3.60 vs $15.75 per million tokens), and they solve different hard problems. Let’s go through each one before putting them side by side.

ChatGPT 5 (GPT-5.4) — The Agent That Works Inside Your Computer

⚡ ChatGPT 5 / GPT-5.4 — Full Agent Spec (March 2026)

- Context window: 128K tokens — approximately 192 A4 pages

- Computer Use: Native screen interpretation + mouse and keyboard control — automates workflows across any desktop app

- GDPval benchmark: 83% — matches or exceeds human professionals across 44 occupations

- AgentKit: Full developer toolkit for building, deploying, and optimizing custom agents visually

- Tool Search: Handles large tool inventories without context overflow

- Compaction: Maintains long agent trajectories without degradation — critical for multi-day tasks

- Custom GPTs: Mature ecosystem of shareable specialized agents with their own tools + knowledge

- DALL·E 3: Native image generation, interpretation, and editing

- Advanced Voice Mode: Real-time voice with near-human latency

- Memory: Persistent cross-session memory (Plus and above)

- Excel / Artifacts integration: Works inside documents — not just about them

The headline feature of GPT-5.4 — Computer Use — deserves real explanation, not a bullet point. Before this, even the most capable AI models sat behind a chat window. GPT-5.4 can now be given access to your screen and actually navigate it: clicking buttons, filling forms, opening files, running software, and switching between applications. This is not browser automation in the Selenium sense. The model interprets a screenshot of your screen, understands what’s on it semantically, and decides what to do next as part of a larger task plan.

🖥️ GPT-5.4 Computer Use — What It Can Actually Do

On GDPval — a benchmark designed to evaluate AI agents on actual knowledge work tasks across 44 occupational categories — GPT-5.4 scored 83.0%, meaning it matched or exceeded the average professional in over four-fifths of tested job functions. The previous version hit 70.9%. That’s not incremental improvement; that’s a qualitative shift in what the model can reliably handle unsupervised.

The AgentKit launch is significant for developers and businesses. For the first time, building a production-grade agent on top of ChatGPT doesn’t require a deep understanding of prompt engineering. AgentKit provides a visual workflow builder, pre-built agent components, embedded agentic UIs, and deployment tools. A non-engineer can build a compliant customer research agent in a morning.

Where GPT-5.4 Still Has Limits

Computer Use is powerful but not autonomous by design. GPT-5.4 treats user confirmations as a first-class design element — meaning it pauses at sensitive actions rather than barreling through. That’s safety-conscious engineering, but it also means fully hands-off automation requires careful workflow design. The 128K context window, while large, is half of Kimi K2.5’s. At $15.75 per million blended tokens, scaling GPT-5.4 to high-volume document processing is expensive fast.

Kimi K2.5 — The 1-Trillion-Parameter Open-Source Swarm Machine

🔵 Kimi K2.5 — Full Agent Spec (January 2026)

- Architecture: Mixture-of-Experts (MoE) — 1.04 trillion total parameters, 32B activated per token

- Training: 15 trillion tokens; continued pre-training + RL (PARL)

- Context window: 256K tokens — approximately 384 A4 pages (2× GPT-5)

- Vision: MoonViT-3D encoder — supports text, images, video input natively

- Four operating modes: Instant, Thinking, Agent, Agent Swarm

- Agent Swarm: Up to 100 parallel sub-agents; 4.5× faster on large-scale tasks

- HLE-Full benchmark: 50.2% — top score on one of AI’s hardest evaluations

- BrowseComp: Outperforms GPT-5.2 Pro on complex web research

- Open-source: Model weights publicly available on Hugging Face

- Pricing: ~$3.60/M tokens — 4.4× cheaper than GPT-5.2

- Outputs: Documents, spreadsheets, websites, slides, research reports

Kimi K2.5’s most disruptive feature is the Agent Swarm, and it’s worth slowing down on it. Every other AI agent model in 2026 operates sequentially: the model does step one, then step two, then step three. Kimi K2.5 in Swarm mode does something fundamentally different. Its orchestrator model decomposes a complex goal into subtasks, then spins up up to 100 sub-agents to execute those subtasks simultaneously. Each sub-agent has access to independent tool use — web search, code execution, file generation, data analysis — and they work in parallel.

🌐 Kimi K2.5 Agent Swarm — By the Numbers

The training technique behind the swarm — PARL, or Parallel Agent Reinforcement Learning — is worth understanding at a conceptual level. Moonshot AI trained the orchestrator model to decompose tasks effectively while keeping the sub-agents frozen. This means the system learned to plan parallel execution through reinforcement signals rather than rigid templates. The result is an orchestrator that adapts how it delegates based on the specific task structure rather than following a fixed script.

“Kimi K2.5’s PARL training methodology represents a genuine departure from sequential chain-of-thought paradigms. It’s the first production model to treat parallelism itself as a trainable skill rather than a system design constraint.”

— Moonshot AI technical release, January 2026

The HLE-Full score of 50.2% requires context. Humanity’s Last Exam is a benchmark comprising 2,500 questions across graduate-level mathematics and physics — questions specifically designed to defeat current AI models. Scoring above 50% on it is a milestone the field has been tracking. Kimi K2.5 achieved it while remaining open-source and available to run locally. The MoE architecture is what makes this possible: because only 32 billion parameters activate per token, the model runs efficiently even on non-datacenter hardware.

Where Kimi K2.5 Has Real Gaps

Computer Use doesn’t exist in Kimi K2.5. The model can generate code, websites, and documents, but it cannot operate your operating system. Agent Swarm is a research preview — it’s real and functional, but it’s not battle-hardened for enterprise production yet. There’s no voice mode. The English-language brand recognition and enterprise support infrastructure that OpenAI has built over four years doesn’t have an equivalent. And while the open-source availability is a massive advantage for developers and researchers, enterprise buyers often need managed services, SLAs, and compliance certifications that Moonshot AI’s Western-market offering is still maturing.

GPT-5.4’s Computer Use is a qualitatively new agent capability — it can work inside your computer, not just alongside it. Kimi K2.5’s Agent Swarm deploys 100 parallel agents on a single goal — 4.5× faster execution at 4.4× lower cost. These are two different definitions of “agent power,” and you need to decide which one your work actually requires.

Benchmark Breakdown — What the Numbers Actually Tell You

Raw numbers, honestly interpreted. On BrowseComp — a benchmark testing deep web research where an agent must find obscure, multi-hop information by navigating the internet — Kimi K2.5 outperforms GPT-5.2 Pro. On WideSearch, it outperforms Claude Opus 4.5. These are not narrow wins; they reflect a genuine architectural advantage in tool-augmented research tasks, where Swarm mode can assign independent search threads to multiple sub-agents simultaneously.

On HumanEval (code generation), GPT-5.4 leads — coding has consistently been a strength of OpenAI’s models, and the consolidation of GPT-5.3-Codex capabilities into GPT-5.4 sharpens this further. On GDPval, GPT-5.4’s 83% score on professional knowledge work tasks is genuinely impressive and reflects how much the model has improved at following complex multi-step instructions faithfully.

The context window gap — 256K for Kimi K2.5 versus 128K for GPT-5.4 — compounds over time. Researchers working with literature reviews, legal analysts processing full case files alongside statutes, or developers feeding in large codebases will hit GPT-5’s ceiling on tasks where Kimi K2.5 never flinches. That’s a structural advantage, not a temporary one.

Full Head-to-Head Comparison Table

| Feature / Metric | ChatGPT 5 (GPT-5.4) | Kimi K2.5 | Edge |

|---|---|---|---|

| Release | Aug 2025 (GPT-5); GPT-5.4 Mar 2026 | January 27, 2026 | Tie Both current |

| Architecture | Proprietary (dense / semi-sparse) | Open-source MoE, 1.04T / 32B active | Kimi Open-source |

| Context Window | 128K tokens (~192 pages) | 256K tokens (~384 pages) | Kimi 2× larger |

| Agent Swarm | Not available | Up to 100 sub-agents parallel (PARL) | Kimi Unique feature |

| Computer Use | Native — screen + mouse + keyboard | Not available | GPT-5.4 Unique feature |

| GDPval (Professional) | 83.0% — exceeds human avg. | Not published | GPT-5.4 Measured |

| HLE-Full (Hard Reasoning) | ~48% (estimated) | 50.2% — highest published | Kimi Top score |

| BrowseComp (Web Research) | Strong, Bing-integrated | Outperforms GPT-5.2 Pro | Kimi Benchmark win |

| Coding (HumanEval) | ~92% (GPT-5 range) | Comparable to frontier models | GPT-5.4 Slight edge |

| Multimodal Input | Text + images + documents | Text + images + video (MoonViT-3D) | Kimi Video input |

| Image Generation | DALL·E 3 built-in | Not available | GPT-5.4 Only option |

| Voice Mode | Real-time ultra-low latency | Not available | GPT-5.4 Only option |

| Custom Agents | Custom GPTs + AgentKit (visual builder) | Not yet available | GPT-5.4 Mature ecosystem |

| API Pricing | ~$15.75 / M blended tokens | ~$3.60 / M tokens | Kimi 4.4× cheaper |

| Open-Source Weights | No — fully proprietary | Yes — Hugging Face available | Kimi Self-hostable |

| Enterprise Maturity | Full SLAs, compliance, security | Growing; less documented | GPT-5.4 Years ahead |

| Execution Speed Boost | Compaction (maintains long sessions) | Swarm: 4.5× faster on parallel tasks | Kimi On parallel tasks |

Pricing: The $3 vs $15 Reality Check

This is where the comparison gets uncomfortable for OpenAI fans. The price gap between GPT-5.4 and Kimi K2.5 is not a rounding error — it’s a 4.4× difference per million tokens. At scale, that gap is the difference between an affordable product and an expensive infrastructure line item.

- ChatGPT Plus: $20/month (individual)

- ChatGPT Business: team workspace

- ChatGPT Enterprise: custom + SLA

- Includes: Computer Use, Voice, DALL·E 3

- AgentKit: included with API access

- Free tier: limited GPT-5 access

- Kimi Pro: ~$9.9/month (web app)

- Generous free tier with daily limits

- Open weights: run locally for free

- 256K context, Swarm, multimodal

- API: full access to k2.5 capabilities

- No enterprise SLA documented yet

The open-source dimension adds another layer. Kimi K2.5’s model weights are public on Hugging Face. If you have the hardware — or access to a cloud provider that hosts the model — you can run Kimi K2.5 at effectively zero marginal cost per token. For a startup building a document intelligence product, that’s a completely different business model than paying OpenAI $15.75 per million tokens.

That said, the free lunch has a catch. Self-hosting 1 trillion parameters, even with MoE active-parameter efficiency, still requires serious GPU infrastructure. The 32 billion active parameters per token are manageable, but the full model weight requires substantial VRAM to hold. Most individuals cannot self-host this meaningfully — but enterprises and research institutions can, and increasingly will.

If you process millions of tokens per month for document analysis, research, or batch workflows, Kimi K2.5’s pricing is not a marginal improvement — it’s a structural business advantage. GPT-5.4 charges a premium for its ecosystem, Computer Use, and enterprise reliability, and for many buyers that premium is justified.

Real-World Use Cases: When to Pick Each One

- You need OS-level automation (Computer Use)

- You want voice-driven agent interactions

- You need DALL·E 3 image generation in workflow

- You’re building agents with AgentKit

- Enterprise compliance or SLA is required

- You want Custom GPT distribution

- You work directly inside Excel or Docs

- Your tasks need confirmed, careful step-by-step automation

- You’re parallelizing large research tasks

- You need to process 200+ page documents

- You want 4.5× faster execution via Agent Swarm

- You need open-source / self-hosted AI

- You’re doing advanced math or reasoning tasks

- You analyze video as well as images

- You want frontier performance at 4.4× lower cost

- You’re building a product and need Hugging Face weights

The Honest Verdict: Smarter? Cheaper? Faster?

Let’s answer the title directly — because a good comparison article should.

Smarter? Neither, clearly. They’re smarter at different things. GPT-5.4 is smarter at professional knowledge work across diverse domains (GDPval: 83%), at coding, and at operating-system-level task execution. Kimi K2.5 is smarter at graduate-level reasoning (HLE-Full: 50.2%), long-document comprehension, and parallel multi-agent problem decomposition. If you need one model to replace a human assistant, GPT-5.4 is currently more general. If you need one model to outthink a hard problem, Kimi K2.5 punches harder on raw reasoning.

Cheaper? Kimi K2.5, decisively. $3.60 versus $15.75 per million tokens is not close, and the open-source availability means the floor is effectively zero for those who can self-host. At high volumes, this comparison doesn’t need nuance — Kimi K2.5 is dramatically cheaper.

Faster? Depends on task type. For tasks that parallelize well — comprehensive research, multi-document synthesis, batch processing — Kimi K2.5’s Agent Swarm is 4.5× faster by design. For sequential tasks, tasks requiring OS interaction, or tasks that need careful human-confirmed steps, GPT-5.4’s Compaction and Computer Use architecture is better suited to maintaining speed without degradation across long sessions.

🏆 Final Verdict by User Type

The deeper truth is that the smartest approach in 2026 is not to choose. Use GPT-5.4 for the work that requires human-like execution and OS-level interaction. Use Kimi K2.5 for the work that requires deep reading, parallel research, and cost-effective scale. These tools are cheap enough relative to human labor that running both in parallel is almost always worth it for serious practitioners.

The race isn’t over. OpenAI will respond to Kimi K2.5’s pricing and context window with GPT-5.5 or equivalent. Moonshot AI is clearly eyeing Computer Use as a missing capability. In six months, specific numbers in this article will have changed. But the structural insight — that a Chinese open-source MoE model can challenge OpenAI’s closed-source leader on agentic benchmarks while costing a fraction of the price — that’s a development that will reshape this industry regardless of what happens next.