Goal-Oriented Graphs: How NTU Researchers Finally Taught LLMs to Plan Like Humans

GraphRAG shreds procedural knowledge into thousands of disconnected fragments. A new framework from Nanyang Technological University puts it back together — and the results in Minecraft are hard to argue with.

There is a particular frustration in watching a highly capable language model fall apart on what should be a simple sequential task. Ask GPT-4 to craft a diamond axe in Minecraft — not in the game, just explain the steps — and you often get something that sounds plausible right up until a critical ingredient goes missing, or a smelting step appears at the wrong point in the chain. The model knows about diamonds. It knows about furnaces. It just cannot hold the whole dependency tree in mind at once. A team at Nanyang Technological University has built something that can.

The Problem Nobody Talks About Enough

Language models have become remarkably good at broad, associative tasks. They summarize, translate, rephrase, and generate on demand. The trouble shows up as soon as a task requires not just knowing facts, but knowing facts in the right order, with the right prerequisites, building toward a specific outcome. This is procedural reasoning — the cognitive machinery behind cooking a meal, assembling flat-pack furniture, or, in the paper’s chosen domain, surviving and thriving in Minecraft.

Retrieval-Augmented Generation was supposed to fix the knowledge problem. The idea is sound: instead of relying on whatever a model memorized during training, you attach a knowledge base and let the model look things up. GraphRAG took this further by structuring that knowledge base as a graph, hoping that relationships between entities would make retrieval richer.

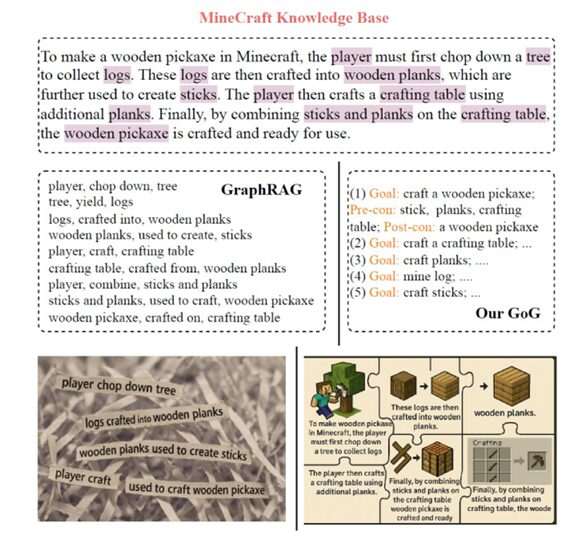

Here is where it gets complicated. GraphRAG’s graphs are entity-centric. They extract things like: “player — crafts — crafting table” and “sticks — used to craft — wooden pickaxe” and “wooden planks — crafted into — sticks.” Individually, each triple is accurate. Collectively, they are something like a murder board after the detective has left the room — all the evidence pinned up, but nothing explaining how to move from A to B to C in the right sequence.

The NTU team describes this with a striking phrase: it is like trying to put shredded paper back together. The information was all there before it was torn apart. The challenge is reconstruction, and entity-relation triples make reconstruction computationally expensive and semantically noisy.

GraphRAG applied to Minecraft’s Wiki and recipe data produces 12,388 nodes and 18,347 edges. The GoG framework, operating on identical source material, produces 703 nodes and 1,653 edges — a 17× compression that preserves the causal structure entity graphs destroy.

Rethinking What a Node Should Represent

The insight at the heart of Goal-Oriented Graphs is almost embarrassingly direct: if you want a graph that supports planning, build a graph whose nodes represent things you can plan toward.

In a GoG, each node is a goal — “craft a wooden pickaxe,” “mine cobblestone,” “smelt iron ore.” Edges are not vague semantic relationships; they encode actual logical dependencies. A directed edge from Goal A to Goal B means: you cannot pursue Goal A without first satisfying Goal B. That is a prerequisite edge, and it carries enormous structural power compared to a generic “related-to” connection.

Each goal node carries a rich set of attributes: its name, a natural language description, required tools, required materials, and both preconditions and postconditions. The postconditions — what the world looks like after achieving this goal — turn out to be especially important during graph construction, because they allow the algorithm to link goals from different text chunks that it has never seen together.

GoG vs GraphRAG: A Structural Comparison

The differences between the two approaches are not just philosophical — they are structural, and they show up in every downstream task. The table below captures what separates them at the graph level. GraphRAG organizes knowledge around noun-centric entities and semantic relationships, which is excellent for question answering but fundamentally ill-suited for planning. GoG organizes knowledge around verb-centric goals and logical prerequisites, which is exactly what a sequential planner needs.

| Aspect | GoG (This Work) | GraphRAG |

|---|---|---|

| Graph Nodes | Structured task goals (e.g., “craft a wooden pickaxe”) | Informational entities (people, places, events) extracted from documents |

| Graph Edges | Explicit goal–subgoal dependencies based on prerequisites | Semantic or contextual relationships between entities |

| Node Semantics | Verb-centric goals with preconditions and postconditions | Noun-centric entities or named entities |

| Purpose of Graph | Guides procedural planning and multi-step reasoning | Provides rich, factual context for question answering or generation |

| Graph Size (Minecraft) | 703 nodes · 1,653 edges | 12,388 nodes · 18,347 edges (same source) |

That last row is worth dwelling on. Both graphs were built from the exact same corpus — the Minecraft Wiki pages and recipe files. GoG compresses the same knowledge into a graph that is 17× smaller by node count, not by discarding information, but by representing it at the right level of abstraction. A recipe that GraphRAG shatters into eight entity–relation triples becomes a single goal node with structured attributes. The compression is lossless for planning purposes; for question answering over arbitrary facts, you would want GraphRAG’s richer entity coverage.

How the Graph Gets Built

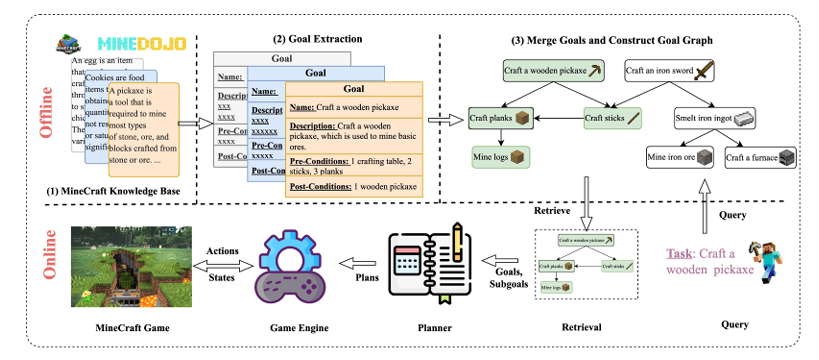

Building a GoG from raw text involves three main stages, each solving a specific problem that naive extraction would fumble.

Goal extraction

The source corpus — in this case, 514 Minecraft Wiki pages and 859 recipe files — is split into chunks small enough for an LLM to process. For each chunk, the model extracts structured goal tuples: name, description, required tools (as a JSON object with tool grades), required materials, and postconditions. The prompt is carefully designed to produce goal-oriented phrases in the form “<action> <item>” — “craft planks”, “mine iron ore” — rather than the noun-centric entities GraphRAG extracts.

The total API cost for this one-time extraction across 2.7 million characters of source text, using GPT-4o-mini, came to roughly US$2–3. That is not a typo.

Goal merging

The same goal will inevitably appear in multiple chunks — “craft a wooden pickaxe” shows up across the Wiki page for pickaxes, the crafting guide, and the recipe files. Naively adding each occurrence would create a fragmented mess. Instead, the algorithm uses embedding similarity in two directions.

For each newly extracted goal, it finds the most similar existing goal by cosine similarity over name embeddings. If both the name similarity and the condition similarity (comparing sorted precondition and postcondition lists) exceed a threshold \(\theta\), the new goal is considered a duplicate and discarded. If conditions match but the name differs, the new name is added as an alias. Only genuinely distinct goals enter the base. The authors use \(\theta = 0.92\) with the nomic-text-embed-v1.5 model.

Subgoal derivation

The trickiest part. A goal extracted from one chunk may depend on a goal that only appeared in a completely different chunk. The algorithm handles this by matching the preconditions of newly added goals against the postconditions of all existing goals. A match triggers a new directed edge. This cross-chunk dependency linking is what gives the GoG its coherent, graph-wide causal structure — something GraphRAG’s per-document processing cannot replicate.

Retrieval at Inference Time

Once the graph is built, using it is fast and clean. Given a task instruction like “craft a wooden sword,” the system embeds the query and retrieves the top-\(k\) goals by cosine similarity. When \(k > 1\), a single LLM call selects the best match from the candidates — this is the “goal inference” step.

From the selected goal, a depth-first search traverses all prerequisite edges, collecting every subgoal in the dependency chain. Cyclical paths (like converting iron ingots to blocks and back) are explicitly pruned since a player starting with an empty inventory cannot benefit from reversible loops. The result is a clean, acyclic goal chain.

That chain — nodes, attributes, and edges — is serialized into a structured prompt for plan generation. The LLM is asked to translate the goal hierarchy and the extracted materials list into an ordered sequence of in-game subtasks.

“Reconstructing a coherent plan from fragmented knowledge is akin to the saying: tearing paper is easy, putting it back together is hard.” — Leung et al., Knowledge-Based Systems 2026

The Experiments: Minecraft as a Serious Benchmark

The choice of Minecraft deserves more credit than it usually gets. The game’s crafting system is a genuine directed acyclic graph of material dependencies, with dozens of items requiring multi-stage production chains. Diamonds cannot be mined without an iron pickaxe. Iron pickaxes require iron ingots. Iron ingots require smelted ore. Smelting requires a furnace. Furnaces require cobblestone. The chain goes on, and any gap in the plan causes the agent to fail.

The experimental setup uses 66 tasks across 7 difficulty groups — wood, stone, iron, gold, diamond, redstone, and armor — evaluated over 30 independent runs per task using each of three LLMs: Llama 3.2-Vision 90B, Gemma 3 27B, and Qwen 2.5-VL 32B. The downstream executor is STEVE-1, which translates text instructions into keyboard and mouse controls.

GoG is compared against three baselines: a Vanilla LLM (no retrieval), GraphRAG (built from the same source data), and HKG — a Hierarchical Knowledge Graph inspired by the Optimus-1 framework, which builds a goal graph purely from recipe files and skips Wiki text.

What the numbers say

| Task Group | Metric | Vanilla | GraphRAG | HKG | GoG (Llama 3.2) |

|---|---|---|---|---|---|

| Wood | Success ↑ | 83.7% | 80.0% | 93.7% | 95.7% |

| Stone | Success ↑ | 57.4% | 37.8% | 46.7% | 80.0% |

| Iron | Success ↑ | 19.8% | 19.4% | 54.4% | 74.0% |

| Gold | Success ↑ | 7.5% | 0.0% | 5.6% | 72.3% |

| Diamond | Success ↑ | 0.0% | 0.0% | 0.0% | 66.1% |

| Redstone | Success ↑ | 0.0% | 0.0% | 11.7% | 49.4% |

| Armor | Success ↑ | 23.9% | 26.7% | 31.8% | 54.4% |

The numbers at the difficult end of the spectrum deserve a moment of attention. On gold-tier tasks, GraphRAG scores zero — it actually fails every single run. HKG manages 5.6%. GoG reaches 72.3%. On diamond tasks, both GraphRAG and HKG score zero, while GoG maintains a 66.1% success rate with Llama 3.2-Vision.

This is not a marginal improvement. It is a qualitative difference in capability. The baselines are not merely less efficient; they are fundamentally unable to handle tasks that require more than three or four sequential dependencies. GoG still succeeds on more than half of those tasks.

A striking anti-pattern emerges from the results: GraphRAG sometimes performs worse than the Vanilla baseline. Entities like “stone” are connected to dozens of crafting recipes, and when tasked with making a stone axe, the retrieved neighbourhood floods the LLM with noise. More context, structured badly, is worse than no context at all.

The Diamond Axe Case Study

The paper includes a close-up comparison of the plans each method generates for “craft a diamond axe” — one of the hardest tasks in the benchmark. It is a masterclass in diagnosing exactly where reasoning breaks down.

HKG’s plan includes the step “smelt diamond.” The semantic distinction matters enormously: when you mine diamond ore with an iron pickaxe in Minecraft, you get raw diamonds directly — no smelting required. HKG has a materials list but no procedural understanding of when smelting applies. The plan is technically well-formed until it collapses at step 15 on a physically impossible action.

Both GraphRAG and Vanilla produce plans that simply under-count sticks. The LLM generates a plausible-sounding sequence, estimates “3 sticks” somewhere in the middle, and the agent runs out mid-craft and is forced to replan. The replanning loop adds seven extra steps and still fails. Quantity reasoning, it turns out, requires knowing exact postconditions — how many items each crafting step produces — and neither GraphRAG nor Vanilla encodes that.

GoG’s plan is 16 steps and executes cleanly. Every material is present in the correct quantity. Every tool is acquired before the step that requires it. The goal tree’s explicit precondition and postcondition encoding does exactly what the paper promises it would.

| # | ✅ GoG — 16 steps, clean execution | ❌ HKG — invalid “smelt diamond” at step 15 | ❌ GraphRAG — under-counts sticks, replanning loop | ❌ Vanilla — under-counts sticks, replanning loop |

|---|---|---|---|---|

| 0 | chop a tree → logs ×4 | chop a tree → logs ×9 | chop a tree → logs ×5 | chop a tree → logs ×5 |

| 1 | craft planks → planks ×12 | craft planks → planks ×27 | craft planks → planks ×15 | craft planks → planks ×16 |

| 2 | craft stick → sticks ×8 | craft stick → sticks ×8 | craft stick → sticks ×3 | craft stick → sticks ×4 |

| 3 | craft crafting table ×1 | craft crafting table ×1 | craft crafting table ×1 | craft crafting table ×1 |

| 4 | craft wooden pickaxe ×1 | craft wooden pickaxe ×1 | craft wooden pickaxe ×1 | craft wooden pickaxe ×1 |

| 5 | equip wooden pickaxe | equip wooden pickaxe | equip wooden pickaxe | equip wooden pickaxe |

| 6 | mine cobblestone ×11 | mine cobblestone ×19 | mine cobblestone ×11 | mine cobblestone ×11 |

| 7 | craft stone pickaxe ×1 | craft stone pickaxe ×1 | craft stone pickaxe ×1 | craft stone pickaxe ×1 |

| 8 | equip stone pickaxe | equip stone pickaxe | equip stone pickaxe | equip stone pickaxe |

| 9 | mine iron ore ×3 | craft furnace ×1 | craft furnace ×1 | craft furnace ×1 |

| 10 | craft furnace ×1 | mine iron ore ×3 | mine iron ore ×2 | mine iron ore ×2 |

| 11 | smelt iron ingot ×3 | smelt iron ore → ingots ×3 | smelt iron ore → ingots ×2 | smelt iron ore → ingots ×2 |

| 12 | craft iron pickaxe ×1 | craft iron pickaxe ×1 | chop tree (replan) → logs ×2 | chop tree (replan) → logs ×2 |

| 13 | equip iron pickaxe | equip iron pickaxe | craft planks (replan) ×2 | craft planks (replan) ×2 |

| 14 | mine diamond ore → diamond ×3 | mine diamond ore ×3 | craft sticks (replan) ×2 | craft sticks (replan) ×2 |

| 15 | craft diamond axe ×1 ✅ | smelt diamond ×3 ← INVALID ❌ | craft wooden pickaxe (replan) | craft stone pickaxe (replan) ❌ |

| 16 | — | craft diamond axe ❌ FAILS | mine iron ore (replan) ×1 | mine iron ore (replan) ×1 |

| 17–21 | — | — | 5 more replan steps → FAILS ❌ | 5 more replan steps → FAILS ❌ |

Table: Step-by-step plan comparison for “craft a diamond axe” using Llama 3.2-Vision. Highlighted cells show the critical failure points in each baseline method.

Plan Quality Beyond Success Rate

Success rate captures whether the agent finished the task, but it hides a lot. An agent that barely squeaks through by replanning five times is not solving the same problem as one that executes a clean first-pass plan. The paper introduces four plan quality metrics borrowed from classical planning literature: goal satisfaction, soundness, completeness, and efficiency.

The ablation study separates out two main components the GoG contributes to each prompt: the goal tree (the hierarchical subgoal structure) and the materials and tools list (extracted from the tree’s postconditions). The results are instructive.

Providing the materials list alone, without the goal tree, achieves near-perfect soundness and completeness — 0.99 and 0.99 for Llama 3.2-Vision. Adding the goal tree on top pushes goal satisfaction and efficiency to 1.0. The materials list tells the LLM what to gather; the goal tree tells it in what order and why. Both are necessary for optimal plans.

The one exception is Gemma 3, where adding the goal tree actually reduces performance slightly. The authors attribute this to difficulty handling hierarchical input or the increased context length. It is an honest limitation, and the paper flags it explicitly as an open question rather than papering over it.

Does It Break Under Noise?

Real-world procedural knowledge is rarely as clean as Minecraft’s recipe files. So the team ran a dedicated robustness experiment: they converted the structured recipe data into natural language descriptions using an LLM, then rebuilt the GoG from that noisier input.

The degradation is real but contained. Under noisy construction, goal satisfaction drops from 1.0 to 0.97 for Llama 3.2-Vision — a barely noticeable dip. Soundness and completeness remain above 0.99. The largest drops appear in efficiency metrics, because noisy extraction sometimes omits implicit preconditions (missing fuel for smelting, for instance) or over-estimates item yields.

None of this is catastrophic. The goal dependency structure, once extracted, is robust enough that moderate extraction noise does not unravel the coherent reasoning chain. The system degrades gracefully rather than failing completely — which is exactly what you want from anything you intend to deploy in the messy real world.

Limitations and What Comes Next

The authors are unusually candid about where GoG falls short, and that honesty is part of what makes this paper useful rather than just impressive.

The most fundamental constraint is the reliance on extractable procedural knowledge. GoG thrives when a domain has text that can be reasonably decomposed into goal–precondition–effect structures. Manufacturing manuals, medical workflows, cooking instructions — these are plausible targets. Abstract creative tasks or domains without any structured text base are a different story. The noise ablation suggests graceful degradation, but it does not suggest immunity.

The current framework also retrieves a single reasoning chain per task, pruning alternatives early. Many real tasks admit multiple valid subgoal decompositions — different resource routes toward the same goal. Optimality-aware retrieval, with cost-weighted or success-probability-weighted edges, is listed as future work. So is online replanning that dynamically updates the graph as an agent observes the world, and scalability work on large cross-domain GoGs with thousands of goals.

There is also the Minecraft familiarity question. Pretrained LLMs have been exposed to Minecraft content during training. The authors acknowledge this but note that the consistent gap between GoG and the Vanilla baseline — both using the same pretrained model — points to structured knowledge retrieval as the driver rather than memorized domain facts. Still, they flag evaluation in synthetic or obfuscated environments as an important next step.

Why This Matters Beyond Minecraft

Games make excellent research vehicles because they offer controlled, repeatable conditions for measuring something genuinely hard. The insight this paper actually delivers — that goal-oriented graphs beat entity-relation graphs for procedural tasks — does not require Minecraft to be true.

Think about the domains where multi-step planning failures are not just frustrating but consequential: surgical planning, manufacturing workflows, software deployment pipelines, drug interaction analysis. Any domain where knowledge can be expressed as “to achieve X, you must first achieve Y and Z” is a candidate for GoG-style reasoning. The paper is careful not to oversell this generality, but the principle is clean enough that the case practically makes itself.

The cost argument matters too. Building a GoG from 2.7 million characters of source text costs roughly $2–3 in API calls, runs once, and then supports fast inference via cosine similarity and DFS traversal. This is not a research artifact that requires industrial infrastructure to deploy. It is a technique that a small team could apply to a domain-specific knowledge base without a major investment.

Complete Proposed Model Code (PyTorch)

The implementation below captures the core GoG components: the goal data structure, the embedding-based merging logic, the depth-first subgoal retrieval, and a smoke test with synthetic data. This maps directly to Algorithm 1 and Section 3.2 from the paper. For the full experimental codebase, see the official repository.

# ─── 1. IMPORTS AND SETUP ────────────────────────────────────────────────────

import torch

import torch.nn.functional as F

from dataclasses import dataclass, field

from typing import Dict, List, Optional, Set, Tuple

import json

# ─── 2. GOAL DATA STRUCTURE ───────────────────────────────────────────────────

@dataclass

class Goal:

"""

A single node in the Goal-Oriented Graph.

Each Goal represents an actionable objective with explicit preconditions

and postconditions, following the schema in Table 1 of the paper.

Edges between Goals encode prerequisite (subgoal) relationships.

"""

name: str # e.g. "craft wooden pickaxe"

description: str # natural language summary

required_tools: Dict[str, int] # {tool_name: min_grade}

required_materials: Dict[str, int] # {material_name: quantity}

postconditions: Dict[str, int] # {item_name: quantity_produced}

aliases: List[str] = field(default_factory=list)

subgoal_names: List[str] = field(default_factory=list) # edges → children

def to_dict(self) -> dict:

"""Serialise goal to a JSON-compatible dict for LLM prompting."""

return {

"name": self.name,

"description": self.description,

"required_tools": self.required_tools,

"required_materials": self.required_materials,

"postconditions": self.postconditions,

"subgoals": self.subgoal_names,

}

# ─── 3. EMBEDDING UTILITIES ───────────────────────────────────────────────────

class EmbeddingStore:

"""

Lightweight wrapper that holds pre-computed goal name embeddings

and supports fast top-k cosine similarity retrieval.

In production this maps to nomic-text-embed-v1.5; here we accept

any callable that returns a (1, D) float tensor.

"""

def __init__(self, embed_fn):

"""

Args:

embed_fn: Callable[[str], torch.Tensor] — maps text to a 1×D vector.

"""

self.embed_fn = embed_fn

self.names: List[str] = []

self.matrix: Optional[torch.Tensor] = None # (N, D)

def add(self, name: str) -> None:

"""Embed a goal name and append it to the matrix."""

vec = self.embed_fn(name) # (1, D)

vec = F.normalize(vec, dim=-1)

self.names.append(name)

if self.matrix is None:

self.matrix = vec

else:

self.matrix = torch.cat([self.matrix, vec], dim=0)

def top_k(self, query: str, k: int = 3) -> List[Tuple[str, float]]:

"""Return top-k goal names by cosine similarity to query."""

if self.matrix is None:

return []

q = F.normalize(self.embed_fn(query), dim=-1) # (1, D)

sims = (self.matrix @ q.T).squeeze(-1) # (N,)

k = min(k, len(self.names))

vals, idxs = torch.topk(sims, k)

return [(self.names[i], vals[j].item()) for j, i in enumerate(idxs)]

def best_sim(self, query: str) -> Tuple[str, float]:

"""Return the single closest goal name and its similarity score."""

results = self.top_k(query, k=1)

return results[0] if results else ("", 0.0)

# ─── 4. GOAL-ORIENTED GRAPH ───────────────────────────────────────────────────

class GoalOrientedGraph:

"""

Implementation of Algorithm 1 from Leung et al. (2026).

Maintains a directed graph G = (V, E) where:

- V = Goal nodes with precondition/postcondition attributes

- E = Subgoal dependency edges (parent depends on child)

Supports three operations:

1. add_goal() — merge or insert a newly extracted goal

2. retrieve() — top-k retrieval by embedding similarity

3. get_chain() — DFS traversal returning the full dependency chain

"""

def __init__(self, embed_fn, theta: float = 0.92):

"""

Args:

embed_fn: Callable that maps a string to a normalised 1×D tensor.

theta: Similarity threshold for goal equivalence / aliasing.

"""

self.goals: Dict[str, Goal] = {} # name → Goal

self.theta = theta

self._store = EmbeddingStore(embed_fn)

self._embed = embed_fn

# ── helpers ──────────────────────────────────────────────────────────────

def _cond_sim(self, g_new: Goal, g_exist: Goal) -> float:

"""

Compute condition similarity between two goals.

Sorts precondition and postcondition keys alphabetically,

joins them into a single string, then computes cosine similarity

between their embeddings — matching the paper's cond_ok check.

"""

def cond_str(g: Goal) -> str:

pre = " ".join(sorted(g.required_materials.keys()))

post = " ".join(sorted(g.postconditions.keys()))

return pre + " | " + post

v1 = F.normalize(self._embed(cond_str(g_new)), dim=-1)

v2 = F.normalize(self._embed(cond_str(g_exist)), dim=-1)

return (v1 @ v2.T).item()

def _derive_subgoal_edges(self, new_goal: Goal) -> None:

"""

Link new_goal to existing goals whose postconditions satisfy

its required materials — implementing cross-chunk edge derivation.

"""

for mat in new_goal.required_materials:

for name, existing in self.goals.items():

if mat in existing.postconditions:

if name not in new_goal.subgoal_names:

new_goal.subgoal_names.append(name)

# ── public API ───────────────────────────────────────────────────────────

def add_goal(self, new_goal: Goal) -> str:

"""

Attempt to merge new_goal into the graph.

Returns one of: 'equivalent' | 'alias' | 'added'

following the three branches in Algorithm 1.

"""

if not self.goals:

self.goals[new_goal.name] = new_goal

self._store.add(new_goal.name)

self._derive_subgoal_edges(new_goal)

return "added"

best_name, name_sim = self._store.best_sim(new_goal.name)

best_goal = self.goals[best_name]

cond_sim = self._cond_sim(new_goal, best_goal)

cond_ok = cond_sim >= self.theta

if cond_ok and name_sim >= self.theta:

return "equivalent" # duplicate — discard

elif cond_ok and name_sim < self.theta:

best_goal.aliases.append(new_goal.name) # alias — record name

return "alias"

else:

self.goals[new_goal.name] = new_goal # new distinct goal

self._store.add(new_goal.name)

self._derive_subgoal_edges(new_goal)

return "added"

def retrieve(self, query: str, k: int = 3) -> List[Goal]:

"""Return top-k Goal objects ranked by name embedding similarity."""

results = self._store.top_k(query, k=k)

return [self.goals[name] for name, _ in results if name in self.goals]

def get_chain(self, root_name: str) -> List[Goal]:

"""

Depth-first traversal from root_name, collecting the full

prerequisite chain in topological order (leaves first).

Cycles are prevented by tracking visited nodes — matching

the DFS described in Section 3.2 of the paper.

"""

visited: Set[str] = set()

order: List[Goal] = []

def dfs(name: str) -> None:

if name in visited or name not in self.goals:

return

visited.add(name)

goal = self.goals[name]

for sub in goal.subgoal_names:

dfs(sub)

order.append(goal)

dfs(root_name)

return order # topological order: subgoals before their parents

def get_materials_list(self, root_name: str) -> Dict[str, int]:

"""

Walk the full goal chain and aggregate all required_materials,

subtracting postconditions of intermediate goals to avoid

double-counting items produced within the chain itself.

"""

chain = self.get_chain(root_name)

produced: Dict[str, int] = {}

needed: Dict[str, int] = {}

for goal in chain:

for item, qty in goal.required_materials.items():

available = produced.get(item, 0)

shortfall = max(0, qty - available)

if shortfall > 0:

needed[item] = needed.get(item, 0) + shortfall

produced[item] = max(0, available - qty)

for item, qty in goal.postconditions.items():

produced[item] = produced.get(item, 0) + qty

return needed

# ─── 5. PLAN QUALITY METRICS ──────────────────────────────────────────────────

def completeness(plan_items: Dict[str, int], needed_items: Dict[str, int]) -> float:

"""

Equation (1) from the paper.

c = min(n_obtained / n_needed, 1.0)

Measures whether the plan obtains at least the required quantity

of every item needed to complete the task.

"""

if not needed_items:

return 1.0

total_needed = sum(needed_items.values())

total_obtained = sum(min(plan_items.get(k, 0), v) for k, v in needed_items.items())

return min(total_obtained / total_needed, 1.0)

def efficiency(plan_steps: int, min_steps: int) -> float:

"""

Equation (2) from the paper.

e = s_minimal / s_plan if s_plan >= s_minimal else 0

Penalises unnecessarily long plans without rewarding short ones

that are too short to be feasible.

"""

if plan_steps < min_steps:

return 0.0

return min_steps / plan_steps

# ─── 6. SMOKE TEST ────────────────────────────────────────────────────────────

def _dummy_embed(text: str) -> torch.Tensor:

"""

Deterministic hash-based pseudo-embedding for smoke testing.

Replace with a real sentence-embedding model in production.

"""

chars = list(text.lower())

D = 64

vec = torch.zeros(D)

for i, c in enumerate(chars):

vec[i % D] += ord(c) / 128.0

return vec.unsqueeze(0) # (1, D)

def _smoke_test() -> None:

"""

End-to-end smoke test using a minimal Minecraft crafting subgraph.

Builds three goals:

mine logs → craft planks → craft wooden pickaxe

and verifies retrieval, chain construction, and materials aggregation.

"""

print("=== GoG Smoke Test ===")

gog = GoalOrientedGraph(_dummy_embed, theta=0.50) # low theta for test

# --- define test goals ---

mine_logs = Goal(

name="mine logs",

description="Chop a tree to collect wood logs.",

required_tools={},

required_materials={},

postconditions={"logs": 4},

)

craft_planks = Goal(

name="craft planks",

description="Convert logs into wooden planks at a crafting grid.",

required_tools={},

required_materials={"logs": 1},

postconditions={"planks": 4},

)

craft_pickaxe = Goal(

name="craft wooden pickaxe",

description="Craft a wooden pickaxe from planks and sticks.",

required_tools={"crafting_table": 1},

required_materials={"planks": 3, "sticks": 2},

postconditions={"wooden_pickaxe": 1},

)

# --- build graph ---

for g in [mine_logs, craft_planks, craft_pickaxe]:

status = gog.add_goal(g)

print(f" Added '{g.name}' → {status}")

# --- duplicate test ---

dup_status = gog.add_goal(Goal(

name="mine logs", description="Same goal.",

required_tools={}, required_materials={},

postconditions={"logs": 4},

))

assert dup_status == "equivalent", f"Expected 'equivalent', got {dup_status}"

print(f" Duplicate 'mine logs' → {dup_status} ✓")

# --- retrieval ---

results = gog.retrieve("wooden pickaxe", k=2)

print(f" Retrieval for 'wooden pickaxe': {[r.name for r in results]}")

assert any("pickaxe" in r.name for r in results)

# --- goal chain ---

chain = gog.get_chain("craft wooden pickaxe")

print(f" Chain for 'craft wooden pickaxe': {[g.name for g in chain]}")

# --- materials list ---

mats = gog.get_materials_list("craft wooden pickaxe")

print(f" Materials needed: {mats}")

# --- plan quality metrics ---

plan_items = {"planks": 3, "sticks": 2}

c = completeness(plan_items, {"planks": 3, "sticks": 2})

e = efficiency(plan_steps=5, min_steps=4)

print(f" Completeness={c:.2f} Efficiency={e:.2f}")

assert c == 1.0, "Completeness should be 1.0"

assert 0 < e <= 1, "Efficiency should be in (0,1]"

print("=== All checks passed ✓ ===")

if __name__ == "__main__":

_smoke_test()

Read the Paper & Explore the Code

The full GoG paper — including all 66 task experiments, ablation tables, and prompt designs — is available via Elsevier. The official PyTorch implementation supports the complete Minecraft benchmark with GPT-4o-mini extraction.

Leung, J., Wang, Y., & Shen, Z. (2026). From entity-centric to goal-oriented graphs: Enhancing LLM knowledge retrieval in Minecraft. Knowledge-Based Systems, 341, 115706. https://doi.org/10.1016/j.knosys.2026.115706

This article is an independent editorial analysis of peer-reviewed research published open-access under the CC BY-NC 4.0 license. Views expressed reflect the author’s interpretation and do not represent the original research team. Code provided for educational purposes; refer to the official repository for the production implementation.

Explore More on AI Trend Blend

If this breakdown sparked your interest, here is more of what we cover — from foundational tutorials to the latest research in LLM reasoning, knowledge graphs, and AI agent systems.