GREx: Why “All People” Breaks Every Referring Expression Model — And What These Researchers Did About It

A team from NTU and Fudan University identified a blind spot that has haunted referring expression AI for a decade: the assumption that every phrase points to exactly one object. Their fix is a new family of benchmarks, a 259K-expression dataset, and a baseline that rewrites the state of the art.

Imagine pointing at a group photo and saying “segment all the people wearing red.” A human assistant would find every red-shirted person in the frame, effortlessly. Now ask a state-of-the-art referring expression model the same thing. If it was trained on any of the major existing datasets — RefCOCO, RefCOCOg, ReferIt — it will hand you back a single bounding box, because that is all those datasets ever asked it to do. One expression, one target. Always. Researchers at Nanyang Technological University and Fudan University have spent the last few years making that assumption go away.

The Hidden Assumption Nobody Questioned

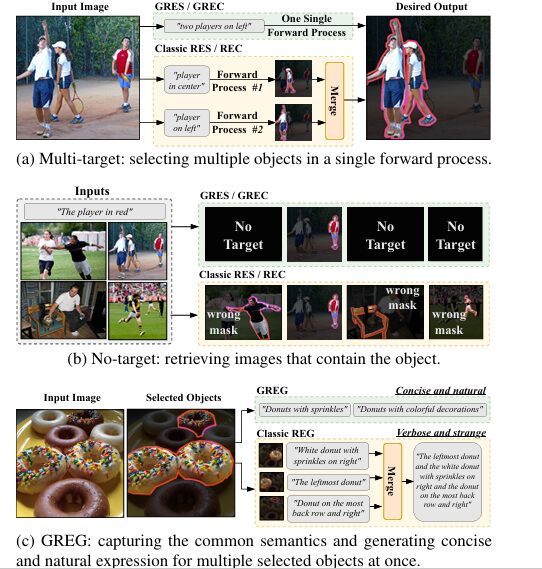

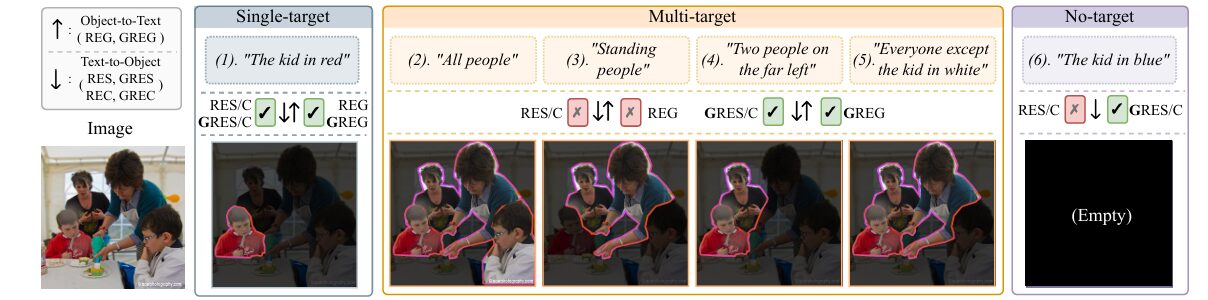

Referring Expression tasks have three main flavours. Referring Expression Segmentation (RES) takes an image and a phrase and produces a pixel-level mask of the described object. Referring Expression Comprehension (REC) does the same but outputs a bounding box instead of a mask. Referring Expression Generation (REG) runs in reverse: given an image and a selected object, produce a phrase that uniquely identifies it.

All three tasks have matured rapidly over the past decade. Models now achieve impressive benchmark scores, multi-modal transformers have largely supplanted convolutional architectures, and large language models are starting to enter the pipeline. By almost every metric, the field looks healthy.

Here is where it gets uncomfortable. Every benchmark driving that progress was built on a quiet assumption: one expression, one object. RefCOCO contains 142,000 expressions, all of them single-target. RefCOCOg has 95,000 — same constraint. ReferIt, the original benchmark from 2014, is structurally incapable of expressing “find me all of these.” The models trained on these datasets have never been asked to do anything else, and so they never learned to.

The practical consequences are real. If a user types “segment the two people on the far left,” a classic RES model will pick one of them and silently ignore the other. If they type “the kid in blue” about an image containing no blue-clad children, the model will produce a confident but completely wrong mask rather than returning empty. These are not edge cases. They are normal uses of natural language, and they fail systematically.

The “one expression, one target” constraint is not a law of nature — it is an artifact of dataset design decisions made in 2014. Every major referring expression benchmark since then has inherited and reinforced it. GREx is the first systematic effort to retire it.

Three New Benchmarks, One Unified Framework

The paper introduces GREx: a family of three generalized benchmarks that extend the classic REx tasks to allow expressions indicating any number of target objects — including zero.

Generalized RES (GRES) takes an image and an expression and produces a segmentation mask covering all target objects the expression refers to. If the expression refers to nobody in the image, the mask should be entirely empty. If it refers to six people, the mask should cover all six.

Generalized REC (GREC) does the same but outputs a set of bounding boxes instead. No predefined count. The number of boxes can be anything from zero upward, and each box should tightly enclose one of the referred instances.

Generalized REG (GREG) flips the direction. Given an image and a set of selected objects (multiple bounding boxes or a mask), generate a single natural language expression that unambiguously refers to all of them at once. Classic REG generates one expression per object — a verbose and often redundant approach. GREG captures shared semantics. “Donuts with colorful decorations” is better than four separate descriptions of four separate donuts.

All three benchmarks are designed to be backward-compatible with their classic counterparts. Single-target expressions remain valid inputs. This allows existing methods to be evaluated directly on GREx, making the performance gap between the old and new paradigms immediately measurable.

New Metrics for a New Task

Extending the tasks required extending the metrics. The standard cIoU (cumulative Intersection over Union) used in classic RES has a known bias toward larger objects — when multiple targets are present, foreground areas grow, and cIoU inflates accordingly. The team introduces gIoU (generalized IoU) to correct this by computing per-image IoU across all samples and averaging them equally.

For no-target samples, standard IoU breaks entirely — there is no ground-truth foreground to intersect with. The fix is clean: a correctly identified no-target sample (predicted empty mask, empty ground truth) gets an IoU of 1; an incorrectly identified one gets 0.

Two additional metrics capture no-target handling specifically. N-acc. (No-target accuracy) measures how often the model correctly returns empty output for a no-target expression:

T-acc. (Target accuracy) measures the flip side — how often the model correctly identifies that an expression does refer to something, so that improving no-target recall does not come at the expense of destroying performance on regular samples:

For GREC, the team introduces Precision@(F1=1, IoU≥0.5) — a strict metric that counts a sample as correctly predicted only if every ground-truth box is matched (no false negatives) and no spurious boxes are returned (no false positives). A single missed object or a single redundant box fails the sample. When all samples are single-target, this metric collapses to the classic Precision@(IoU≥0.5), preserving backward compatibility.

Building gRefCOCO: A Dataset That Earns Its Scale

Benchmarks are only as good as the data behind them. The team built gRefCOCO — a large-scale dataset grounded in the MS COCO image collection and extending the well-established RefCOCO annotations.

The final dataset contains 259,859 expressions across 19,994 images, referring to 61,316 distinct objects. Of these, 90,064 are multi-target expressions and 34,537 are no-target expressions. Every expression comes with both segmentation masks and bounding boxes for each target object — a level of annotation density that competing datasets lack.

| Dataset | Single-target | Multi-target | No-target | # Expressions | Annotation | Expression Type |

|---|---|---|---|---|---|---|

| ReferIt | ✓ | ✗ | ✗ | 120K | Mask + Box | Free |

| RefCOCO(g) | ✓ | ✗ | ✗ | 142K / 95K | Mask + Box | Free |

| PhraseCut | ✓ | Fallback only | ✗ | 345K | Mask + Box | Templated |

| Ref-ZOM | ✓ | ✓ (synthetic) | ✓ | 90K | Mask + Box | Free |

| GRD | ✓ | ✓ | ✓ | 0.3K | Mask only | Group |

| gRefCOCO (ours) | ✓ | ✓ | ✓ | 259K | Mask + Box | Free |

The closest competitor with multi-target and no-target support is Ref-ZOM, which has 90K expressions but constructs many of its multi-target samples synthetically — merging single-target expressions or using category templates rather than asking annotators to write natural sentences. GRD supports the same categories but contains only 316 expressions in total. gRefCOCO is the first dataset to systematically support all three expression types with rich, human-authored, instance-grounded annotations at real scale.

What makes a good no-target expression?

Building no-target samples turned out to be harder than it sounds. Left to their own devices, annotators kept writing things like “dog” for images that contained no dogs. These are trivially easy for a model to reject — no visual evidence at all. The team introduced two rules: the expression must be plausible given the image content (you can write “the kid in blue” for an image of children even if none are wearing blue), and annotators could borrow expressions from other images in the dataset split if needed. The result is a set of no-target examples that are genuinely deceptive — close enough to the image content to require actual semantic reasoning to reject.

gRefCOCO is backward-compatible with RefCOCO — its train set is a strict superset, and its validation and test images are identical to RefCOCO’s. This means existing methods can be evaluated on gRefCOCO without retraining, making the performance gap directly visible.

The ReLA Baseline: Regions That Think in Language

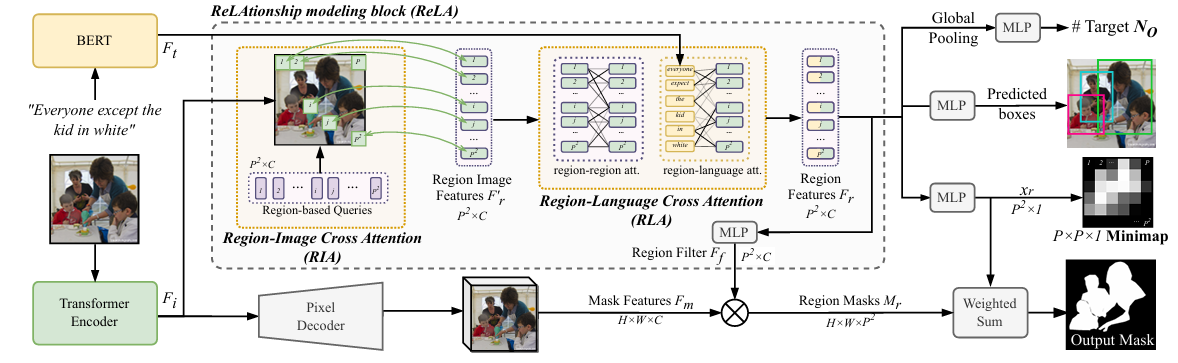

A new benchmark needs a new baseline, and the team delivers one. ReLA (ReLAtionship modeling) is built around a core insight: multi-target expressions require understanding how objects in an image relate to each other and to the words in the expression — and that relationship modeling needs to happen at a sub-instance level, not just at the whole-image level.

Standard vision transformers divide images into fixed patches. This works well for classification and global understanding, but it is too rigid for referring expression tasks where you need to reason about, say, the color of someone’s jacket or the spatial relationship between two chairs. ReLA instead introduces a soft-aggregation approach that dynamically assembles region features during decoding rather than imposing a hard split upfront.

Architecture overview

The input image is processed by a Swin Transformer encoder to produce visual features \(F_i \in \mathbb{R}^{H \times W \times C}\). The expression is encoded by BERT to produce language features \(F_t \in \mathbb{R}^{N_t \times C}\). Both feed into the ReLAtionship modeling block, which divides the image semantically into \(P \times P = P^2\) regions — not by slicing the image, but by using \(P^2\) learnable queries that each pull features from the spatially relevant areas of the visual feature map.

The ReLAtionship block contains two main modules:

Region-Image Cross Attention (RIA) uses learnable region-based queries \(Q_r \in \mathbb{R}^{P^2 \times C}\) to gather features from the image dynamically:

Each query learns to attend to its corresponding spatial region, but the boundaries are flexible — one instance can be represented by multiple regions, capturing sub-instance detail like the upper and lower body of a person independently.

Region-Language Cross Attention (RLA) takes those region features and models two types of relationship: region-to-region (via self-attention, capturing spatial and semantic dependencies between different image areas) and region-to-language (via cross-attention, aligning each region with the relevant words in the expression):

The final fused region feature combines the original region image features, the relationship-aware features from self-attention, and the language-aware features from cross-attention: \(F_r = \text{MLP}(F’_r + F_{r1} + F_{r2})\).

For GRES, region-level segmentation masks are generated and fused by weighted aggregation:

where \(x^n_r\) is the probability that region \(n\) contains target objects, learned via supervision from a downsampled “minimap” of the ground-truth mask. For GREC, an additional MLP head predicts bounding box coordinates and a target count head \(N_O\) predicts how many boxes to output.

“In contrast to classic RES and REC that typically focus on detecting single objects, allowing some methods to achieve satisfactory performance without explicit region-to-region interaction modeling, in the context of GRES and GREC, the intricacy of modeling long-range region-to-region dependencies becomes more pronounced and imperative.” — Ding et al., IJCV 2026

Results: How Large Is the Gap?

The experimental results answer the most important question bluntly: classic methods trained on gRefCOCO still fail on GREx tasks, and the failure is not minor. ReLA outperforms every prior method by a substantial margin on GRES, GREC, and classic RES simultaneously.

GRES results on gRefCOCO

| Method | Val cIoU | Val gIoU | testA cIoU | testA gIoU | testB cIoU | testB gIoU |

|---|---|---|---|---|---|---|

| MattNet | 47.51 | 48.24 | 58.66 | 59.30 | 45.33 | 46.14 |

| LTS | 52.30 | 52.70 | 61.87 | 62.64 | 49.96 | 50.42 |

| VLT | 52.51 | 52.00 | 62.19 | 63.20 | 50.52 | 50.88 |

| CRIS | 55.34 | 56.27 | 63.82 | 63.42 | 51.04 | 51.79 |

| LAVT | 57.64 | 58.40 | 65.32 | 65.90 | 55.04 | 55.83 |

| VLT + ReLA | 58.65 | 59.43 | 66.60 | 65.35 | 56.22 | 57.36 |

| LAVT + ReLA | 61.23 | 61.32 | 67.54 | 66.40 | 58.24 | 59.83 |

| ReLA (ours) | 62.91 | 63.98 | 69.43 | 70.12 | 60.15 | 61.29 |

The gains from adding ReLA as a drop-in replacement for the decoder in existing architectures are immediate and consistent. Plugging ReLA into LAVT improves val cIoU by over 4 points. The standalone ReLA model adds another 1.7 points on top of that. Across all three test splits, ReLA leads by a clear margin — and crucially, this is on a dataset containing multi-target and no-target expressions that every prior method was architecturally unprepared for.

GREC results on gRefCOCO

| Method | Val Pr@F1 | Val N-acc. | Val T-acc. | testA Pr@F1 | testB Pr@F1 |

|---|---|---|---|---|---|

| MCN | 28.02 | 30.64 | 99.62 | 32.29 | 26.76 |

| TransVG | 30.96 | 31.18 | 99.50 | 33.83 | 28.44 |

| VLT | 36.62 | 35.20 | 99.44 | 40.21 | 30.24 |

| MDETR | 42.69 | 36.27 | 99.40 | 50.04 | 36.52 |

| UNINEXT | 58.19 | 50.58 | 96.52 | 46.41 | 42.91 |

| ReLA (Swin-B) | 61.90 | 56.37 | 96.32 | 50.35 | 44.61 |

The GREC numbers tell a striking story about how badly classic REC methods transfer to this task. MDETR, one of the strongest end-to-end grounding models, historically exceeds 85% on single-target RefCOCO. On gRefCOCO’s GREC benchmark it manages 42.69% Pr@F1. That collapse is not a failure of MDETR — it is an accurate measurement of how much the single-target assumption was doing the work all along.

Classic RES: ReLA holds its own there too

| Method | RefCOCO val | RefCOCO testA | RefCOCO testB | RefCOCO+ val | G-Ref val(U) |

|---|---|---|---|---|---|

| LAVT | 72.73 | 75.82 | 68.79 | 62.14 | 61.24 |

| VLT+ | 72.96 | 75.96 | 69.60 | 63.53 | 63.49 |

| ReLA (cIoU) | 73.82 | 76.48 | 70.18 | 66.04 | 65.00 |

| ReLA (mIoU) | 75.61 | 77.79 | 72.82 | 70.42 | 68.65 |

Winning on GREx at the cost of classic RES performance would be a trade-off worth discussing. ReLA does not make that trade. It outperforms LAVT by 1–4% across all three classic RES datasets too, which confirms that explicit relationship modeling is beneficial even in the single-target setting — not a specialized trick that only pays off when multiple objects are involved.

The Ablation That Explains Everything

The design choices in ReLA are validated through a series of carefully structured ablation studies. The numbers are worth examining because they show which components actually carry the weight.

Replacing RIA with a simple hard-split of the image into fixed patches (as in standard ViT) drops gIoU by 5.63% and Pr@F1 by 4.93% compared to the soft-aggregation approach. The global context preserved by dynamic region assembly matters significantly. Removing the minimap supervision — which links each region-based query to its corresponding spatial area in the image — costs another 1.80–1.83%. The explicit spatial correspondence turns out to be load-bearing.

For RLA, removing region-language cross-attention drops gIoU by 2.02% and Pr@F1 by 2.23%. Removing region-region self-attention loses another 3.89–3.83% on top of that. The two modules are not interchangeable — region-region dependencies matter more than region-language alignment in isolation, but both are necessary for peak performance.

| Ablation | GREC Pr@F1 | GRES cIoU | GRES gIoU |

|---|---|---|---|

| Hard split, input (ViT-style) | 53.26 | 54.45 | 55.39 |

| Average pooling (no dynamic RIA) | 58.19 | 60.12 | 61.02 |

| RIA without minimap supervision | 60.07 | 61.45 | 62.18 |

| Full ReLA (RIA + minimap) | 61.90 | 62.91 | 63.98 |

The target count head \(N_O\) deserves special mention. For GREC, replacing it with a binary “target present / absent” classifier drops Pr@F1 from 61.90% to 37.58% — a catastrophic loss. For GRES, the same replacement barely moves the needle (less than 0.5%). The asymmetry makes sense: GREC requires exactly the right number of bounding boxes, so miscounting directly fails the sample. GRES produces a binary mask over the whole image, so it does not depend on counting at all. The counting head is a GREC necessity, not a general trick.

GREG: When One Expression Must Capture Many Objects

The generation results are the most sobering part of the paper. Table 15 reports METEOR and CIDEr scores for classic REG methods and zero-shot MLLMs on gRefCOCO’s GREG benchmark. Every single method shows a marked performance drop when transitioning from single-target to multi-target samples.

The best-performing traditional method, unleash-then-eliminate — a large language model-based approach specifically designed for REG — drops 4.5 METEOR and 7.7 CIDEr when shifting from single to multi-target. GPT-4o mini, InternVL3-8B, and Qwen2.5-VL-7B all follow the same pattern. Zero-shot MLLMs generate more fluent sentences (higher METEOR) but their CIDEr scores show they are not actually aligning well with what humans would write to describe a set of objects. The shared-semantics reasoning that GREG requires — noticing that four selected people are “all wearing hats” rather than describing each hat individually — is simply not there yet.

| Method | LLM-based | METEOR (Single) | METEOR (Multi) | CIDEr (Single) | CIDEr (Multi) |

|---|---|---|---|---|---|

| DisCLIP | ✗ | 10.8 | 9.9 | 17.4 | 9.3 |

| IREG | ✗ | 12.9 | 9.3 | 14.7 | 9.8 |

| GLaMM | ✓ | 14.0 | 10.7 | 18.3 | 11.9 |

| unleash-then-eliminate | ✓ | 18.6 | 14.1 | 22.5 | 14.8 |

| GPT-4o mini (zero-shot) | ✓ | 15.4 | 13.2 | 16.4 | 9.3 |

| Qwen2.5-VL-7B (zero-shot) | ✓ | 16.3 | 14.6 | 16.0 | 9.9 |

The numbers make GREG the hardest of the three GREx tasks and the one with the most headroom for future work. The ability to describe a user-selected group of objects with a single, natural, non-redundant sentence requires understanding group identity and shared semantics at a level that current models simply do not possess.

It Also Works on Video

The paper includes one result that is easy to overlook but speaks to the generality of the approach. ReLA was adapted for Referring Video Object Segmentation (RVOS) — applying the model frame-by-frame and adding temporal modeling — and evaluated on two benchmarks: MeViS and Ref-YouTube-VOS.

On MeViS, a challenging benchmark emphasising motion expressions, ReLA achieves J&F of 44.6 — a substantial improvement over the previous best of 37.2. On Ref-YouTube-VOS, it reaches 65.7 J&F, ahead of all prior methods. Neither of these datasets was used to design ReLA, and neither requires the multi-target capability that motivated its architecture. The explicit relationship modeling simply turns out to be useful broadly, not just in the setting it was built for.

What Remains Open

The paper is clear-eyed about its limitations, which is worth acknowledging explicitly. No-target identification remains imperfect: around 40% of no-target samples are still missed by ReLA’s dedicated no-target classifier. Many of these are genuinely deceptive expressions — describing something visually plausible in the image context but not actually present — and the authors suspect that closing this gap will require reasoning about fine-grained visual-semantic alignment more deeply than current architectures support.

For GREC specifically, the multi-target bounding box setting introduces a box-counting challenge that ripples through everything downstream. Getting the right number of boxes requires accurate target count prediction; getting the right boxes requires accurate grounding of each instance; and both must succeed simultaneously for Pr@F1 to score a sample as correct. The strict metric is the right metric — it reflects what users actually need — but it means that even partial failures count as complete failures.

The eight future directions the paper outlines — improved no-target handling, fine-grained relationship modeling, robustness to noise, long-range dependency modeling, counting and ordinal expressions, cross-modal fusion, LLM integration, and multilingual extensions — amount to a research agenda that will keep the community busy for years. GREx provides the benchmarks and the dataset to measure progress on all of them.

Why This Matters Beyond Academic Benchmarks

The practical motivation is not hard to construct. Image editing tools that let users say “remove the people in the background” need multi-target segmentation. Visual search systems that need to return “no match” rather than a wrong match need no-target support. Caption generation for groups of people or objects requires GREG-style multi-object expression generation. These are not hypothetical future applications — they are active development areas at companies building multimodal products right now.

What the GREx paper provides is the right evaluation infrastructure. Without a benchmark that tests multi-target and no-target capability explicitly, models will continue to be trained and deployed with these blind spots intact. The single-target assumption has been invisible precisely because no benchmark made it visible. gRefCOCO changes that, and the performance gaps it reveals — 42% Pr@F1 for MDETR on GREC; 40% of no-target samples missed by the best model — give future work a concrete target to improve against.

Complete Proposed Model Code (PyTorch)

The implementation below is the complete end-to-end ReLA model — visual encoder, language encoder, FPN neck, pixel decoder, RIA, RLA, target count head, multi-task loss, training step, and inference function — all in a single file. This maps to Sections 4.1 and 4.2 of the paper and includes a runnable smoke test. The official implementation is available at henghuiding.com/GREx.

# ─── 1. IMPORTS & CONFIGURATION ──────────────────────────────────────────────

import math

import torch

import torch.nn as nn

import torch.nn.functional as F

from dataclasses import dataclass, field

from typing import Dict, List, Optional, Tuple

@dataclass

class ReLAConfig:

"""

Hyperparameters matching the paper's implementation (Section 5.1).

Visual encoder: Swin-B. Language encoder: BERT-base-uncased.

Trained for 150K iterations, batch=48, 8×V100 GPUs.

"""

# Image / patch settings

img_size: int = 480 # Input resolution (480×480)

patch_size: int = 4 # Swin patch size

in_channels: int = 3

# Swin-B backbone

embed_dim: int = 128 # Base channel dim (Swin-B = 128)

swin_depths: Tuple = (2, 2, 18, 2)

swin_heads: Tuple = (4, 8, 16, 32)

window_size: int = 12

# Language encoder (BERT-base)

lang_dim: int = 768 # BERT hidden dimension

# Shared feature dim

feat_dim: int = 256 # Unified channel dim C across all modules

# ReLA-specific

num_regions: int = 100 # P^2 (P=10 per ablation Table 4)

pixel_dec_layers: int = 6 # Pixel decoder transformer layers

max_targets: int = 7 # N_O classes: {0,1,2,3,4,5,5+}

n_heads: int = 8 # Attention heads in RIA / RLA

dropout: float = 0.1

# Loss weights — Eq. (2): L = λM·LM + λB·LB + λxr·Lxr + λNO·LNO

lambda_mask: float = 2.0

lambda_box: float = 5.0

lambda_xr: float = 0.2

lambda_no: float = 1.0

# ─── 2. BOX UTILITIES & GIoU LOSS ────────────────────────────────────────────

def cxcywh_to_xyxy(boxes: torch.Tensor) -> torch.Tensor:

"""Convert [cx, cy, w, h] → [x1, y1, x2, y2]."""

cx, cy, w, h = boxes.unbind(-1)

return torch.stack([cx - w / 2, cy - h / 2,

cx + w / 2, cy + h / 2], dim=-1)

def box_iou_xyxy(b1: torch.Tensor, b2: torch.Tensor) -> torch.Tensor:

"""Pairwise IoU between two sets of [x1,y1,x2,y2] boxes. (B, N) shapes."""

inter_x1 = torch.max(b1[..., 0], b2[..., 0])

inter_y1 = torch.max(b1[..., 1], b2[..., 1])

inter_x2 = torch.min(b1[..., 2], b2[..., 2])

inter_y2 = torch.min(b1[..., 3], b2[..., 3])

inter = (inter_x2 - inter_x1).clamp(0) * (inter_y2 - inter_y1).clamp(0)

a1 = (b1[..., 2] - b1[..., 0]) * (b1[..., 3] - b1[..., 1])

a2 = (b2[..., 2] - b2[..., 0]) * (b2[..., 3] - b2[..., 1])

return inter / (a1 + a2 - inter + 1e-6)

def giou_loss(pred_cxcywh: torch.Tensor, tgt_cxcywh: torch.Tensor) -> torch.Tensor:

"""

Generalised IoU loss (Rezatofighi et al., CVPR 2019).

Following the box regression objective in MDETR [67] adopted by ReLA.

Args:

pred_cxcywh, tgt_cxcywh: (N, 4) tensors in [cx,cy,w,h] format.

"""

pred = cxcywh_to_xyxy(pred_cxcywh)

tgt = cxcywh_to_xyxy(tgt_cxcywh)

iou = box_iou_xyxy(pred, tgt)

# Enclosing box

enc_x1 = torch.min(pred[..., 0], tgt[..., 0])

enc_y1 = torch.min(pred[..., 1], tgt[..., 1])

enc_x2 = torch.max(pred[..., 2], tgt[..., 2])

enc_y2 = torch.max(pred[..., 3], tgt[..., 3])

enc_w = (enc_x2 - enc_x1).clamp(0)

enc_h = (enc_y2 - enc_y1).clamp(0)

enc_area = enc_w * enc_h + 1e-6

# Union area

a1 = pred_cxcywh[..., 2] * pred_cxcywh[..., 3]

a2 = tgt_cxcywh[..., 2] * tgt_cxcywh[..., 3]

inter = iou * (a1 + a2) / (1.0 + iou + 1e-6)

union = a1 + a2 - inter + 1e-6

giou = iou - (enc_area - union) / enc_area

return (1.0 - giou).mean()

# ─── 3. VISUAL ENCODER — SIMPLIFIED MULTI-SCALE BACKBONE ─────────────────────

class PatchEmbed(nn.Module):

"""

Partition image into non-overlapping patches then project to embed_dim.

Equivalent to the patch embedding in Swin Transformer (Liu et al., ICCV 2021).

In the full model this is replaced by the pretrained Swin-B.

"""

def __init__(self, img_size: int = 480, patch_size: int = 4,

in_ch: int = 3, embed_dim: int = 128):

super().__init__()

self.proj = nn.Conv2d(in_ch, embed_dim,

kernel_size=patch_size, stride=patch_size)

self.norm = nn.LayerNorm(embed_dim)

self.H = img_size // patch_size

self.W = img_size // patch_size

def forward(self, x: torch.Tensor) -> torch.Tensor:

"""(B, C, H, W) → (B, H*W, embed_dim)"""

x = self.proj(x) # (B, D, H, W)

B, D, H, W = x.shape

x = x.flatten(2).transpose(1, 2) # (B, HW, D)

return self.norm(x), H, W

class VisualEncoderStage(nn.Module):

"""

One stage of the backbone producing features at 1/2 the spatial resolution.

In production: replaced by the Swin-B pretrained stage.

Outputs features of shape (B, H*W, out_dim).

"""

def __init__(self, in_dim: int, out_dim: int, depth: int, n_heads: int,

downsample: bool = True):

super().__init__()

self.blocks = nn.ModuleList([

nn.TransformerEncoderLayer(

d_model=in_dim, nhead=n_heads,

dim_feedforward=in_dim * 4,

dropout=0.0, batch_first=True, norm_first=True,

) for _ in range(depth)

])

self.downsample = nn.Sequential(

nn.LayerNorm(in_dim),

nn.Linear(in_dim, out_dim),

) if downsample else nn.Identity()

self.down_spatial = downsample

self.out_dim = out_dim

def forward(self, x: torch.Tensor, H: int, W: int

) -> Tuple[torch.Tensor, int, int]:

"""(B, HW, C) → (B, H'W', out_dim)"""

for blk in self.blocks:

x = blk(x)

if self.down_spatial:

# 2× spatial downsampling via reshape + average pool

B, _, C = x.shape

x = x.view(B, H, W, C)

x = x[:, 0::2, 0::2, :] + x[:, 1::2, 0::2, :] + \

x[:, 0::2, 1::2, :] + x[:, 1::2, 1::2, :] # 2×2 merge

x = x / 4.0

H, W = H // 2, W // 2

x = x.view(B, H * W, C)

x = self.downsample(x)

return x, H, W

class VisualEncoder(nn.Module):

"""

Simplified 4-stage multi-scale visual encoder.

Produces three feature maps at resolutions 1/8, 1/16, 1/32 of the input,

matching the multi-scale output of Swin-B used for feature fusion in ReLA.

Channel dims follow Swin-B: [256, 512, 1024] at stages 2–4.

In the actual paper, a pretrained Swin-B with ImageNet-22K weights is used.

"""

def __init__(self, cfg: ReLAConfig):

super().__init__()

D = cfg.embed_dim # 128 for Swin-B

self.patch_embed = PatchEmbed(cfg.img_size, cfg.patch_size,

cfg.in_channels, D)

# Stage 1: 1/4 resolution, D channels

self.stage1 = VisualEncoderStage(D, D, cfg.swin_depths[0],

cfg.swin_heads[0], downsample=False)

# Stage 2: 1/8 resolution, 2D channels ← feature map C2

self.stage2 = VisualEncoderStage(D, D*2, cfg.swin_depths[1],

cfg.swin_heads[1], downsample=True)

# Stage 3: 1/16 resolution, 4D channels ← feature map C3

self.stage3 = VisualEncoderStage(D*2, D*4, cfg.swin_depths[2],

cfg.swin_heads[2], downsample=True)

# Stage 4: 1/32 resolution, 8D channels ← feature map C4

self.stage4 = VisualEncoderStage(D*4, D*8, cfg.swin_depths[3],

cfg.swin_heads[3], downsample=True)

def forward(self, x: torch.Tensor

) -> Tuple[torch.Tensor, torch.Tensor, torch.Tensor]:

"""

Args:

x: (B, 3, H, W) input image

Returns:

c2, c3, c4: multi-scale feature tensors (B, Hi*Wi, Ci)

"""

x, H, W = self.patch_embed(x) # (B, HW/16, D)

x, H, W = self.stage1(x, H, W) # 1/4

c2, H2, W2 = self.stage2(x, H, W) # 1/8

c3, H3, W3 = self.stage3(c2, H2, W2) # 1/16

c4, H4, W4 = self.stage4(c3, H3, W3) # 1/32

return c2, c3, c4

# ─── 4. LANGUAGE ENCODER — BERT WRAPPER ──────────────────────────────────────

class LanguageEncoder(nn.Module):

"""

Wraps BERT-base-uncased to produce token-level language features Ft.

Following the paper: BERT is frozen except for the last two layers.

Output dim is projected from lang_dim (768) to feat_dim (256).

In production, instantiate with:

from transformers import BertModel

bert = BertModel.from_pretrained('bert-base-uncased')

"""

def __init__(self, cfg: ReLAConfig):

super().__init__()

# Stub: replaces BertModel when transformers is available

self.hidden_size = cfg.lang_dim # 768

self.feat_dim = cfg.feat_dim # 256

# Simplified: single-layer self-attention to simulate BERT output

self.token_embed = nn.Embedding(30522, cfg.lang_dim) # BERT vocab size

self.pos_embed = nn.Embedding(512, cfg.lang_dim)

self.encoder = nn.TransformerEncoder(

nn.TransformerEncoderLayer(

d_model=cfg.lang_dim, nhead=12,

dim_feedforward=cfg.lang_dim * 4,

dropout=0.1, batch_first=True, norm_first=True,

), num_layers=2 # last 2 layers unfrozen

)

self.proj = nn.Linear(cfg.lang_dim, cfg.feat_dim)

def forward(self, input_ids: torch.Tensor,

attention_mask: Optional[torch.Tensor] = None

) -> torch.Tensor:

"""

Args:

input_ids: (B, Nt) tokenised expression

attention_mask: (B, Nt) 1=valid token, 0=pad

Returns:

Ft: (B, Nt, feat_dim) language features

"""

B, Nt = input_ids.shape

pos = torch.arange(Nt, device=input_ids.device).unsqueeze(0)

x = self.token_embed(input_ids) + self.pos_embed(pos)

if attention_mask is not None:

# MultiheadAttention expects True = ignore

key_pad = (attention_mask == 0)

x = self.encoder(x, src_key_padding_mask=key_pad)

else:

x = self.encoder(x)

return self.proj(x) # (B, Nt, feat_dim)

# ─── 5. FEATURE PYRAMID NECK — MULTI-SCALE PROJECTION ────────────────────────

class FPNNeck(nn.Module):

"""

Projects multi-scale backbone features to a unified feat_dim channel width,

then fuses them via bilinear upsampling following the FPN pattern.

Output Fi: (B, H2*W2, feat_dim) at 1/8 resolution — fed to PixelDecoder + RIA.

"""

def __init__(self, cfg: ReLAConfig):

super().__init__()

D = cfg.embed_dim

self.proj4 = nn.Linear(D * 8, cfg.feat_dim) # 1024 → 256

self.proj3 = nn.Linear(D * 4, cfg.feat_dim) # 512 → 256

self.proj2 = nn.Linear(D * 2, cfg.feat_dim) # 256 → 256

self.fuse = nn.Sequential(

nn.Linear(cfg.feat_dim, cfg.feat_dim),

nn.GELU(),

)

def forward(self, c2: torch.Tensor, H2: int, W2: int,

c3: torch.Tensor, H3: int, W3: int,

c4: torch.Tensor, H4: int, W4: int,

) -> Tuple[torch.Tensor, int, int]:

"""

Returns:

fi: (B, H2*W2, feat_dim) fused image features at 1/8 resolution

"""

p4 = self.proj4(c4) # (B, H4W4, C)

p3 = self.proj3(c3) # (B, H3W3, C)

p2 = self.proj2(c2) # (B, H2W2, C)

# Upsample p4 to H3×W3, add to p3

B, _, C = p4.shape

p4_up = p4.view(B, H4, W4, C).permute(0,3,1,2)

p4_up = F.interpolate(p4_up, size=(H3, W3), mode='bilinear',

align_corners=False)

p4_up = p4_up.permute(0,2,3,1).reshape(B, H3*W3, C)

p3 = p3 + p4_up

# Upsample fused p3 to H2×W2, add to p2

p3_up = p3.view(B, H3, W3, C).permute(0,3,1,2)

p3_up = F.interpolate(p3_up, size=(H2, W2), mode='bilinear',

align_corners=False)

p3_up = p3_up.permute(0,2,3,1).reshape(B, H2*W2, C)

fi = self.fuse(p2 + p3_up) # (B, H2W2, C)

return fi, H2, W2

# ─── 6. PIXEL DECODER — 6 TRANSFORMER DECODER LAYERS ─────────────────────────

class PixelDecoder(nn.Module):

"""

6-layer transformer decoder that refines image features Fi into

per-pixel mask features Fm used for regional mask generation.

Following the paper: pixel decoder with 6 Transformer decoder layers,

hidden dim 256. Mask features Fm ∈ R^{H×W×C} are produced here.

"""

def __init__(self, cfg: ReLAConfig):

super().__init__()

self.layers = nn.ModuleList([

nn.TransformerDecoderLayer(

d_model=cfg.feat_dim, nhead=cfg.n_heads,

dim_feedforward=cfg.feat_dim * 4,

dropout=cfg.dropout, batch_first=True, norm_first=True,

) for _ in range(cfg.pixel_dec_layers)

])

self.norm = nn.LayerNorm(cfg.feat_dim)

def forward(self, fi: torch.Tensor, ft: torch.Tensor) -> torch.Tensor:

"""

Args:

fi: Image features (B, HW, C) — memory

ft: Language features (B, Nt, C) — cross-attention key/value

Returns:

fm: Mask features (B, HW, C)

"""

x = fi

for layer in self.layers:

x = layer(tgt=x, memory=ft)

return self.norm(x) # (B, HW, C) = Fm

# ─── 7. REGION-IMAGE CROSS ATTENTION (RIA) ───────────────────────────────────

class RegionImageAttention(nn.Module):

"""

Implements RIA — Equations (3) and (4) from the paper.

P^2 learnable region-based queries Qr ∈ R^{P²×C} dynamically

gather spatially relevant features from the full image feature map Fi.

Each query is supervised by a 'minimap' of the ground-truth mask so that

each region corresponds to a meaningful spatial area of the image —

unlike ViT's fixed hard-split patches.

Ablation (Table 2) shows this module provides +5.63% gIoU over hard-split.

"""

def __init__(self, cfg: ReLAConfig):

super().__init__()

self.P2 = cfg.num_regions # P^2 = 100

self.dim = cfg.feat_dim # C = 256

# Learnable region queries — each linked to a spatial patch via minimap

self.queries = nn.Parameter(

torch.empty(cfg.num_regions, cfg.feat_dim).normal_(std=0.02)

)

# Projection matrices Wik, Wiv ∈ R^{C×C}

self.W_ik = nn.Linear(cfg.feat_dim, cfg.feat_dim, bias=False)

self.W_iv = nn.Linear(cfg.feat_dim, cfg.feat_dim, bias=False)

self.act = nn.GELU()

self.scale = cfg.feat_dim ** -0.5

def forward(self, fi: torch.Tensor

) -> Tuple[torch.Tensor, torch.Tensor]:

"""

Args:

fi: (B, HW, C) flattened image features

Returns:

fr_prime: (B, P², C) region image features

ari: (B, P², HW) attention maps for minimap supervision

"""

B = fi.size(0)

Qr = self.queries.unsqueeze(0).expand(B, -1, -1) # (B, P², C)

K = self.act(self.W_ik(fi)) # (B, HW, C)

V = self.act(self.W_iv(fi)) # (B, HW, C)

# Ari = Softmax(Qr · K^T) ∈ R^{B × P² × HW} [Eq. 3]

ari = F.softmax(

torch.bmm(Qr, K.transpose(1, 2)) * self.scale, dim=-1

)

# F'r = Ari · V ∈ R^{B × P² × C} [Eq. 4]

fr_prime = torch.bmm(ari, V)

return fr_prime, ari

# ─── 8. REGION-LANGUAGE CROSS ATTENTION (RLA) ────────────────────────────────

class RegionLanguageAttention(nn.Module):

"""

Implements RLA — Equation (5) from the paper.

Two sub-modules capture complementary interactions:

① Region self-attention → region-region dependencies (Fr1)

② Region-language cross-attention → word-region alignment (Fr2)

Ablation (Table 3) shows: language att. alone +2.02% gIoU,

region self-att. alone +3.89% gIoU, both together +5.39% gIoU.

Final fused features: Fr = MLP(F'r + Fr1 + Fr2)

"""

def __init__(self, cfg: ReLAConfig):

super().__init__()

# ① Region-region self-attention

self.region_self_attn = nn.MultiheadAttention(

cfg.feat_dim, cfg.n_heads, dropout=cfg.dropout, batch_first=True

)

# ② Region-language cross-attention projections

self.W_lq = nn.Linear(cfg.feat_dim, cfg.feat_dim, bias=False)

self.W_lk = nn.Linear(cfg.feat_dim, cfg.feat_dim, bias=False)

self.act = nn.GELU()

self.scale = cfg.feat_dim ** -0.5

# Fusion MLP

self.norm = nn.LayerNorm(cfg.feat_dim)

self.mlp = nn.Sequential(

nn.Linear(cfg.feat_dim, cfg.feat_dim * 4),

nn.GELU(),

nn.Dropout(cfg.dropout),

nn.Linear(cfg.feat_dim * 4, cfg.feat_dim),

)

self.dropout = nn.Dropout(cfg.dropout)

def forward(self, fr_prime: torch.Tensor,

ft: torch.Tensor) -> torch.Tensor:

"""

Args:

fr_prime: (B, P², C) region image features from RIA

ft: (B, Nt, C) language token features from BERT

Returns:

fr: (B, P², C) fused, relationship-aware region features

"""

# ① Region-region self-attention → Fr1 [captures spatial co-occurrence]

fr1, _ = self.region_self_attn(fr_prime, fr_prime, fr_prime)

# ② Region-language cross-attention → Fr2 [Eq. 5]

# Al = Softmax(σ(F'r·Wlq) · σ(Ft·Wlk)^T) ∈ R^{P² × Nt}

Q = self.act(self.W_lq(fr_prime)) # (B, P², C)

K = self.act(self.W_lk(ft)) # (B, Nt, C)

Al = F.softmax(

torch.bmm(Q, K.transpose(1, 2)) * self.scale, dim=-1

) # (B, P², Nt)

fr2 = torch.bmm(Al, ft) # (B, P², C)

# Fuse: Fr = MLP(F'r + Fr1 + Fr2) with residual

fused = fr_prime + self.dropout(fr1) + self.dropout(fr2)

fr = fused + self.mlp(self.norm(fused))

return fr # (B, P², C)

# ─── 9. TARGET COUNT HEAD — N_O ───────────────────────────────────────────────

class TargetCountHead(nn.Module):

"""

Predicts the number of target objects N_O ∈ {0,1,2,3,4,5,5+}.

This head is critical for GREC: predicting the right number of boxes

is a prerequisite for Pr@(F1=1, IoU≥0.5). Replacing N_O with a binary

classifier drops Pr@F1 from 61.90% to 37.58% (Table 5).

For GRES, N_O has minimal effect (<0.5% change) since GRES outputs

a binary mask without instance-level differentiation.

Input: global average pooling over all P² region features Fr.

"""

def __init__(self, cfg: ReLAConfig):

super().__init__()

dim = cfg.feat_dim

self.pool = nn.AdaptiveAvgPool1d(1) # pool over P² regions

self.head = nn.Sequential(

nn.LayerNorm(dim),

nn.Linear(dim, dim // 2),

nn.GELU(),

nn.Dropout(cfg.dropout),

nn.Linear(dim // 2, cfg.max_targets), # 7 classes: {0,1,2,3,4,5,5+}

)

def forward(self, fr: torch.Tensor) -> torch.Tensor:

"""

Args:

fr: (B, P², C) region features

Returns:

logits: (B, max_targets) — softmax externally for cross-entropy loss

"""

pooled = self.pool(fr.transpose(1, 2)).squeeze(-1) # (B, C)

return self.head(pooled) # (B, 7)

# ─── 10. RELA BLOCK — FULL RELATIONSHIP MODELING ─────────────────────────────

class ReLABlock(nn.Module):

"""

Full ReLAtionship modeling block combining RIA → RLA → task heads.

Outputs for GRES:

- xr (B, P²) region target probabilities (supervised by minimap)

- mask (B, HW) segmentation mask via weighted region mask fusion

Outputs for GREC:

- boxes (B, P², 4) per-region bounding box predictions

- N_O (B, 7) target count logits

The weighted mask fusion (Eq. 1): M = Σ_n (xr_n · Mr_n)

achieves flexible region shapes since each Mr_n is computed by

multiplying the region filter Ff with the pixel decoder output Fm.

"""

def __init__(self, cfg: ReLAConfig):

super().__init__()

C = cfg.feat_dim

self.ria = RegionImageAttention(cfg)

self.rla = RegionLanguageAttention(cfg)

# GRES: scalar probability xr per region ∈ [0, 1]

self.region_prob = nn.Linear(C, 1)

# GRES: region filter Ff ∈ R^{P² × C} for mask generation

self.region_filter = nn.Sequential(nn.LayerNorm(C), nn.Linear(C, C))

# GREC: bounding box head — predicts [cx, cy, w, h] normalised to [0,1]

self.box_head = nn.Sequential(

nn.LayerNorm(C),

nn.Linear(C, C // 2),

nn.GELU(),

nn.Linear(C // 2, 4),

)

# GREC: target count head N_O

self.count_head = TargetCountHead(cfg)

def forward(

self,

fi: torch.Tensor, # (B, HW, C) image features from FPN

ft: torch.Tensor, # (B, Nt, C) language features from BERT

fm: torch.Tensor, # (B, HW, C) mask features from PixelDecoder

) -> Dict[str, torch.Tensor]:

"""

Returns dict with keys:

'mask' (B, HW) GRES output mask

'xr' (B, P²) region probabilities (for minimap loss)

'region_masks' (B, P², HW) individual regional masks Mr

'boxes' (B, P², 4) GREC box predictions (cx,cy,w,h ∈ [0,1])

'count_logits' (B, 7) N_O target count logits

"""

# ── Step 1: RIA — dynamic soft-region assembly ──────────────────────

fr_prime, ari = self.ria(fi) # (B,P²,C), (B,P²,HW)

# ── Step 2: RLA — region-region + region-language interaction ───────

fr = self.rla(fr_prime, ft) # (B, P², C)

# ── Step 3: GRES head ────────────────────────────────────────────────

# Region probability map xr ∈ [0,1] (B, P²)

xr = torch.sigmoid(self.region_prob(fr).squeeze(-1))

# Region filter Ff (B, P², C)

ff = self.region_filter(fr)

# Regional masks: Mr = σ(Ff × Fm^T) (B, P², HW) [Eq. 4 analogue]

mr = torch.sigmoid(torch.bmm(ff, fm.transpose(1, 2)))

# Weighted aggregation M = Σ_n(xr_n · Mr_n) [Eq. 1]

mask = (xr.unsqueeze(-1) * mr).sum(dim=1) # (B, HW)

# ── Step 4: GREC head ────────────────────────────────────────────────

boxes = torch.sigmoid(self.box_head(fr)) # (B, P², 4)

count_logits = self.count_head(fr) # (B, 7)

return {

"mask": mask,

"xr": xr,

"region_masks": mr,

"boxes": boxes,

"count_logits": count_logits,

}

# ─── 11. FULL RELA MODEL ──────────────────────────────────────────────────────

class ReLA(nn.Module):

"""

Complete ReLA model for joint GRES and GREC.

Architecture (Section 4.1 / Fig. 7):

Image → VisualEncoder (Swin-B) → FPNNeck

Text → LanguageEncoder (BERT)

Fi, Ft → PixelDecoder → Fm (mask features)

Fi, Ft → ReLABlock → mask, boxes, N_O, xr

Multi-task training jointly optimises GRES and GREC (Table 6 shows

joint training matches or slightly outperforms single-task variants).

Note: In production, replace VisualEncoder with:

from transformers import AutoModel

self.visual = AutoModel.from_pretrained('microsoft/swin-base-patch4-window12-384')

"""

def __init__(self, cfg: ReLAConfig):

super().__init__()

self.cfg = cfg

self.visual_enc = VisualEncoder(cfg)

self.lang_enc = LanguageEncoder(cfg)

self.fpn = FPNNeck(cfg)

self.pixel_dec = PixelDecoder(cfg)

self.rela_block = ReLABlock(cfg)

# AdamW, lr=1e-5, weight_decay=0.01, lr drops 10× at 11K and 140K iters

self._init_weights()

def _init_weights(self) -> None:

"""Xavier/normal initialisation for linear layers."""

for m in self.modules():

if isinstance(m, nn.Linear):

nn.init.xavier_uniform_(m.weight)

if m.bias is not None:

nn.init.zeros_(m.bias)

elif isinstance(m, nn.LayerNorm):

nn.init.ones_(m.weight)

nn.init.zeros_(m.bias)

def forward(

self,

images: torch.Tensor, # (B, 3, H, W)

input_ids: torch.Tensor, # (B, Nt)

attention_mask: Optional[torch.Tensor] = None,

) -> Dict[str, torch.Tensor]:

"""

Args:

images: (B, 3, 480, 480) normalised input images

input_ids: (B, Nt) tokenised referring expression

attention_mask: (B, Nt) BERT padding mask

Returns:

outputs dict — see ReLABlock.forward()

"""

# ── Visual encoding (multi-scale backbone) ──────────────────────────

c2, c3, c4 = self.visual_enc(images) # 1/8, 1/16, 1/32 features

B = images.size(0)

# Recover spatial dims from sequence lengths

HW2 = c2.size(1); H2 = W2 = int(HW2 ** 0.5)

HW3 = c3.size(1); H3 = W3 = int(HW3 ** 0.5)

HW4 = c4.size(1); H4 = W4 = int(HW4 ** 0.5)

# ── Language encoding ────────────────────────────────────────────────

ft = self.lang_enc(input_ids, attention_mask) # (B, Nt, C)

# ── Feature pyramid neck — fuse multi-scale visual features ─────────

fi, H, W = self.fpn(c2, H2, W2, c3, H3, W3, c4, H4, W4)

# fi: (B, HW, C=256)

# ── Pixel decoder — produce mask features Fm ─────────────────────────

fm = self.pixel_dec(fi, ft) # (B, HW, C)

# ── ReLA block — relationship modeling + task heads ──────────────────

outputs = self.rela_block(fi, ft, fm)

return outputs # dict of all predictions

# ─── 12. MULTI-TASK LOSS — EQUATION (2) ──────────────────────────────────────

class ReLALoss(nn.Module):

"""

Multi-task training loss from Eq. (2):

L = λM·LM + λB·LB + λxr·Lxr + λNO·LNO

LM — Binary cross-entropy on segmentation mask (GRES)

LB — L1 + GIoU loss on bounding boxes (GREC)

Lxr — BCE on region minimap (GRES supervision signal)

LNO — Cross-entropy on target count (GREC)

Default weights from ablation Table 8:

λM=2.0, λB=5.0, λxr=0.2, λNO=1.0

"""

def __init__(self, cfg: ReLAConfig):

super().__init__()

self.lam_m = cfg.lambda_mask

self.lam_b = cfg.lambda_box

self.lam_xr = cfg.lambda_xr

self.lam_no = cfg.lambda_no

def forward(

self,

outputs: Dict[str, torch.Tensor],

mask_gt: torch.Tensor, # (B, HW) binary float

minimap_gt: torch.Tensor, # (B, P²) float in [0,1]

count_gt: Optional[torch.Tensor] = None, # (B,) long {0..6}

boxes_gt: Optional[torch.Tensor] = None, # (B, N, 4) [cx,cy,w,h]

) -> Dict[str, torch.Tensor]:

"""

Returns dict with 'total' loss and individual components.

"""

losses = {}

# LM — mask segmentation BCE

losses["mask"] = F.binary_cross_entropy(

outputs["mask"].clamp(1e-6, 1 - 1e-6), mask_gt

)

# Lxr — minimap BCE (links region queries to spatial patches)

losses["xr"] = F.binary_cross_entropy(

outputs["xr"].clamp(1e-6, 1 - 1e-6), minimap_gt

)

total = self.lam_m * losses["mask"] + self.lam_xr * losses["xr"]

# LNO — target count classification

if count_gt is not None:

losses["count"] = F.cross_entropy(outputs["count_logits"], count_gt)

total = total + self.lam_no * losses["count"]

# LB — L1 + GIoU box regression

if boxes_gt is not None and boxes_gt.numel() > 0:

N = boxes_gt.size(1)

pred_boxes = outputs["boxes"][:, :N, :]

losses["l1"] = F.l1_loss(pred_boxes, boxes_gt)

losses["giou"] = giou_loss(

pred_boxes.reshape(-1, 4), boxes_gt.reshape(-1, 4)

)

total = total + self.lam_b * (losses["l1"] + losses["giou"])

losses["total"] = total

return losses

# ─── 13. TRAINING STEP ───────────────────────────────────────────────────────

def train_step(

model: ReLA,

criterion: ReLALoss,

optimizer: torch.optim.Optimizer,

batch: Dict[str, torch.Tensor],

device: torch.device,

) -> Dict[str, float]:

"""

One forward + backward + optimiser step.

The paper uses AdamW (lr=1e-5, weight_decay=0.01) and trains for

150K iterations with batch size 48 on 8 × 32G V100 GPUs.

Learning rate drops by 10× at iterations 11K and 140K.

Args:

batch keys: 'images', 'input_ids', 'attention_mask',

'mask_gt', 'minimap_gt',

'count_gt' (optional), 'boxes_gt' (optional)

"""

model.train()

images = batch["images"].to(device)

ids = batch["input_ids"].to(device)

attn = batch.get("attention_mask")

if attn is not None:

attn = attn.to(device)

mask_gt = batch["mask_gt"].float().to(device)

minimap_gt = batch["minimap_gt"].float().to(device)

count_gt = batch.get("count_gt")

boxes_gt = batch.get("boxes_gt")

if count_gt is not None:

count_gt = count_gt.long().to(device)

if boxes_gt is not None:

boxes_gt = boxes_gt.float().to(device)

optimizer.zero_grad()

outputs = model(images, ids, attn)

losses = criterion(outputs, mask_gt, minimap_gt, count_gt, boxes_gt)

losses["total"].backward()

nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0)

optimizer.step()

return {k: v.item() for k, v in losses.items()}

# ─── 14. INFERENCE ───────────────────────────────────────────────────────────

@torch.no_grad()

def inference(

model: ReLA,

images: torch.Tensor,

input_ids: torch.Tensor,

attention_mask: Optional[torch.Tensor],

img_h: int, img_w: int,

device: torch.device,

no_target_thresh: float = 0.5,

) -> Dict[str, torch.Tensor]:

"""

Run inference and return GRES mask + GREC boxes.

For GRES: if N_O predicts 0 (no target), mask is zeroed out.

For GREC: N_O determines how many boxes to return (top-N_O by confidence xr).

Args:

no_target_thresh: confidence below which mask is suppressed.

img_h, img_w: original image spatial dims for mask upsampling.

Returns dict:

'mask' (1, img_h, img_w) — binary segmentation mask

'boxes' (N_O, 4) — top bounding boxes [cx,cy,w,h]

'no_target' bool — True if expression matches nothing

"""

model.eval()

images = images.to(device)

input_ids = input_ids.to(device)

if attention_mask is not None:

attention_mask = attention_mask.to(device)

outputs = model(images, input_ids, attention_mask)

# ── Determine N_O ────────────────────────────────────────────────────────

count_pred = outputs["count_logits"].argmax(dim=-1).item() # {0..6}

no_target = (count_pred == 0)

# ── GRES mask ─────────────────────────────────────────────────────────────

B, HW = outputs["mask"].shape

H_feat = W_feat = int(HW ** 0.5)

mask_feat = outputs["mask"].view(B, 1, H_feat, W_feat)

mask_up = F.interpolate(mask_feat, size=(img_h, img_w),

mode='bilinear', align_corners=False)

mask_bin = (mask_up.squeeze(1) > no_target_thresh).float()

if no_target:

mask_bin = torch.zeros_like(mask_bin)

# ── GREC boxes ────────────────────────────────────────────────────────────

if no_target or count_pred == 0:

selected_boxes = torch.empty(0, 4, device=device)

else:

# Rank P² regions by xr and take top-N_O

xr_scores = outputs["xr"][0] # (P²,)

actual_n = min(count_pred, xr_scores.size(0))

top_idx = xr_scores.topk(actual_n).indices

selected_boxes = outputs["boxes"][0, top_idx, :] # (N_O, 4)

return {

"mask": mask_bin.squeeze(0), # (img_h, img_w)

"boxes": selected_boxes, # (N_O, 4)

"no_target": no_target,

}

# ─── 15. SMOKE TEST ──────────────────────────────────────────────────────────

def _smoke_test() -> None:

"""

End-to-end smoke test with random dummy tensors.

Verifies forward pass, loss computation, and inference pipeline.

Uses a tiny config (P=4, feat_dim=32) for fast CPU execution.

"""

print("=== ReLA Full Model Smoke Test ===")

cfg = ReLAConfig(

img_size=64, embed_dim=16,

swin_depths=(1,1,1,1), swin_heads=(1,1,2,2),

lang_dim=32, feat_dim=32,

num_regions=16, # P=4

pixel_dec_layers=2,

max_targets=7, n_heads=4,

)

device = torch.device("cpu")

model = ReLA(cfg).to(device)

crit = ReLALoss(cfg)

B, Nt = 2, 10

batch = {

"images": torch.rand(B, 3, 64, 64),

"input_ids": torch.randint(0, 100, (B, Nt)),

"attention_mask": torch.ones(B, Nt, dtype=torch.long),

"mask_gt": (torch.rand(B, 256) > 0.5).float(),

"minimap_gt": (torch.rand(B, 16) > 0.5).float(),

"count_gt": torch.randint(0, 7, (B,)),

"boxes_gt": torch.rand(B, 2, 4),

}

# Training step

opt = torch.optim.AdamW(model.parameters(), lr=1e-3, weight_decay=0.01)

losses = train_step(model, crit, opt, batch, device)

for k, v in losses.items():

print(f" {k:12s}: {v:.4f}")

assert all(math.isfinite(v) for v in losses.values()), "Non-finite loss!"

print(" Training step ✓")

# Inference

result = inference(

model, batch["images"][:1], batch["input_ids"][:1],

batch["attention_mask"][:1],

img_h=64, img_w=64, device=device,

)

print(f" mask shape: {result['mask'].shape}")

print(f" boxes shape: {result['boxes'].shape}")

print(f" no_target: {result['no_target']}")

print(" Inference ✓")

print("=== All checks passed ✓ ===")

if __name__ == "__main__":

_smoke_test()