10 Best ChatGPT Prompts for Data Analysis

You have a spreadsheet with 50,000 rows, a deadline in three hours, and absolutely no idea where the anomaly in column G is coming from. Or maybe you have clean data but no clue how to turn it into a story your stakeholders will actually read. This is where ChatGPT stops being a chatbot and starts being the data partner you always wished you had — one that writes Python, explains statistics without the condescension, and can generate a full business insights report from your pasted numbers in minutes.

Data analysis used to require three different skill sets: coding (Python or R), statistical knowledge, and the ability to communicate findings clearly in plain English. Most analysts are strong in one or two of those areas and quietly struggle with the third. ChatGPT does not replace any of those skills — but it dramatically lowers the cost of the weak spots. A marketing analyst who has never touched pandas can now clean a dataset. A data scientist who hates writing reports can now generate one. A business student running their first regression analysis can now understand what their p-value actually means.

The prompts in this guide are built around how ChatGPT’s Advanced Data Analysis feature (the Python code execution sandbox) actually behaves — not how a generic AI writing tool behaves. They account for the model’s tendency to default to overly commented code, the way it handles ambiguous column names, and the specific instructions that produce analysis reports your colleagues will want to read rather than politely ignore. Whether you work in Excel, pandas, SQL, or plain spoken English, there is a prompt here that will change how fast you move through data.

Why ChatGPT Handles Data Analysis Differently

The feature that makes ChatGPT genuinely useful for data analysis — rather than just interesting — is the Advanced Data Analysis tool (formerly called Code Interpreter). When you upload a CSV, Excel file, or paste data directly into the chat, ChatGPT does not just describe what it thinks the data contains. It actually executes Python code in a sandboxed environment, reads the file, runs real computations, and shows you the output. That distinction matters enormously. Most AI tools hallucinate statistical results when given data. ChatGPT with Advanced Data Analysis computes them.

Compared to Gemini or Claude in this specific use case, ChatGPT has a meaningful edge in the size and maturity of its code execution environment. The sandbox supports pandas, numpy, matplotlib, seaborn, scipy, scikit-learn, and most of the standard data science stack. You can upload a dataset, ask for a regression analysis, and get working Python code alongside the actual output — charts, tables, and coefficient values — all in the same response. Claude is a strong writer for explaining analysis, but its code execution environment is newer. Gemini’s data handling is improving but still less reliable for multi-step analytical workflows.

The other edge is GPT-5’s improved reasoning about statistical validity. Ask it whether your sample size supports a particular test, and it will give you a thoughtful answer that references the assumptions behind the test — not just a yes or no. That kind of methodological awareness is what separates a useful data analysis partner from an expensive autocomplete tool.

ChatGPT’s Advanced Data Analysis (Python sandbox) actually runs your code and computes real results — it does not hallucinate statistics. Upload your data file and use the prompts below for genuine, reproducible analysis rather than AI-generated guesswork.

Before You Start: How to Get the Best Results

Three setup choices determine whether your data analysis session is fast and accurate or frustrating and circular. Get these right before you paste a single prompt.

Enable Advanced Data Analysis. In ChatGPT, make sure you are using GPT-4o or GPT-5, and that the Advanced Data Analysis tool is active (it shows a chart icon in the input bar). Without it, ChatGPT cannot actually run code or read your uploaded files. It will write code that looks plausible but is not verified against your actual data — a different and less useful experience.

Upload your data first, then prompt. Attach your CSV, Excel, or JSON file before sending your analysis request. When you lead with the file, ChatGPT automatically infers column names, data types, and likely analytical directions. Prompting without the file forces it to write generic template code that you then have to adapt manually — slower and more error-prone.

Describe your goal in business terms, not technical terms. “Find which sales regions underperformed in Q4” produces better analysis than “run a descriptive statistics summary.” The business framing gives ChatGPT the context to decide which statistics matter and which visualisations are worth generating. Technical jargon in the prompt often produces technically correct but analytically useless output.

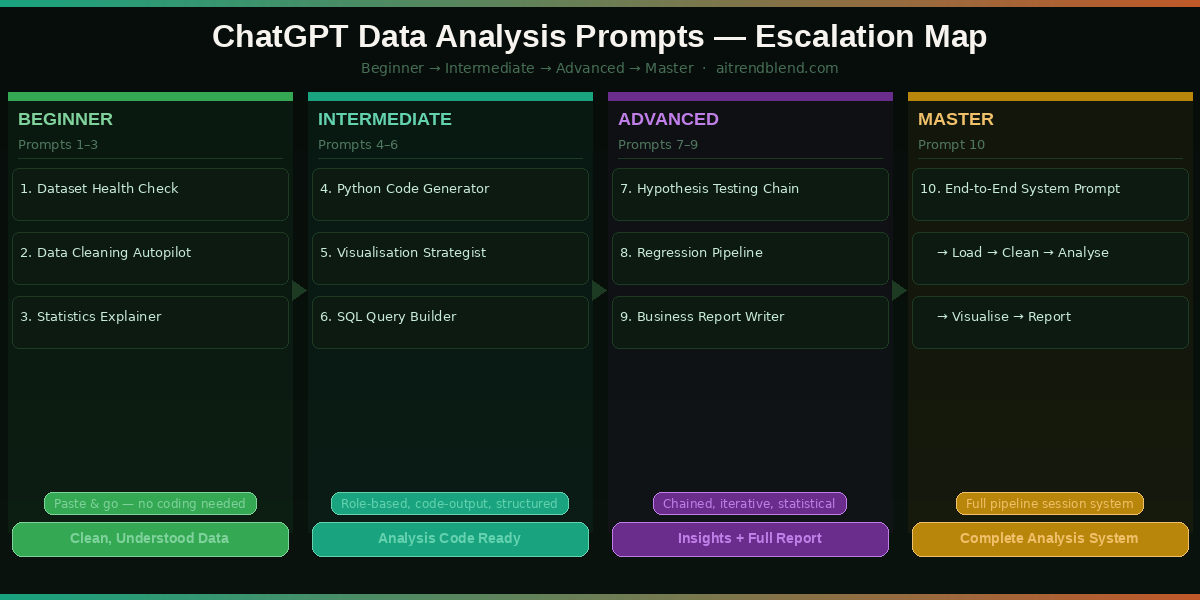

The 10 Best ChatGPT Prompts for Data Analysis

Prompt 1: The Instant Dataset Health Check

Before you analyse anything, you need to know what you are dealing with. Missing values, duplicated rows, wrong data types, outliers that will skew every calculation downstream — these are the invisible landmines in every real-world dataset. This prompt runs a complete health check in one shot and gives you a plain-English summary alongside the technical output. It is the first thing I run on any unfamiliar dataset.

Prompt 2: The Data Cleaning Autopilot

Data cleaning is the part of the job everyone knows is necessary and nobody enjoys. It typically eats 60–80% of an analyst’s time on any new project. This prompt does not just flag problems — it fixes them and shows you the before/after comparison so you can verify the changes were sensible. The key is asking for a cleaning report alongside the code, so you stay in control of every decision rather than having a black-box transformation happen invisibly.

Prompt 3: The Plain-English Statistics Explainer

Statistics outputs are full of numbers that most people cannot interpret on the fly — correlation coefficients, standard deviations, p-values, confidence intervals. This prompt runs descriptive statistics on your dataset and then does something most analysts forget to do: it explains what the numbers actually mean for your specific data, in sentences a non-statistician can act on. Paste this prompt to any stakeholder who asks “what does the data say?” and you will have a usable answer in under a minute.

Prompt 4: The Python Analysis Code Generator

Here is where many analysts get stuck: they know what analysis they need but not exactly how to write the pandas code to run it. Googling pandas syntax sends you down a Stack Overflow rabbit hole for twenty minutes. This prompt generates complete, runnable analysis code for any specific analytical question — including the parts most tutorials skip, like handling edge cases where groups have fewer than the minimum sample size, or dealing with NaN values mid-calculation.

Prompt 5: The Data Visualisation Strategist

Most people default to bar charts for everything. That is not always wrong, but it is often not the clearest way to show what your data actually says. This prompt first recommends the right chart types for your specific analysis goals — explaining why — and then generates publication-quality Python code to produce them. The recommendation step is the part that saves you time: stopping you from building a pie chart that obscures the trend a line chart would have shown immediately.

Prompt 6: The SQL Query Builder and Explainer

SQL is the language most business analysts actually use to pull data — and the one where a missing JOIN condition or an incorrect GROUP BY can silently produce totals that are off by an order of magnitude. This prompt does not just write SQL queries. It explains each clause in plain English, flags potential performance issues, and warns you about the specific gotchas (like NULL handling in aggregates) that trip up even experienced analysts on first drafts.

Prompts 1–6 give you clean data, descriptive statistics, custom Python analysis code, visualisations, and SQL queries. The advanced prompts below build toward full statistical testing, automated reporting, and a complete end-to-end data analysis system. Make sure Advanced Data Analysis is enabled before continuing.

“The goal is to turn data into information, and information into insight. ChatGPT handles the translation — but the question you ask still determines the answer you get.”

— Adapted from Carly Fiorina’s frequently quoted principle on data leadership

Prompt 7: The Statistical Hypothesis Testing Guide

Hypothesis testing is where data analysis gets genuinely misused — p-hacking, inappropriate test selection, assumptions that go unchecked. This chained prompt first helps you choose the right statistical test for your question, then checks whether your data meets the assumptions for that test, and only then runs it. That three-step validation process is what separates a defensible statistical conclusion from an embarrassing one that gets flagged in peer review or a board presentation.

Prompt 8: The Regression Analysis and Interpretation Engine

Regression is one of the most powerful and most misread tools in data analysis. Coefficients get interpreted incorrectly, multicollinearity goes undetected, and R² scores get reported without context. This prompt runs a complete regression pipeline — model building, diagnostics, interpretation — and produces output that a non-statistician can read alongside the technical summary that a statistician can verify. The result is a regression analysis that is both rigorous and communicable.

Prompt 9: The Business Insights Report Writer

You have done the analysis. Now you have to write a report that a VP, a client, or a non-technical team will actually read, remember, and act on. This is the step where most analysts lose momentum — the writing. This prompt turns your analytical findings into a structured narrative report with executive summary, section breakdowns, data-backed recommendations, and clear next steps. It is the difference between sending a colleague a Python notebook and sending them something they will open.

Prompt 10: The End-to-End Data Analysis System Prompt

This is the prompt you paste at the start of any serious data analysis session. It turns ChatGPT into a persistent data analysis partner that knows your dataset, your goals, your technical stack, and your output requirements before you ask a single analytical question. Think of it as configuring the environment before opening a Jupyter notebook — except the environment remembers your business context, not just your kernel state. Once this is set, every subsequent prompt in the session builds on a shared foundation rather than starting from scratch.

Common Mistakes and How to Fix Them

The prompts above work well when used correctly. Here are the five mistakes that consistently produce bad results — and the exact fix for each one.

Mistake 1 — Forgetting to upload the file. If you ask ChatGPT to “analyse my sales data” without attaching a file, it invents a plausible-looking analysis using fictional numbers. Always attach before prompting. If you see numbers in the output that you cannot trace back to your data, that is a sign ChatGPT is hallucinating rather than computing.

Mistake 2 — Asking for charts without specifying the insight. “Make a chart of revenue by month” produces a generic chart. “Make a chart that clearly shows whether Q4 revenue recovered after the August dip” produces a chart designed to answer a question. The framing difference is small; the output difference is significant.

| Mistake | ❌ Wrong Approach | ✅ Right Approach |

|---|---|---|

| No file uploaded | “Analyse my customer data” | Upload CSV first, then: “Analyse the uploaded customer data. Show me the output.” |

| Vague chart request | “Make me a bar chart” | “Make a chart that shows which product categories declined in Q4. Use insight-driven title.” |

| Skipping assumptions | “Run a t-test on these two groups” | “Check normality and equal variance first, then recommend and run the appropriate test.” |

| Trusting all statistics | Accept p-values and R² at face value | Ask: “What are the limitations of this result? What could make it unreliable?” |

| One-shot reports | “Write a report about my data” | Use Prompt 9 with full context: audience, intended action, and the specific findings to include. |

What ChatGPT Still Struggles With for Data Analysis

None of this works perfectly all the time, and the failure modes for data analysis are worth naming explicitly because some of them can produce results that look correct but are not.

The most serious limitation is what happens with very large datasets. Advanced Data Analysis has memory and file size constraints — if your CSV exceeds roughly 50MB or has more than a few hundred thousand rows, the session can time out, truncate the data without warning, or slow to unusable speeds. For genuinely large-scale analysis, ChatGPT is best used to write the code that you then run locally in your own environment, not to run the analysis itself inside the chat session.

The second limitation is domain-specific statistical nuance. ChatGPT handles standard statistical tests reliably but can give overconfident answers about edge cases in specialised domains — survival analysis in clinical trials, panel data models in econometrics, time-series models with structural breaks. If you are working at the frontier of a technical statistical discipline, treat ChatGPT as a starting point for your code and always have someone with domain expertise review the methodology. A good test: if ChatGPT does not spontaneously flag the key assumptions of the test you asked for, probe further before relying on the result.

Finally, ChatGPT cannot access live databases or real-time data feeds. Every analysis runs on data you have already exported and uploaded. For dashboards, live reporting, or continuous monitoring workflows, ChatGPT is a code generator for those pipelines — not the pipeline itself. Build the analysis logic with ChatGPT, then implement it in your own infrastructure.

Make Your Data Talk — One Prompt at a Time

What you have in these ten prompts is a structured progression from raw data to clean data, clean data to insight, insight to statistical validation, and validation to communication. That is the entire data analysis workflow — compressed into a set of prompts you can adapt to almost any dataset, domain, or business question. The escalation matters: Prompts 1–3 build the foundation, Prompts 4–6 run the core analysis, Prompts 7–9 handle rigour and reporting, and Prompt 10 turns every future session into a smooth, context-aware collaboration.

There is something deeper worth saying about what these prompts reflect. Good data analysis has always been partly a communication problem — the challenge of translating numbers into decisions. ChatGPT does not replace the analytical judgment that determines which questions matter. But it dramatically reduces the technical friction between having a question and getting a rigorous, communicable answer. For anyone who has stared at a pandas error for forty minutes or rewritten a stakeholder report three times because “it was too technical,” that friction reduction is genuinely valuable.

There are things these prompts cannot give you. They cannot replace domain expertise — an analyst who understands their industry will always ask better questions than one who does not. They cannot replace critical thinking about whether the data you have actually answers the question you are asking. And they cannot replace the judgment calls that happen when your findings are uncomfortable and someone needs to decide whether to act on them anyway. Those remain human responsibilities, and they are the ones that matter most.

ChatGPT’s data analysis capabilities are improving with each model update, and GPT-5’s improved long-context reasoning already makes multi-step analytical sessions noticeably more coherent than they were a year ago. The direction of travel — toward AI that can maintain analytical context across a full project rather than resetting with each conversation — suggests that the Prompt 10 system approach will become the standard way serious analysts work with AI in the next twelve to eighteen months. Start building that habit now, and you will be ahead of the curve when the tools catch up to the workflow.

Start Analysing Your Data Right Now

Open ChatGPT with GPT-4o or GPT-5, enable Advanced Data Analysis, upload your dataset, and paste Prompt 10’s system context. Your data has a story — let’s help you find it.

All prompts tested on ChatGPT (GPT-4o and GPT-5 with Advanced Data Analysis) as of March 2026. Statistical outputs depend on uploaded data quality and may vary. Always validate critical analytical results independently. This article is original editorial content by AITrendBlend.com and is not affiliated with OpenAI.