Agentic AI vs. Generative AI: What’s Actually Different — and Why It Changes Everything

The gap between generative AI and agentic AI is not just a feature difference. It is a fundamental shift in what the AI is actually doing. Generative AI gives you an answer. Agentic AI takes action. The distinction sounds simple until you try to build something real on top of either, and then the differences hit you fast.

This article is not going to drown you in jargon or wave vaguely at “the AI revolution.” What you’ll walk away with is a working mental model: what separates these two paradigms, where each genuinely excels, where each genuinely fails, and how to decide which one you actually need for a given task. We will look at real examples, honest trade-offs, and the current state of both as of 2026 — not the hyped version, the real one.

The AI space moves fast. Keeping these two concepts muddled costs you real time, real money, and sometimes real mistakes. Let’s fix that.

The Gap Is Bigger Than You Think

Here is where it gets interesting. Most people treat “Generative AI” and “Agentic AI” as points on a single spectrum, as if one is simply a more powerful version of the other. That framing is wrong, and it’s worth being precise about why.

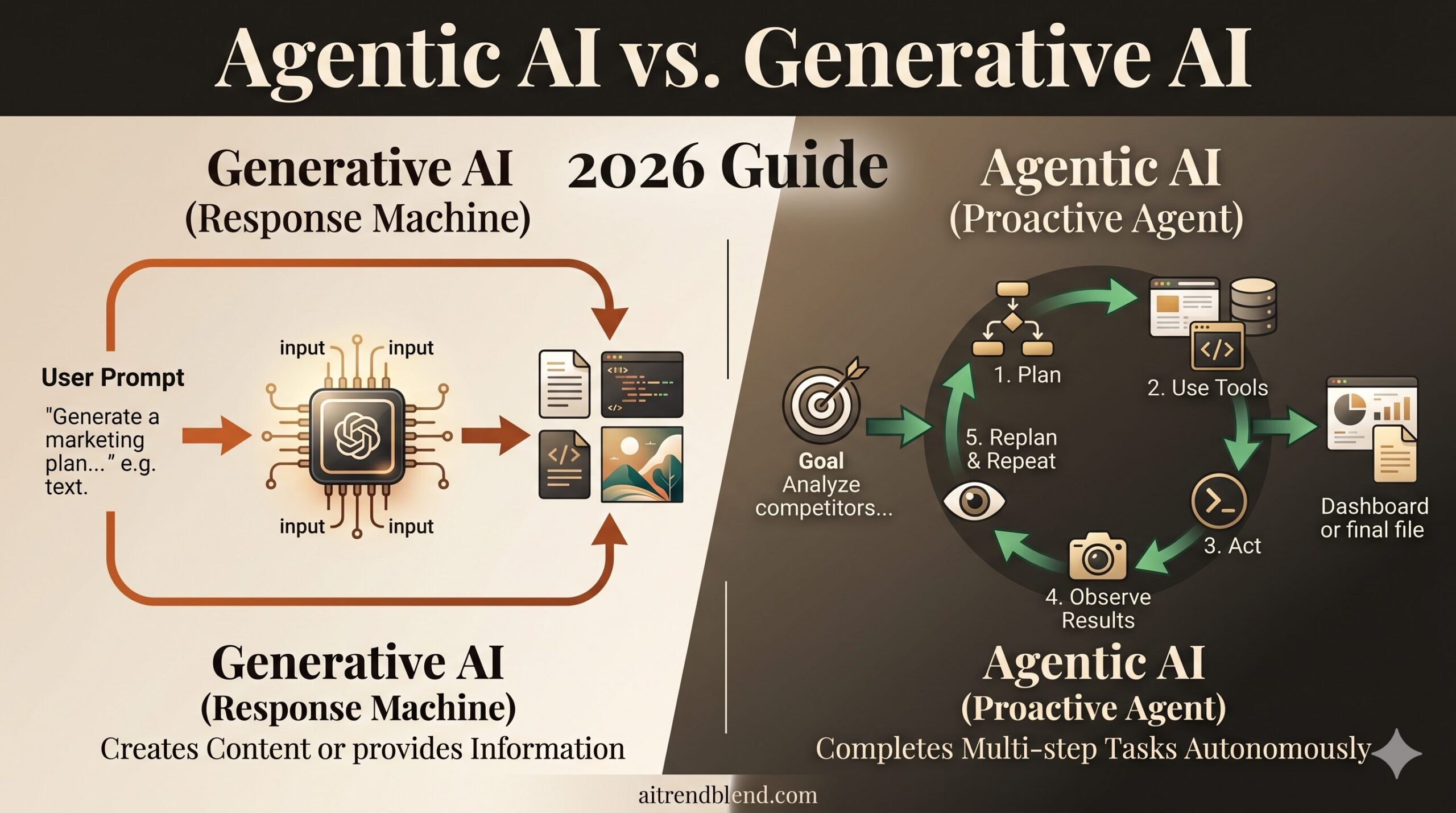

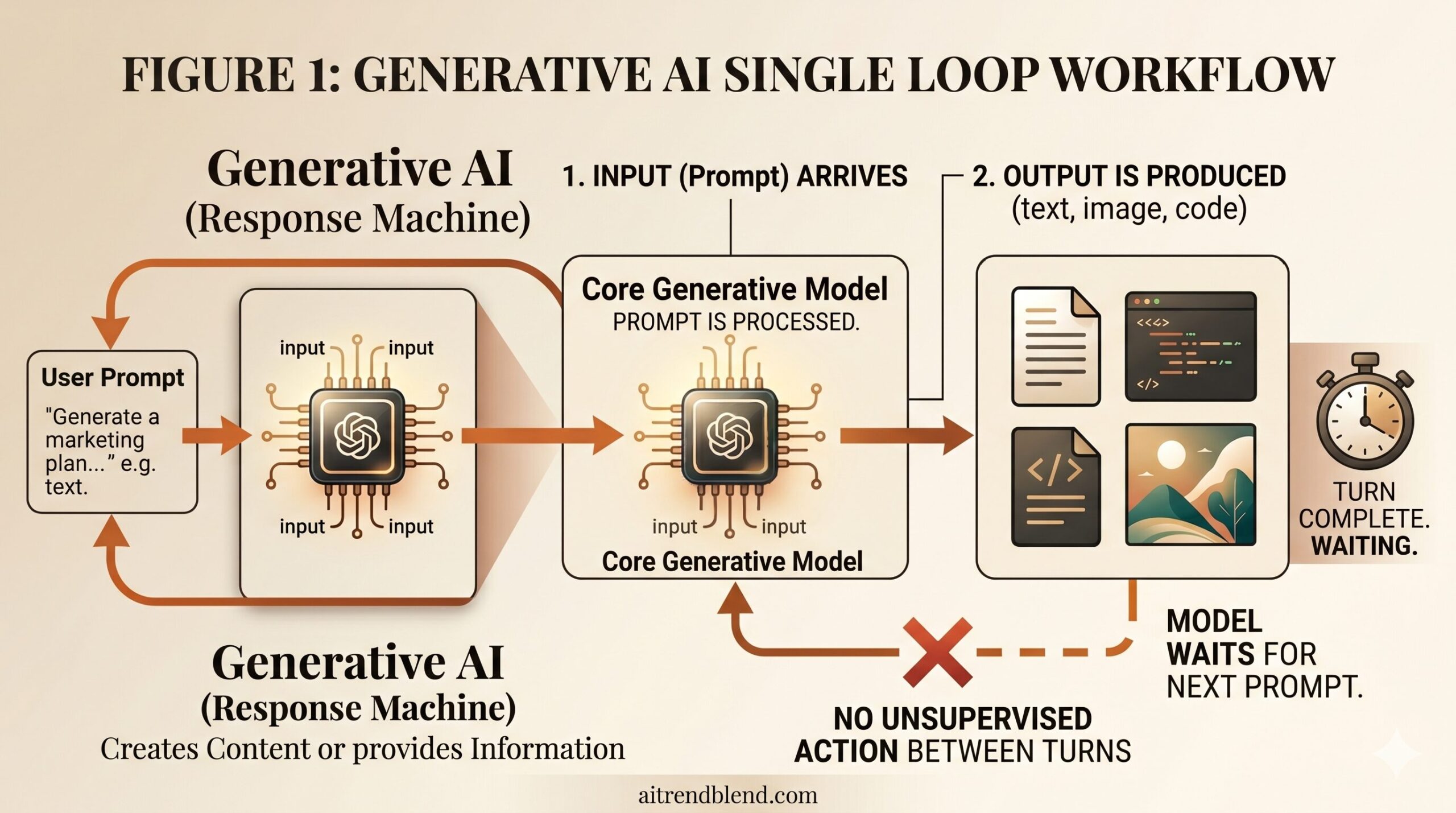

Generative AI — the category that includes models like Claude, ChatGPT, Gemini, and image generators like Midjourney — is fundamentally a response machine. You provide input. It produces output. Beautifully, often. But the transaction ends there. The model does not go on to do anything with what it produced. It has no concept of a next step. It cannot notice that its own answer was wrong and try again. The loop closes the moment text appears on your screen.

Agentic AI is built on a different assumption: that the goal matters more than a single response. An agent takes a high-level objective — “research this competitor and write a brief,” or “find the bug in this codebase and submit a fix” — and then figures out the steps itself. It uses tools. It browses the web, runs code, reads files, calls APIs, checks its own output, and if something breaks, it tries something else. The loop does not close after one response. It closes when the task is done — or when the agent gets stuck and asks you what to do next.

Generative AI is reactive — it answers what you ask. Agentic AI is proactive — it pursues a goal. Choosing the wrong one for your task doesn’t just mean worse results; it often means the tool cannot perform the task at all.

Think of it this way. Generative AI is like asking a brilliant chef to describe a recipe in detail. Agentic AI is like hiring that same chef, giving them access to your kitchen, and coming back in an hour to find dinner on the table. The underlying knowledge might be the same. The experience is not.

How Generative AI Actually Works

Generative AI models are trained on enormous datasets — text, code, images, audio — and learn statistical patterns within that data. When you prompt one, you are essentially asking it to complete a pattern in a way that is plausible given everything it has seen during training. The output feels like understanding because the model has compressed a staggering amount of human knowledge into its weights. It is not “understanding” in any philosophical sense. It’s pattern completion at enormous scale, done with extraordinary nuance.

The practical strengths are well established by now. Generative AI excels at writing and editing, code suggestions, summarizing long documents, translating, explaining complex concepts, brainstorming, and generating images or audio from descriptions. These are tasks where the value is in the artifact produced — a draft, a plan, a piece of code, an image. Once the artifact exists, the AI’s job is over.

Its weaknesses are also well known, even if people don’t always act accordingly. These models hallucinate — they produce confident-sounding text that is factually wrong. They have a knowledge cutoff, so anything that happened after training is invisible to them unless you feed it explicitly. They have no persistent memory between sessions by default, meaning every conversation starts cold. And they cannot take real-world actions — they cannot book your flight, run a test suite, or send an email. They can only write the text describing how to do those things.

“Generative AI gives you an incredibly capable thought partner. But when the session ends, it forgets you entirely — and it never moved a single file on your behalf.”

— aitrendblend.com editorial observation, 2026

Understanding these constraints is not pessimism. It is the foundation for using generative AI well. You delegate the thinking, the drafting, the explaining. You remain the agent who acts on what it produces.

What Agentic AI Changes About the Picture

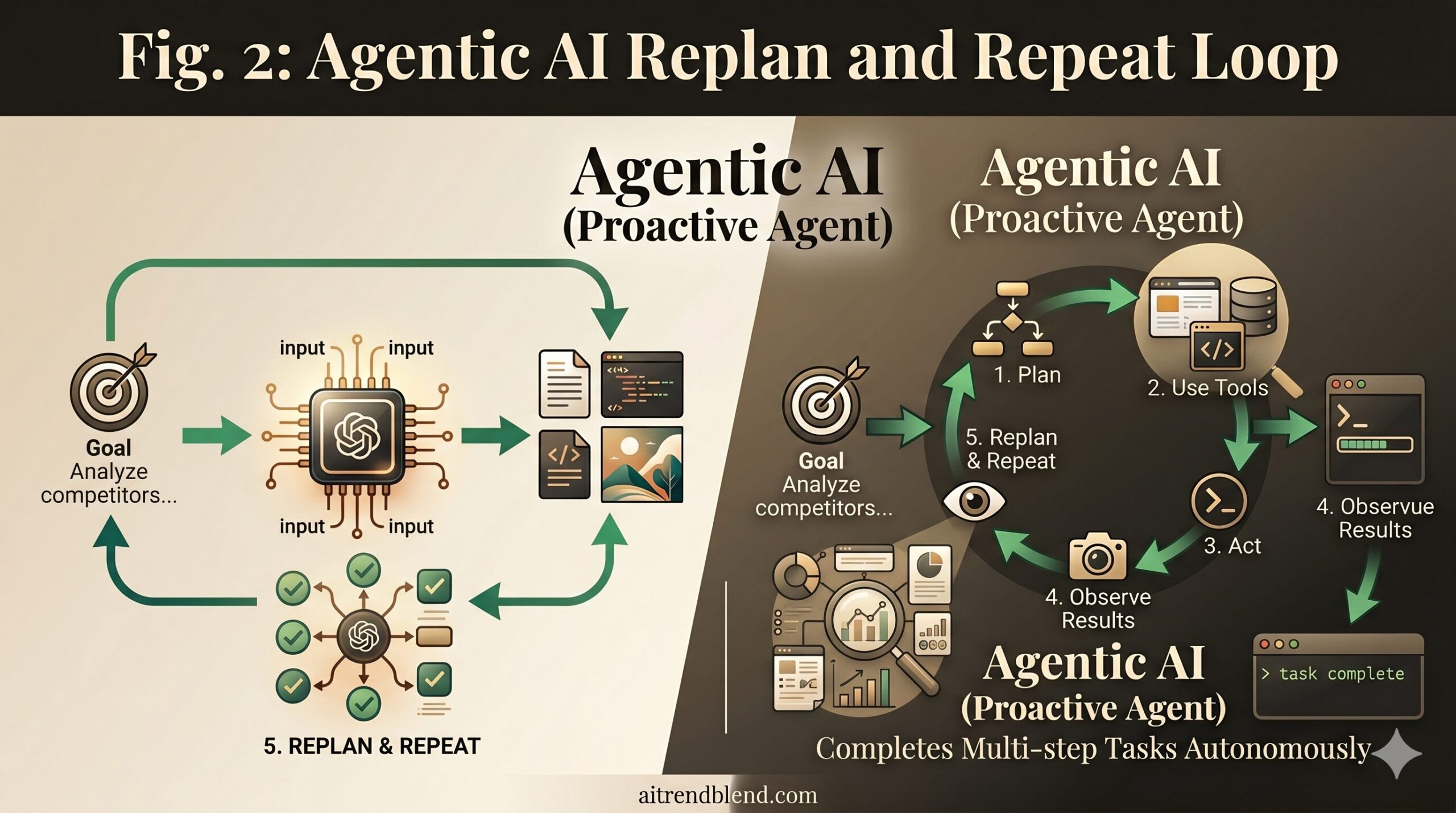

Agentic AI takes a language model — usually one of the same powerful generative models described above — and wraps it in an architecture that lets it reason, plan, use tools, and iterate. The underlying “brain” might be Claude, GPT-4o, or Gemini. What changes is everything around it.

The problem most people run into when first encountering AI agents is assuming they work by magic. They don’t. An agent typically operates in a loop: it receives a goal, generates a plan, executes the first step using a tool (a web browser, a code executor, a file system, an API), observes the result, updates its plan, and repeats. This pattern is often called a ReAct loop, short for “Reason + Act”: the model reasons about what to do, takes an action through a tool, and incorporates what it observes into its next thought.
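To make the loop concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular product implements agents: `scripted_model` is a hypothetical stand-in for a real language model call, and the two entries in `TOOLS` are toy functions standing in for browsers, shells, or APIs.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools: plain functions standing in for a browser, shell, or API.
def word_count(text: str) -> str:
    return str(len(text.split()))

def upper(text: str) -> str:
    return text.upper()

TOOLS: Dict[str, Callable[[str], str]] = {"word_count": word_count, "upper": upper}

History = List[Tuple[str, str, str]]  # (action, argument, observation)

def scripted_model(goal: str, history: History) -> Tuple[str, str]:
    """Stand-in for a language model: returns (action, argument).

    A real agent would send the goal and history to a model and parse its reply.
    """
    if not history:
        return "word_count", goal
    return "finish", f"The goal has {history[-1][2]} words."

def run_agent(goal: str, model=scripted_model, max_steps: int = 5) -> str:
    history: History = []
    for _ in range(max_steps):
        action, arg = model(goal, history)            # reason: decide the next step
        if action == "finish":
            return arg
        if action not in TOOLS:
            observation = f"error: unknown tool {action!r}"
        else:
            observation = TOOLS[action](arg)          # act: call the chosen tool
        history.append((action, arg, observation))    # observe: feed the result back
    return "stopped: step limit reached"

print(run_agent("find the bug and fix it"))
```

The `max_steps` cap is a design choice worth keeping even in a toy version: without it, a model that keeps requesting tools would loop forever.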

The tools available to agents are what make them genuinely powerful. Claude Code can read and write files, execute shell commands, and run your tests. OpenAI’s Operator can navigate websites, click buttons, and fill forms. Research tools such as Perplexity’s agentic features can open ten browser tabs, extract data from each, and synthesize a report. These are not simulated actions. They are real operations happening in real systems.

That last sentence is also the source of the biggest risks. A generative model that hallucinates a fact causes a human to read a wrong sentence. An agentic model that makes a confident wrong decision might delete a folder, submit a bad form, or spend API credits on something useless. The stakes scale with the autonomy. This is not a reason to avoid agents — it is a reason to use them with appropriate oversight, especially for high-impact tasks.

Agentic AI is not “smarter” generative AI — it is generative AI equipped with the ability to act. The same model that was safe to hand any prompt can now make real-world changes. Design your workflows with that in mind.

Side by Side: When to Use Which

This is not a small distinction. Choosing between generative and agentic approaches is not like choosing between a hammer and a nail gun, two tools that do roughly the same job. It is more like choosing between a consultant and a contractor. The consultant gives you a brilliant report. The contractor builds the thing. You probably need both, at different times.

Here is a direct comparison across the dimensions that matter most:

| Dimension | Generative AI | Agentic AI |
|---|---|---|
| Primary function | Creates content or provides information | Completes multi-step tasks autonomously |
| What you give it | A prompt or question | A goal or objective |
| Memory | Context window only; resets between sessions | Persistent state across steps and sessions |
| Tool use | None by default | Browsers, file systems, APIs, code execution |
| Autonomy level | Low: responds, waits | High: plans, acts, iterates |
| Error handling | You retry with a better prompt | Agent observes errors and self-corrects |
| Speed for simple tasks | Very fast | Slower (multi-step overhead) |
| Oversight needed | Minimal | Significant: real actions can have real consequences |
| Cost | Per-token, predictable | Variable; longer tasks run longer loops |
| Best for | Writing, summarizing, explaining, brainstorming | Research automation, code execution, workflow automation |

The practical rule of thumb: if the output of the task is a piece of content (text, image, plan, code snippet), generative AI is likely enough. If the task requires a series of actions in the real world to get done — clicking, executing, fetching, sending — you need an agentic approach.

None of this comes free. Agentic systems cost more to run, take longer to complete, and carry real risks if not supervised. For generating a first draft of a blog post, spinning up an agent is overkill. For crawling 50 competitor pricing pages, compiling a report, and uploading it to your shared drive — that’s exactly what agents are built for.
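A rough back-of-the-envelope sketch shows why agent runs cost more than the step count alone suggests. It assumes, as a simplification, that every step re-sends the full accumulated history to the model with no caching. The token counts in the example are made-up illustrative numbers, not measurements from any provider.

```python
def single_call_tokens(prompt_tokens: int, output_tokens: int) -> int:
    """Tokens processed by one plain generative request."""
    return prompt_tokens + output_tokens

def agent_run_tokens(prompt_tokens: int, output_tokens: int,
                     observation_tokens: int, steps: int) -> int:
    """Total tokens for an agent run, assuming each step re-sends the whole history."""
    total = 0
    context = prompt_tokens
    for _ in range(steps):
        total += context + output_tokens                # this step's input plus its output
        context += output_tokens + observation_tokens   # history grows for the next step
    return total

# Hypothetical numbers: 500-token goal, 200-token replies, 300-token tool results.
print(single_call_tokens(500, 200))         # 700
print(agent_run_tokens(500, 200, 300, 10))  # 29500
```

Under these assumptions a ten-step run processes roughly 42 times the tokens of a single call, not 10 times, because the context grows at every step.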

The Misconceptions That Keep Tripping People Up

Most of the confusion in this space comes from a handful of recurring misunderstandings. Worth naming them directly.

Misconception 1: “Agentic AI is just a better version of Generative AI”

Think about what this actually requires you to believe: that a tool capable of browsing the internet and executing code is simply “stronger” than a tool that writes text. That’s like saying a car is “a better bicycle.” Both solve a transportation problem, but they operate on entirely different principles with different risks and requirements. Agentic AI is built on top of generative models — it uses them as its reasoning engine — but the architecture, use cases, and risk profile are fundamentally different.

| Misconception | Wrong Approach | Right Approach |
|---|---|---|
| Agentic AI = smarter GenAI | Treat agents as ChatGPT with extra steps | Understand agents as goal-directed systems with real-world reach |
| Prompt hard enough to make GenAI agentic | Ask ChatGPT to “go research X and come back with results” | Use a tool with actual browser/web access for research tasks |
| Agents replace workers | Hand an agent full autonomy over high-stakes systems with no oversight | Use agents for repetitive, bounded tasks with human checkpoints |
| GenAI is becoming obsolete | Abandon prompt engineering in favor of agent pipelines for everything | Match the tool to the task; GenAI is still faster and cheaper for most creative work |
| Agents are always reliable | Trust an agent to complete a task and check results only at the end | Build in intermediate checkpoints, especially for tasks with irreversible actions |

Misconception 2: “You can prompt your way into agentic behavior”

This one is tempting. Sophisticated prompt engineering can get a generative model to simulate step-by-step reasoning — chain-of-thought prompting, self-reflection prompts, and structured output all push models further than naive single-shot prompts. But there is a hard ceiling. A generative model without tool access cannot actually browse a website. It can describe what it would find on that website, which is not the same thing, and it can hallucinate convincingly. Agentic behavior requires infrastructure, not just better prompts.

Misconception 3: “AI agents will replace human workers soon”

The current reality is more nuanced. AI agents in 2026 are genuinely useful for bounded, well-defined tasks with clear success criteria. They struggle significantly with tasks that require sustained judgment across ambiguous situations, deep domain expertise, or stakeholder relationships. The “replace” framing also misses the more immediate and practical dynamic: agents are amplifying individual workers dramatically, letting one person do the work that previously required a team — not by replacing the team, but by automating the most mechanical parts.

What Neither Can Do Well — Yet

Here is where the honest part comes in. Both paradigms carry real limitations in 2026, and any article that skips this section is not trying to help you — it’s trying to sell you something.

Generative AI still hallucinates with alarming frequency on specific factual claims, especially numbers, citations, dates, and proper nouns. Ask Claude or GPT-4o to cite five academic papers on a niche topic and there is a meaningful chance some of those papers simply do not exist, even though the citations look convincing. The model is not lying in any intentional sense. It is completing a pattern, and sometimes the most plausible completion of a pattern is wrong. For any task where factual accuracy matters, you need to verify outputs against authoritative sources. This has not meaningfully changed despite improvements in reasoning capabilities.

Agentic AI faces its own category of problems — and they are arguably more serious because the stakes are higher. The most common failure mode is what researchers sometimes call “planning drift”: an agent begins a multi-step task correctly, but as the steps accumulate, small errors compound and the agent drifts away from the original goal. By step eight of a twelve-step process, it might be solving a subtly different problem than the one you gave it. A concrete example: ask an agent to “clean up and reorganize the project codebase,” and without precise constraints, it may confidently delete files it deems redundant — files that were actually critical. The agent had no way to know the context you hadn’t stated. Humans fill in unstated context automatically; agents do not.
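One way to state that missing context explicitly is to put a guard between the agent and the file system. The sketch below is a hypothetical helper, not part of any agent framework: it never deletes anything itself, and only reports which of an agent's proposed deletions fall inside the project root and outside directories you have marked as protected. The paths in the example are invented.

```python
from pathlib import Path
from typing import Iterable, List

def plan_deletions(candidates: Iterable[str], root: str,
                   protected: Iterable[str]) -> List[str]:
    """Filter an agent's proposed deletions; returns the ones that may proceed."""
    root_path = Path(root).resolve()
    protected_paths = [(root_path / p).resolve() for p in protected]
    allowed = []
    for candidate in candidates:
        target = (root_path / candidate).resolve()
        # Reject anything that escapes the project root (e.g. via "..").
        if root_path not in target.parents:
            continue
        # Reject protected directories and everything beneath them.
        if any(target == p or p in target.parents for p in protected_paths):
            continue
        allowed.append(candidate)
    return allowed

proposed = ["build/tmp.o", "src/main.py", "../etc/passwd", "config/settings.yaml"]
print(plan_deletions(proposed, root="/project", protected=["src", "config"]))
```

A human can then review the short allowed list before anything is actually removed, which keeps the irreversible step under supervision.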

Explainability is a shared weakness. With generative AI, you rarely know why the model said what it said — which particular training examples, which associations, drove the output. With agentic AI, the reasoning is often more visible (you can see the tool calls), but the underlying model decisions within each step remain as opaque as ever. For regulated industries — finance, healthcare, legal — this opacity is a genuine barrier to adoption, not a theoretical concern.

The honest workaround for both: use them as powerful assistants within human-supervised workflows, not as autonomous decision-makers for high-stakes outcomes. That framing is less exciting than the headlines, but it is where most successful deployments actually live right now.

Prompting Each Type Effectively: 10 Practical Templates

Knowing the theory is one thing. Knowing how to actually talk to each type of system is where it pays off. Below are ten prompt structures — five for generative AI, five for agentic AI contexts — arranged from beginner to master level.

Prompt 1: Simple Explanation Request (Generative AI — Beginner)

When you need a generative model to explain something clearly, structure matters. The most common mistake is under-specifying the audience. This prompt fixes that by anchoring the explanation to a specific reader profile, which forces the model to calibrate complexity precisely.

Why It Works: Generative models are sensitive to audience specification. Telling the model the exact role of the reader — and explicitly banning undefined jargon — constrains the output toward genuinely useful clarity rather than technically correct but opaque prose.

How to Adapt It: Replace the role with “a 12-year-old” for maximum simplification, or “a senior engineer unfamiliar with this subfield” for technical depth without condescension.

Prompt 2: Structured Comparison (Generative AI — Beginner)

Generative models produce mediocre comparisons when left to free-form. The problem is that “compare X and Y” invites sprawling prose that buries the useful distinctions. This prompt forces structured output with clear decision criteria.

Why It Works: The instruction “Do not hedge excessively” is doing real work here. Models trained with RLHF tend toward diplomatic non-answers when asked to compare things. Explicitly calling this out results in more useful, opinionated output.

How to Adapt It: Add “include a counterargument to your recommendation” to get a balanced critique built into the response.

Prompt 3: First Draft Generator (Generative AI — Beginner)

The blank page is the hardest part of any writing task. Generative AI is genuinely excellent at solving it — but only if you give it enough context to produce something worth iterating on, rather than something generic you’ll discard.

Why It Works: The “Avoid” line is consistently underused by people who prompt for writing. Exclusions are often more powerful than inclusions — they eliminate the most common failure modes before they happen.

How to Adapt It: Add three bullet points of “source material” (facts, quotes, anecdotes you want included) to ensure the draft contains your specific knowledge rather than generic filler.

Prompt 4: Role-Based Analysis (Generative AI — Intermediate)

Role-prompting is one of the most effective intermediate techniques with generative models. Assigning a specific expert persona changes which part of the model’s training knowledge is activated most strongly, steering the output toward domain-appropriate reasoning rather than generic advice.

Why It Works: The “biggest risk they haven’t considered” instruction is where this prompt earns its keep. It forces the model to reason about blind spots rather than just validating the framing you started with — which is where expert advisors genuinely add value.

How to Adapt It: Change the expert role to match your domain. A “senior ER physician” gives you medical situation analysis with very different priors than a “healthcare startup founder.”

Prompt 5: Critique and Improve (Generative AI — Intermediate)

Most tutorials skip this part entirely: generative models are significantly better at improving existing work than creating from scratch. Handing the model something imperfect and asking for honest critique reliably produces more useful output than asking it to generate cold.

Why It Works: The three-step structure prevents the model from skipping straight to a rewrite without reasoning about what’s wrong first. The reasoning step makes the final output significantly better and, importantly, more learnable for you.

How to Adapt It: Replace the critique step with “identify exactly what a competitor would attack about this argument” to turn it into a steel-manning exercise for strategic documents.

Prompt 6: Agentic Research Brief (Agentic AI — Intermediate)

Agentic AI systems with web access are genuinely powerful for research tasks — but only if you frame the goal precisely. The biggest failure mode is giving an agent a vague research directive and getting back a hallucinated synthesis of things it couldn’t actually look up. This prompt structure forces the agent to work from real sources.

Why It Works: The instruction “Do not include information you cannot verify with a source” is non-obvious but critical. It creates a verification contract that pushes the agent to distinguish between what it browsed and what it inferred from training data.

How to Adapt It: Add “compare findings against [COMPETITOR NAME]’s public statements” to turn this into a competitive intelligence brief automatically.

Prompt 7: Agentic Code Debugging Loop (Agentic AI — Advanced)

This is where the architecture of agentic AI really earns its place. A generative model can suggest fixes to code you paste in. An agent with code execution access can actually run the failing code, read the error, try a fix, run it again, and tell you what worked — iterating to a solution rather than producing one suggestion and stopping.

Why It Works: The three-iteration limit is doing important safety work. Left unconstrained, agents can spiral into increasingly complex fixes that obscure the original problem. Hard stopping conditions make agents faster and more useful, not slower.

How to Adapt It: Change the three-iteration limit to “if you haven’t fixed it in two attempts, write a failing test case that isolates the bug” to get a test-first debugging approach.
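The same stopping condition can also be enforced in the code that drives the agent rather than only in the prompt. The following is a minimal sketch under assumptions: `run_tests` and `propose_fix` are hypothetical callables standing in for a real test runner and a real model call, and the demo versions below only check whether a snippet compiles.

```python
from typing import Callable, List, Optional, Tuple

def debug_with_limit(code: str,
                     run_tests: Callable[[str], Optional[str]],
                     propose_fix: Callable[[str, str], str],
                     max_fixes: int = 3) -> Tuple[bool, str, List[str]]:
    """Try at most max_fixes fixes; return (fixed, final_code, errors_seen)."""
    errors_seen: List[str] = []
    for attempt in range(max_fixes + 1):
        error = run_tests(code)            # None means the tests pass
        if error is None:
            return True, code, errors_seen
        errors_seen.append(error)
        if attempt < max_fixes:
            code = propose_fix(code, error)
    return False, code, errors_seen        # hard stop: hand back to the human

# Demo stand-ins (assumptions for illustration only).
def demo_run_tests(code: str) -> Optional[str]:
    try:
        compile(code, "<candidate>", "exec")
        return None
    except SyntaxError as exc:
        return f"SyntaxError: {exc.msg}"

def demo_propose_fix(code: str, error: str) -> str:
    return code + " 2"

fixed, final_code, errors = debug_with_limit("x = 1 +", demo_run_tests, demo_propose_fix)
print(fixed, repr(final_code), len(errors))
```

Returning the list of errors seen, even on failure, gives the human reviewer the trail of what was tried instead of just a final state.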

Prompt 8: Chained Workflow Automation (Agentic AI — Advanced)

In a chained workflow, the later steps are often doing something subtle, and they are often where agents drift. Chained workflows benefit from explicit handoff instructions that tell the agent how the output of each step should inform the next. This prompt structure builds those guardrails in.

Why It Works: The explicit step gating — “do not begin Step 2 until Step 1 is confirmed” — prevents the agent from parallelizing steps in ways that cause compounding errors. Sequential dependency is made explicit rather than assumed.

How to Adapt It: Add a “human checkpoint” instruction after Step 2 to pause for your review before the agent takes any irreversible actions in Step 3.
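Step gating can be sketched as a small driver that refuses to start a step until the previous step's output has been confirmed. This is an illustrative sketch, not a specific framework's API: the step functions and the `confirm` callback are hypothetical, and in practice `confirm` could be a human prompt or an automated check.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, Callable[[str], str]]  # (step name, function from input to output)

def run_gated(steps: List[Step], initial_input: str,
              confirm: Callable[[str, str], bool]) -> Tuple[List[str], str]:
    """Run steps in order; stop as soon as one step's output is not confirmed."""
    completed: List[str] = []
    current = initial_input
    for name, step in steps:
        output = step(current)
        if not confirm(name, output):
            return completed, current   # later steps never start
        completed.append(name)
        current = output                # explicit handoff to the next step
    return completed, current

# Demo with toy steps (assumptions for illustration only).
calls: List[str] = []

def collect(text: str) -> str:
    calls.append("collect")
    return text + " -> collected"

def summarize(text: str) -> str:
    calls.append("summarize")
    return text + " -> summarized"

def publish(text: str) -> str:
    calls.append("publish")
    return text + " -> published"

pipeline = [("collect", collect), ("summarize", summarize), ("publish", publish)]
done, last_good = run_gated(pipeline, "topic", lambda name, out: name != "summarize")
print(done, last_good, calls)
```

In the demo the summary is rejected, so the `publish` step is never called and the driver returns the last confirmed output.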

Prompt 9: Autonomous Research-to-Draft Pipeline (Agentic AI — Advanced)

Combining web research with writing output in a single agent task is one of the most practically valuable use cases available today. The failure mode — agents mixing real research with hallucinated filler — is solved by source-anchoring requirements built into the prompt itself.

Why It Works: The three-phase separation prevents the most common agentic research failure: starting to write before the research is actually solid. The self-review pass with [CHECK] markers creates an internal quality gate rather than relying solely on your post-hoc review.

How to Adapt It: In Phase 1, add “note any source that contradicts another and flag the disagreement in the final piece” to get built-in intellectual honesty.
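Once a draft comes back with [CHECK] markers, pulling out the flagged claims for manual verification is easy to automate. The sketch below assumes the marker appears inside the sentence it refers to and that sentences end with ordinary punctuation followed by whitespace. Both are assumptions about the draft format, not something the prompt guarantees.

```python
import re
from typing import List

def flagged_claims(draft: str, marker: str = "[CHECK]") -> List[str]:
    """Return the sentences in a draft that contain the verification marker."""
    # Naive sentence split: break after ., ! or ? when followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if marker in s]

draft = (
    "The market grew in 2025. Revenue rose 40% [CHECK]. "
    "The CEO resigned [CHECK]! Outlook is stable."
)
for claim in flagged_claims(draft):
    print(claim)
```

The naive splitter will mishandle abbreviations and decimals at sentence boundaries, so treat the output as a review checklist rather than an exact parse.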

Prompt 10: Master — Full AI Workflow Orchestration

This is the master prompt — the one that integrates role assignment, goal specification, constraints, multi-tool orchestration, self-review, and human checkpoints into a single powerful agentic instruction. Use this for your highest-value, most complex automated tasks. Build in oversight proportional to stakes.

Why It Works: The “generate a plan, then wait for confirmation” instruction before any action is taken is the single most important safety feature in agentic prompting. It surfaces misunderstandings before they become expensive mistakes — and ensures the agent’s understanding of your goal matches your actual intent.

How to Adapt It: For lower-stakes tasks, remove the confirmation checkpoint and trust the agent to run end-to-end. For higher-stakes tasks, add a checkpoint after every major step, not just one.
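The plan-then-confirm pattern can also be enforced outside the prompt, in the code that drives the agent. Here is a minimal sketch, assuming hypothetical `make_plan`, `approve`, and `execute_step` callables that stand in for a model call, a human review, and real tool execution respectively.

```python
from typing import Callable, Dict, List

def run_with_plan_approval(goal: str,
                           make_plan: Callable[[str], List[str]],
                           approve: Callable[[List[str]], bool],
                           execute_step: Callable[[str], str]) -> Dict[str, object]:
    """Generate a plan, wait for approval, and only then execute any step."""
    plan = make_plan(goal)
    if not approve(plan):
        # Nothing has been executed yet, so a rejected plan costs nothing to discard.
        return {"status": "rejected", "plan": plan, "results": []}
    results = [execute_step(step) for step in plan]
    return {"status": "done", "plan": plan, "results": results}

# Demo stand-ins (assumptions for illustration only).
def demo_plan(goal: str) -> List[str]:
    return [f"research: {goal}", f"draft: {goal}", f"review: {goal}"]

executed: List[str] = []

def demo_execute(step: str) -> str:
    executed.append(step)
    return step.upper()

outcome = run_with_plan_approval("pricing report", demo_plan, lambda plan: False, demo_execute)
print(outcome["status"], executed)
```

For higher-stakes work, the `approve` callback is where a real human review would plug in. For lower-stakes work it could simply return `True`.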

What You’ve Actually Learned Here

The core skill this piece aimed to build is discrimination — the ability to look at a task and know immediately whether it calls for a generative or agentic approach. Not because one is better, but because they serve genuinely different purposes. A hammer is not a better screwdriver. Generative AI is not a weaker version of agentic AI. They are different tools with different strengths, costs, and risks — and matching the tool to the task is where real productivity gains come from.

There is a deeper principle here about working with AI generally. The people getting the most value from these systems in 2026 are not the ones using the most advanced tools. They are the ones who have taken the time to understand what each tool actually does — its real capabilities and real limits — and who design workflows accordingly. That requires thinking, which is not something AI can fully replace. What it can do is amplify the quality of the workflows you design.

Human judgment remains essential — and not only for the obvious high-stakes decisions. It is needed for knowing when to stop trusting an agent’s output and verify it yourself. For knowing when a generative model’s confident answer is actually a hallucination. For catching planning drift before a ten-step agentic task drifts off-course. The tools are powerful. The judgment about when and how to use them is still yours.

Looking ahead, the line between generative and agentic AI is blurring at the edges. Models are gaining persistent memory. Agentic scaffolding is becoming more reliable. The cost and latency of multi-step agent runs are falling fast. By late 2027, many applications that currently require careful prompt engineering or custom agent pipelines will likely be accessible through simple natural language requests to systems that figure out the appropriate execution strategy on their own. The principles in this article (specify goals clearly, build in verification, define success criteria, maintain appropriate oversight) will remain relevant regardless of how the underlying infrastructure evolves. The paradigm shifts. The fundamentals do not.

You now know more than most people about how to think about this. Use it well.

Disclaimer: aitrendblend.com is independent editorial content. We are not affiliated with Anthropic, OpenAI, Google, or any AI company mentioned in this article. No sponsored content. No affiliate links.